11.3: Top-down Approaches

The approaches we've examined to this point start with the dyad, and see if this kind of tight structure can be extended outward. Overall structure of the network is seen as "emerging" from overlaps and couplings of smaller components. Certainly, this is a valid way of thinking about large structures and their component parts. The bottom-up approach may focus our attention on the underlying dynamic processes by which actors build networks.

Some might prefer, however, to start with the entire network as their frame of reference, rather than the dyad. Approaches of this type tend to look at the "whole" structure, and identify "sub-structures" as parts that are locally denser than the field as a whole. In a sense, this more macro lens is looking for "holes" or "vulnerabilities" or "weak spots" in the overall structure or solidarity of the network. These holes or weak spots define lines of division or cleavage in the larger group, and point to how it might be decomposed into smaller units. This top-down perspective leads us to think of dynamics that operate at the level of group-selection, and to focus on the constraints under which actors construct networks.

There are numerous ways that one might define the divisions and "weak spots" in a network. Below are some of the most common approaches.

Components

Components of a graph are sub-graphs that are connected within, but disconnected between sub-groups. If a graph contains one or more "isolates", these actors are components. More interesting components are those which divide the network into separate parts, and where each part has several actors who are connected to one another (we pay no attention to how closely connected).

For directed graphs (in contrast to simple graphs), we can define two different kinds of components. A weak component is a set of nodes that are connected, regardless of the direction of ties. A strong component requires that there be a directed path from A to B, and also a directed path from B back to A, in order for the two to be in the same component.
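The difference between weak and strong components can be sketched in a few lines of Python. This is only an illustration on a small made-up directed graph (the node labels, the arcs, and the function names `reachable` and `components` are assumptions, not UCINET output). It treats two nodes as strongly connected when each can reach the other along directed paths.

```python
from collections import defaultdict

def reachable(adj, start):
    """Return the set of nodes reachable from start by following adj."""
    seen = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def components(nodes, arcs, strong=False):
    """List the weak (default) or strong components of a directed graph."""
    out_adj = defaultdict(set)   # follows ties in their stated direction
    in_adj = defaultdict(set)    # follows ties backwards
    undirected = defaultdict(set)  # ignores direction entirely
    for a, b in arcs:
        out_adj[a].add(b)
        in_adj[b].add(a)
        undirected[a].add(b)
        undirected[b].add(a)
    found, assigned = [], set()
    for n in nodes:
        if n in assigned:
            continue
        if strong:
            # Nodes that n can reach AND that can reach n.
            comp = reachable(out_adj, n) & reachable(in_adj, n)
        else:
            comp = reachable(undirected, n)
        found.append(comp)
        assigned |= comp
    return found

# Hypothetical data: a directed cycle A->B->C->A, a one-way tie C->D, isolate E.
nodes = ["A", "B", "C", "D", "E"]
arcs = [("A", "B"), ("B", "C"), ("C", "A"), ("C", "D")]

weak = components(nodes, arcs)
strong = components(nodes, arcs, strong=True)
print(weak)    # D joins A, B, C when direction is ignored
print(strong)  # D stands alone because nothing leads from D back to C
```

In this toy graph the isolate E is a component by itself under both definitions, while D belongs to the large weak component but forms its own strong component.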

Since the Knoke information network has a single component, it isn't very interesting as an example. Let's look instead at the network of large donors to California political campaigns, where the strength of the relation between two actors is defined by the number of times that they contributed on the same side of an issue.

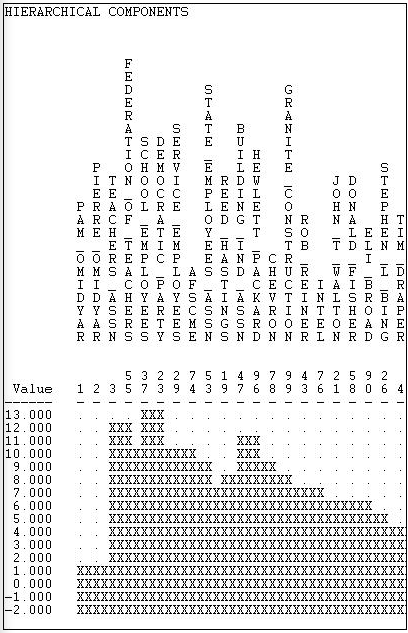

UCINET provides two algorithms for doing a census of components. Network>Regions>Components>Simple graphs is used for binary data. In addition to identifying the members of the components, it calculates a number of statistical measures of graph fragmentation. Network>Regions>Components>Valued Graphs can be used to examine the hierarchy of components as the cut-off value of tie strength is increasingly relaxed. Figure 11.10 shows partial results for the California political donations data.

Figure 11.10: Weak component hierarchy for California political donors (truncated)

If we set a very high cut-off value of 13 issues in common, then our graph has only one non-isolate component (made up of the Democratic Party and the School Employees Union). Progressively lower cut-offs produce multiple, separate components until we reach a value of 7 issues in common. At this point, the non-isolated nodes all become connected into a single component.
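The logic of relaxing the cut-off can be sketched as follows. The tie strengths below are invented for illustration and are not the California donor data; the function name `components_at_cutoff` is likewise an assumption. At each cut-off, only ties at least that strong are kept, and the non-isolate components are listed.

```python
def components_at_cutoff(nodes, valued_ties, cutoff):
    """Return non-isolate components using only ties with strength >= cutoff."""
    adj = {n: set() for n in nodes}
    for (a, b), strength in valued_ties.items():
        if strength >= cutoff:
            adj[a].add(b)
            adj[b].add(a)
    seen, comps = set(), []
    for n in nodes:
        if n in seen:
            continue
        comp, stack = {n}, [n]
        while stack:
            node = stack.pop()
            for nxt in adj[node]:
                if nxt not in comp:
                    comp.add(nxt)
                    stack.append(nxt)
        seen |= comp
        if len(comp) > 1:      # drop isolates from the report
            comps.append(comp)
    return comps

# Hypothetical valued, undirected ties (number of issues in common).
nodes = ["P", "Q", "R", "S", "T"]
valued_ties = {("P", "Q"): 13, ("R", "S"): 10, ("Q", "R"): 7, ("S", "T"): 3}

hierarchy = {c: components_at_cutoff(nodes, valued_ties, c) for c in (13, 10, 7, 3)}
for cutoff, comps in hierarchy.items():
    print(cutoff, comps)
```

With these made-up values, a single dyad appears at the strictest cut-off, a second separate component appears at 10, the two merge at 7, and the last actor joins at 3.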

Just as the strict definition of a "clique" may be too strong to capture the meaning of the concept of a maximal group, the notion of a component may be too strong to find all the meaningful weak-points, holes, and locally dense sub-parts of a larger graph. So, we will examine some more flexible approaches.

Blocks and Cutpoints (Bi-components)

An alternative approach to finding the key "weak" spots in a graph is to ask: if a node were removed, would the structure become divided into un-connected parts? If there are such nodes, they are called "cutpoints". And, one can imagine that such cutpoints may be particularly important actors - who may act as brokers among otherwise disconnected groups. The divisions into which cutpoints divide a graph are called blocks. We can find the maximal non-separable sub-graphs (blocks) of a graph by locating the cutpoints. That is, we try to find the nodes that connect the graph (if there are any). Another name for a block is a "bi-component".
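The question "would removing this node disconnect the graph?" can be checked directly by brute force. The sketch below is not the algorithm UCINET uses; it simply removes each node in turn from a small hypothetical graph and counts components. The function names and the edge list are assumptions made for illustration.

```python
def count_components(adj, removed=None):
    """Count connected components, optionally pretending one node is removed."""
    seen = {removed} if removed is not None else set()
    count = 0
    for start in adj:
        if start in seen:
            continue
        count += 1
        seen.add(start)
        stack = [start]
        while stack:
            node = stack.pop()
            for nxt in adj[node]:
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
    return count

def cutpoints(edges):
    """Return nodes whose removal increases the number of components."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    base = count_components(adj)
    return sorted(n for n in adj if count_components(adj, removed=n) > base)

# Hypothetical graph: a triangle A-B-C with a pendant actor D attached to C.
edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "D")]
found = cutpoints(edges)
print(found)
```

Here C is the only cutpoint: removing it leaves D cut off from A and B. The two blocks are the triangle {A, B, C} and the dyad {C, D}, with the cutpoint belonging to both.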

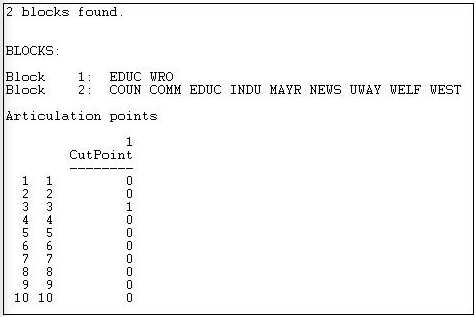

The UCINET algorithm Network>Regions>Bi-Component locates and identifies blocks and cutpoints. In Figure 11.11, we've applied it to the original Knoke symmetrized reciprocity data.

Figure 11.11: Cutpoints and blocks in the Knoke information network

Two blocks are identified, with EDUC a member of both. This means that if EDUC (node 3) were removed, WRO would become isolated. Node 3, then, is a cutpoint. You might want to verify this by eye, by glancing back at the graph of this network.

Components analysis locates parts of the graph that are disconnected; bi-components analysis locates parts that are vulnerable. Both approaches focus attention on key actors.

Lambda Sets and Bridges

An alternative approach is to ask if there are certain connections in the graph which, if removed, would result in a disconnected structure. In our example, the only relationship that qualifies is that between EDUC and WRO. But, since this would only lop off one actor, rather than really altering the network, it is not very interesting. However, it is possible to approach the question in a more sophisticated way. The Lambda set approach ranks each of the relationships in the network in terms of importance by evaluating how much of the flow among actors in the net goes through each link. It then identifies sets of relationships which, if disconnected, would most greatly disrupt the flow among all of the actors. The math and computation are rather extreme, though the idea is fairly simple.
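The simplest version of this question (is there a single tie whose removal disconnects the graph?) can be sketched by brute force. This is only the "bridge" check, not the full lambda set computation, and it assumes a connected, undirected graph with no duplicate ties. The graph and function names are hypothetical.

```python
def is_connected(nodes, edges):
    """True if every node can be reached from the first node."""
    adj = {n: set() for n in nodes}
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    seen, stack = {nodes[0]}, [nodes[0]]
    while stack:
        node = stack.pop()
        for nxt in adj[node]:
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return len(seen) == len(nodes)

def bridges(nodes, edges):
    """Return ties whose removal disconnects an otherwise connected graph."""
    return [e for e in edges
            if not is_connected(nodes, [f for f in edges if f != e])]

# Hypothetical graph: a triangle A-B-C with a pendant actor D attached to C.
nodes = ["A", "B", "C", "D"]
edges = [("A", "B"), ("B", "C"), ("A", "C"), ("C", "D")]
found = bridges(nodes, edges)
print(found)
```

In this toy graph only the C-D tie is a bridge, and cutting it lops off a single actor, which parallels the EDUC-WRO case described above.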

Network>Subgroups>Lambda Set locates the vulnerable "bridges" between pairs of actors. Figure 11.12 shows the results for the Knoke (reciprocity-symmetrized) information network.

Figure 11.12: Lambda sets in the Knoke information network

This approach identifies the #2 to #5 (MAYR to COMM) linkage as the most important one in the graph - in the sense that it carries a great deal of traffic, and the graph would be most disrupted if it were removed. This result can be confirmed by looking at the graph, where we see that most actors are connected to most other actors by way of the linkage between #2 and #5. Considerably less critical are the linkages that join actors 2 and 5 to actors 1, 3, 4, 7, and 10. Again, a glance at the figure shows these organizations to be a sort of "outer circle" around the core.

The lambda set idea has moved us quite far away from the strict components idea. Rather than emphasizing the "decomposition" or separation of the structure into unconnected components, the lambda set idea is a more "continuous" one. It highlights points at which the fabric of connection is most vulnerable to disruption.

Factions

Imagine a society in which each person was closely tied to all others in their own sub-population (that is, all sub-populations are cliques), and there are no connections at all among sub-populations (that is, each sub-population is a component). Most real populations do not look like this, but the "ideal type" of complete connection within and complete disconnection between sub-groups is a useful reference point for assessing the degree of "factionalization" in a population.

If we took all the members of each "faction" in this ideal-typical society, and put their rows and columns together in an adjacency matrix (i.e. permuted the matrix), we would see a distinctive pattern of "1-blocks" and "0-blocks". All connections among actors within a faction would be present, all connections between actors in different factions would be absent.
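The effect of permuting the matrix can be seen in a small sketch. The five actors and their faction labels below are invented, and `permute` is a hypothetical helper; the code builds the ideal-typical adjacency matrix (ties only within factions) and then reorders rows and columns so that faction members sit together.

```python
def permute(matrix, order):
    """Reorder rows and columns of a square matrix by the same ordering."""
    return [[matrix[i][j] for j in order] for i in order]

# Hypothetical faction labels for actors 0..4, interleaved on purpose.
faction = ["X", "Y", "X", "Y", "X"]
n = len(faction)

# Ideal type: a tie exists exactly when two distinct actors share a faction.
ideal = [[1 if i != j and faction[i] == faction[j] else 0 for j in range(n)]
         for i in range(n)]

# Group actors by faction (sorted is stable, so original order is kept within each).
order = sorted(range(n), key=lambda i: faction[i])
blocked = permute(ideal, order)

for row in blocked:
    print(row)
```

After permutation the upper-left 3x3 block and the lower-right 2x2 block are filled with ones (apart from the diagonal), and the off-diagonal blocks are all zeros.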

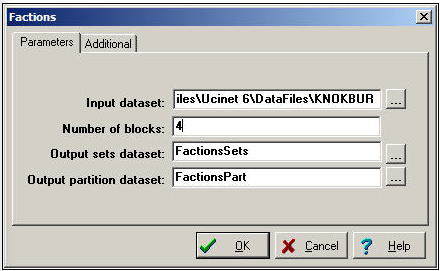

Network>Subgroups>Factions is an algorithm that finds the optimal arrangement of actors into factions to maximize similarity to the ideal type, and measures how well the data actually fit the ideal type. Figure 11.13 shows the dialog for using this tool.

Figure 11.13: Dialog for Network>Subgroups>Factions

Notice that you must specify how many factions (blocks) you would like the algorithm to find. If you have a prior hypothesis that a given population was divided into two factions, you could "test" this hypothesis by seeing how much error remained after defining two optimal factions. More commonly, we might use this tool in an exploratory way, examining the results from several runs with differing numbers of factions. As with any exploratory technique, it is a matter of judgment which solution is most helpful. After running several alternative numbers of blocks, we settled on four as meaningful for our purposes. This result is shown in Figure 11.14.

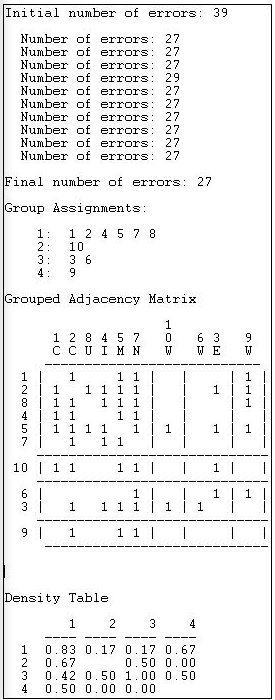

Figure 11.14: Four-faction solution for the directed Knoke information network

The "Final number of errors" can be used as a measure of the "goodness of fit" of the "blocking" of the matrix. This count (27 in this case) is the sum of the number of zeros within factions (where all the ties are supposed to be present in the ideal type) plus the number of ones in the non-diagonal blocks (ties between members of different factions, which are supposed to be absent in the ideal type). Since there are 49 total ties in our data, being wrong on the locations of 27 is not a terribly good fit. It is, however, the best we can do with four "factions".
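The error count described here can be reproduced by hand or with a short sketch. The 4-actor directed matrix and the two-faction assignment below are made up for illustration, and `faction_errors` is a hypothetical function that follows the verbal definition above rather than UCINET's internal code.

```python
def faction_errors(matrix, membership):
    """Count departures from the ideal type for a given faction assignment.

    Returns (missing_within, extra_between): zeros inside factions
    (ignoring the diagonal) and ones between different factions.
    """
    missing_within = 0
    extra_between = 0
    n = len(matrix)
    for i in range(n):
        for j in range(n):
            if i == j:
                continue  # self-ties are not counted
            same = membership[i] == membership[j]
            if same and matrix[i][j] == 0:
                missing_within += 1
            elif not same and matrix[i][j] == 1:
                extra_between += 1
    return missing_within, extra_between

# Hypothetical directed adjacency matrix and a two-faction assignment.
matrix = [
    [0, 1, 0, 1],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
membership = [0, 0, 1, 1]

missing, extra = faction_errors(matrix, membership)
total_errors = missing + extra
print(missing, extra, total_errors)
```

In this toy case one within-faction tie is absent (from actor 2 to actor 3) and two between-faction ties are present, for three errors in total.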

The four factions are identified, and we note that two of them are individuals (10 and 9) and one is a dyad (3 and 6).

The "blocked" or "grouped" adjacency matrix shows a picture of the solution. We can see that there is quite a lot of density "off the main diagonal" where there shouldn't be any. The final panel of the results reports the "block densities" as the number of ties that are present in blocks as proportions of all possible ties.
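Block densities are simple proportions, as the following sketch shows. The matrix and faction assignment are hypothetical, and `block_densities` is an illustrative helper, not a UCINET routine. Self-ties are excluded, so a faction with a single member has no possible within-block ties and its density is reported as None.

```python
def block_densities(matrix, membership):
    """Return {(row_faction, col_faction): density} for a blocked matrix."""
    groups = sorted(set(membership))
    densities = {}
    for g in groups:
        rows = [i for i, m in enumerate(membership) if m == g]
        for h in groups:
            cols = [j for j, m in enumerate(membership) if m == h]
            # All possible ties in this block, skipping the diagonal.
            cells = [(i, j) for i in rows for j in cols if i != j]
            ties = sum(matrix[i][j] for i, j in cells)
            densities[(g, h)] = ties / len(cells) if cells else None
    return densities

# Hypothetical directed adjacency matrix and a two-faction assignment.
matrix = [
    [0, 1, 0, 1],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 1, 1, 0],
]
membership = [0, 0, 1, 1]

densities = block_densities(matrix, membership)
for block, value in densities.items():
    print(block, value)
```

In the ideal type the diagonal blocks would have density 1 and the off-diagonal blocks density 0; here the second faction falls short within (0.5) and both off-diagonal blocks show some density (0.25).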

This approach corresponds nicely to the intuitive notion that the groups of a graph can be defined by a combination of local high density and the presence of "structural holes" between some sets of actors and others. The picture then not only identifies actual or potential factions, but also tells us about the relations among the factions - potential allies and enemies, in some cases.