9.2: Building Subspaces

Consider the set

\[U= \left\{ \begin{pmatrix}1\\0\\0\end{pmatrix}, \begin{pmatrix}0\\1\\0\end{pmatrix} \right\} \subset \Re^{3}.\]

Because \(U\) consists of only two vectors, it is clear that \(U\) is \(\textit{not}\) a vector space: any constant multiple of these vectors would also have to be in \(U\). For example, the \(0\)-vector is not in \(U\), nor is \(U\) closed under vector addition.

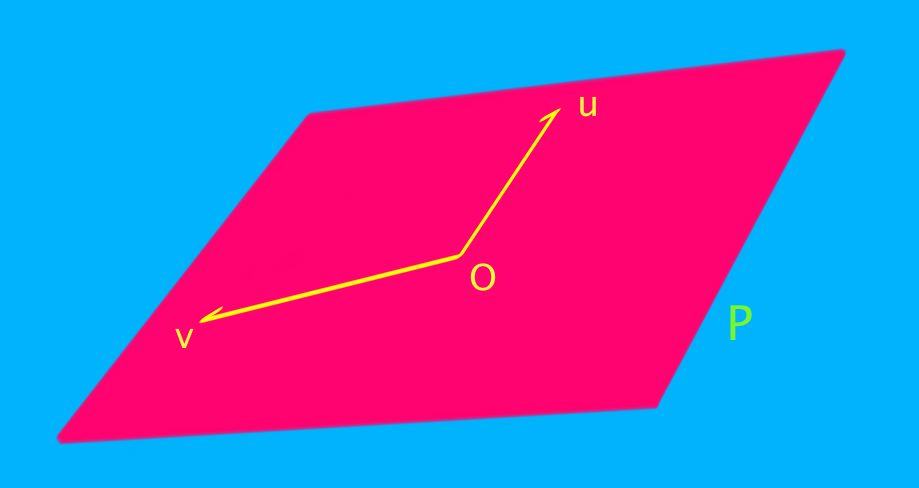

But we know that any two vectors define a plane:

In this case, the vectors in \(U\) define the \(xy\)-plane in \(\Re^{3}\). We can view the \(xy\)-plane as the set of all vectors that arise as a linear combination of the two vectors in \(U\). We call this set of all linear combinations the \(\textit{span}\) of \(U\):

\[span(U)=\left\{ x \begin{pmatrix}1\\0\\0\end{pmatrix}+y \begin{pmatrix}0\\1\\0\end{pmatrix} \middle| x,y\in \Re \right\}.\]

Notice that any vector in the \(xy\)-plane is of the form

\[\begin{pmatrix}x\\y\\0\end{pmatrix} = x \begin{pmatrix}1\\0\\0\end{pmatrix}+y \begin{pmatrix}0\\1\\0\end{pmatrix} \in span(U).\]

Definition: Span

Let \(V\) be a vector space and \(S=\{ s_{1}, s_{2}, \ldots \} \subset V\) a subset of \(V\). Then the \(\textit{span of S}\) is the set:

\[span(S):=\{ r^{1}s_{1}+r^{2}s_{2}+\cdots + r^{N}s_{N} | r^{i}\in \Re, N\in \mathbb{N} \}. \label{span}\]

That is, the span of \(S\) is the set of all finite linear combinations of elements of \(S\). (Usually our vector spaces are defined over \(\mathbb{R}\), but in general we can have vector spaces defined over different base fields such as \(\mathbb{C}\) or \(\mathbb{Z}_{2}\). The coefficients \(r^{i}\) should come from whatever our base field is, usually \(\mathbb{R}\).) Any \(\textit{finite}\) sum of the form "a constant times \(s_{1}\) plus a constant times \(s_{2}\) plus a constant times \(s_{3}\) and so on" is in the span of \(S\).

It is important that we only allow finite linear combinations. In Equation \ref{span}, \(N\) must be a finite number. It can be any finite number, but it must be finite.
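When \(S\) is a finite set of vectors in \(\Re^{n}\), membership in \(span(S)\) can be tested mechanically: a vector lies in the span exactly when appending it to \(S\) does not increase the rank. The following is a minimal sketch under that finiteness assumption; the helper names `rank` and `in_span` are illustrative and do not come from the text.

```python
from fractions import Fraction


def rank(rows):
    """Rank of a list of row vectors, by Gaussian elimination over the rationals."""
    m = [[Fraction(x) for x in row] for row in rows]
    ncols = len(m[0]) if m else 0
    r = 0  # number of pivots found so far
    for col in range(ncols):
        # Look for a row at or below position r with a nonzero entry in this column.
        pivot = next((i for i in range(r, len(m)) if m[i][col] != 0), None)
        if pivot is None:
            continue
        m[r], m[pivot] = m[pivot], m[r]
        # Clear the entries below the pivot.
        for i in range(r + 1, len(m)):
            factor = m[i][col] / m[r][col]
            m[i] = [a - factor * b for a, b in zip(m[i], m[r])]
        r += 1
    return r


def in_span(vectors, target):
    """True if target is a linear combination of the given (finitely many) vectors."""
    return rank(vectors + [target]) == rank(vectors)


# The two vectors of U from the start of the section.
U = [[1, 0, 0], [0, 1, 0]]
print(in_span(U, [2, 3, 0]))  # True: the vector lies in the xy-plane
print(in_span(U, [0, 0, 1]))  # False: nonzero z-coordinate
```

Exact rational arithmetic is used here so that the rank comparison is not affected by floating-point rounding.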

Example \(\PageIndex{1}\):

Let \(V=\Re^{3}\) and \(X\subset V\) be the \(x\)-axis. Let \(P=\begin{pmatrix}0\\1\\0\end{pmatrix}\), and set

\[S=X \cup \{P\}\, .\]

The vector \(\begin{pmatrix}2 \\ 3 \\ 0\end{pmatrix}\) is in \(span(S),\) because \(\begin{pmatrix}2\\3\\0\end{pmatrix}=\begin{pmatrix}2\\0\\0\end{pmatrix}+3\begin{pmatrix}0\\1\\0\end{pmatrix}.\) Similarly, the vector \(\begin{pmatrix}-12 \\ 17.5 \\ 0\end{pmatrix}\) is in \(span(S),\) because \(\begin{pmatrix}-12\\17.5\\0\end{pmatrix}=\begin{pmatrix}-12\\0\\0\end{pmatrix}+17.5\begin{pmatrix}0\\1\\0\end{pmatrix}.\)

More generally, any vector of the form

\[\begin{pmatrix}x\\0\\0\end{pmatrix}+y \begin{pmatrix}0\\1\\0\end{pmatrix} = \begin{pmatrix}x\\y\\0\end{pmatrix}\]

is in \(span(S)\). On the other hand, any vector in \(span(S)\) must have a zero in the \(z\)-coordinate. (Why?)

So \(span(S)\) is the \(xy\)-plane, which is a vector space. (Try drawing a picture to verify this!)

Lemma

For any subset \(S\subset V\), \(span(S)\) is a subspace of \(V\).

Proof

We need to show that \(span(S)\) is a vector space.

It suffices to show that \(span(S)\) is closed under linear combinations. Let \(u,v\in span(S)\) and \(\lambda, \mu\) be constants. By the definition of \(span(S)\), there are constants \(c^{i}\) and \(d^{i}\) (some of which could be zero) such that:

\begin{eqnarray*}
u & = & c^{1}s_{1}+c^{2}s_{2}+\cdots \\
v & = & d^{1}s_{1}+d^{2}s_{2}+\cdots \\
\Rightarrow \lambda u + \mu v & = & \lambda (c^{1}s_{1}+c^{2}s_{2}+\cdots ) + \mu (d^{1}s_{1}+d^{2}s_{2}+\cdots ) \\
& = & (\lambda c^{1}+\mu d^{1})s_{1} + (\lambda c^{2}+\mu d^{2})s_{2} + \cdots
\end{eqnarray*}

This last sum is a linear combination of elements of \(S\), and is thus in \(span(S)\). Then \(span(S)\) is closed under linear combinations, and is thus a subspace of \(V\).

Note that this proof, like many proofs, consisted of little more than just writing out the definitions.
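To see the bookkeeping of the proof in a concrete case (the numbers here are chosen only for illustration), take \(s_{1}=\begin{pmatrix}1\\0\\0\end{pmatrix}\), \(s_{2}=\begin{pmatrix}0\\1\\0\end{pmatrix}\), \(u=2s_{1}+3s_{2}\), \(v=-s_{1}+4s_{2}\), \(\lambda=2\) and \(\mu=-1\). Then

\[\lambda u+\mu v = \big(2\cdot 2+(-1)(-1)\big)s_{1}+\big(2\cdot 3+(-1)\cdot 4\big)s_{2} = 5s_{1}+2s_{2} = \begin{pmatrix}5\\2\\0\end{pmatrix},\]

which is again a linear combination of \(s_{1}\) and \(s_{2}\).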

Example \(\PageIndex{2}\):

For which values of \(a\) does

\[span \left\{ \begin{pmatrix}1\\0\\a\end{pmatrix} , \begin{pmatrix}1\\2\\-3\end{pmatrix} , \begin{pmatrix}a\\1\\0\end{pmatrix} \right\} = \Re^{3}?\]

Given an arbitrary vector \(\begin{pmatrix}x\\y\\z\end{pmatrix}\) in \(\Re^{3}\), we need to find constants \(r^{1}, r^{2}, r^{3}\) such that

\[r^{1} \begin{pmatrix}1\\0\\a\end{pmatrix} + r^{2}\begin{pmatrix}1\\2\\-3\end{pmatrix} +r^{3} \begin{pmatrix}a\\1\\0\end{pmatrix} = \begin{pmatrix}x\\y\\z\end{pmatrix}.\]

We can write this as a linear system in the unknowns \(r^{1}, r^{2}, r^{3}\) as follows:

\[
\begin{pmatrix}
1 & 1 & a \\
0 & 2 & 1 \\
a & -3 & 0
\end{pmatrix}
\begin{pmatrix}r^{1}\\r^{2}\\r^{3}\end{pmatrix}
= \begin{pmatrix}x\\y\\z\end{pmatrix}.
\]

If the matrix \(M=\begin{pmatrix} 1 & 1 & a \\ 0 & 2 & 1 \\ a & -3 & 0 \end{pmatrix}\) is invertible, then we can find a solution

\[M^{-1}\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}r^{1}\\r^{2}\\r^{3}\end{pmatrix}\] for \(\textit{any}\) vector \(\begin{pmatrix}x\\y\\z\end{pmatrix} \in \Re^{3}\).

Therefore we should choose \(a\) so that \(M\) is invertible, i.e., so that

\[0 \neq \det M = -2a^{2} + 3 + a = -(2a-3)(a+1). \]

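Conversely, if \(\det M = 0\), then \(M\) is not invertible and the system has no solution for some vectors \(\begin{pmatrix}x\\y\\z\end{pmatrix}\). Hence the span is \(\Re^{3}\) if and only if \(a \neq -1\) and \(a \neq \frac{3}{2}\).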
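The determinant in this example is easy to check numerically. The sketch below evaluates \(\det M\) for a few values of \(a\) by cofactor expansion and compares it with the factored form \(-(2a-3)(a+1)\); the function names `det3` and `M` are illustrative choices, not notation from the text.

```python
from fractions import Fraction


def det3(m):
    """Determinant of a 3x3 matrix by cofactor expansion along the first row."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def M(a):
    """The matrix from the example; its columns are the three spanning vectors."""
    return [[1, 1, a],
            [0, 2, 1],
            [a, -3, 0]]


for a in [Fraction(-1), Fraction(3, 2), Fraction(0), Fraction(2)]:
    d = det3(M(a))
    # Print a, det M, and whether det M agrees with the factored form.
    print(a, d, d == -(2 * a - 3) * (a + 1))
```

The determinant vanishes at \(a=-1\) and \(a=\frac{3}{2}\) and is nonzero at the other two sample values, in agreement with the factorization.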

Some other very important ways of building subspaces are given in the following examples.

Example \(\PageIndex{3}\): The kernel of a linear map

Suppose \(L:U\to V\) is a linear map between vector spaces. Then if

\[L(u)=0=L(u')\, ,\]

linearity tells us that

\[L(\alpha u + \beta u') = \alpha L(u) + \beta L(u') =\alpha 0 + \beta 0 = 0\, .\]

Hence, thanks to the subspace theorem, the set of all vectors in \(U\) that are mapped to the zero vector is a subspace of \(U\).

It is called the kernel of \(L\):

\[{\rm ker} L:=\{u\in U| L(u) = 0\}\subset U.\]

Note that finding kernels is a homogeneous linear systems problem.
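As a small illustration (the map below is a hypothetical example, not one from the text), take \(L:\Re^{3}\to\Re^{2}\) given by

\[L\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}x+y\\y+z\end{pmatrix}.\]

Setting \(L(u)=0\) gives the homogeneous system \(x+y=0\), \(y+z=0\), so \(x=-y\) and \(z=-y\). Writing \(y=-t\), the kernel is a line through the origin:

\[{\rm ker} L=\left\{ t\begin{pmatrix}1\\-1\\1\end{pmatrix} \middle| t\in \Re \right\}=span\left\{\begin{pmatrix}1\\-1\\1\end{pmatrix}\right\}.\]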

Example \(\PageIndex{4}\): The image of a linear map

Suppose \(L:U\to V\) is a linear map between vector spaces. Then if

\[v=L(u) \mbox{ and } v'=L(u')\, ,\]

linearity tells us that

\[\alpha v + \beta v' = \alpha L(u) + \beta L(u') =L(\alpha u +\beta u')\, .\]

Hence, calling once again on the subspace theorem, the set of all vectors in \(V\) that are obtained as outputs of the map \(L\) is a subspace.

It is called the image of \(L\):

\[{\rm im} L:=\{L(u)\,|\, u\in U \}\subset V.\]
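To connect the image with spans, here is a sketch using a hypothetical map chosen for illustration (it does not come from the text). Let \(L:\Re^{3}\to\Re^{2}\) be given by \(L\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}x+y\\y+z\end{pmatrix}\). Every output can be written as

\[L\begin{pmatrix}x\\y\\z\end{pmatrix} = x\begin{pmatrix}1\\0\end{pmatrix}+y\begin{pmatrix}1\\1\end{pmatrix}+z\begin{pmatrix}0\\1\end{pmatrix},\]

so \({\rm im} L\) is the span of these three vectors. Taking \(y=0\) already produces every vector \(\begin{pmatrix}x\\z\end{pmatrix}\), so in this case \({\rm im} L=\Re^{2}\).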

Example \(\PageIndex{5}\): An eigenspace of a linear map

Suppose \(L:V\to V\) is a linear map and \(V\) is a vector space. Then if

\[L(u)=\lambda u \mbox{ and } L(v)=\lambda v\, ,\]

linearity tells us that

\[L(\alpha u + \beta v) = \alpha L(u) + \beta L(v) =\alpha \lambda u + \beta \lambda v = \lambda (\alpha u + \beta v)\, .\]

Hence, again by the subspace theorem, the set of all vectors in \(V\) that obey the \(\textit{eigenvector equation}\) \(L(v)=\lambda v\) is a subspace of \(V\). It is called an eigenspace:

\[V_{\lambda}:=\{v\in V| L(v) = \lambda v\}.\]

For most scalars \(\lambda\), the only solution to \(L(v) = \lambda v\) will be \(v=0\), which yields the trivial subspace \(\{0\}\).

When there are nontrivial solutions to \(L(v)=\lambda v\), the number \(\lambda\) is called an eigenvalue, and carries essential information about the map \(L\).
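The closure computation above can be checked on a small case. The sketch below uses a hypothetical \(2\times 2\) matrix (chosen for illustration, not taken from the text) whose eigenvalues are \(3\) and \(-1\); the helper names `apply` and `in_eigenspace` are likewise illustrative.

```python
from fractions import Fraction


def apply(matrix, v):
    """Matrix-vector product, with the matrix given as a list of rows."""
    return [sum(a * x for a, x in zip(row, v)) for row in matrix]


def in_eigenspace(matrix, lam, v):
    """True if v satisfies the eigenvector equation L(v) = lam * v."""
    return apply(matrix, v) == [lam * x for x in v]


# Hypothetical example map on R^2.
L = [[1, 2],
     [2, 1]]

u, v = [1, 1], [-4, -4]          # both satisfy L(w) = 3 w
alpha, beta = Fraction(1, 2), 5  # arbitrary coefficients
w = [alpha * a + beta * b for a, b in zip(u, v)]

print(in_eigenspace(L, 3, u), in_eigenspace(L, 3, v), in_eigenspace(L, 3, w))  # True True True
print(in_eigenspace(L, 3, [1, -1]))  # False: (1, -1) satisfies L(w) = -w instead
print(in_eigenspace(L, 2, [0, 0]))   # True: the zero vector lies in every eigenspace
```

For this matrix, \(\lambda=2\) is not an eigenvalue, so the zero vector is the only solution of \(L(v)=2v\) and the corresponding eigenspace is the trivial subspace \(\{0\}\).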

Kernels, images and eigenspaces are discussed in great depth in chapters 16 and 12.

Contributor

David Cherney, Tom Denton, and Andrew Waldron (UC Davis)