8.1: Uniform Convergence


- Explain uniform convergence

We have developed precise analytic definitions of the convergence of a sequence and of the continuity of a function, and we have used these to prove the EVT and IVT for a continuous function. We now turn our attention back to the question that originally motivated these definitions: “Why are Taylor series well behaved, but Fourier series are not necessarily?” More precisely, we mentioned that whenever a power series converges, the function it converges to is continuous. Moreover, if we differentiate or integrate such a series term by term, the resulting series converges to the derivative or integral of the original series. This was not always the case for Fourier series. For example, consider the function

\[\begin{align*} f(x) &= \dfrac{4}{\pi } \left ( \sum_{k=0}^{\infty }\dfrac{(-1)^k}{2k+1}\cos \left ( \dfrac{(2k+1)\pi }{2}x \right ) \right )\\ &= \dfrac{4}{\pi } \left (\cos \left ( \dfrac{\pi }{2}x \right ) - \dfrac{1}{3}\cos \left ( \dfrac{3\pi }{2}x \right ) + \dfrac{1}{5}\cos \left ( \dfrac{5\pi }{2}x \right ) - \cdots \right ) \end{align*}\]

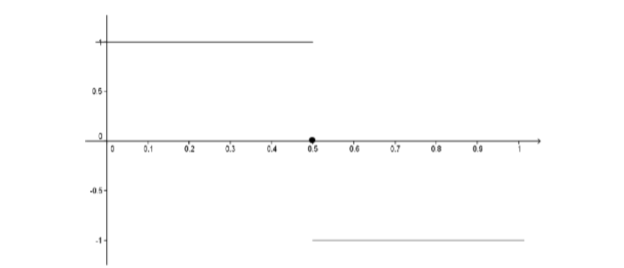

We have seen that the graph of \(f\) is given by

Figure \(\PageIndex{1}\): Graph of \(f\).

If we consider the following sequence of functions

\[\begin{align*} f_1(x) &= \dfrac{4}{\pi } \cos \left ( \dfrac{\pi }{2}x \right )\\[4pt] f_2(x) &= \dfrac{4}{\pi } \left (\cos \left ( \dfrac{\pi }{2}x \right ) - \dfrac{1}{3}\cos \left ( \dfrac{3\pi }{2}x \right ) \right )\\[4pt] f_3(x) &= \dfrac{4}{\pi } \left (\cos \left ( \dfrac{\pi }{2}x \right ) - \dfrac{1}{3}\cos \left ( \dfrac{3\pi }{2}x \right ) + \dfrac{1}{5}\cos \left ( \dfrac{5\pi }{2}x \right ) \right )\\\vdots \end{align*}\]

we see the sequence of continuous functions (\(f_n\)) converges to the non-continuous function \(f\) for each real number \(x\). This does not happen with Taylor series: the partial sums of a Taylor series are polynomials and hence continuous, and what they converge to is continuous as well.
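To see this numerically, here is a small Python sketch. The helper name `fourier_partial_sum` is our own (hypothetical, not from the text); it evaluates \(f_n\), the sum of the first \(n\) terms (\(k = 0, \ldots, n-1\)) of the series above, in floating-point arithmetic, so the values are approximations.

```python
import math


def fourier_partial_sum(x: float, n: int) -> float:
    """Return f_n(x): the first n terms (k = 0, ..., n-1) of the series for f."""
    total = 0.0
    for k in range(n):
        total += (-1) ** k / (2 * k + 1) * math.cos((2 * k + 1) * math.pi * x / 2)
    return 4 / math.pi * total


# Each f_n is a finite sum of cosines, so it is continuous everywhere.
# Print f_n at a few sample points for increasing n.
for x in (0.0, 0.5, 1.0, 2.0):
    values = [round(fourier_partial_sum(x, n), 4) for n in (1, 10, 100, 1000)]
    print(f"x = {x}: {values}")
```

As \(n\) grows, the printed values should settle near 1, 1, 0 and \(-1\) for \(x = 0, 0.5, 1, 2\). Every \(f_n\) is continuous, while the limit jumps at \(x = 1\), where each partial sum (and hence the series) equals 0.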

The difficulty is quite delicate, and it took mathematicians a while to pin down the problem. There are two subtly different ways that a sequence of functions can converge: pointwise or uniformly. This distinction was touched upon by Niels Henrik Abel (1802-1829) in 1826 while studying the domain of convergence of a power series. However, the necessary formal definitions were not made explicit until Weierstrass did so in his 1841 paper Zur Theorie der Potenzreihen (On the Theory of Power Series), which was published in his collected works in 1894.

It will be instructive to look at an argument that doesn’t quite work before turning to the formal definitions we will need. In 1821 Augustin Cauchy “proved” that the infinite sum of continuous functions is continuous. Of course, it is obvious (to us) that this is not true because we’ve seen several counterexamples. But Cauchy, who was a first-rate mathematician, was so sure of the correctness of his argument that he included it in his textbook on analysis, Cours d’analyse (1821).

Find the flaw in the following “proof” that \(f\) is also continuous at \(a\).

Suppose \(f_1, f_2, f_3, f_4, \ldots\) are all continuous at \(a\) and that \(\sum_{n=1}^{\infty } f_n = f\). Let \(ε > 0\). Since \(f_n\) is continuous at \(a\), we can choose \(δ_n > 0\) such that if \(|x - a| < δ_n\), then \(|f_n(x) - f_n(a)| < \dfrac{ε}{2^n}\). Let \(δ = \inf (δ_1, δ_2, δ_3, \ldots)\). If \(|x - a| < δ\) then

\[\begin{align*} \left | f(x) - f(a) \right | &= \left | \sum_{n=1}^{\infty } f_n(x) - \sum_{n=1}^{\infty } f_n(a) \right |\\
&= \left | \sum_{n=1}^{\infty } \left (f_n(x) - f_n(a) \right ) \right |\\
&\leq \sum_{n=1}^{\infty } \left |f_n(x) - f_n(a) \right | \\
&\leq \sum_{n=1}^{\infty } \dfrac{\varepsilon }{2^n}\\
&\leq \varepsilon \sum_{n=1}^{\infty }\dfrac{1}{2^n} \\
&= \varepsilon
\end{align*}\]

Thus \(f\) is continuous at \(a\).

Let \(S\) be a subset of the real number system and let \((f_n) = (f_1, f_2, f_3, ...)\) be a sequence of functions defined on \(S\). Let \(f\) be a function defined on \(S\) as well. We say that (\(f_n\)) converges to \(f\) pointwise on \(S\) provided that for all \(x ∈ S\), the sequence of real numbers (\(f_n(x)\)) converges to the number \(f(x)\). In this case we write \(f_n\xrightarrow[]{ptwise}f\) on \(S\).

Symbolically, we have \(f_n\xrightarrow[]{ptwise}f\) on \(S ⇔ ∀ x ∈ S,∀ ε > 0, ∃ N\) such that \(( n > N ⇒|f_n(x) - f(x)| < ε)\).
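To illustrate the quantifier order in this definition, the sketch below uses the sequence \(f_n(x) = x^n\), which converges pointwise to 0 on \([0,1)\). For a fixed \(x\) and \(ε\) it finds the smallest \(N\) the definition requires. The function name `pointwise_N` is hypothetical, and floating-point powers are assumed accurate enough for these sample values.

```python
def pointwise_N(x: float, eps: float) -> int:
    """Smallest N >= 0 with |x**n - 0| < eps for every n > N, for 0 <= x < 1.

    For such x the powers x**n never increase with n, so once x**n < eps
    it stays below eps; the answer is one less than the first such n.
    """
    if not (0 <= x < 1 and eps > 0):
        raise ValueError("need 0 <= x < 1 and eps > 0")
    n = 1
    while x ** n >= eps:
        n += 1
    return n - 1


eps = 0.01
for x in (0.0, 0.5, 0.9, 0.99, 0.999):
    print(f"x = {x}: N = {pointwise_N(x, eps)}")
```

For \(ε = 0.01\) the required \(N\) is 6 at \(x = 0.5\) and 43 at \(x = 0.9\), and it grows without bound as \(x\) approaches 1. Pointwise convergence permits exactly this dependence of \(N\) on \(x\).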

Pointwise convergence is the type of convergence we have been observing to this point. By contrast, we have the following new definition.

Let \(S\) be a subset of the real number system and let \((f_n) = (f_1, f_2, f_3, ...)\) be a sequence of functions defined on \(S\). Let \(f\) be a function defined on \(S\) as well. We say that (\(f_n\)) converges to \(f\) uniformly on \(S\) provided \(∀ ε > 0, ∃ N\) such that \(n > N ⇒|f_n(x) - f(x)| < ε, ∀ x ∈ S\).

In this case we write \(f_n\xrightarrow[]{unif}f\) on \(S\).
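A standard equivalent way to state this definition is that \(f_n\xrightarrow[]{unif}f\) on \(S\) exactly when \(\sup_{x ∈ S}|f_n(x) - f(x)| → 0\) as \(n → ∞\). The Python sketch below estimates that supremum on an interval by sampling a uniform grid. The name `grid_sup_distance` and the grid size are our own choices, and a grid can only give a lower bound for the true supremum, so treat this as a sanity check rather than a proof.

```python
def grid_sup_distance(fn, f, a: float, b: float, samples: int = 10_001) -> float:
    """Estimate sup over [a, b] of |fn(x) - f(x)| by the max over a uniform grid.

    Only finitely many points are checked, so the result is a lower bound
    on the true supremum and can miss narrow spikes between grid points.
    """
    points = (a + (b - a) * i / (samples - 1) for i in range(samples))
    return max(abs(fn(x) - f(x)) for x in points)


def zero(x: float) -> float:
    """The constant limit function 0."""
    return 0.0


# f_n(x) = x**n on [0, 0.5]: the largest gap is at x = 0.5, namely 0.5**n.
for n in (1, 5, 10, 20):
    print(n, grid_sup_distance(lambda x, n=n: x ** n, zero, 0.0, 0.5))
```

For \(f_n(x) = x^n\) on \([0, 0.5]\), \(x^n\) is increasing in \(x\), so the estimate equals \(0.5^n\), which tends to 0.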

The difference between these two definitions is subtle. In pointwise convergence, we are given a fixed \(x ∈ S\) and an \(ε > 0\). Then the task is to find an \(N\) that works for that particular \(x\) and \(ε\). In uniform convergence, one is given \(ε > 0\) and must find a single \(N\) that works for that particular \(ε\) but also simultaneously (uniformly) for all \(x ∈ S\). Clearly uniform convergence implies pointwise convergence, since an \(N\) that works uniformly for all \(x\) also works for each individual \(x\). However, the converse is not true. This will become evident, but first consider the following example.

Let \(0 < b < 1\) and consider the sequence of functions (\(f_n\)) defined on \([0,b]\) by \(f_n(x) = x^n\). Use the definition to show that \(f_n\xrightarrow[]{unif}0\) on \([0,b]\).

- Hint

-

\(|x^n - 0| = x^n ≤ b^n\) for every \(x ∈ [0,b]\).
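The hint says the single bound \(b^n\) controls every \(x\) in \([0,b]\) at once. The sketch below turns that into an explicit \(N\) that depends only on \(b\) and \(ε\), then spot-checks it on a grid. Both function names are hypothetical, and the grid check is only a numerical sanity test, not the requested proof.

```python
def uniform_N(b: float, eps: float) -> int:
    """Smallest N >= 0 with b**n < eps for every n > N, assuming 0 < b < 1.

    Since |x**n - 0| = x**n <= b**n for every x in [0, b], this N depends
    only on b and eps, and it works for all x in [0, b] simultaneously.
    """
    if not (0 < b < 1 and eps > 0):
        raise ValueError("need 0 < b < 1 and eps > 0")
    n = 1
    while b ** n >= eps:
        n += 1
    return n - 1


def spot_check(b: float, eps: float, samples: int = 1001) -> bool:
    """Check x**n < eps at n = uniform_N(b, eps) + 1 on a grid of [0, b].

    Larger n only make x**n smaller on [0, b], so n = N + 1 is the worst case.
    """
    n = uniform_N(b, eps) + 1
    return all((b * i / (samples - 1)) ** n < eps for i in range(samples))


print(uniform_N(0.5, 0.01), spot_check(0.5, 0.01))
print(uniform_N(0.9, 0.001), spot_check(0.9, 0.001))
```

For example, with \(b = 0.5\) and \(ε = 0.01\) the sketch gives \(N = 6\), since \(0.5^7 < 0.01 ≤ 0.5^6\); the same \(N\) serves every \(x ∈ [0, 0.5]\).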

Uniform convergence depends not only on the sequence of functions but also on the set \(S\). For example, the sequence \(\left ( f_n(x) \right ) = \left ( x^n \right )_{n=0}^{\infty }\) of Problem \(\PageIndex{2}\) does not converge uniformly on \([0,1]\). We could use the negation of the definition to prove this, but instead, it will be a consequence of the following theorem.

Consider a sequence of functions (\(f_n\)) which are all continuous on an interval \(I\). Suppose \(f_n\xrightarrow[]{unif}f\) on \(I\). Then \(f\) must be continuous on \(I\).

- Sketch of Proof

-

Let \(a ∈ I\) and let \(ε > 0\). The idea is to use uniform convergence to replace \(f\) with one of the known continuous functions \(f_n\). Specifically, by uncancelling, we can write

\[\begin{align*} \left | f(x) - f(a) \right | &= \left | f(x) - f_n(x) + f_n(x) - f_n(a) + f_n(a) - f(a) \right |\\ &\leq \left |f(x) - f_n(x) \right | + \left | f_n(x) - f_n(a) \right | + \left | f_n(a) - f(a) \right | \end{align*}\]

If we choose \(n\) large enough, then we can make the first and last terms as small as we wish, noting that the uniform convergence makes the first term uniformly small for all \(x\). Once we have a specific \(n\), then we can use the continuity of \(f_n\) to find a \(δ > 0\) such that the middle term is small whenever \(x\) is within \(δ\) of \(a\).

Provide a formal proof of Theorem \(\PageIndex{1}\) based on the above ideas.

Consider the sequence of functions (\(f_n\)) defined on \([0,1]\) by \(f_n(x) = x^n\). Show that the sequence converges to the function

\[f(x) = \begin{cases} 0 & \text{ if } x \in [0,1) \\ 1 & \text{ if } x= 1 \end{cases} \nonumber\]

pointwise on \([0,1]\), but not uniformly on \([0,1]\).
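Numerical evidence is not a proof, but the Python sketch below suggests why uniform convergence fails here. The helper `f_limit` is our own name for the limit function \(f\) above, and the sample point \(x = 1 - 0.001/n\) is an illustrative choice: it lies in \([0,1)\), where \(f(x) = 0\), yet \(x^n\) is still close to 1.

```python
def f_limit(x: float) -> float:
    """Pointwise limit of x**n on [0, 1]: 0 on [0, 1) and 1 at x = 1."""
    return 1.0 if x == 1.0 else 0.0


# For each n, the point x = 1 - 0.001/n lies in [0, 1), where the limit is 0,
# yet x**n is still close to 1 there, so the gap does not shrink with n.
for n in (10, 100, 1000, 10**6):
    x = 1 - 0.001 / n
    print(n, x ** n - f_limit(x))

# By contrast, at a fixed point such as x = 0.9999 the powers do tend to 0.
print(0.9999 ** (10**6))
```

Every gap printed in the loop is about 0.999 regardless of \(n\), so no single \(N\) can make \(|x^n - f(x)| < ε\) for all \(x\) when \(ε\) is small, even though each fixed \(x ∈ [0,1)\) has \(x^n → 0\).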

Notice that for the Fourier series at the beginning of this chapter,

\[f(x) = \dfrac{4}{\pi } \left (\cos \left ( \dfrac{\pi }{2}x \right ) - \dfrac{1}{3}\cos \left ( \dfrac{3\pi }{2}x \right ) + \dfrac{1}{5}\cos \left ( \dfrac{5\pi }{2}x \right ) - \dfrac{1}{7}\cos \left ( \dfrac{7\pi }{2}x \right ) + \cdots \right )\]

the convergence cannot be uniform on \((-∞,∞)\): each partial sum is continuous, so by Theorem \(\PageIndex{1}\) a uniform limit would have to be continuous, but \(f\) is not. A power series never behaves this way, since it converges to a continuous function wherever it converges. We will also see that uniform convergence is what allows us to integrate and differentiate a power series term by term.
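A quick numerical check of this, again a sketch with a hypothetical helper name: each partial sum \(f_n\) equals 0 at \(x = 1\) (every term contains \(\cos((2k+1)\pi/2) = 0\)) and is continuous, while \(f = 1\) just to the left of 1. Evaluating \(f_n\) at the illustrative point \(x = 1 - 10^{-6}/n\) shows a gap close to 1 for every \(n\) tested.

```python
import math


def fourier_partial_sum(x: float, n: int) -> float:
    """Return f_n(x), the first n terms of the series for f displayed above."""
    total = 0.0
    for k in range(n):
        total += (-1) ** k / (2 * k + 1) * math.cos((2 * k + 1) * math.pi * x / 2)
    return 4 / math.pi * total


# f equals 1 on (-1, 1), but every partial sum vanishes at x = 1 and is
# continuous, so slightly to the left of 1 the gap |f_n(x) - f(x)| stays near 1.
for n in (10, 100, 1000):
    x = 1 - 1e-6 / n
    print(n, abs(fourier_partial_sum(x, n) - 1.0))
```

Since the gap does not shrink as \(n\) grows, \(\sup_x |f_n(x) - f(x)|\) does not tend to 0, which is consistent with the convergence not being uniform.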