9.3: Lyapunov Exponent

Finally, I would like to introduce one useful analytical metric that can help characterize chaos. It is called the Lyapunov exponent, which measures how quickly an infinitesimally small distance between two initially close states grows over time:

\[|F^{t}(x_0 + ε)−F^{t}(x_0)| ≈ εe^{λt} \label{9.2} \]

The left-hand side is the distance between two initially close states after \(t\) steps, and the right-hand side is the assumption that the distance grows exponentially over time. The exponent \(λ\) measured over a long period of time (ideally \(t → ∞\)) is the Lyapunov exponent. If \(λ > 0\), small distances grow indefinitely over time, which means the stretching mechanism is in effect. If \(λ < 0\), small distances do not grow indefinitely; that is, the system eventually settles down into a periodic trajectory. Note that the Lyapunov exponent characterizes only stretching, but as we discussed before, stretching is not the only mechanism of chaos. Keep in mind that the folding mechanism is not captured by the Lyapunov exponent.
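The definition can be tried out directly by following two nearby trajectories and measuring how their gap changes. The sketch below is only an illustration: it assumes the example map \(x_t = x_{t-1} + r − x_{t-1}^2\) (not given in this section), and the names `F` and `divergence_rate`, as well as the defaults for `x0`, `eps`, and `t`, are hypothetical choices.

```python
import math

def F(x, r):
    # Assumed example map, not taken from this section: x_t = x_{t-1} + r - x_{t-1}^2
    return x + r - x * x

def divergence_rate(r, x0=0.1, eps=1e-9, t=10):
    """Estimate lambda as (1/t) * log(|F^t(x0 + eps) - F^t(x0)| / eps)."""
    a, b = x0, x0 + eps
    for _ in range(t):
        a, b = F(a, r), F(b, r)
    gap = abs(b - a)
    if gap == 0.0:
        # The two trajectories became indistinguishable in floating point.
        return -math.inf
    return math.log(gap / eps) / t

print(divergence_rate(0.5))        # trajectories approach each other
print(divergence_rate(1.9, t=20))  # trajectories separate
```

This finite-difference estimate is only meaningful while the gap stays well above floating-point round-off and well below the size of the region the trajectories occupy, which is why `t` is kept small here.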

We can do a little bit of mathematical derivation to transform Equation \ref{9.2} into a more easily computable form:

\[e^{λt} ≈ \frac{|F^{t}(x_{0} + ε)−F^{t}(x_{0})|}{ ε} \label{9.3} \]

\[\begin{align} λ &= \lim_{t→∞,\, ε→0} \frac{1}{t} \log{\frac{|F^{t}(x_{0} + ε)-F^{t}(x_{0})|}{ε}} \label{9.4} \\[4pt] &= \lim_{t→∞} \frac{1}{t} \log{\left| \frac{dF^{t}}{dx}\Big|_{x=x_0} \right|} \label{9.5} \end{align} \]

(applying the chain rule of differentiation...)

\[λ = \lim_{t→∞}\frac{1}{t} \log{\left| \frac{dF}{dx}\Big|_{x=F^{t−1}(x_0)=x_{t−1}} \cdot \frac{dF}{dx}\Big|_{x=F^{t−2}(x_0)=x_{t−2}} \cdots \frac{dF}{dx}\Big|_{x=x_{0}} \right|} \label{9.6} \]

\[ λ = \lim_{t → ∞} \frac{1}{t} \sum^{t-1}_{i=0} \log{\left| \frac{dF}{dx}\Big|_{x=x_{i}} \right|} \label{9.7} \]
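
As a quick check of the chain-rule step, take \(t = 2\), so that \(F^{2}(x) = F(F(x))\) and \(x_1 = F(x_0)\):

\[\frac{dF^{2}}{dx}\Big|_{x=x_0} = \frac{dF}{dx}\Big|_{x=F(x_0)} \cdot \frac{dF}{dx}\Big|_{x=x_0} = \frac{dF}{dx}\Big|_{x=x_1} \cdot \frac{dF}{dx}\Big|_{x=x_0} \]

Repeating this for larger \(t\) contributes one factor per visited state, and taking the logarithm turns the product into the sum over \(i\).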

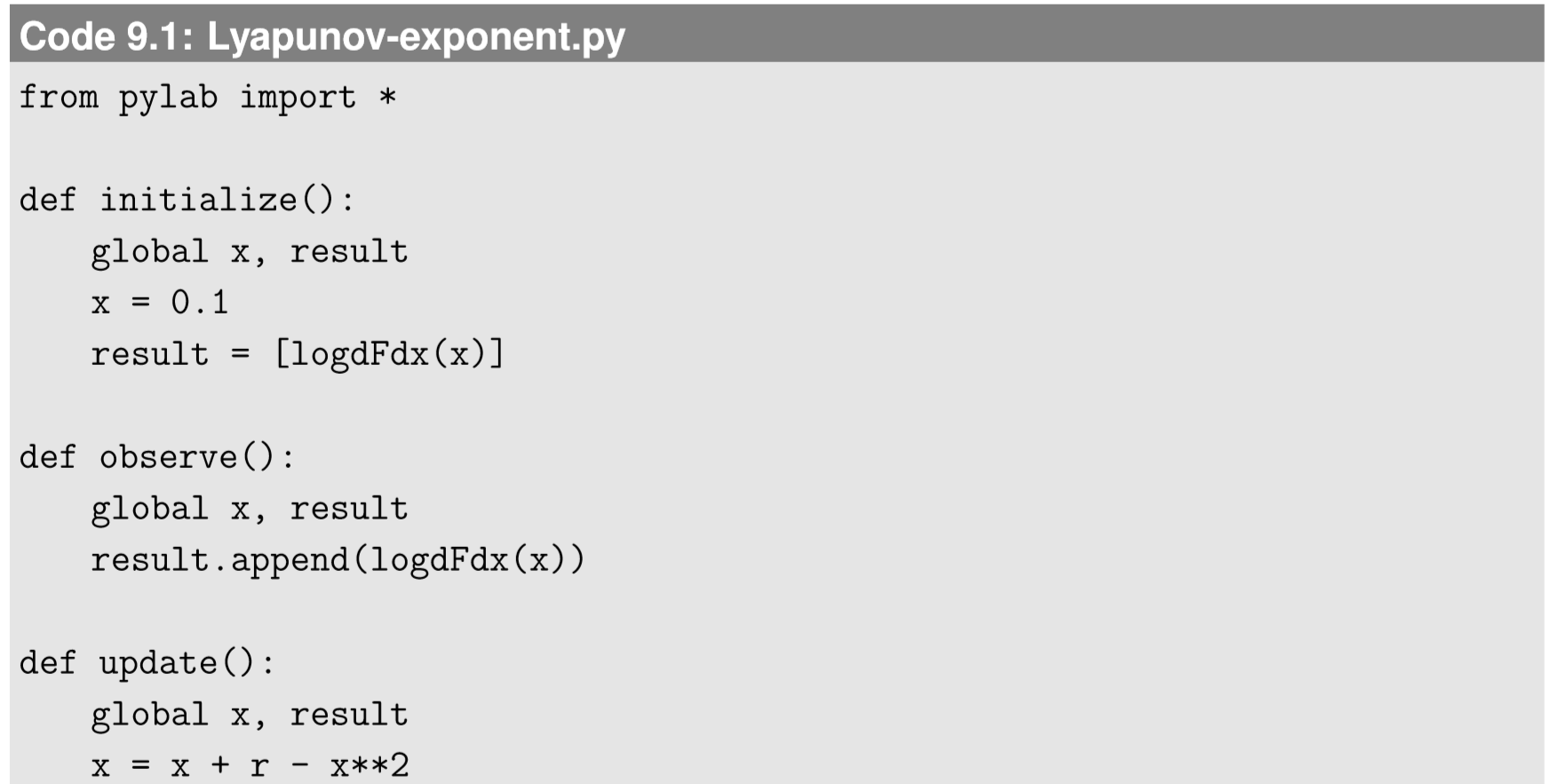

The final result is quite simple: the Lyapunov exponent is a time average of \(\log|\frac{dF}{dx}|\) at every state the system visits over the course of the simulation. This is very easy to compute numerically. Here is an example of computing the Lyapunov exponent of Eq. 8.4.3 over varying \(r\):
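
The listing below is a minimal sketch of such a computation, not necessarily the original code. It assumes that the referenced system has the form \(x_t = x_{t-1} + r − x_{t-1}^2\), so that \(\frac{dF}{dx} = 1 − 2x\). The function names, the initial state `x0 = 0.1`, the 100 steps, and the sweep over \(0 ≤ r < 2\) are illustrative choices.

```python
import math

def log_dFdx(x):
    # log|dF/dx| for the assumed map F(x) = x + r - x**2, whose derivative is 1 - 2x.
    d = abs(1.0 - 2.0 * x)
    return math.log(d) if d > 0.0 else -math.inf

def lyapunov_exponent(r, x0=0.1, steps=100):
    """Average log|dF/dx| over the visited states x_0, ..., x_{steps-1}."""
    x = x0
    total = 0.0
    for _ in range(steps):
        total += log_dFdx(x)
        x = x + r - x * x  # assumed form of the map
    return total / steps

# Sweep r over [0, 2) in increments of 0.01 (illustrative range and resolution).
r_values = [0.01 * i for i in range(200)]
lambdas = [lyapunov_exponent(r) for r in r_values]
```

Plotting `lambdas` against `r_values`, together with a horizontal line at \(λ = 0\), gives the kind of curve discussed next. A larger `steps` approximates the \(t → ∞\) limit more closely, and the function returns negative infinity if a trajectory lands exactly on a state where the derivative is zero.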

Figure 9.3.1 shows the result. By comparing this figure with the bifurcation diagram (Fig. 8.4.3), you will notice that the parameter range where the Lyapunov exponent takes positive values nicely matches the range where the system shows chaotic behavior. Also, whenever a bifurcation occurs (e.g., \(r = 1\), \(1.5\), etc.), the Lyapunov exponent touches the \(λ = 0\) line, indicating the criticality of those parameter values. Finally, there are several locations in the plot where the Lyapunov exponent diverges to negative infinity (the dips may not look that deep in the plot, but they are indeed infinitely deep). Such values occur when the system converges to an extremely stable equilibrium point with \(\frac{dF^{t}}{dx}\big|_{x=x_{0}} ≈ 0\) for certain \(t\). Since the definition of the Lyapunov exponent contains the logarithm of this derivative, the exponent diverges to negative infinity whenever the derivative becomes zero.
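
Both observations can be checked by hand under one assumption, namely that the system has the form \(x_t = x_{t-1} + r − x_{t-1}^2\) (the equation itself is not reproduced in this section). Its equilibrium point \(x_{eq} = \sqrt{r}\) is stable for \(0 < r < 1\), and a trajectory that settles there contributes the same term to the time average at every step:

\[\frac{dF}{dx} = 1 − 2x, \qquad λ = \log{|1 − 2\sqrt{r}|} \quad (0 < r < 1) \]

Under that assumption, \(λ → 0\) as \(r → 1\), where \(|1 − 2\sqrt{r}| = 1\) and the first bifurcation occurs, and \(λ → −∞\) at \(r = 0.25\), where the equilibrium point sits at \(x = 0.5\) and the derivative vanishes.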

Plot the Lyapunov exponent of the logistic map (Eq. (\ref{8.4.9})) for \(0 < r < 4\), and compare the result with its bifurcation diagram.

Plot the bifurcation diagram and the Lyapunov exponent of the following discrete-time dynamical system for \(r > 0\):

\[x_{t}= \cos^{2}(rx_{t-1})\label{9.8} \]

Then explain in words how its dynamics change over \(r\).