2.7: Constrained Optimization - Lagrange Multipliers

In Sections 2.5 and 2.6 we were concerned with finding maxima and minima of functions without any constraints on the variables (other than being in the domain of the function). What would we do if there were constraints on the variables? The following example illustrates a simple case of this type of problem.

Example 2.24

For a rectangle whose perimeter is 20 m, find the dimensions that will maximize the area.

Solution

The area \(A\) of a rectangle with width \(x\) and height \(y\) is \(A = x y\). The perimeter \(P\) of the rectangle is then given by the formula \(P = 2x+2y\). Since we are given that the perimeter \(P = 20\), this problem can be stated as:

\[\nonumber \begin{align}\text{Maximize : }&f (x, y) = x y \\[4pt] \nonumber \text{given : }&2x+2y = 20 \end{align}\]

The reader is probably familiar with a simple method, using single-variable calculus, for solving this problem. Since we must have \(2x + 2y = 20\), we can solve for, say, \(y\) in terms of \(x\) using that equation. This gives \(y = 10 - x\), which we then substitute into \(f\) to get \(f (x, y) = x y = x(10 - x) = 10x - x^2\). This is now a function of \(x\) alone, so we just have to maximize the function \(f (x) = 10x - x^2\) on the interval \([0,10]\). Since \(f'(x) = 10 - 2x = 0 \Rightarrow x = 5\) and \(f''(5) = -2 < 0\), the Second Derivative Test tells us that \(x = 5\) is a local maximum for \(f\), and hence \(x = 5\) must be the global maximum on the interval \([0,10]\) (since \(x = 5\) is the only critical point and \(f = 0\) at the endpoints of the interval). Since \(y = 10 - x = 5\), the maximum area occurs for a rectangle whose width and height are both 5 m.
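As a quick numerical sanity check of this single-variable solution, the following Python sketch samples \(f (x) = 10x - x^2\) on a uniform grid over \([0,10]\) and reports the grid point with the largest area. The function name `area` and the grid size are illustrative choices, not part of the original example.

```python
def area(x):
    """Area of a rectangle with width x and perimeter 20, i.e. x * (10 - x)."""
    return x * (10 - x)

# Sample the interval [0, 10] at 1001 evenly spaced points (step 0.01).
n = 1000
grid = [10 * i / n for i in range(n + 1)]

# Pick the grid point with the largest area.
best_x = max(grid, key=area)
best_area = area(best_x)

# Width, height and area at the best grid point.
print(best_x, 10 - best_x, best_area)
```

A grid search like this only approximates the maximum in general; here it agrees with the calculus result because \(x = 5\) happens to lie on the grid.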

Notice in the above example that the ease of the solution depended on being able to solve for one variable in terms of the other in the equation \(2x+2y = 20\). But what if that were not possible (which is often the case)? In this section we will use a general method, called the Lagrange multiplier method, for solving constrained optimization problems:

\[\nonumber \begin{align} \text{Maximize (or minimize) : }&f (x, y)\quad (\text{or }f (x, y, z)) \\[4pt] \nonumber \text{given : }&g(x, y) = c \quad (\text{or }g(x, y, z) = c) \text{ for some constant } c \end{align}\]

The equation \(g(x, y) = c\) is called the constraint equation, and we say that \(x\) and \(y\) are constrained by \(g(x, y) = c\). Points \((x, y)\) which are maxima or minima of \(f (x, y)\) with the condition that they satisfy the constraint equation \(g(x, y) = c\) are called constrained maximum or constrained minimum points, respectively. Similar definitions hold for functions of three variables.

The Lagrange multiplier method for solving such problems can now be stated:

Theorem 2.7: The Lagrange Multiplier Method

Let \(f (x, y)\text{ and }g(x, y)\) be smooth functions, and suppose that \(c\) is a scalar constant such that \(\nabla g(x, y) \neq \textbf{0}\) for all \((x, y)\) that satisfy the equation \(g(x, y) = c\). Then to solve the constrained optimization problem

\[\nonumber \begin{align} \text{Maximize (or minimize) : }&f (x, y) \\[4pt] \nonumber \text{given : }&g(x, y) = c ,\end{align}\]

find the points \((x, y)\) that solve the equation \(\nabla f (x, y) = \lambda \nabla g(x, y)\) for some constant \(\lambda\) (the number \(\lambda\) is called the Lagrange multiplier). If there is a constrained maximum or minimum, then it must be such a point.
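Written out in components, and combined with the constraint itself, the condition in the theorem amounts to the following system in the unknowns \(x\), \(y\) and \(\lambda\). This is only a restatement for convenience, not an additional result:

\[\nonumber \begin{align} \dfrac{\partial f}{\partial x}(x, y) &= \lambda \dfrac{\partial g}{\partial x}(x, y) \\[4pt] \nonumber \dfrac{\partial f}{\partial y}(x, y) &= \lambda \dfrac{\partial g}{\partial y}(x, y) \\[4pt] \nonumber g(x, y) &= c \end{align}\]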

A rigorous proof of the above theorem requires use of the Implicit Function Theorem, which is beyond the scope of this text. Note that the theorem only gives a necessary condition for a point to be a constrained maximum or minimum. Whether a point \((x, y)\) that satisfies \(\nabla f (x, y) = \lambda \nabla g(x, y)\) for some \(\lambda\) actually is a constrained maximum or minimum can sometimes be determined by the nature of the problem itself. For instance, in Example 2.24 it was clear that there had to be a global maximum.

So how can you tell when a point that satisfies the condition in Theorem 2.7 really is a constrained maximum or minimum? The answer is that it depends on the constraint function \(g(x, y)\), together with any implicit constraints. It can be shown that if the constraint equation \(g(x, y) = c\) (plus any hidden constraints) describes a bounded set \(B\) in \(\mathbb{R}^2\), then the constrained maximum or minimum of \(f (x, y)\) will occur either at a point \((x, y)\) satisfying \(\nabla f (x, y) = \lambda \nabla g(x, y)\) or at a “boundary” point of the set \(B\).

In Example 2.24 the constraint equation \(2x+2y = 20\) describes a line in \(\mathbb{R}^2\), which by itself is not bounded. However, there are “hidden” constraints, due to the nature of the problem, namely \(0 \leq x, y \leq 10\), which cause that line to be restricted to a line segment in \(\mathbb{R}^2\) (including the endpoints of that line segment), which is bounded.

Example 2.25

For a rectangle whose perimeter is 20 m, use the Lagrange multiplier method to find the dimensions that will maximize the area.

Solution

As we saw in Example 2.24, with \(x\) and \(y\) representing the width and height, respectively, of the rectangle, this problem can be stated as:

\[\nonumber \begin{align} \text{Maximize : }&f (x, y) = x y \\[4pt] \nonumber \text{given : }&g(x, y) = 2x+2y = 20 \end{align}\]

Then solving the equation \(\nabla f (x, y) = \lambda \nabla g(x, y)\) for some \(\lambda\) means solving the equations \(\dfrac{\partial f}{\partial x} = \lambda \dfrac{\partial g}{\partial x}\text{ and }\dfrac{\partial f}{\partial y} = \lambda \dfrac{\partial g}{\partial y}\), namely:

\[\nonumber \begin{align} y &=2\lambda ,\\[4pt] \nonumber x &=2\lambda \end{align}\]

The general idea is to solve for \(\lambda\) in both equations, then set those expressions equal (since they both equal \(\lambda\)) to solve for \(x \text{ and }y\). Doing this we get

\[\nonumber \dfrac{y}{2} = \lambda = \dfrac{x}{2} \Rightarrow x = y ,\]

so now substitute either of the expressions for \(x \text{ or }y\) into the constraint equation to solve for \(x \text{ and }y\):

\[\nonumber 20 = g(x, y) = 2x+2y = 2x+2x = 4x \quad \Rightarrow \quad x = 5 \quad \Rightarrow \quad y = 5\]

There must be a maximum area: on the line segment described by the constraint (with \(0 \leq x, y \leq 10\)) the area is 0 at the endpoints, while \(f (5,5) = 25 > 0\). So the point \((5,5)\) that we found (called a constrained critical point) must be the constrained maximum.

\(\therefore\) The maximum area occurs for a rectangle whose width and height both are 5 m.
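The condition \(\nabla f = \lambda \nabla g\) at \((5,5)\) can also be checked numerically. The sketch below approximates both gradients by central differences and recovers \(\lambda\) from each component. The helper `grad` and the step size `h` are illustrative assumptions, not part of the method itself.

```python
def f(x, y):
    """Objective: area of the rectangle."""
    return x * y

def g(x, y):
    """Constraint function: perimeter of the rectangle."""
    return 2 * x + 2 * y

def grad(func, x, y, h=1e-6):
    """Central-difference approximation of the gradient of func at (x, y)."""
    dfdx = (func(x + h, y) - func(x - h, y)) / (2 * h)
    dfdy = (func(x, y + h) - func(x, y - h)) / (2 * h)
    return dfdx, dfdy

x0, y0 = 5.0, 5.0
fx, fy = grad(f, x0, y0)
gx, gy = grad(g, x0, y0)

# If grad f = lambda * grad g, both component ratios give the same lambda.
lam_x = fx / gx
lam_y = fy / gy
print(lam_x, lam_y, g(x0, y0))
```

Both ratios should come out close to \(\lambda = 2.5\), and the last value confirms that \((5,5)\) satisfies the constraint \(2x+2y = 20\).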

Example 2.26

Find the points on the circle \(x^2 + y^2 = 80\) which are closest to and farthest from the point \((1,2)\).

Solution

The distance \(d\) from any point \((x, y)\) to the point \((1,2)\) is

\[\nonumber d = \sqrt{ (x-1)^2 +(y-2)^2} ,\]

and minimizing (or maximizing) the distance is equivalent to minimizing (or maximizing) the square of the distance. Thus the problem can be stated as:

\[\nonumber \begin{align}\text{Maximize (and minimize) : }&f (x, y) = (x-1)^2 +(y-2)^2 \\[4pt] \nonumber \text{given : }&g(x, y) = x^2 + y^2 = 80 \end{align} \]

Solving \(\nabla f (x, y) = \lambda \nabla g(x, y)\) means solving the following equations:

\[\nonumber \begin{align}2(x-1) &= 2\lambda x , \\[4pt] \nonumber 2(y-2) &= 2\lambda y \end{align} \]

Note that \(x \neq 0\) since otherwise we would get \(-2 = 0\) in the first equation. Similarly, \(y \neq 0\). So we can solve both equations for \(\lambda\) as follows:

\[\nonumber \dfrac{x-1}{x} = \lambda = \dfrac{y-2}{y} \Rightarrow x y- y = x y-2x \quad \Rightarrow \quad y = 2x\]

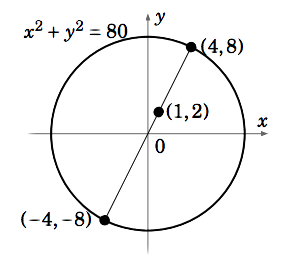

Substituting this into \(g(x, y) = x^2 + y^2 = 80\) yields \(5x^2 = 80\), so \(x = \pm 4\). So the two constrained critical points are \((4,8)\text{ and }(-4,-8)\). Since \(f (4,8) = 45 \text{ and }f (-4,-8) = 125\), and since there must be points on the circle closest to and farthest from \((1,2)\), it must be the case that \((4,8)\) is the point on the circle closest to \((1,2)\text{ and }(-4,-8)\) is the farthest from \((1,2)\) (see Figure 2.7.1).

Notice that since the constraint equation \(x^2+y^2 = 80\) describes a circle, which is a bounded set in \(\mathbb{R}^2\), then we were guaranteed that the constrained critical points we found were indeed the constrained maximum and minimum.
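The result of Example 2.26 can be cross-checked by brute force. The following sketch, which assumes a parametrization of the circle by angle and an arbitrary number of sample points, evaluates the squared distance to \((1,2)\) at many points on the circle and picks out the smallest and largest values.

```python
import math

def f(x, y):
    """Squared distance from (x, y) to the point (1, 2)."""
    return (x - 1) ** 2 + (y - 2) ** 2

r = math.sqrt(80)   # radius of the circle x^2 + y^2 = 80
n = 100000          # number of sample angles (an arbitrary choice)

# Points on the circle at evenly spaced angles.
points = [
    (r * math.cos(2 * math.pi * k / n), r * math.sin(2 * math.pi * k / n))
    for k in range(n)
]

closest = min(points, key=lambda p: f(*p))
farthest = max(points, key=lambda p: f(*p))

print(closest, f(*closest))
print(farthest, f(*farthest))
```

The sampled points should land near \((4,8)\) and \((-4,-8)\), with squared distances near 45 and 125, up to the resolution of the sampling.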

The Lagrange multiplier method can be extended to functions of three variables.

Example 2.27

\[\nonumber \begin{align} \text{Maximize (and minimize) : }&f (x, y, z) = x+ z \\[4pt] \nonumber \text{given : }&g(x, y, z) = x^2 + y^2 + z^2 = 1 \end{align}\]

Solution

Solve the equation \(\nabla f (x, y, z) = \lambda \nabla g(x, y, z)\):

\[\nonumber \begin{align} 1 &= 2\lambda x \\[4pt] 0 &= 2\lambda y \\[4pt] \nonumber 1 &= 2\lambda z \end{align}\]

The first equation implies \(\lambda \neq 0\) (otherwise we would have \(1 = 0\)), so we can divide by \(\lambda\) in the second equation to get \(y = 0\), and we can divide by \(\lambda\) in the first and third equations to get \(x = \dfrac{1}{2\lambda} = z\). Substituting these expressions into the constraint equation \(g(x, y, z) = x^2 + y^2 + z^2 = 1\) yields the constrained critical points \(\left (\dfrac{1}{\sqrt{2}},0,\dfrac{1}{\sqrt{2}} \right )\) and \(\left ( \dfrac{-1}{\sqrt{2}} ,0,\dfrac{-1}{\sqrt{2}}\right )\). Since \(f \left ( \dfrac{1}{\sqrt{2}} ,0,\dfrac{1}{\sqrt{2}}\right ) > f \left ( \dfrac{-1}{\sqrt{2}} ,0,\dfrac{-1}{\sqrt{2}}\right )\), and since the constraint equation \(x^2 + y^2 + z^2 = 1\) describes a sphere (which is bounded) in \(\mathbb{R}^3\), \(\left ( \dfrac{1}{\sqrt{2}} ,0,\dfrac{1}{\sqrt{2}}\right )\) is the constrained maximum point and \(\left ( \dfrac{-1}{\sqrt{2}} ,0,\dfrac{-1}{\sqrt{2}}\right )\) is the constrained minimum point.
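For readers who want the substitution step spelled out, putting \(x = z = \dfrac{1}{2\lambda}\) and \(y = 0\) into the constraint equation gives:

\[\nonumber x^2 + y^2 + z^2 = \left ( \dfrac{1}{2\lambda} \right )^2 + 0^2 + \left ( \dfrac{1}{2\lambda} \right )^2 = \dfrac{1}{2\lambda^2} = 1 \quad \Rightarrow \quad \lambda = \pm \dfrac{1}{\sqrt{2}} \quad \Rightarrow \quad x = z = \dfrac{1}{2\lambda} = \pm \dfrac{1}{\sqrt{2}}\]

The corresponding values of \(f (x, y, z) = x + z\) are \(\sqrt{2}\) at the constrained maximum point and \(-\sqrt{2}\) at the constrained minimum point.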

So far we have not attached any significance to the value of the Lagrange multiplier \(\lambda\). We needed \(\lambda\) only to find the constrained critical points, but made no use of its value. It turns out that \(\lambda\) gives an approximation of the change in the constrained maximum or minimum value of the function \(f (x, y)\) when the constant \(c\) in the constraint equation \(g(x, y) = c\) is increased by 1.

For example, in Example 2.25 we showed that the constrained optimization problem

\[\nonumber \begin{align}\text{Maximize : }&f (x, y) = x y \\[4pt] \nonumber \text{given : }&g(x, y) = 2x+2y = 20 \end{align}\]

had the solution \((x, y) = (5,5)\), and that \(\lambda = \dfrac{x}{2} = \dfrac{y}{2}\). Thus, \(\lambda = 2.5\). In a similar fashion we could show that the constrained optimization problem

\[\nonumber \begin{align} \text{Maximize : }&f (x, y) = x y \\[4pt] \nonumber \text{given : }&g(x, y) = 2x+2y = 21 \end{align}\]

has the solution \((x, y) = (5.25,5.25)\). So we see that the value of \(f (x, y)\) at the constrained maximum increased from \(f (5,5) = 25 \text{ to }f (5.25,5.25) = 27.5625\), i.e. it increased by 2.5625 when we increased the value of \(c\) in the constraint equation \(g(x, y) = c \text{ from }c = 20 \text{ to }c = 21\). Notice that \(\lambda = 2.5\) is close to 2.5625, that is,

\[\nonumber \lambda \approx \Delta f=f (\text{new max. pt})- f (\text{old max. pt})\]
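This comparison can be reproduced with a few lines of Python. The sketch below assumes the closed-form solution obtained by repeating the steps of Example 2.25 with a general perimeter \(c\) in place of 20, namely \(x = y = c/4\) and \(\lambda = x/2\). The function name `solve_rectangle` is hypothetical.

```python
def solve_rectangle(c):
    """Constrained maximum of f(x, y) = x*y subject to 2x + 2y = c.

    Assumes the closed form from repeating Example 2.25 with a general c:
    x = y = c/4 and lambda = x/2.
    """
    x = y = c / 4
    lam = x / 2
    return x, y, lam, x * y

x20, y20, lam20, max20 = solve_rectangle(20)
x21, y21, lam21, max21 = solve_rectangle(21)

# Change in the constrained maximum when c goes from 20 to 21.
change = max21 - max20
print(lam20, change)

# Change per unit of c for smaller increases in c.
for dc in (1.0, 0.1, 0.01):
    _, _, _, max_new = solve_rectangle(20 + dc)
    print(dc, (max_new - max20) / dc)
```

In this example the change per unit of \(c\) moves closer to \(\lambda = 2.5\) as the increase in \(c\) shrinks, which is consistent with reading \(\lambda\) as a rate of change of the constrained maximum with respect to \(c\).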

Finally, note that solving the equation \(\nabla f (x, y) = \lambda \nabla g(x, y)\) together with the constraint equation \(g(x, y) = c\) means having to solve a system of three (possibly nonlinear) equations in the three unknowns \(x\), \(y\) and \(\lambda\), which, as we have seen before, may not be possible to do. And the 3-variable case can get even more complicated. All of this somewhat restricts the usefulness of Lagrange’s method to relatively simple functions. Luckily there are many numerical methods for solving constrained optimization problems, though we will not discuss them here.