2.3.2: Transformation Composition and Matrix Multiplication


\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\( \newcommand{\dsum}{\displaystyle\sum\limits} \)

\( \newcommand{\dint}{\displaystyle\int\limits} \)

\( \newcommand{\dlim}{\displaystyle\lim\limits} \)

\( \newcommand{\id}{\mathrm{id}}\) \( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\kernel}{\mathrm{null}\,}\) \( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\) \( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\) \( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\AA}{\unicode[.8,0]{x212B}}\)

\( \newcommand{\vectorA}[1]{\vec{#1}} % arrow\)

\( \newcommand{\vectorAt}[1]{\vec{\text{#1}}} % arrow\)

\( \newcommand{\vectorB}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vectorC}[1]{\textbf{#1}} \)

\( \newcommand{\vectorD}[1]{\overrightarrow{#1}} \)

\( \newcommand{\vectorDt}[1]{\overrightarrow{\text{#1}}} \)

\( \newcommand{\vectE}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash{\mathbf {#1}}}} \)

\(\newcommand{\longvect}{\overrightarrow}\)

\(\newcommand{\avec}{\mathbf a}\) \(\newcommand{\bvec}{\mathbf b}\) \(\newcommand{\cvec}{\mathbf c}\) \(\newcommand{\dvec}{\mathbf d}\) \(\newcommand{\dtil}{\widetilde{\mathbf d}}\) \(\newcommand{\evec}{\mathbf e}\) \(\newcommand{\fvec}{\mathbf f}\) \(\newcommand{\nvec}{\mathbf n}\) \(\newcommand{\pvec}{\mathbf p}\) \(\newcommand{\qvec}{\mathbf q}\) \(\newcommand{\svec}{\mathbf s}\) \(\newcommand{\tvec}{\mathbf t}\) \(\newcommand{\uvec}{\mathbf u}\) \(\newcommand{\vvec}{\mathbf v}\) \(\newcommand{\wvec}{\mathbf w}\) \(\newcommand{\xvec}{\mathbf x}\) \(\newcommand{\yvec}{\mathbf y}\) \(\newcommand{\zvec}{\mathbf z}\) \(\newcommand{\rvec}{\mathbf r}\) \(\newcommand{\mvec}{\mathbf m}\) \(\newcommand{\zerovec}{\mathbf 0}\) \(\newcommand{\onevec}{\mathbf 1}\) \(\newcommand{\real}{\mathbb R}\) \(\newcommand{\twovec}[2]{\left[\begin{array}{r}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\ctwovec}[2]{\left[\begin{array}{c}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\threevec}[3]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\cthreevec}[3]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\fourvec}[4]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\cfourvec}[4]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\fivevec}[5]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\cfivevec}[5]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\mattwo}[4]{\left[\begin{array}{rr}#1 \amp #2 \\ #3 \amp #4 \\ \end{array}\right]}\) \(\newcommand{\laspan}[1]{\text{Span}\{#1\}}\) \(\newcommand{\bcal}{\cal B}\) \(\newcommand{\ccal}{\cal C}\) \(\newcommand{\scal}{\cal S}\) \(\newcommand{\wcal}{\cal W}\) \(\newcommand{\ecal}{\cal E}\) \(\newcommand{\coords}[2]{\left\{#1\right\}_{#2}}\) \(\newcommand{\gray}[1]{\color{gray}{#1}}\) \(\newcommand{\lgray}[1]{\color{lightgray}{#1}}\) 
\(\newcommand{\rank}{\operatorname{rank}}\) \(\newcommand{\row}{\text{Row}}\) \(\newcommand{\col}{\text{Col}}\) \(\renewcommand{\row}{\text{Row}}\) \(\newcommand{\nul}{\text{Nul}}\) \(\newcommand{\var}{\text{Var}}\) \(\newcommand{\corr}{\text{corr}}\) \(\newcommand{\len}[1]{\left|#1\right|}\) \(\newcommand{\bbar}{\overline{\bvec}}\) \(\newcommand{\bhat}{\widehat{\bvec}}\) \(\newcommand{\bperp}{\bvec^\perp}\) \(\newcommand{\xhat}{\widehat{\xvec}}\) \(\newcommand{\vhat}{\widehat{\vvec}}\) \(\newcommand{\uhat}{\widehat{\uvec}}\) \(\newcommand{\what}{\widehat{\wvec}}\) \(\newcommand{\Sighat}{\widehat{\Sigma}}\) \(\newcommand{\lt}{<}\) \(\newcommand{\gt}{>}\) \(\newcommand{\amp}{&}\) \(\definecolor{fillinmathshade}{gray}{0.9}\)

Composition means the same thing in linear algebra as it does in calculus. Here is the definition.

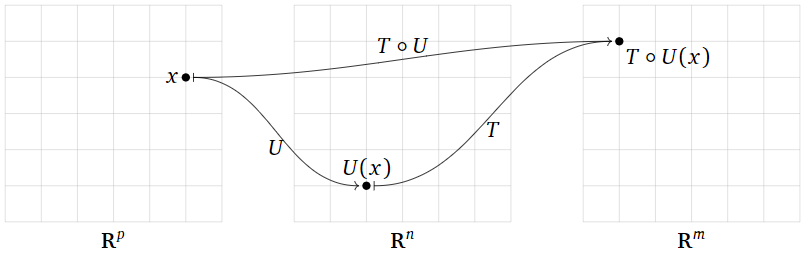

Let \(T\colon\mathbb{R}^n \to\mathbb{R}^m \) and \(U\colon\mathbb{R}^p \to\mathbb{R}^n \) be transformations. Their composition is the transformation \(T\circ U\colon\mathbb{R}^p \to\mathbb{R}^m \) defined by

\[ (T\circ U)(\vec{x}) = T(U(\vec{x})) \nonumber \]

Composing two transformations means chaining them together: \(T\circ U\) is the transformation that first applies \(U\), then applies \(T\) (note the order of operations). More precisely, to evaluate \(T\circ U\) on an input vector \(\vec{x}\), first you evaluate \(U(\vec{x})\), then you take this output vector of \(U\) and use it as an input vector of \(T\): that is, \((T\circ U)(\vec{x}) = T(U(\vec{x}))\). Of course, this only makes sense when the outputs of \(U\) are valid inputs of \(T\), that is: when the range of \(U\) is contained in the domain of \(T\).

Figure \(\PageIndex{1}\)

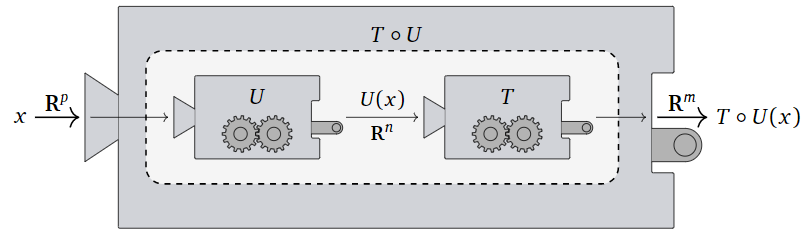

Here is a picture of the composition \(T\circ U\) as a “machine” that first runs \(U\), then takes its output and feeds it into \(T\):

Figure \(\PageIndex{2}\)

- In order for \(T\circ U\) to be defined, the codomain of \(U\) must equal the domain of \(T\).

- The domain of \(T\circ U\) is the domain of \(U\).

- The codomain of \(T\circ U\) is the codomain of \(T\).

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = x^2\) and \(g\colon \mathbb{R} \to\mathbb{R} \) by \(g(x) = x^3\). The composition \(f\circ g\colon \mathbb{R} \to\mathbb{R} \) is the transformation defined by the rule

\[ f\circ g(x) = f(g(x)) = f(x^3) = (x^3)^2 = x^6 \nonumber \]

For instance, \(f\circ g(-2) = f(-8) = 64.\)
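The definition of composition translates directly into code. The following is a minimal Python sketch, assuming transformations are modeled as ordinary Python functions; the helper name `compose` is our own and does not come from the text. Note that `compose(f, g)` applies `g` first, matching the order in \(f\circ g\).

```python
def compose(T, U):
    """Return the transformation T∘U, which applies U first and then T."""
    return lambda x: T(U(x))

def f(x):
    return x ** 2

def g(x):
    return x ** 3

f_after_g = compose(f, g)   # f∘g: x ↦ (x^3)^2 = x^6
print(f_after_g(-2))        # 64, since g(-2) = -8 and f(-8) = 64
```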

Define \(T\colon\mathbb{R}^3 \to\mathbb{R}^2 \) and \(U\colon\mathbb{R}^2 \to\mathbb{R}^3 \) by

\[T(\vec{x})=\left[\begin{array}{ccc}1&1&0\\0&1&1\end{array}\right]\vec{x}\quad\text{and}\quad U(\vec{x})=\left[\begin{array}{cc}1&0\\0&1\\1&0\end{array}\right]\vec{x}\nonumber\]

Their composition is the transformation \(T\circ U\colon\mathbb{R}^2 \to\mathbb{R}^2\), and we compute:

\[ (T\circ U)\left( \left[\begin{array} {c} a \\ b \end{array} \right] \right)=T\left( U\left(\left[\begin{array} {c} a \\ b \end{array} \right] \right)\right)=T\left( \left[\begin{array}{cc}1&0\\0&1\\1&0\end{array}\right]\left[\begin{array} {c} a \\ b \end{array} \right] \right) \nonumber\]

\[ = T\left( \left[\begin{array} {c} a \\ b \\ a \end{array} \right]\right) =\left[\begin{array}{ccc}1&1&0\\0&1&1\end{array}\right]\left[\begin{array} {c} a \\ b \\ a \end{array} \right]=\left[\begin{array} {c} a+ b \\ a+b \end{array} \right] \nonumber\]

This shows that \(T\circ U\) is a matrix transformation with standard matrix \(\left[ \begin{array} {cc} 1 & 1 \\ 1 & 1 \end{array} \right]\).
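As a sanity check on this computation, here is a small Python sketch that applies \(U\) and then \(T\) to a sample vector and compares the result with multiplication by \(\left[ \begin{array} {cc} 1 & 1 \\ 1 & 1 \end{array} \right]\). Matrices are stored as lists of rows; the helper `matvec` and the sample values \(a=2, b=5\) are our own choices for illustration.

```python
def matvec(M, x):
    """Matrix-vector product: entry i is the dot product of row i of M with x."""
    return [sum(m_ij * x_j for m_ij, x_j in zip(row, x)) for row in M]

T_mat = [[1, 1, 0],
         [0, 1, 1]]   # T: R^3 -> R^2
U_mat = [[1, 0],
         [0, 1],
         [1, 0]]      # U: R^2 -> R^3

def T(x):
    return matvec(T_mat, x)

def U(x):
    return matvec(U_mat, x)

a, b = 2, 5
print(U([a, b]))                         # [2, 5, 2], i.e. (a, b, a)
print(T(U([a, b])))                      # [7, 7], i.e. (a+b, a+b)
print(matvec([[1, 1], [1, 1]], [a, b]))  # [7, 7]
```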

Let \(S,T,U\) be transformations and let \(c\) be a scalar. Suppose that \(T\colon\mathbb{R}^n \to\mathbb{R}^m\), and that in each of the following identities, the domains and the codomains are compatible when necessary for the composition to be defined. The following properties are easily verified:

\[\begin{align*} (S + T)\circ U \amp= S\circ U + T\circ U \amp S\circ(T+U) \amp= S\circ T+S\circ U \rlap{\;\;\text{ if $S$ is linear}}\\ c(T\circ U) \amp= (cT)\circ U \amp c(T\circ U) \amp= T\circ(cU) \rlap{\;\;\text{ if $T$ is linear}}\\ T\circ\mathcal{I} \amp= T \amp \mathcal{I}\circ T \amp= T\\ \amp \amp S\circ(T\circ U) \amp= (S\circ T)\circ U \end{align*}\]

The final property is called associativity. Unwrapping both sides, it says:

\[ S\circ(T\circ U)(\vec{x}) = S(T\circ U(\vec{x})) = S(T(U(\vec{x}))) = S\circ T(U(\vec{x})) = (S\circ T)\circ U(\vec{x}) \nonumber \]

In other words, both \(S\circ (T\circ U)\) and \((S\circ T)\circ U\) are the transformation defined by first applying \(U\), then \(T\text{,}\) then \(S\).

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = x^2\) and \(g\colon \mathbb{R} \to\mathbb{R} \) by \(g(x) = e^x\). The composition \(f\circ g\colon \mathbb{R} \to\mathbb{R} \) is the transformation defined by the rule

\[ f\circ g(x) = f(g(x)) = f(e^x) = (e^x)^2 = e^{2x} \nonumber \]

The composition \(g\circ f\colon \mathbb{R} \to\mathbb{R} \) is the transformation defined by the rule

\[ g\circ f(x) = g(f(x)) = g(x^2) = e^{x^2} \nonumber \]

Note that \(e^{x^2}\neq e^{2x}\) in general; for instance, if \(x=1\) then \(e^{x^2} = e\) and \(e^{2x} = e^2\). Thus \(f \circ g\) is not equal to \(g \circ f\text{,}\) and we can already see with functions of one variable that composition of functions is not commutative.
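The same comparison can be checked numerically. This Python sketch reuses a hypothetical `compose` helper (our own name, applying its second argument first) and evaluates both compositions at \(x=1\).

```python
import math

def compose(F, G):
    """Return F∘G: apply G first, then F."""
    return lambda x: F(G(x))

def f(x):
    return x ** 2

def g(x):
    return math.exp(x)

f_after_g = compose(f, g)   # x ↦ e^(2x)
g_after_f = compose(g, f)   # x ↦ e^(x^2)
print(f_after_g(1))  # e^2, approximately 7.389
print(g_after_f(1))  # e, approximately 2.718
```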

Composition of transformations is not commutative in general. That is, in general, \(T\circ U\neq U\circ T\text{,}\) even when both compositions are defined.

Let \(T\colon\mathbb{R}^n \to\mathbb{R}^m \) and \(U\colon\mathbb{R}^p \to\mathbb{R}^n \) be linear transformations, and let \(A\) and \(B\) be their standard matrices, respectively, so \(A\) is an \(m\times n\) matrix and \(B\) is an \(n\times p\) matrix. Then \(T\circ U\colon\mathbb{R}^p \to\mathbb{R}^m \) is a linear transformation, and its standard matrix is formed by multiplying each column of \(B\) by the matrix \(A\).

Proof

First we verify that \(T\circ U\) is linear. Let \(\vec{u},\vec{v}\) be vectors in \(\mathbb{R}^p \). Then

\[ \begin{aligned} T\circ U(\vec{u}+\vec{v}) &= T(U(\vec{u}+\vec{v})) = T(U(\vec{u})+U(\vec{v})) \\ &= T(U(\vec{u}))+T(U(\vec{v})) = T\circ U(\vec{u}) + T\circ U(\vec{v}) \end{aligned} \]

If \(c\) is a scalar, then

\[ T\circ U(c\vec{v}) = T(U(c\vec{v})) = T(cU(\vec{v})) = cT(U(\vec{v})) = cT\circ U(\vec{v}) \nonumber \]

Since \(T\circ U\) satisfies the two defining properties, it is a linear transformation.

Now that we know that \(T\circ U\) is linear, it makes sense to compute its standard matrix. Let \(E\) be the standard matrix of \(T\circ U\), so \(T(\vec{x}) = A\vec{x}\), \(U(\vec{x}) = B\vec{x}\), and \(T\circ U(\vec{x}) = E\vec{x}\). The first column of \(E\) is \(E\vec{e}_1\), and the first column of \(B\) is \(\vec{b}_1=B\vec{e}_1\). We have

\[ T\circ U(\vec{e}_1) = T(U(\vec{e}_1)) = T(B\vec{e}_1) =T(\vec{b}_1)=A\vec{b}_1 \nonumber \]

Since the first column of \(E\) is \(E\vec{e}_1 = T\circ U(\vec{e}_1)\), this shows that the first column of \(E\) is the product of \(A\) with the first column of \(B\).

Applying the same argument to each standard coordinate vector \(\vec{e}_i\) shows that the \(i\)th column of \(E\) is the product of \(A\) with the \(i\)th column of \(B\).

Note that we could alternatively say that

\[ E\vec{x} = T\circ U(\vec{x}) = A(B\vec{x}) = (AB)\vec{x} \nonumber \]

This motivates defining the matrix product \(AB\) to be the matrix \(E\); with that definition, the last equality above holds.
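The proof also gives a recipe for computing the standard matrix of any linear transformation: evaluate it on each standard coordinate vector \(\vec{e}_i\) and use the outputs as columns. Below is a Python sketch of that recipe, assuming linear transformations are Python functions on lists; `standard_matrix` and `matvec` are hypothetical helper names. It recovers the standard matrix of \(T\circ U\) from the earlier example and checks that each column equals \(A\) times the corresponding column of \(B\).

```python
def matvec(M, x):
    """Matrix-vector product: entry i is (row i of M) · x."""
    return [sum(m * xj for m, xj in zip(row, x)) for row in M]

def standard_matrix(linear_map, n):
    """Standard matrix of a linear map on R^n: column i is linear_map(e_i)."""
    columns = [linear_map([1 if k == i else 0 for k in range(n)]) for i in range(n)]
    # Transpose the list of columns into a list of rows.
    return [list(row) for row in zip(*columns)]

A = [[1, 1, 0],
     [0, 1, 1]]
B = [[1, 0],
     [0, 1],
     [1, 0]]

def T_after_U(x):
    return matvec(A, matvec(B, x))   # (T∘U)(x) = A(Bx)

E = standard_matrix(T_after_U, 2)
print(E)  # [[1, 1], [1, 1]]

# Column i of E equals A times column i of B.
B_columns = [list(col) for col in zip(*B)]
E_columns = [list(col) for col in zip(*E)]
print(all(matvec(A, b) == e for b, e in zip(B_columns, E_columns)))  # True
```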

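The theorem justifies our choice of definition of the matrix product. This is the one and only reason that matrix products are defined in this (terrible) way. To rephrase: the standard matrix of the composition of two linear transformations is the product of their standard matrices.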

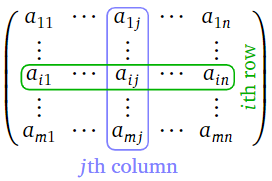

Let \(A\) be an \(m\times n\) matrix. We will generally write \(a_{ij}\) for the entry in the \(i\)th row and the \(j\)th column. It is called the \(i,j\) entry of the matrix.

Figure \(\PageIndex{3}\)

Let \(A\) be an \(m\times n\) matrix and let \(B\) be an \(n\times p\) matrix. Denote the columns of \(B\) by \(\vec{v}_1,\vec{v}_2,\ldots,\vec{v}_p\text{:}\)

\[B=\left[\begin{array}{cccc}|&|&\quad&| \\ \vec{v}_1&\vec{v}_2&\cdots &\vec{v}_p \\ |&|&\quad&|\end{array}\right] \nonumber\]

The product \(AB\) is the \(m\times p\) matrix with columns \(A\vec{v}_1,A\vec{v}_2,\ldots,A\vec{v}_p\text{:}\)

\[AB=\left[\begin{array}{cccc}|&|&\quad&| \\ A\vec{v}_1&A\vec{v}_2&\cdots &A\vec{v}_p \\ |&|&\quad &|\end{array}\right] \nonumber\]

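In other words, matrix multiplication is defined column-by-column, or “distributes over the columns of \(B\).” For example, here is the product of the standard matrices of \(T\) and \(U\) from the earlier example, computed one column at a time: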

\[\begin{aligned}\left[\begin{array}{ccc}1&1&0\\0&1&1\end{array}\right]\left[\begin{array}{cc}1&0\\0&1\\1&0\end{array}\right]&=\left[\left[\begin{array}{ccc}1&1&0\\0&1&1\end{array}\right]\left[\begin{array}{c}1\\0\\1\end{array}\right]\:\left[\begin{array}{ccc}1&1&0\\0&1&1\end{array}\right]\left[\begin{array}{c}0\\1\\0\end{array}\right]\right] \\ &=\left[\left[\begin{array}{c}1\\1\end{array}\right]\:\left[\begin{array}{c}1\\1\end{array}\right]\right]=\left[\begin{array}{cc}1&1\\1&1\end{array}\right]\end{aligned}\nonumber\]
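The column-by-column definition can be sketched in Python as follows, assuming matrices are stored as lists of rows; `matvec` and `matmul_by_columns` are our own helper names. The sketch also rejects factors whose sizes are incompatible, since each \(A\vec{v}_j\) must be defined.

```python
def matvec(M, x):
    """Matrix-vector product: entry i is (row i of M) · x."""
    return [sum(m * xj for m, xj in zip(row, x)) for row in M]

def matmul_by_columns(A, B):
    """AB defined column by column: column j of AB is A times column j of B."""
    n = len(A[0])
    if len(B) != n:
        raise ValueError("number of rows of B must equal number of columns of A")
    B_columns = [list(col) for col in zip(*B)]
    product_columns = [matvec(A, v) for v in B_columns]
    return [list(row) for row in zip(*product_columns)]   # back to a list of rows

A = [[1, 1, 0],
     [0, 1, 1]]
B = [[1, 0],
     [0, 1],
     [1, 0]]
print(matmul_by_columns(A, B))  # [[1, 1], [1, 1]]
```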

In order for the vectors \(A\vec{v}_1,A\vec{v}_2,\ldots,A\vec{v}_p\) to be defined, the number of entries of each \(\vec{v}_i\), which is the number of rows of \(B\), has to equal the number of columns of \(A\).

- In order for \(AB\) to be defined, the number of rows of \(B\) has to equal the number of columns of \(A\).

- The product of an \(\color{blue}{m}\color{black}{\times}\color{red}{n}\) matrix and an \(\color{red}{n}\color{black}{\times}\color{blue}{p}\) matrix is an \(\color{blue}{m}\color{black}{\times}\color{blue}{p}\) matrix.

If \(B\) has only one column, then \(AB\) also has one column. A matrix with one column is the same as a vector, so the definition of the matrix product generalizes the matrix-vector product defined previously.

If \(A\) is a square matrix, then we can multiply it by itself; we define its powers to be

\[ A^2 = AA \qquad A^3 = AAA \qquad \text{etc.} \nonumber \]
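A minimal Python sketch of matrix powers by repeated multiplication, assuming matrices are lists of rows and the exponent is a positive integer. The helpers `matmul` and `mat_pow` and the sample matrix are our own choices for illustration; `matmul` uses the row-column rule described later in this section.

```python
def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def mat_pow(A, k):
    """A^k for a square matrix A and an integer k >= 1, by repeated multiplication."""
    if len(A) != len(A[0]):
        raise ValueError("only square matrices can be multiplied by themselves")
    result = A
    for _ in range(k - 1):
        result = matmul(result, A)
    return result

A = [[1, 1],
     [0, 1]]
print(mat_pow(A, 2))  # [[1, 2], [0, 1]]
print(mat_pow(A, 3))  # [[1, 3], [0, 1]]
```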

Recall from Chapter 1 that the product of a row vector \(\vec{a}\) and a column vector \(\vec{x}\) is the scalar

\[ \vec{a}\cdot \vec{x}=\left[\begin{array}{cccc}a_1&a_2&\cdots &a_n\end{array}\right]\:\left[\begin{array}{c}x_1\\x_2\\ \vdots\\x_n\end{array}\right] = a_1x_1 + a_2x_2 + \cdots + a_nx_n \nonumber \]

The following procedure for finding the matrix product is much better adapted to computations by hand.

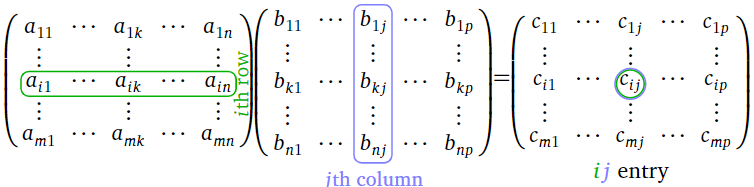

Let \(A\) be an \(m\times n\) matrix, let \(B\) be an \(n\times p\) matrix, and let \(C = AB\). Then the \(ij\) entry of \(C\) is the \(i\)th row of \(A\) times the \(j\)th column of \(B\text{:}\)

\[ c_{ij} = a_{i1}b_{1j} + a_{i2}b_{2j} + \cdots + a_{in}b_{nj}. \nonumber \]

Here is a diagram:

Figure \(\PageIndex{4}\)

The row-column rule allows us to compute the product matrix one entry at a time:

\[\begin{aligned}\left[\begin{array}{ccc}\color{Green}{1}&\color{Green}{2}&\color{Green}{3} \\ \color{black}{4}&\color{black}{5}&\color{black}{6}\end{array}\right]\:\left[\begin{array}{cc}\color{blue}{1}&\color{black}{-3} \\ \color{blue}{2}&\color{black}{-2} \\ \color{blue}{3}&\color{black}{-1}\end{array}\right] &= \left[\begin{array}{cccccc} \color{Green}{1} \color{black}{\cdot}\color{blue}{1}&\color{black}{+}&\color{Green}{2}\color{black}{\cdot}\color{blue}{2}&\color{black}{+}&\color{Green}{3}\color{black}{\cdot}\color{blue}{3}&\fbox{ } \\ {}&{}&\fbox{ }&{}&{}&\fbox{ }\end{array}\right] = \left[\begin{array}{cc}\color{purple}{14}&\color{black}{\fbox{ }} \\ \fbox{ }&\fbox{ }\end{array}\right] \\ \left[\begin{array}{ccc}1&2&3 \\ \color{Green}{4}&\color{Green}{5}&\color{Green}{6}\end{array}\right]\left[\begin{array}{cc}\color{blue}{1}&\color{black}{-3}\\ \color{blue}{2}&\color{black}{-2} \\ \color{blue}{3}&\color{black}{-1}\end{array}\right]&=\left[\begin{array}{cccccc} {}&{}&\fbox{ }&{}&{}&\fbox{ } \\ \color{Green}{4}\color{black}{\cdot}\color{blue}{1}&\color{black}{+}&\color{Green}{5}\color{black}{\cdot}\color{blue}{2}&\color{black}{+}&\color{Green}{6}\color{black}{\cdot}\color{blue}{3}&\fbox{ }\end{array}\right]=\left[\begin{array}{cc}14&\fbox{ }\\ \color{purple}{32}&\color{black}{\fbox{ }}\end{array}\right] \end{aligned}\nonumber \]

\[\begin{aligned}\left[\begin{array}{ccc}\color{Green}{1}&\color{Green}{2}&\color{Green}{3} \\ \color{black}{4}&\color{black}{5}&\color{black}{6}\end{array}\right]\:\left[\begin{array}{cc}\color{black}{1}&\color{blue}{-3} \\ \color{black}{2}&\color{blue}{-2} \\ \color{black}{3}&\color{blue}{-1}\end{array}\right] &=\left[\begin{array}{cccccc} \fbox{ } & \color{Green}{1} \color{black}{\cdot}\color{blue}{(-3)}&\color{black}{+}&\color{Green}{2}\color{black}{\cdot}\color{blue}{(-2)}&\color{black}{+}&\color{Green}{3}\color{black}{\cdot}\color{blue}{(-1)} \\ \fbox{ } & {}&{}&\fbox{ }&{}&{}\end{array}\right] = \left[\begin{array}{cc}\color{black}{14}&\color{purple}{-10 } \\ \color{black}{32 }&\fbox{ }\end{array}\right] \\ \left[\begin{array}{ccc}1&2&3 \\ \color{Green}{4}&\color{Green}{5}&\color{Green}{6}\end{array}\right] \left[\begin{array}{cc}\color{black}{1}&\color{blue}{-3}\\ \color{black}{2}&\color{blue}{-2} \\ \color{black}{3}&\color{blue}{-1}\end{array}\right]&=\left[\begin{array}{cccccc} \fbox{ }& {}&{}&\fbox{ }&{}&{} \\ \fbox{ } &\color{Green}{4}\color{black}{\cdot}\color{blue}{(-3)}&\color{black}{+}&\color{Green}{5}\color{black}{\cdot}\color{blue}{(-2)}&\color{black}{+}&\color{Green}{6}\color{black}{\cdot}\color{blue}{(-1)}\end{array}\right] =\left[\begin{array}{cc}14&-10\\ \color{black}{32}&\color{purple}{-28}\end{array}\right] \end{aligned} \nonumber \]
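The row-column rule is the formula most programs implement directly. Here is a Python sketch (matrices as lists of rows, helper name `matmul_row_column` our own) that fills in \(C\) one entry at a time using \(c_{ij} = a_{i1}b_{1j} + \cdots + a_{in}b_{nj}\), reproducing the example above.

```python
def matmul_row_column(A, B):
    """Compute C = AB one entry at a time: c_ij = sum over k of a_ik * b_kj."""
    m, n, p = len(A), len(B), len(B[0])
    if len(A[0]) != n:
        raise ValueError("number of columns of A must equal number of rows of B")
    C = [[0] * p for _ in range(m)]
    for i in range(m):
        for j in range(p):
            C[i][j] = sum(A[i][k] * B[k][j] for k in range(n))
    return C

A = [[1, 2, 3],
     [4, 5, 6]]
B = [[1, -3],
     [2, -2],
     [3, -1]]
print(matmul_row_column(A, B))  # [[14, -10], [32, -28]]
```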

Although matrix multiplication satisfies many of the properties one would expect (see the end of the section), one must be careful when doing matrix arithmetic, as there are several properties that are not satisfied in general.

- Matrix multiplication is not commutative: \(AB\) is not usually equal to \(BA\text{,}\) even when both products are defined and have the same size. (See this demonstrated in the example below.)

- Matrix multiplication does not satisfy the cancellation law: \(AB=AC\) does not imply \(B=C\text{,}\) even when \(A\neq 0\). For example,

\[\left[\begin{array}{cc}1&0\\0&0\end{array}\right]\left[\begin{array}{cc}1&2\\3&4\end{array}\right]=\left[\begin{array}{cc}1&2\\0&0\end{array}\right]=\left[\begin{array}{cc}1&0\\0&0\end{array}\right]\left[\begin{array}{cc}1&2\\5&6\end{array}\right]\nonumber\]

- It is possible for \(AB=0\text{,}\) even when \(A\neq 0\) and \(B\neq 0\). For example,

\[\left[\begin{array}{cc}1&0\\1&0\end{array}\right]\left[\begin{array}{cc}0&0\\1&1\end{array}\right]=\left[\begin{array}{cc}0&0\\0&0\end{array}\right]\nonumber\]
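Both counterexamples can be confirmed mechanically. This Python sketch uses a hypothetical `matmul` helper (row-column rule, matrices as lists of rows) on exactly the matrices shown above.

```python
def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

# Cancellation fails: AB = AC although B != C (and A != 0).
A = [[1, 0], [0, 0]]
B = [[1, 2], [3, 4]]
C = [[1, 2], [5, 6]]
print(matmul(A, B), matmul(A, C))  # [[1, 2], [0, 0]] [[1, 2], [0, 0]]

# A product of two nonzero matrices can be the zero matrix.
D = [[1, 0], [1, 0]]
F = [[0, 0], [1, 1]]
print(matmul(D, F))  # [[0, 0], [0, 0]]
```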

While matrix multiplication is not commutative in general, there are examples of matrices \(A\) and \(B\) with \(AB=BA\). For example, this always works when \(A\) is the zero matrix, or when \(A=B\). The reader is encouraged to find other examples.

Consider the products of the matrices \(A=\left[\begin{array}{cc}1&1\\0&1\end{array}\right]\quad\text{and}\quad B=\left[\begin{array}{cc}1&0\\1&1\end{array}\right]\).

The matrix \(AB\) is \(\left[\begin{array}{cc}1&1\\0&1\end{array}\right]\:\left[\begin{array}{cc}1&0\\1&1\end{array}\right]=\left[\begin{array}{cc}2&1\\1&1\end{array}\right]\), whereas the matrix \(BA\) is \(\left[\begin{array}{cc}1&0\\1&1\end{array}\right]\:\left[\begin{array}{cc}1&1\\0&1\end{array}\right]=\left[\begin{array}{cc}1&1\\1&2\end{array}\right]\).

Since their \(1,1\) entries differ, \(AB \neq BA\). So matrix multiplication is not commutative in general.
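The same computation in Python, as a quick check; `matmul` is our own helper implementing the row-column rule on matrices stored as lists of rows.

```python
def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

A = [[1, 1], [0, 1]]
B = [[1, 0], [1, 1]]
AB = matmul(A, B)
BA = matmul(B, A)
print(AB)        # [[2, 1], [1, 1]]
print(BA)        # [[1, 1], [1, 2]]
print(AB == BA)  # False
```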

Let \(T\colon\mathbb{R}^n \to\mathbb{R}^m \) and \(U\colon\mathbb{R}^p \to\mathbb{R}^n \) be linear transformations, and let \(A\) and \(B\) be their standard matrices, respectively. Recall that \(T\circ U(\vec{x})\) is the vector obtained by first applying \(U\) to \(\vec{x}\), and then \(T\).

On the matrix side, the standard matrix of \(T\circ U\) is the product \(AB\), so \(T\circ U(\vec{x}) = (AB)\vec{x}\). By associativity of matrix multiplication, we have \((AB)\vec{x} = A(B\vec{x}) \), so the product \((AB)\vec{x}\) can be computed by first multiplying \(\vec{x}\) by \(B\), then multiplying the product by \(A\).

Therefore, matrix multiplication happens in the same order as composition of transformations. In other words, both matrices and transformations are written in the order opposite from the order in which they act. But matrix multiplication and composition of transformations are written in the same order as each other: the matrix for \(T\circ U\) is \(AB\).
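To see the ordering concretely, the following Python sketch takes \(A\) and \(B\) as the standard matrices of \(T\) and \(U\) (the specific matrices and the vector \(\vec{x}\) are our own choices for illustration) and checks that applying \(B\) first and then \(A\) agrees with multiplying by \(AB\), while \(BA\) gives a different result.

```python
def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def matvec(M, x):
    """Matrix-vector product: entry i is (row i of M) · x."""
    return [sum(m * xj for m, xj in zip(row, x)) for row in M]

A = [[1, 1], [0, 1]]   # standard matrix of T
B = [[1, 0], [1, 1]]   # standard matrix of U
x = [3, -2]

apply_U_then_T = matvec(A, matvec(B, x))   # T(U(x)): B acts first
using_product = matvec(matmul(A, B), x)    # (AB)x
wrong_order = matvec(matmul(B, A), x)      # (BA)x: A acts first, a different map
print(apply_U_then_T, using_product)       # [4, 1] [4, 1]
print(wrong_order)                         # [1, -1]
```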

We have seen that the standard matrix for the counterclockwise rotation of the plane by an angle of \(\theta\) is

\[A=\left[\begin{array}{cc}\cos\theta &-\sin\theta \\ \sin\theta &\cos\theta\end{array}\right]\nonumber\]

Let \(T\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be counterclockwise rotation by \(45^\circ\text{,}\) and let \(U\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be counterclockwise rotation by \(90^\circ\). The matrices \(A\) and \(B\) for \(T\) and \(U\) are, respectively,

\[\begin{aligned}A&=\left[\begin{array}{cc}\cos(45^\circ )&-\sin (45^\circ ) \\ \sin (45^\circ)&\cos (45^\circ)\end{array}\right] =\left[\begin{array}{cc}\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\\\frac{1}{\sqrt{2}}&\frac{1}{\sqrt{2}}\end{array}\right] \\ B&=\left[\begin{array}{cc}\cos(90^\circ )&-\sin (90^\circ ) \\ \sin (90^\circ)&\cos (90^\circ)\end{array}\right] =\left[\begin{array}{cc}0&-1\\1&0\end{array}\right] \end{aligned}\nonumber \]

The standard matrix of the composition \(T\circ U\) is

\[AB= \left[\begin{array}{cc}\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\\\frac{1}{\sqrt{2}}&\frac{1}{\sqrt{2}}\end{array}\right]\left[\begin{array}{cc}0&-1\\1&0\end{array}\right]=\left[\begin{array}{cc}-\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\\\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\end{array}\right]\nonumber\]

This is consistent with the fact that \(T\circ U\) is counterclockwise rotation by \(90^\circ + 45^\circ = 135^\circ\text{:}\) we have

\[\left[\begin{array}{cc}\cos(135^\circ )&-\sin (135^\circ ) \\ \sin (135^\circ)&\cos (135^\circ)\end{array}\right] =\left[\begin{array}{cc}-\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\\\frac{1}{\sqrt{2}}&-\frac{1}{\sqrt{2}}\end{array}\right] \nonumber\]
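A floating-point check of this example in Python. The helper names `rotation`, `matmul`, and `close` are our own; `close` compares entries up to a small tolerance because \(\cos\) and \(\sin\) are evaluated in floating point.

```python
import math

def rotation(theta_degrees):
    """Standard matrix of counterclockwise rotation of the plane by theta degrees."""
    t = math.radians(theta_degrees)
    return [[math.cos(t), -math.sin(t)],
            [math.sin(t),  math.cos(t)]]

def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def close(M, N, tol=1e-12):
    """Entrywise comparison up to floating-point round-off."""
    return all(abs(m - n) <= tol for rm, rn in zip(M, N) for m, n in zip(rm, rn))

A = rotation(45)   # matrix of T
B = rotation(90)   # matrix of U
print(close(matmul(A, B), rotation(135)))  # True
```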

Derive the trigonometric identities

\[ \sin(\alpha\pm\beta) = \sin(\alpha)\cos(\beta) \pm \cos(\alpha)\sin(\beta) \nonumber \]

and

\[ \cos(\alpha\pm\beta) = \cos(\alpha)\cos(\beta) \mp \sin(\alpha)\sin(\beta) \nonumber \]

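by composing the rotations by \(\alpha\) and by \(\pm\beta\) and computing the standard matrix of the composition in two ways, as in the previous example.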

Recall that the identity matrix is the \(n\times n\) matrix \(I\) whose columns are the standard coordinate vectors in \(\mathbb{R}^n \). The identity matrix is the standard matrix of the identity transformation: that is, \(\vec{x} =\mathcal{I}(\vec{x}) = I \vec{x}\) for all vectors \(\vec{x}\) in \(\mathbb{R}^n \). For any linear transformation \(T : \mathbb{R}^n \to \mathbb{R}^m \) we have

\[ T\circ \mathcal{I} = T \nonumber \]

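and by the same token, for any \(m \times n\) matrix \(A\) we have

\[ AI=A \nonumber \]

where \(I\) is the \(n\times n\) identity matrix. Similarly, we have \( \mathcal{I} \circ T= T\) and \(IA=A\) for \(T: \mathbb{R}^m\to \mathbb{R}^n\) and \(A\) any \(n\times m\) matrix, where now \(\mathcal{I}\) is the identity transformation of \(\mathbb{R}^n\) and \(I\) is the \(n\times n\) identity matrix.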
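For a non-square matrix the two identity matrices have different sizes. This Python sketch (hypothetical helpers `identity` and `matmul`, matrices as lists of rows, sample matrix our own) checks both identities for a \(2\times 3\) matrix.

```python
def identity(n):
    """n x n identity matrix: its columns are the standard coordinate vectors."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

A = [[1, 2, 3],
     [4, 5, 6]]                      # a 2 x 3 matrix
print(matmul(A, identity(3)) == A)   # True: AI = A with I of size 3 x 3
print(matmul(identity(2), A) == A)   # True: IA = A with I of size 2 x 2
```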

We can also combine addition and scalar multiplication of matrices with multiplication of matrices. Since matrix multiplication corresponds to composition of transformations, the following properties are consequences of the corresponding properties of transformations.

Let \(A,B,C\) be matrices and let \(c\) be a scalar. Suppose that \(A\) is an \(m\times n\) matrix, and that in each of the following identities, the sizes of \(B\) and \(C\) are compatible when necessary for the product to be defined. Then:

\begin{align*} C(A+B) \amp= C A+C B \amp (A + B) C \amp= A C + B C \amp\\ c(A B) \amp= (cA) B \amp c(A B) \amp= A(cB)\\ A I \amp= A \amp I A \amp= A\\ (A B)C \amp= A (BC) \amp \end{align*}

Most of the above properties are easily verified directly from the definitions. The associativity property \((AB)C=A(BC)\text{,}\) however, is not (try it!). It is much easier to prove by relating matrix multiplication to composition of transformations, and using the obvious fact that composition of transformations is associative.
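A numerical spot check is not a proof, but it can catch arithmetic slips when working with these identities. The following Python sketch tests associativity and left distributivity on random integer matrices of compatible sizes; the helpers `matmul`, `matadd`, and `random_matrix` and the chosen sizes are our own. Integer entries keep the comparison exact.

```python
import random

def matmul(A, B):
    """Row-column rule: entry (i, j) is row i of A dotted with column j of B."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def matadd(A, B):
    """Entrywise sum of two matrices of the same size."""
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def random_matrix(rows, cols):
    return [[random.randint(-5, 5) for _ in range(cols)] for _ in range(rows)]

random.seed(0)
A = random_matrix(2, 3)
B = random_matrix(3, 4)
C = random_matrix(4, 2)
print(matmul(matmul(A, B), C) == matmul(A, matmul(B, C)))  # True: (AB)C = A(BC)

# Distributivity C(A + B) = CA + CB, with sizes chosen to be compatible.
P, Q, R = random_matrix(3, 2), random_matrix(3, 2), random_matrix(4, 3)
print(matmul(R, matadd(P, Q)) == matadd(matmul(R, P), matmul(R, Q)))  # True
```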