4.1: Sequences of Real Numbers

- Explain the sequences of real numbers

In Chapter 2, we developed the equation \(1 + x + x^2 + x^3 + \cdots = \frac{1}{1-x}\), and we mentioned there were limitations to this power series representation. For example, substituting \(x = 1\) and \(x = -1\) into this expression leads to

\[1 + 1 + 1 + \cdots = \frac{1}{0} \; \; \text{and } 1 - 1 + 1 - 1 + \cdots = \frac{1}{2}\]

which are rather hard to accept. On the other hand, if we substitute \(x = \frac{1}{2}\) into the expression we get \(1 + \left ( \frac{1}{2} \right ) + \left ( \frac{1}{2} \right )^2 + \left ( \frac{1}{2} \right )^3 + \cdots = 2\) which seems more palatable until we think about it. We can add two numbers together by the method we all learned in elementary school. Or three. Or any finite set of numbers, at least in principle. But infinitely many? What does that even mean? Before we can add infinitely many numbers together we must find a way to give meaning to the idea.

To do this, we examine an infinite sum by thinking of it as a sequence of finite partial sums. In our example, we would have the following sequence of partial sums.

\[\left ( 1, 1 + \frac{1}{2}, 1 + \frac{1}{2} + \left ( \frac{1}{2} \right )^2, 1 + \frac{1}{2} + \left ( \frac{1}{2} \right )^2 + \left ( \frac{1}{2} \right )^3, \ldots , \sum_{j=0}^{n}\left ( \frac{1}{2} \right )^j, \ldots \right )\]

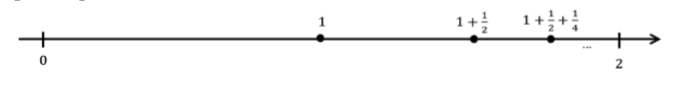

We can plot these sums on a number line to see what they tend toward as \(n\) gets large.

Figure \(\PageIndex{1}\): Number line plot.

Since each partial sum is located at the midpoint between the previous partial sum and \(2\), it is reasonable to suppose that these sums tend to the number \(2\). Indeed, you probably have seen an expression such as \(\lim_{n \to \infty }\left ( \sum_{j=0}^{n}\left ( \frac{1}{2} \right )^j \right ) = 2\) justified by a similar argument. Of course, the reliance on such pictures and words is fine if we are satisfied with intuition. However, we must be able to make these intuitions rigorous without relying on pictures or nebulous words such as “approaches.”
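As a numerical companion to the number line picture, the following minimal Python sketch computes the first few partial sums exactly and prints their distance from \(2\). The function name `partial_sums` is ours, chosen only for illustration. A finite computation like this supports the intuition; it does not replace a rigorous argument.

```python
from fractions import Fraction

def partial_sums(count):
    """Return the first `count` partial sums of 1 + 1/2 + (1/2)^2 + ... as exact fractions."""
    sums = []
    total = Fraction(0)
    for j in range(count):
        total += Fraction(1, 2) ** j
        sums.append(total)
    return sums

sums = partial_sums(8)
for n, s in enumerate(sums):
    # The gap between the n-th partial sum and 2 is exactly (1/2)^n,
    # so it is cut in half at every step.
    print(n, s, 2 - s)
```

Each printed gap is half the previous one, which is the "midpoint" observation made above.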

No doubt you are wondering “What’s wrong with the word ‘approaches’? It seems clear enough to me.” This is often a sticking point. But if we think carefully about what we mean by the word “approach” we see that there is an implicit assumption that will cause us some difficulties later if we don’t expose it.

To see this, consider the sequence \(\left ( 1,\frac{1}{2},\frac{1}{3},\frac{1}{4},\cdots \right )\). Clearly it “approaches” zero, right? But doesn’t it also “approach” \(-1\)? It does, in the sense that each term is closer to \(-1\) than the previous one. But it also “approaches” \(-2\), \(-3\), or even \(-1000\) in the same sense. That’s the problem with the word “approaches.” It just says that we’re getting closer to something than we were in the previous step. It does not tell us that we are actually getting close. Since the moon moves in an elliptical orbit about the earth, for part of each month it is “approaching” the earth. The moon gets closer to the earth but, thankfully, it does not get close to the earth. The implicit assumption we alluded to earlier is this: When we say that the sequence \(\left ( \frac{1}{n}\right )_{n=1}^\infty\) “approaches” zero, we mean that it is getting close, not merely closer. Ordinarily this kind of vagueness in our language is pretty innocuous. When we say “approaches” in casual conversation, we can usually tell from the context whether we mean “getting close to” or “getting closer to.” But when speaking mathematically we need to be more careful, more explicit, in the language we use.
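The difference between "closer" and "close" can be seen numerically. The sketch below (the helper names `distances` and `is_strictly_decreasing` are ours, introduced only for this illustration) measures the distance from \(\frac{1}{n}\) to \(0\) and to \(-1\) for the first thousand terms.

```python
from fractions import Fraction

def distances(target, count):
    """Distances |1/n - target| for n = 1, ..., count, as exact fractions."""
    return [abs(Fraction(1, n) - target) for n in range(1, count + 1)]

def is_strictly_decreasing(values):
    """True when every entry is smaller than the one before it."""
    return all(a > b for a, b in zip(values, values[1:]))

to_zero = distances(0, 1000)
to_minus_one = distances(-1, 1000)

# Both lists decrease: the terms get *closer* to 0 and also *closer* to -1.
print(is_strictly_decreasing(to_zero), is_strictly_decreasing(to_minus_one))

# Only the distance to 0 becomes small; the distance to -1 stays above 1.
print(min(to_zero), min(to_minus_one))
```

Both distance lists shrink at every step, yet only the first one ever gets below any small threshold we might name.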

So how can we change the language we use so that this ambiguity is eliminated? Let’s start out by recognizing, rigorously, what we mean when we say that a sequence converges to zero. For example, you would probably want to say that the sequence \(\left ( 1,\frac{1}{2},\frac{1}{3},\frac{1}{4},\cdots \right ) = \left ( \frac{1}{n}\right )_{n=1}^\infty\) converges to zero. Is there a way to give this meaning without relying on pictures or intuition?

One way would be to say that we can make \(\frac{1}{n}\) as close to zero as we wish, provided we make \(n\) large enough. But even this needs to be made more specific. For example, we can get \(\frac{1}{n}\) to within a distance of \(0.1\) of \(0\) provided we make \(n > 10\), we can get \(\frac{1}{n}\) to within a distance of \(0.01\) of \(0\) provided we make \(n > 100\), etc. After a few such examples it is apparent that given any arbitrary distance \(ε > 0\), we can get \(\frac{1}{n}\) to within \(ε\) of \(0\) provided we make \(n > \frac{1}{\varepsilon }\). This leads to the following definition.

Let \((s_n) = (s_1,s_2,s_3,...)\) be a sequence of real numbers. We say that \((s_n)\) converges to \(0\) and write \(\lim_{n \to \infty }s_n = 0\) provided for any \(ε > 0\), there is a real number \(N\) such that if \(n > N\), then \(|s_n| < ε\).

- This definition is the formal version of the idea we just talked about; that is, given an arbitrary distance \(ε\), we must be able to find a specific number \(N\) such that \(s_n\) is within \(ε\) of \(0\), whenever \(n > N\). The \(N\) is the answer to the question of how large is “large enough” to put \(s_n\) this close to \(0\).

- Even though we didn’t need it in the example \(\left (\frac{1}{n} \right )\), the absolute value appears in the definition because we need to make the distance from \(s_n\) to \(0\) smaller than \(ε\). Without the absolute value in the definition, we would be able to “prove” such outrageous statements as \(\lim_{n \to \infty }-n = 0\), which we obviously don’t want.

- The statement \(|s_n| < ε\) can also be written as \(-ε < s_n < ε\) or \(s_n ∈ (-ε,ε)\). (See Exercise \(\PageIndex{1}\) below.) Any one of these equivalent formulations can be used to prove convergence. Depending on the application, one of these may be more advantageous to use than the others.

- Any time an \(N\) can be found that works for a particular \(ε\), any number \(M > N\) will work for that \(ε\) as well, since if \(n > M\) then \(n > N\).

Let \(a\) and \(b\) be real numbers with \(b > 0\). Prove \(|a| < b\) if and only if \(-b < a < b\). Notice that this can be extended to \(|a| ≤ b\) if and only if \(-b ≤ a ≤ b\).

To illustrate how this definition makes the above ideas rigorous, let’s use it to prove that \(\lim_{n \to \infty }\left ( \frac{1}{n} \right ) = 0\).

Let \(ε > 0\) be given. Let \(N = \frac{1}{\varepsilon }\). If \(n > N\), then \(n > \frac{1}{\varepsilon }\) and so \(\left | \frac{1}{n} \right | = \frac{1}{n} < \varepsilon\). Hence by definition, \(\lim_{n \to \infty } \frac{1}{n} = 0\).
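The choice \(N = \frac{1}{\varepsilon}\) can be spot-checked with exact rational arithmetic. The following is a minimal sketch, not a proof: it only tests finitely many \(n\) for a few sample values of \(ε\), and the helper names and the `window` parameter are our own assumptions.

```python
from fractions import Fraction
from math import floor

def first_index_after(N):
    """Smallest integer n with n > N."""
    return floor(N) + 1

def holds_on_window(eps, window=1000):
    """Check |1/n| < eps for the first `window` integers n > N, where N = 1/eps."""
    N = 1 / eps
    start = first_index_after(N)
    return all(abs(Fraction(1, n)) < eps for n in range(start, start + window))

for eps in (Fraction(1, 10), Fraction(1, 100), Fraction(3, 1000)):
    print(eps, holds_on_window(eps))
```

The proof covers every \(ε > 0\) and every \(n > N\) at once, which no finite check can do; the code merely confirms that the recipe behaves as claimed on the samples tried.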

Notice that this proof is rigorous and makes no reference to vague notions such as “getting smaller” or “approaching infinity.” It has three components:

- provide the challenge of a distance \(ε > 0\)

- identify a real number \(N\)

- show that this \(N\) works for this given \(ε\).

There is also no explanation about where \(N\) came from. While it is true that this choice of \(N\) is not surprising in light of the “scrapwork” we did before the definition, the motivation for how we got it is not in the formal proof nor is it required. In fact, such scrapwork is typically not included in a formal proof. For example, consider the following.

Use the definition of convergence to zero to prove \(\lim_{n \to \infty } \frac{\sin n}{n} = 0\).

Proof:

Let \(ε > 0\). Let \(N = \frac{1}{\varepsilon }\). If \(n > N\), then \(n > \frac{1}{\varepsilon }\) and \(\frac{1}{n} < \varepsilon\). Thus \(\left | \frac{\sin n}{n} \right | \leq \frac{1}{n} < \varepsilon\). Hence by definition, \(\lim_{n \to \infty } \frac{\sin n}{n} = 0\).
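The two inequalities used in this proof can be checked numerically for a few sample tolerances. This sketch uses floating point (an assumption on our part, since \(\sin n\) has no exact rational value), so it illustrates the argument rather than verifying it; the names `seq` and `holds_on_window` are hypothetical.

```python
import math

def seq(n):
    """The n-th term sin(n)/n."""
    return math.sin(n) / n

def holds_on_window(eps, window=10_000):
    """Check |sin(n)/n| <= 1/n < eps for the first `window` integers n > 1/eps."""
    N = 1 / eps
    start = math.floor(N) + 1
    return all(abs(seq(n)) <= 1 / n and 1 / n < eps
               for n in range(start, start + window))

for eps in (0.1, 0.01, 0.001):
    print(eps, holds_on_window(eps))
```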

Notice that the \(N\) came out of nowhere, but you can probably see the thought process that went into this choice: we needed to use the inequality \(|\sin n|≤ 1\). Again this scrapwork is not part of the formal proof, but it is typically necessary for finding what \(N\) should be. You might be able to do the next problem without doing any scrapwork first, but don’t hesitate to do scrapwork if you need it.

Use the definition of convergence to zero to prove the following.

- \(\lim_{n \to \infty } \frac{1}{n^2} = 0\)

- \(\lim_{n \to \infty } \frac{1}{\sqrt{n}} = 0\)

As the sequences get more complicated, doing scrapwork ahead of time will become more necessary.

Use the definition of convergence to zero to prove \(\lim_{n \to \infty } \frac{n+4}{n^2 + 1} = 0\).

Scrapwork:

Given an \(ε > 0\), we need to see how large to make \(n\) in order to guarantee that \(\left |\frac{n+4}{n^2 + 1} \right |< \varepsilon\). First notice that \(\frac{n+4}{n^2 + 1} < \frac{n+4}{n^2}\). Also, notice that if \(n > 4\), then \(n + 4 < n + n = 2n\). So as long as \(n > 4\), we have \(\frac{n+4}{n^2 + 1} < \frac{n+4}{n^2} < \frac{2n}{n^2} = \frac{2}{n}\). We can make this less than \(ε\) if we make \(n > \frac{2}{\varepsilon }\). This means we need to make \(n > 4\) and \(n > \frac{2}{\varepsilon }\) simultaneously. Both can be achieved if we let \(N\) be the maximum of these two numbers. This sort of thing comes up regularly, so the notation \(N = \max \left (4,\frac{2}{\varepsilon } \right )\) was developed to mean the maximum of these two numbers. Notice that if \(N = \max \left (4,\frac{2}{\varepsilon } \right )\) then \(N ≥ 4\) and \(N \geq \frac{2}{\varepsilon }\). We’re now ready for the formal proof.

Proof:

Let \(ε > 0\). Let \(N = \max \left (4,\frac{2}{\varepsilon } \right )\). If \(n > N\), then \(n > 4\) and \(n > \frac{2}{\varepsilon }\). Thus we have \(n > 4\) and \(\frac{2}{n} < \varepsilon\). Therefore

\[\left |\frac{n+4}{n^2 + 1} \right | = \frac{n+4}{n^2 + 1} < \frac{n+4}{n^2} < \frac{2n}{n^2} = \frac{2}{n} < \varepsilon\]

Hence by definition, \(\lim_{n \to \infty }\frac{n+4}{n^2 + 1} = 0\).
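To see why both conditions \(n > 4\) and \(n > \frac{2}{\varepsilon}\) are needed, the chain of inequalities in the proof can be tested term by term. The sketch below uses exact fractions; `chain_holds`, `holds_on_window`, and the `window` size are illustrative names and assumptions of ours, and the check covers only finitely many \(n\).

```python
from fractions import Fraction
from math import floor

def chain_holds(n, eps):
    """Check (n+4)/(n^2+1) < (n+4)/n^2 < 2/n < eps for a single n."""
    a = Fraction(n + 4, n * n + 1)   # the term itself (already positive)
    b = Fraction(n + 4, n * n)       # drop the +1 in the denominator
    c = Fraction(2, n)               # uses n + 4 < 2n, valid only for n > 4
    return a < b and b < c and c < eps

def holds_on_window(eps, window=1000):
    """Check the whole chain for the first `window` integers n > max(4, 2/eps)."""
    N = max(4, 2 / eps)
    start = floor(N) + 1
    return all(chain_holds(n, eps) for n in range(start, start + window))

for eps in (Fraction(1), Fraction(1, 10), Fraction(1, 250)):
    print(eps, max(4, 2 / eps), holds_on_window(eps))

# With eps = 1 we have 2/eps = 2, but n = 3 > 2 breaks the middle step
# of the chain because 3 + 4 is not less than 2 * 3.
print(chain_holds(3, Fraction(1)))
```

For \(ε = 1\) the term at \(n = 3\) is still less than \(ε\); what fails is the particular chain of estimates, which is exactly why the proof asks for \(n > 4\) as well.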

Again we emphasize that the scrapwork is NOT part of the formal proof and the reader will not see it. However, if you look carefully, you can see the scrapwork in the formal proof.

Use the definition of convergence to zero to prove \(\lim_{n \to \infty }\frac{n^2+4n+1}{n^3} = 0\).

Let \(b\) be a nonzero real number with \(|b| < 1\) and let \(ε > 0\).

- Solve the inequality \(|b|^n < ε\) for \(n\).

- Use the result of the previous part to prove \(\lim_{n \to \infty }b^n = 0\).

We can negate this definition to prove that a particular sequence does not converge to zero.

Use the definition to prove that the sequence \((1 + (-1)^n)_{n=0}^{\infty } = (2,0,2,0,2,\cdots )\) does not converge to zero.

Before we provide this proof, let’s analyze what it means for a sequence \((s_n)\) to not converge to zero. Converging to zero means that any time a distance \(ε > 0\) is given, we must be able to respond with a number \(N\) such that \(|s_n| < ε\) for every \(n > N\). To have this not happen, we must be able to find some \(ε > 0\) such that no choice of \(N\) will work. Of course, if we find such an \(ε\), then any smaller one will fail to have such an \(N\), but we only need one to mess us up. If you stare at the example long enough, you see that any \(ε\) with \(0 < ε ≤ 2\) will cause problems. For our purposes, we will let \(ε = 2\).

Proof:

Let \(ε = 2\) and let \(N ∈ \mathbb{N}\) be any integer. If we let \(k\) be any nonnegative integer with \(k > \frac{N}{2}\), then \(n = 2k > N\), but \(|1 + (-1)^n| = 2\). Thus no choice of \(N\) will satisfy the conditions of the definition for this \(ε\) (namely, that \(|1 + (-1)^n| < 2\) for all \(n > N\)), and so \(\lim_{n \to \infty }(1 + (-1)^n) \neq 0\).
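The proof works by answering every proposed \(N\) with a "witness" index \(n > N\) at which the term is not within \(ε = 2\) of zero. A minimal sketch of that strategy follows; the function names `s` and `witness` are ours and are not part of the text.

```python
import math

def s(n):
    """The n-th term 1 + (-1)^n."""
    return 1 + (-1) ** n

def witness(N):
    """Return an even integer n > N, following the proof's choice n = 2k with k > N/2."""
    k = max(0, math.floor(N / 2) + 1)
    return 2 * k

for N in (0, 1, 7, 8, 1000, 12.5):
    n = witness(N)
    # For every proposed N there is an n > N with |s_n| = 2, which is not < 2.
    print(N, n, abs(s(n)))
```

Whatever \(N\) is proposed, the function returns a larger even index, so the requirement "\(|s_n| < 2\) for all \(n > N\)" can never be met.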

Negate the definition of \(\lim_{n \to \infty }s_n = 0\) to provide a formal definition for \(\lim_{n \to \infty }s_n \neq 0\).

Use the definition to prove \(\lim_{n \to \infty }\frac{n}{n+100} \neq 0\).

Now that we have a handle on how to rigorously prove that a sequence converges to zero, let’s generalize this to a formal definition for a sequence converging to something else. Basically, we want to say that a sequence \((s_n)\) converges to a real number \(s\), provided the difference \((s_n - s)\) converges to zero. This leads to the following definition:

Let \((s_n) = (s_1,s_2,s_3,...)\) be a sequence of real numbers and let \(s\) be a real number. We say that \((s_n)\) converges to \(s\) and write \(\lim_{n \to \infty }s_n = s\) provided for any \(ε > 0\), there is a real number \(N\) such that if \(n > N\), then \(|s_n - s| < ε\).

- Clearly \(\lim_{n \to \infty }s_n = s\) if and only if \(\lim_{n \to \infty }(s_n - s) = 0\).

- Again notice that this says that we can make \(s_n\) as close to \(s\) as we wish (within \(ε\)) by making \(n\) large enough (\(> N\)). As before, this definition makes these notions very specific.

- Notice that \(|s_n - s| < ε\) can be written in the following equivalent forms

- \(|s_n - s| < ε\)

- \(-ε < s_n - s < ε \)

- \(s - ε < s_n < s + ε \)

- \(s_n ∈ (s - ε,s + ε) \)

and we are free to use any one of these which is convenient at the time.

As an example, let’s use this definition to prove that the sequence in Problem \(\PageIndex{6}\), in fact, converges to \(1\).

Prove \(\lim_{n \to \infty }\frac{n}{n+100} = 1\).

Scrapwork:

Given an \(ε > 0\), we need to get \(\left |\frac{n}{n+100} - 1 \right | < \varepsilon\). This prompts us to do some algebra.

\[\left |\frac{n}{n+100} - 1 \right | = \left |\frac{n-(n+100)}{n+100} \right | = \frac{100}{n+100} < \frac{100}{n}\]

This in turn, seems to suggest that \(N = \frac{100}{\varepsilon }\) should work.

Proof:

Let \(ε > 0\). Let \(N = \frac{100}{\varepsilon }\). If \(n > N\), then \(n > \frac{100}{\varepsilon }\) and so \(\frac{100}{n} < \varepsilon\). Hence

\[\left |\frac{n}{n+100} - 1 \right | = \left |\frac{n-(n+100)}{n+100} \right | = \frac{100}{n+100} < \frac{100}{n} < \varepsilon\]

Thus by definition \(\lim_{n \to \infty }\frac{n}{n+100} = 1\).
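The algebra in this proof, \(\left|\frac{n}{n+100} - 1\right| = \frac{100}{n+100} < \frac{100}{n}\), together with the choice \(N = \frac{100}{\varepsilon}\), can be spot-checked with exact fractions. As before, this is only a finite illustration under our own naming assumptions (`gap`, `holds_on_window`, `window`), not a replacement for the proof.

```python
from fractions import Fraction
from math import floor

def gap(n):
    """Exact value of |n/(n+100) - 1|."""
    return abs(Fraction(n, n + 100) - 1)

def holds_on_window(eps, window=1000):
    """Check gap(n) = 100/(n+100) < 100/n < eps for the first `window` integers n > 100/eps."""
    N = 100 / eps
    start = floor(N) + 1
    return all(
        gap(n) == Fraction(100, n + 100)
        and Fraction(100, n + 100) < Fraction(100, n)
        and Fraction(100, n) < eps
        for n in range(start, start + window)
    )

for eps in (Fraction(1, 2), Fraction(1, 10), Fraction(1, 1000)):
    print(eps, 100 / eps, holds_on_window(eps))
```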

Notice again that the scrapwork is not part of the formal proof and the author of a proof is not obligated to tell where the choice of \(N\) came from (although the thought process can usually be seen in the formal proof). The formal proof contains only the requisite three parts: provide the challenge of an arbitrary \(ε > 0\), provide a specific \(N\), and show that this \(N\) works for the given \(ε\).

Also notice that given a specific sequence such as \(\frac{n}{n+100}\), the definition does not indicate what the limit would be if, in fact, it exists. Once an educated guess is made as to what the limit should be, the definition only verifies that this intuition is correct.

This leads to the following question: If intuition is needed to determine what a limit of a sequence should be, then what is the purpose of this relatively non-intuitive, complicated definition?

Remember that when these rigorous formulations were developed, intuitive notions of convergence were already in place and had been used with great success. This definition was developed to address the foundational issues. Could our intuitions be verified in a concrete fashion that was above reproach? This was the purpose of this non-intuitive definition. It was to be used to verify that our intuition was, in fact, correct and do so in a very prescribed manner. For example, if \(b > 0\) is a fixed number, then you would probably say as \(n\) approaches infinity, \(b^{(\frac{1}{n})}\) approaches \(b^0 = 1\). After all, we did already prove that \(\lim_{n \to \infty }\frac{1}{n} = 0\). We should be able to back up this intuition with our rigorous definition.
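Before attempting a proof, it can help to look at the numbers behind this intuition. The sketch below tabulates \(b^{(\frac{1}{n})}\) in floating point for a few sample values of \(b\); the function name `root_sequence` and the sample values are our own choices, and the output is only suggestive evidence, not a verification.

```python
def root_sequence(b, n):
    """The n-th term b**(1/n), computed in floating point."""
    return b ** (1.0 / n)

for b in (0.5, 2.0, 10.0):
    for n in (1, 10, 100, 1000, 10_000):
        term = root_sequence(b, n)
        # The last column is the distance from the conjectured limit 1.
        print(f"b={b:5}  n={n:6}  b^(1/n)={term:.8f}  |b^(1/n) - 1|={abs(term - 1):.2e}")
```

The table suggests that the terms rise toward \(1\) when \(0 < b < 1\) and fall toward \(1\) when \(b > 1\), which hints at why a proof might treat the two cases separately.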

Let \(b > 0\). Use the definition to prove \(\lim_{n \to \infty }b^{(\frac{1}{n})} = 1\). [Hint: You will probably need to separate this into two cases: \(0 < b < 1\) and \(b ≥ 1\).]

- Provide a rigorous definition for \(\lim_{n \to \infty }s_n \neq s\).

- Use your definition to show that for any real number \(a\), \(\lim_{n \to \infty }((-1)^n) \neq a\). [Hint: Choose \(ε = 1\) and use the fact that \(\left | a - (-1)^n \right | < 1\) is equivalent to \((-1)^n - 1 < a < (-1)^n + 1\) to show that no choice of \(N\) will work for this \(ε\).]