17.4: Approximation

We have seen how to solve a restricted collection of differential equations, or more accurately, how to attempt to solve them: we may not be able to find the required antiderivatives. Not surprisingly, non-linear equations can be even more difficult to solve. Yet much is known about solutions to some more general equations.

Suppose \(\phi(t,y)\) is a function of two variables. A more general class of first order differential equations has the form \(\dot y=\phi(t,y)\). Such an equation need not be linear, since \(\phi\) may depend on \(y\) in some complicated way; note, however, that \(\dot y\) appears in a very simple form. Under suitable conditions on the function \(\phi\) (for example, if \(\phi\) and \(\partial\phi/\partial y\) are continuous near the initial point), it can be shown that every such differential equation has a solution, and moreover that for each initial condition the associated initial value problem has exactly one solution, at least on some interval around the initial time. In practical applications this is obviously a very desirable property.

The equation \(\dot y=t-y^2\) is a first order non-linear equation, because \(y\) appears to the second power. We will not be able to solve this equation with the methods we have developed.

The equation \(\dot y=y^2\) is also non-linear, but it is separable and can be solved by separation of variables.
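As a short worked sketch of that separation, assume \(y\ne0\); then

\[\int \frac{dy}{y^2}=\int dt \quad\Longrightarrow\quad -\frac{1}{y}=t+C \quad\Longrightarrow\quad y=-\frac{1}{t+C}, \nonumber \]

while the constant function \(y=0\) is also a solution. Imposing \(y(0)=y_0\ne0\) gives \(C=-1/y_0\), that is, \(y=y_0/(1-y_0t)\), valid on the interval containing \(t=0\) on which \(1-y_0t\ne0\).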

Not all differential equations that are important in practice can be solved exactly, so techniques have been developed to approximate solutions. We describe one such technique, Euler's method, which is simple, though not particularly useful compared to more sophisticated techniques.

Suppose we wish to approximate a solution to the initial value problem \(\dot y=\phi(t,y)\), \(y(t_0)=y_0\), for \(t\ge t_0\). Under reasonable conditions on \(\phi\), we know the solution exists, represented by a curve in the \(t\)-\(y\) plane; call this solution \(f(t)\). The point \((t_0,y_0)\) is of course on this curve. We also know the slope of the curve at this point, namely \(\phi(t_0,y_0)\). If we follow the tangent line for a brief distance, we arrive at a point that should be almost on the graph of \(f(t)\), namely \((t_0+\Delta t, y_0+\phi(t_0,y_0)\Delta t)\); call this point \((t_1,y_1)\). Now we pretend, in effect, that this point really is on the graph of \(f(t)\), in which case we again know the slope of the curve through \((t_1,y_1)\), namely \(\phi(t_1,y_1)\). So we can compute a new point, \((t_2,y_2)=(t_1+\Delta t, y_1+\phi(t_1,y_1)\Delta t)\) that is a little farther along, still close to the graph of \(f(t)\) but probably not quite so close as \((t_1,y_1)\). We can continue in this way, doing a sequence of straightforward calculations, until we have an approximation \((t_n,y_n)\) for whatever time \( t_n\) we need. At each step we do essentially the same calculation, namely

\[(t_{i+1},y_{i+1})=(t_i+\Delta t, y_i+\phi(t_i,y_i)\Delta t). \nonumber \]
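The update rule above can be sketched as a short Python function. This is a minimal illustration, not the Sage function the text refers to; the name `euler` and its argument order are our own choices. It computes \(t_i\) as \(t_0+i\,\Delta t\) rather than by adding \(\Delta t\) repeatedly, which avoids floating-point drift in the times.

```python
from typing import Callable, List, Tuple


def euler(phi: Callable[[float, float], float],
          t0: float, y0: float, dt: float, n: int) -> List[Tuple[float, float]]:
    """Return the points (t_0, y_0), ..., (t_n, y_n) produced by Euler's method."""
    points = [(t0, y0)]
    y = y0
    for i in range(n):
        t_i = t0 + i * dt           # computed directly rather than by repeated addition
        y = y + phi(t_i, y) * dt    # y_{i+1} = y_i + phi(t_i, y_i) * dt
        points.append((t0 + (i + 1) * dt, y))
    return points


# Usage: y' = y with y(0) = 1, ten steps of size 0.1, ending at t = 1.
pts = euler(lambda t, y: y, 0.0, 1.0, 0.1, 10)
print(pts[-1])  # y_10 equals (1 + 0.1)**10, about 2.5937, up to rounding
```

For \(\dot y=y\) each step multiplies \(y\) by \(1+\Delta t\), so the final value is \((1.1)^{10}\), noticeably below the exact value \(e\approx 2.7183\).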

We expect that smaller time steps \(\Delta t\) will give better approximations, but of course it will require more work to compute to a specified time. It is possible to compute a guaranteed upper bound on how far off the approximation might be, that is, how far \(y_n\) is from \(f(t_n)\). Suffice it to say that the bound is not particularly good and that there are other more complicated approximation techniques that do better.
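One way to see how quickly the approximation improves is to halve \(\Delta t\) repeatedly on a problem whose exact solution is known. The sketch below assumes the test problem \(\dot y=y\), \(y(0)=1\) (our choice, not the text's), whose exact value at \(t=1\) is \(e\). A standard fact, stated here without proof, is that for well-behaved \(\phi\) the error of Euler's method at a fixed time is roughly proportional to \(\Delta t\), so each halving should roughly halve the error.

```python
import math


def euler_final(phi, t0, y0, dt, n):
    """Return y_n, the Euler approximation after n steps of size dt."""
    y = y0
    for i in range(n):
        y += phi(t0 + i * dt, y) * dt
    return y


# Test problem chosen for illustration: y' = y, y(0) = 1, whose exact value at t = 1 is e.
errors = []
for n in (10, 20, 40, 80):
    dt = 1.0 / n
    err = abs(euler_final(lambda t, y: y, 0.0, 1.0, dt, n) - math.e)
    errors.append(err)
    print(f"dt = {dt:<7} error at t = 1: {err:.6f}")

# Each halving of dt should roughly halve the error, so these ratios should be near 2.
print([errors[j] / errors[j + 1] for j in range(len(errors) - 1)])
```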

Let us compute an approximation to the solution for \(\dot y=t-y^2\), \(y(0)=0\), when \(t=1\). We will use \(\Delta t=0.2\), which is easy to do even by hand, though we should not expect the resulting approximation to be very good. We get

\[\eqalign{
(t_1,y_1)&=(0+0.2,0+(0-0^2)0.2) = (0.2,0)\cr
(t_2,y_2)&=(0.2+0.2,0+(0.2-0^2)0.2) = (0.4,0.04)\cr
(t_3,y_3)&=(0.6,0.04+(0.4-0.04^2)0.2) = (0.6,0.11968)\cr
(t_4,y_4)&=(0.8,0.11968+(0.6-0.11968^2)0.2) = (0.8,0.23681533952)\cr
(t_5,y_5)&=(1.0,0.23681533952+(0.8-0.23681533952^2)0.2) = (1.0,0.385599038513605)\cr}
\nonumber \]

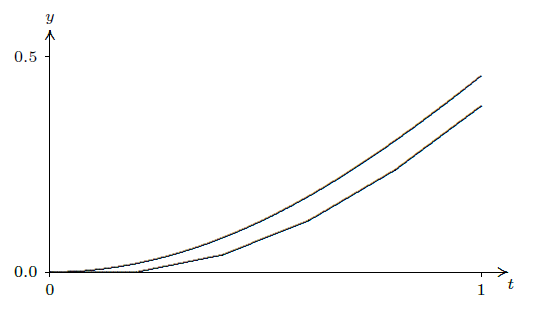

So \(y(1)\approx 0.3856\). As it turns out, this is not accurate to even one decimal place. Figure 17.4.1 shows these points connected by line segments (the lower curve) compared to a solution obtained by a much better approximation technique. Note that the shape is approximately correct even though the end points are quite far apart.
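A quick numerical check of that claim is to rerun Euler's method on the same problem with steadily smaller steps; \(n=5\) reproduces the hand computation. This is only a sketch using Euler's method itself, not the better technique used for the figure. As \(\Delta t\) shrinks the estimates settle near \(0.455\), so \(0.3856\) is already wrong in the first decimal place.

```python
def rhs(t, y):
    """Right-hand side of the text's example, y' = t - y^2."""
    return t - y * y


def euler_final(phi, t0, y0, dt, n):
    """Return y_n, the Euler approximation after n steps of size dt."""
    y = y0
    for i in range(n):
        y += phi(t0 + i * dt, y) * dt
    return y


# Approximate y(1) for y(0) = 0 with smaller and smaller steps; n = 5 is the hand computation.
estimates = {}
for n in (5, 50, 500, 5000):
    estimates[n] = euler_final(rhs, 0.0, 0.0, 1.0 / n, n)
    print(f"dt = {1.0 / n:<7} y(1) is approximately {estimates[n]:.6f}")
```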

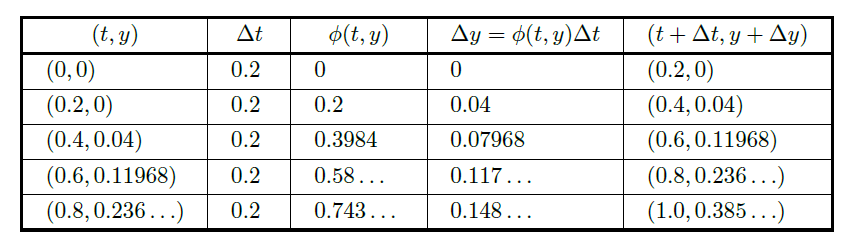

If you need to do Euler's method by hand, it is useful to construct a table to keep track of the work, as shown in figure 17.4.2. Each row holds the computation for a single step: the starting point \((t_i,y_i)\); the stepsize \(\Delta t\); the computed slope \(\phi(t_i,y_i)\); the change in \(y\), \(\Delta y=\phi(t_i,y_i)\Delta t\); and the new point, \((t_{i+1},y_{i+1})=(t_i+\Delta t,y_i+\Delta y)\). The starting point in each row is the newly computed point from the end of the previous row.
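The same bookkeeping can be automated. The sketch below (plain Python; the function name `euler_table` is hypothetical) prints one row per step with the columns listed in the paragraph: starting point, step size, slope, change in \(y\), and new point. It then runs the worked example \(\dot y=t-y^2\), \(y(0)=0\), \(\Delta t=0.2\).

```python
def euler_table(phi, t0, y0, dt, n):
    """Print and return one row per Euler step:
    (t_i, y_i, dt, slope, dy, t_{i+1}, y_{i+1})."""
    print(f"{'t_i':>5} {'y_i':>10} {'dt':>5} {'slope':>10} {'dy':>10} {'t_i+1':>6} {'y_i+1':>10}")
    rows = []
    t, y = t0, y0
    for i in range(n):
        slope = phi(t, y)              # slope phi(t_i, y_i)
        dy = slope * dt                # change in y: Delta y = phi(t_i, y_i) * Delta t
        t_next = t0 + (i + 1) * dt     # t_{i+1}, computed directly to avoid rounding drift
        y_next = y + dy                # y_{i+1} = y_i + Delta y
        rows.append((t, y, dt, slope, dy, t_next, y_next))
        print(f"{t:5.2f} {y:10.6f} {dt:5.2f} {slope:10.6f} {dy:10.6f} {t_next:6.2f} {y_next:10.6f}")
        t, y = t_next, y_next          # the new point starts the next row
    return rows


# The worked example: y' = t - y^2, y(0) = 0, five steps of size 0.2.
rows = euler_table(lambda t, y: t - y ** 2, 0.0, 0.0, 0.2, 5)
```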

It is easy to write a short function in Sage to do Euler's method; see this Sage worksheet.

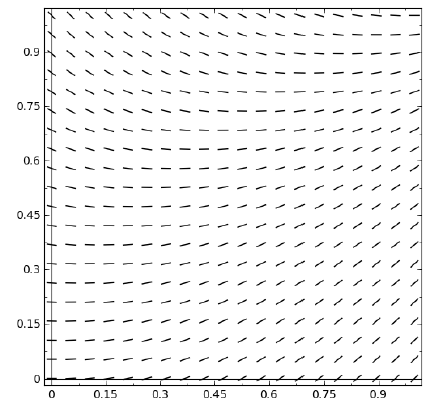

Euler's method is related to another technique that can help in understanding a differential equation in a qualitative way. Euler's method is based on the ability to compute the slope of a solution curve at any point in the plane, simply by computing \(\phi(t,y)\). If we compute \(\phi(t,y)\) at many points, say in a grid, and plot a small line segment with that slope at the point, we can get an idea of how solution curves must look. Such a plot is called a slope field. A slope field for \(\phi=t-y^2\) is shown in figure 17.4.3; compare this to figure 17.4.1. With a little practice, one can sketch reasonably accurate solution curves based on the slope field, in essence doing Euler's method visually.
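The computation behind a slope field can be sketched as follows: at each grid point evaluate \(\phi(t,y)\) and build a short segment of fixed length, centred at the point, with that slope. The grid window, the segment length \(0.2\), and the name `slope_field_segments` are arbitrary choices for illustration. Drawing is left out; a list of segments in this shape could, for instance, be passed to a plotting tool such as matplotlib's `LineCollection`.

```python
import math


def slope_field_segments(phi, t_values, y_values, length=0.2):
    """For each grid point (t, y), return the endpoints of a segment of the
    given length, centred at (t, y), whose slope is phi(t, y)."""
    segments = []
    for t in t_values:
        for y in y_values:
            m = phi(t, y)
            # Move half the length along the direction (1, m), normalised to unit length.
            half = length / 2 / math.sqrt(1 + m * m)
            segments.append(((t - half, y - half * m), (t + half, y + half * m)))
    return segments


grid = [i * 0.5 for i in range(-4, 5)]   # -2.0, -1.5, ..., 2.0 (arbitrary window)
segs = slope_field_segments(lambda t, y: t - y ** 2, grid, grid)
print(len(segs), segs[0])
```

Normalising the direction vector keeps every segment the same length, so steep slopes do not produce long segments that clutter the picture.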

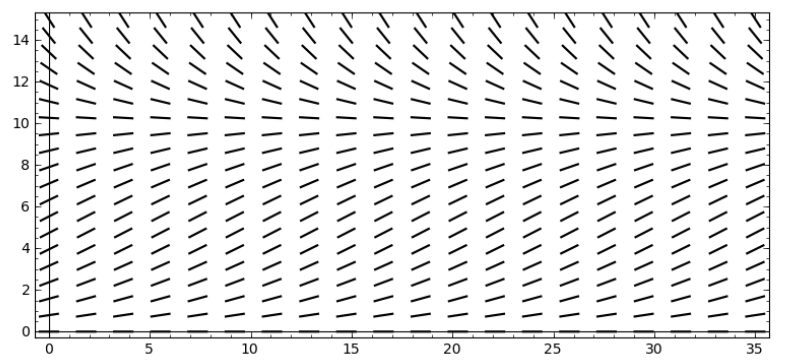

Even when a differential equation can be solved explicitly, the slope field can help in understanding what the solutions look like with various initial conditions. Recall the logistic equation from exercise 13 in section 17.1, \(\dot y =ky(M-y)\): \(y\) is a population at time \(t\), \(M\) is a measure of how large a population the environment can support, and \(k\) measures the reproduction rate of the population. Figure 17.4.4 shows a slope field for this equation that is quite informative. It is apparent that if the initial population is smaller than \(M\) it rises to \(M\) over the long term, while if the initial population is greater than \(M\) it decreases to \(M\). It is quite easy to generate slope fields with Sage; follow the AP link in the figure caption.
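The behaviour read off the slope field can also be cross-checked numerically with Euler's method. The values \(k=0.1\) and \(M=10\) below are hypothetical, chosen only for illustration. A small step is used because with a step that is too large the Euler iterates can overshoot \(M\); here \(kM\Delta t=0.01\). One trajectory starts below \(M\) and rises toward it, the other starts above \(M\) and falls toward it.

```python
def euler_path(phi, t0, y0, dt, n):
    """Return [y_0, y_1, ..., y_n] from n Euler steps of size dt."""
    ys = [y0]
    for i in range(n):
        ys.append(ys[-1] + phi(t0 + i * dt, ys[-1]) * dt)
    return ys


k, M = 0.1, 10.0   # hypothetical reproduction rate and carrying capacity


def logistic(t, y):
    """Right-hand side of the logistic equation y' = k y (M - y)."""
    return k * y * (M - y)


# Step size 0.01 over 1000 steps, i.e. up to t = 10.
below = euler_path(logistic, 0.0, 2.0, 0.01, 1000)   # initial population below M
above = euler_path(logistic, 0.0, 15.0, 0.01, 1000)  # initial population above M
print(f"start 2.0  -> y(10) approx {below[-1]:.4f}")
print(f"start 15.0 -> y(10) approx {above[-1]:.4f}")
```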

Contributors

Integrated by Justin Marshall.