1.8: Exact Equations

Another type of equation that comes up quite often in physics and engineering is an exact equation. Suppose \(F(x,y)\) is a function of two variables, which we call the potential function. The naming should suggest potential energy, or electric potential. Exact equations and potential functions appear when there is a conservation law at play, such as conservation of energy. Let us make up a simple example. Let \[F(x,y) = x^2+y^2 . \nonumber \]

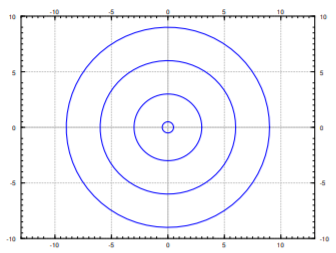

We are interested in the lines of constant energy, that is lines where the energy is conserved; we want curves where \(F(x,y) = C\), for some constant \(C\). In our example, the curves \(x^2+y^2=C\) are circles. See Figure \(\PageIndex{1}\).

We take the total derivative of \(F\): \[dF = \frac{\partial F}{\partial x} dx + \frac{\partial F}{\partial y} dy . \nonumber \]

For convenience, we will make use of the notation of \(F_x = \frac{\partial F}{\partial x}\) and \(F_y = \frac{\partial F}{\partial y}\). In our example, \[dF = 2x \, dx + 2y \, dy . \nonumber \]

We apply the total derivative to \(F(x,y) = C\), to find the differential equation \(dF = 0\). The differential equation we obtain in such a way has the form \[M \, dx + N \, dy = 0, \qquad \text{or} \qquad M + N \, \frac{dy}{dx} = 0 . \nonumber \]

An equation of this form is called exact if it was obtained as \(dF = 0\) for some potential function \(F\). In our simple example, we obtain the equation \[2x \, dx + 2y \, dy = 0, \qquad \text{or} \qquad 2x + 2y \, \frac{dy}{dx} = 0 . \nonumber \]

Since we obtained this equation by differentiating \(x^2+y^2=C\), the equation is exact. We often wish to solve for \(y\) in terms of \(x\). In our example, \[y = \pm \sqrt{C-x^2} . \nonumber \]

An interpretation of the setup is that at each point \(\vec{v} = (M,N)\) is a vector in the plane, that is, a direction and a magnitude. As \(M\) and \(N\) are functions of \((x,y)\), we have a vector field. The particular vector field \(\vec{v}\) that comes from an exact equation is a so-called conservative vector field, that is, a vector field that comes with a potential function \(F(x,y)\), such that \[\vec{v} = \left( \frac{\partial F}{\partial x} ,\frac{\partial F}{\partial y} \right) . \nonumber \] Let \(\gamma\) be a path in the plane starting at \((x_1,y_1)\) and ending at \((x_2,y_2)\). If we think of \(\vec{v}\) as force, then the work required to move along \(\gamma\) is \[\int_\gamma \vec{v}(\vec{r}) \cdot d\vec{r} = \int_\gamma M \, dx + N \, dy = F(x_2,y_2) - F(x_1,y_1) . \nonumber \]

That is, the work done depends only on the endpoints, that is, where we start and where we end. For example, suppose \(F\) is gravitational potential. The derivative of \(F\) given by \(\vec{v}\) is the gravitational force. What we are saying is that the work required to move a heavy box from the ground floor to the roof depends only on the change in potential energy. That is, the work done is the same no matter what path we took, whether we took the stairs or the elevator. Although if we took the elevator, the elevator is doing the work for us. The curves \(F(x,y) = C\) are those where no work need be done, such as the heavy box sliding along without accelerating or braking on a perfectly flat roof, on a cart with incredibly well-oiled wheels.
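The path independence described above can be checked numerically. The following is a minimal sketch for the example \(F(x,y) = x^2+y^2\); the helper `work` and the two paths `straight` and `curved` are made up for illustration and are not part of the text.

```python
import math

def M(x, y):
    return 2 * x   # F_x for F = x^2 + y^2

def N(x, y):
    return 2 * y   # F_y for F = x^2 + y^2

def F(x, y):
    return x**2 + y**2

def work(path, n=20000):
    """Approximate the line integral of M dx + N dy along path(t), 0 <= t <= 1,
    by cutting the path into n chords and evaluating M, N at chord midpoints."""
    total = 0.0
    for i in range(n):
        x0, y0 = path(i / n)
        x1, y1 = path((i + 1) / n)
        xm, ym = (x0 + x1) / 2, (y0 + y1) / 2
        total += M(xm, ym) * (x1 - x0) + N(xm, ym) * (y1 - y0)
    return total

# Two different (hypothetical) paths from (1, 0) to (0, 2).
def straight(t):
    return (1 - t, 2 * t)

def curved(t):
    return ((1 - t) * math.cos(3 * t), 2 * t + math.sin(math.pi * t))

w_straight = work(straight)
w_curved = work(curved)
expected = F(0, 2) - F(1, 0)   # 4 - 1 = 3
print(w_straight, w_curved, expected)
```

Both approximations should agree with \(F(0,2) - F(1,0) = 3\) up to rounding, even though the two paths are quite different.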

An exact equation corresponds to a conservative vector field, and the implicit solutions of the equation are the level curves \(F(x,y) = C\) of the potential function.

Solving exact equations

Now you, the reader, should ask: Where did we solve a differential equation? Well, in applications we generally know \(M\) and \(N\), but we do not know \(F\). That is, we may have just started with \(2x + 2y \frac{dy}{dx} = 0\), or perhaps even \[x + y \frac{dy}{dx} = 0 . \nonumber \]

It is up to us to find some potential \(F\) that works. Many different \(F\) will work; adding a constant to \(F\) does not change the equation. Once we have a potential function \(F\), the equation \(F\bigl(x,y(x)\bigr) = C\) gives an implicit solution of the ODE.

Let us find the general solution to \(2x + 2y \frac{dy}{dx} = 0\). Forget we knew what \(F\) was.

Solution

If we know that this is an exact equation, we start looking for a potential function \(F\). We have \(M = 2x\) and \(N=2y\). If \(F\) exists, it must be such that \(F_x (x,y) = 2x\). Integrate in the \(x\) variable to find \[\label{eq:exact:fint} F(x,y) = x^2 + A(y) , \]

for some function \(A(y)\). The function \(A\) is the constant of integration, though it is only constant as far as \(x\) is concerned, and may still depend on \(y\). Now differentiate \(\eqref{eq:exact:fint}\) in \(y\) and set it equal to \(N\), which is what \(F_y\) is supposed to be: \[2y = F_y (x,y) = A'(y) . \nonumber \]

Integrating, we find \(A(y) = y^2\). We could add a constant of integration if we wanted to, but there is no need. We found \(F(x,y) = x^2+y^2\). Next for a constant \(C\), we solve

\[F\bigl(x,y(x)\bigr) = C \nonumber \]

for \(y\) in terms of \(x\). In this case, we obtain \(y = \pm \sqrt{C-x^2}\) as we did before.

Why did we not need to add a constant of integration when integrating \(A'(y) = 2y\)? Add a constant of integration, say \(3\), and see what \(F\) you get. What is the difference from what we got above, and why does it not matter?

The procedure, once we know that the equation is exact, is:

- Integrate \(F_x = M\) in \(x\) resulting in \(F(x,y) = \text{something} + A(y)\).

- Differentiate this \(F\) in \(y\), and set that equal to \(N\), so that we may find \(A(y)\) by integration.

The procedure can also be done by first integrating in \(y\) and then differentiating in \(x\). Pretty easy, huh? Let’s try this again.
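The two-step procedure can also be carried out numerically. The sketch below is one possible way to do it, not a method from the text: it assumes the equation is exact on a rectangle containing a base point \((x_0,y_0)\) and the target point. Integrating \(M\) in \(x\) from \(x_0\) gives \(F(x,y) = \int_{x_0}^{x} M(t,y)\,dt + A(y)\), and setting \(F_y = N\) together with \(M_y = N_x\) reduces to \(A'(y) = N(x_0,y)\). The names `simpson` and `potential` are hypothetical helpers.

```python
def simpson(f, a, b, n=200):
    """Composite Simpson's rule on [a, b] with n (even) subintervals."""
    h = (b - a) / n
    total = f(a) + f(b)
    for i in range(1, n):
        total += (4 if i % 2 else 2) * f(a + i * h)
    return total * h / 3

def potential(M, N, x, y, x0=0.0, y0=0.0):
    """F(x, y) = int_{x0}^{x} M(t, y) dt + A(y), where A'(y) = N(x0, y).

    Assumes exactness on a rectangle containing (x0, y0) and (x, y).
    Normalized so that F(x0, y0) = 0."""
    integral_in_x = simpson(lambda t: M(t, y), x0, x)   # step 1: integrate M in x
    A = simpson(lambda s: N(x0, s), y0, y)              # step 2: recover A(y) from N
    return integral_in_x + A

# Example from the text: M = 2x, N = 2y, so F should be x^2 + y^2.
M = lambda x, y: 2 * x
N = lambda x, y: 2 * y
value = potential(M, N, 1.5, -2.0)
print(value)  # close to 1.5**2 + (-2.0)**2 = 6.25
```

Choosing a different base point only shifts \(F\) by a constant, which does not change the curves \(F(x,y) = C\).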

Consider now \(2x+y + xy \frac{dy}{dx} = 0\).

OK, so \(M = 2x+y\) and \(N=xy\). We try to proceed as before. Suppose \(F\) exists. Then \(F_x (x,y) = 2x+y\). We integrate: \[F(x,y) = x^2 + xy + A(y) \nonumber \] for some function \(A(y)\). Differentiate in \(y\) and set equal to \(N\): \[N = xy = F_y (x,y) = x+A'(y) . \nonumber \] But there is no way to satisfy this requirement! The function \(xy\) cannot be written as \(x\) plus a function of \(y\). The equation is not exact; no potential function \(F\) exists.

Is there an easier way to check for the existence of \(F\), other than failing in trying to find it? Turns out there is. Suppose \(M = F_x\) and \(N = F_y\). Then as long as the second derivatives are continuous, \[\frac{\partial M}{\partial y} = \frac{\partial^2 F}{\partial y \partial x} = \frac{\partial^2 F}{\partial x \partial y} = \frac{\partial N}{\partial x} . \nonumber \] Let us state it as a theorem. Usually this is called the Poincaré Lemma.\(^{1}\)

Poincaré Lemma

If \(M\) and \(N\) are continuously differentiable functions of \((x,y)\), and \(\frac{\partial M}{\partial y} = \frac{\partial N}{\partial x}\), then near any point there is a function \(F(x,y)\) such that \(M = \frac{\partial F}{\partial x}\) and \(N = \frac{\partial F}{\partial y}\).

The theorem doesn’t give us a global \(F\) defined everywhere. In general, we can only find the potential locally, near some initial point. By this time, we have come to expect this from differential equations.

Let us return to Example \(\PageIndex{2}\) where \(M = 2x + y\) and \(N = xy\). Notice \(M_y = 1\) and \(N_x = y\), which are clearly not equal. The equation is not exact.
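The test \(M_y = N_x\) is also easy to try numerically at a few sample points. This is only a rough sketch using central differences; the function name `is_exact_at`, the step size, the tolerance, and the sample points are arbitrary choices made for illustration. A numerical check at finitely many points can refute exactness but cannot prove it.

```python
def is_exact_at(M, N, x, y, h=1e-5, tol=1e-6):
    """Compare dM/dy and dN/dx at (x, y) using central differences."""
    M_y = (M(x, y + h) - M(x, y - h)) / (2 * h)
    N_x = (N(x + h, y) - N(x - h, y)) / (2 * h)
    return abs(M_y - N_x) < tol

# Sample points chosen away from y = 1, where M_y and N_x of the
# first example happen to coincide.
sample_points = [(0.5, 2.0), (-1.0, 3.0), (2.0, -0.5)]

# M = 2x + y, N = xy: here M_y = 1 and N_x = y.
passes_first = all(
    is_exact_at(lambda x, y: 2 * x + y, lambda x, y: x * y, px, py)
    for px, py in sample_points
)

# M = 2x, N = 2y: here M_y = 0 = N_x.
passes_second = all(
    is_exact_at(lambda x, y: 2 * x, lambda x, y: 2 * y, px, py)
    for px, py in sample_points
)

print(passes_first, passes_second)
```

The first pair fails the test, in agreement with the computation above, while the pair coming from \(F(x,y) = x^2+y^2\) passes.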

Solve \[\frac{dy}{dx} = \frac{-2x-y}{x-1}, \qquad y(0) = 1. \nonumber \]

Solution

We write the equation as \[(2x+y) + (x-1)\frac{dy}{dx} = 0 , \nonumber \] so \(M = 2x+y\) and \(N = x-1\). Then \[M_y = 1 = N_x . \nonumber \]

The equation is exact. Integrating \(M\) in \(x\), we find \[F(x,y) = x^2+xy + A(y) . \nonumber \]

Differentiating in \(y\) and setting to \(N\), we find \[x-1 = x + A'(y) . \nonumber \]

So \(A'(y) = -1\), and \(A(y) = -y\) will work. Take \(F(x,y) = x^2+xy-y\). We wish to solve \(x^2+xy-y = C\). First let us find \(C\). As \(y(0)=1\) then \(F(0,1) = C\). Therefore \(0^2+0\times 1 - 1 = C\), so \(C=-1\). Now we solve \(x^2+xy-y = -1\) for \(y\) to get \[y = \frac{-x^2-1}{x-1} . \nonumber \]
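As a sanity check, one can substitute the formula for \(y\) back into the equation numerically. The sketch below approximates \(\frac{dy}{dx}\) by a central difference; the helper `residual` and the sample points are illustrative choices.

```python
def y(x):
    return (-x**2 - 1) / (x - 1)

def residual(x, h=1e-6):
    """Left-hand side (2x + y) + (x - 1) y' with y' from a central difference."""
    dy = (y(x + h) - y(x - h)) / (2 * h)
    return (2 * x + y(x)) + (x - 1) * dy

initial_value = y(0.0)   # should match the initial condition y(0) = 1
worst = max(abs(residual(x)) for x in (-2.0, -0.5, 0.0, 0.5))
print(initial_value, worst)
```

The residual should be zero up to finite-difference error at every sample point with \(x < 1\); the formula breaks down at \(x = 1\), where the denominator vanishes.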

Solve \[-\frac{y}{x^2+y^2} dx + \frac{x}{x^2+y^2} dy = 0 , \qquad y(1) = 2. \nonumber \]

Solution

We leave it to the reader to check that \(M_y = N_x\).

This vector field \((M,N)\) is not conservative if considered as a vector field of the entire plane minus the origin. The problem is that if the curve \(\gamma\) is a circle around the origin, say starting at \((1,0)\) and ending at \((1,0)\) going counterclockwise, then if \(F\) existed we would expect

\[0 = F(1,0) - F(1,0) = \int_\gamma F_x \, dx + F_y \, dy = \int_\gamma \frac{-y}{x^2+y^2} \, dx + \frac{x}{x^2+y^2} \, dy = 2\pi . \nonumber \]

That is nonsense! We leave the computation of the path integral to the interested reader, or you can consult your multivariable calculus textbook. So there is no potential function \(F\) defined everywhere outside the origin \((0,0)\).
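For readers who would rather see the value \(2\pi\) than compute it by hand, here is a small numerical sketch. It parametrizes the unit circle as \((\cos t, \sin t)\) and sums \(M \, dx + N \, dy\) over small steps; the function name `loop_integral` and the step count are illustrative.

```python
import math

def M(x, y):
    return -y / (x**2 + y**2)

def N(x, y):
    return x / (x**2 + y**2)

def loop_integral(n=100000):
    """Riemann sum for the integral of M dx + N dy over the unit circle,
    parametrized as (cos t, sin t), 0 <= t <= 2*pi, counterclockwise."""
    dt = 2 * math.pi / n
    total = 0.0
    for i in range(n):
        t = (i + 0.5) * dt
        x, y = math.cos(t), math.sin(t)
        dx, dy = -math.sin(t) * dt, math.cos(t) * dt
        total += M(x, y) * dx + N(x, y) * dy
    return total

result = loop_integral()
print(result, 2 * math.pi)
```

On the unit circle the integrand reduces to \(\sin^2 t + \cos^2 t = 1\), so the sum is \(2\pi\) up to rounding, not zero.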

If we think back to the theorem, it does not guarantee such a function anyway. It only guarantees a potential function locally, that is only in some region near the initial point. As \(y(1) = 2\) we start at the point \((1,2)\). Considering \(x > 0\) and integrating \(M\) in \(x\) or \(N\) in \(y\), we find

\[F(x,y) = \operatorname{arctan} \left( \frac{y}{x} \right) . \nonumber \]

The implicit solution is \(\operatorname{arctan} \bigl( \frac{y}{x} \bigr) = C\). Solving, \(y = \tan(C) x\). That is, the solution is a straight line. Solving \(y(1) = 2\) gives us that \(\tan(C) = 2\), and so \(y= 2x\) is the desired solution. See Figure \(\PageIndex{1}\), and note that the solution only exists for \(x > 0\).

Solve \[x^2+y^2 + 2y(x+1) \frac{dy}{dx} = 0 . \nonumber \]

Solution

The reader should check that this equation is exact. Let \(M= x^2+y^2\) and \(N=2y(x+1)\). We follow the procedure for exact equations:

\[F(x,y) = \frac{1}{3}x^3 + xy^2 + A(y) , \nonumber \] and \[2y(x+1) = 2xy + A'(y) . \nonumber \]

Therefore \(A'(y) = 2y\) or \(A(y) = y^2\) and \(F(x,y) = \frac{1}{3}x^3 + xy^2 + y^2\). We try to solve \(F(x,y) = C\). We easily solve for \(y^2\) and then just take the square root:

\[y^2 = \frac{C-(\frac{1}{3})x^3}{x+1}, \qquad \text{so} \qquad y = \pm \sqrt{\frac{C-(\frac{1}{3})x^3}{x+1}} . \nonumber \] When \(x=-1\), the term in front of \(\frac{dy}{dx}\) vanishes. You can also see that our solution is not valid in that case. However, one could in that case try to solve for \(x\) in terms of \(y\) starting from the implicit solution \(\frac{1}{3}x^3 + xy^2 + y^2 = C\). The solution is somewhat messy and we leave it as implicit.

Integrating factors

Sometimes an equation \(M\, dx + N \, dy = 0\) is not exact, but it can be made exact by multiplying with a function \(u(x,y)\). That is, perhaps for some nonzero function \(u(x,y)\), \[u(x,y) M(x,y) \, dx + u(x,y) N(x,y) \, dy = 0 \nonumber \] is exact. Any solution to this new equation is also a solution to \(M\, dx + N \, dy = 0\).

In fact, a linear equation \[\frac{dy}{dx} + p(x) y = f(x), \qquad \text{or} \qquad \bigl( p(x) y - f(x) \bigr)\, dx + dy = 0 \nonumber \] is always such an equation. Let \(r(x) = e^{\int p(x)\,dx}\) be the integrating factor for a linear equation. Multiply the equation by \(r(x)\) and write it in the form \(M + N \frac{dy}{dx} = 0\): \[r(x) p(x) y - r(x) f(x) + r(x) \frac{dy}{dx} = 0 . \nonumber \] Then \(M = r(x) p(x) y - r(x) f(x)\), so \(M_y = r(x) p(x)\), while \(N = r(x)\), so \(N_x = r'(x) = r(x) p(x)\). In other words, we have an exact equation. Integrating factors for linear equations are just a special case of integrating factors for exact equations.

But how do we find the integrating factor \(u\)? Well, given an equation \[M \, dx + N \, dy = 0 , \nonumber \] \(u\) should be a function such that \[\frac{\partial}{\partial y} \bigl[ u M \bigr] = u_y M + u M_y = \frac{\partial}{\partial x} \bigl[ u N \bigr] = u_x N + u N_x . \nonumber \] Therefore, \[(M_y-N_x)u = u_x N - u_y M . \nonumber \] At first it may seem we replaced one differential equation by another. True, but all hope is not lost.

A strategy that often works is to look for a \(u\) that is a function of \(x\) alone, or a function of \(y\) alone. If \(u\) is a function of \(x\) alone, that is \(u(x)\), then we write \(u'(x)\) instead of \(u_x\), and \(u_y\) is just zero. Then \[\frac{M_y-N_x}{N}u = u' . \nonumber \] In particular, \(\frac{M_y-N_x}{N}\) ought to be a function of \(x\) alone (not depend on \(y\)). If so, then we have a linear equation \[u' - \frac{M_y-N_x}{N} u = 0 . \nonumber \] Letting \(P(x) = \frac{M_y-N_x}{N}\), we solve using the standard integrating factor method, to find \(u(x) = C e^{\int P(x) \, dx}\). The constant in the solution is not relevant; we need any nonzero solution, so we take \(C=1\). Then \(u(x) = e^{\int P(x) \, dx}\) is the integrating factor.

Similarly we could try a function of the form \(u(y)\). Then \[\frac{M_y-N_x}{M} u = - u' . \nonumber \] In particular, \(\frac{M_y-N_x}{M}\) ought to be a function of \(y\) alone. If so, then we have a linear equation \[u' + \frac{M_y-N_x}{M} u = 0 . \nonumber \] Letting \(Q(y) = \frac{M_y-N_x}{M}\), we find \(u(y) = C e^{-\int Q(y) \, dy}\). We take \(C=1\). So \(u(y) = e^{-\int Q(y) \, dy}\) is the integrating factor.

Solve \[\frac{x^2+y^2}{x+1} + 2y \frac{dy}{dx} = 0 . \nonumber \]

Solution

Let \(M= \frac{x^2+y^2}{x+1}\) and \(N=2y\). Compute \[M_y-N_x = \frac{2y}{x+1} - 0 = \frac{2y}{x+1} . \nonumber \]

As this is not zero, the equation is not exact. We notice \[P(x) = \frac{M_y-N_x}{N} = \frac{2y}{x+1} \frac{1}{2y} = \frac{1}{x+1} \nonumber \] is a function of \(x\) alone. We compute the integrating factor \[e^{\int P(x) \, dx} = e^{\ln (x+1)} = x+1 . \nonumber \] We multiply our given equation by \((x+1)\) to obtain \[x^2+y^2 + 2y(x+1) \frac{dy}{dx} = 0 , \nonumber \] which is an exact equation that we solved in Example \(\PageIndex{5}\). The solution was \[y = \pm \sqrt{\frac{C-(\frac{1}{3})x^3}{x+1}} . \nonumber \]
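The effect of the integrating factor can be confirmed numerically. The sketch below measures the "exactness gap" \(M_y - N_x\) at a sample point before and after multiplying by \(u(x) = x+1\); the helper names and the sample point \((1,2)\) are arbitrary choices for illustration.

```python
def d_dx(f, x, y, h=1e-5):
    """Central-difference approximation of the partial derivative in x."""
    return (f(x + h, y) - f(x - h, y)) / (2 * h)

def d_dy(f, x, y, h=1e-5):
    """Central-difference approximation of the partial derivative in y."""
    return (f(x, y + h) - f(x, y - h)) / (2 * h)

def exactness_gap(M, N, x, y):
    """M_y - N_x at (x, y); zero for an exact equation."""
    return d_dy(M, x, y) - d_dx(N, x, y)

M = lambda x, y: (x**2 + y**2) / (x + 1)
N = lambda x, y: 2 * y
u = lambda x: x + 1   # integrating factor found above

uM = lambda x, y: u(x) * M(x, y)
uN = lambda x, y: u(x) * N(x, y)

before = exactness_gap(M, N, 1.0, 2.0)    # 2y/(x+1) = 2 at (1, 2)
after = exactness_gap(uM, uN, 1.0, 2.0)   # should vanish
P = before / N(1.0, 2.0)                  # 1/(x+1) = 0.5 at x = 1
print(before, after, P)
```

Repeating the check at other points with \(x > -1\) and \(y \neq 0\) should give the same picture: a nonzero gap before, and a gap of zero (up to rounding) after.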

Solve \[y^2 + (xy+1) \frac{dy}{dx} = 0 . \nonumber \]

Solution

Here \(M = y^2\) and \(N = xy+1\). First compute \[M_y-N_x = 2y-y = y . \nonumber \] As this is not zero, the equation is not exact. We observe \[Q(y) = \frac{M_y-N_x}{M} = \frac{y}{y^2} = \frac{1}{y} \nonumber \] is a function of \(y\) alone. We compute the integrating factor \[e^{-\int Q(y) \, dy} = e^{-\ln y} = \frac{1}{y} . \nonumber \] Therefore we look at the exact equation \[y + \frac{xy+1}{y} \frac{dy}{dx} = 0 . \nonumber \] The reader should double check that this equation is exact. We follow the procedure for exact equations: \[F(x,y) = xy + A(y) , \nonumber \] and \[\frac{xy+1}{y} = x+\frac{1}{y} = x+ A'(y) . \nonumber \] Consequently \(A'(y) = \frac{1}{y}\) or \(A(y) = \ln y\). Thus \(F(x,y) = xy + \ln y\). It is not possible to solve \(F(x,y)=C\) for \(y\) in terms of elementary functions, so let us be content with the implicit solution: \[xy + \ln y = C . \nonumber \] We are looking for the general solution and we divided by \(y\) above. We should check what happens when \(y=0\), as the equation itself makes perfect sense in that case. We plug in \(y=0\) to find the equation is satisfied. So \(y=0\) is also a solution.
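Although the implicit solution cannot be solved for \(y\) in closed form, it can be solved numerically for any given \(x\). The following sketch uses bisection, assuming \(x \geq 0\) and \(y > 0\) so that \(xy + \ln y\) is increasing in \(y\); the value \(C = 1\), the bracket, and the helper names are illustrative assumptions. It then checks that the resulting \(y(x)\) satisfies the original equation \(y^2 + (xy+1) \frac{dy}{dx} = 0\).

```python
import math

def solve_y(x, C, lo=1e-12, hi=1e6):
    """Bisection for y > 0 with x*y + ln(y) = C, assuming x >= 0 so that
    the left-hand side is increasing in y and the root lies in [lo, hi]."""
    for _ in range(200):
        mid = (lo + hi) / 2
        if x * mid + math.log(mid) < C:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def residual(x, C, h=1e-4):
    """y^2 + (x*y + 1) * y' with y' from a central difference in x."""
    y = solve_y(x, C)
    dy = (solve_y(x + h, C) - solve_y(x - h, C)) / (2 * h)
    return y**2 + (x * y + 1) * dy

C = 1.0
y_at_1 = solve_y(1.0, C)      # y + ln(y) = 1 has the solution y = 1
res = residual(1.0, C)
print(y_at_1, res)
```

With \(C = 1\) the curve passes through \((1,1)\), and the residual there should be zero up to finite-difference error.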

Footnotes

[1] Named for the French polymath Jules Henri Poincaré (1854–1912).