4.10: Dirichlet Problem in the Circle and the Poisson Kernel


Laplace in Polar Coordinates

A more natural setting for the Laplace equation Δu=0 is the circle rather than the square. On the other hand, what makes the problem somewhat more difficult is that we need polar coordinates.

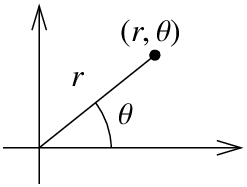

Recall that the polar coordinates for the (x,y)-plane are (r,θ):

x=rcosθ, y=rsinθ,

where r≥0 and −π<θ≤π. So (x,y) is distance r from the origin at angle θ from the positive x-axis.

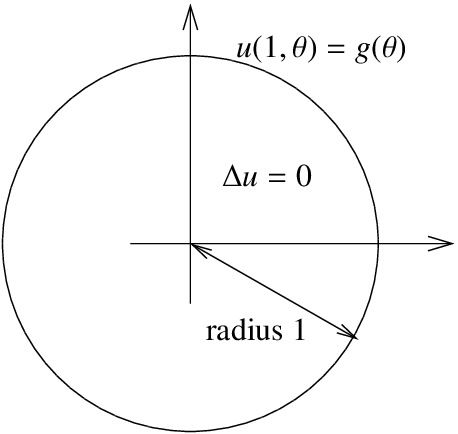

Now that we know our coordinates, let us give the problem we wish to solve. We have a circular region of radius 1, and we are interested in the Dirichlet problem for the Laplace equation for this region. Let u(r,θ) denote the temperature at the point (r,θ) in polar coordinates. We have the problem:

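\[
\begin{aligned}
\Delta u &= 0, & &\text{for } r < 1, \\
u(1,\theta) &= g(\theta), & &\text{for } -\pi < \theta \leq \pi .
\end{aligned}
\]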

The first issue we face is that we do not know what the Laplacian is in polar coordinates. Normally we would find uxx and uyy in terms of the derivatives in r and θ. We would need to solve for r and θ in terms of x and y. While this is certainly possible, it happens to be more convenient to work in reverse. Let us instead compute derivatives in r and θ in terms of derivatives in x and y and then solve. The computations are easier this way. First

\[
x_r = \cos\theta, \qquad x_\theta = -r\sin\theta, \qquad y_r = \sin\theta, \qquad y_\theta = r\cos\theta .
\]

Next by chain rule we obtain

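\[
\begin{aligned}
u_r &= u_x x_r + u_y y_r = \cos(\theta)\, u_x + \sin(\theta)\, u_y, \\
u_{rr} &= \cos(\theta)\,(u_{xx} x_r + u_{xy} y_r) + \sin(\theta)\,(u_{yx} x_r + u_{yy} y_r) \\
&= \cos^2(\theta)\, u_{xx} + 2\cos(\theta)\sin(\theta)\, u_{xy} + \sin^2(\theta)\, u_{yy} .
\end{aligned}
\]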

Similarly for the θ derivative. Note that we have to use product rule for the second derivative.

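\[
\begin{aligned}
u_\theta &= u_x x_\theta + u_y y_\theta = -r\sin(\theta)\, u_x + r\cos(\theta)\, u_y, \\
u_{\theta\theta} &= -r\cos(\theta)\, u_x - r\sin(\theta)\,(u_{xx} x_\theta + u_{xy} y_\theta) - r\sin(\theta)\, u_y + r\cos(\theta)\,(u_{yx} x_\theta + u_{yy} y_\theta) \\
&= -r\cos(\theta)\, u_x - r\sin(\theta)\, u_y + r^2\sin^2(\theta)\, u_{xx} - 2r^2\sin(\theta)\cos(\theta)\, u_{xy} + r^2\cos^2(\theta)\, u_{yy} .
\end{aligned}
\]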

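Let us now try to solve for \(u_{xx}+u_{yy}\). We start with \(\frac{1}{r^2}u_{\theta\theta}\) to get rid of those pesky \(r^2\). If we add \(u_{rr}\) and use the fact that \(\cos^2(\theta)+\sin^2(\theta)=1\), we get

\[
\frac{1}{r^2}u_{\theta\theta} + u_{rr} = u_{xx} + u_{yy} - \frac{1}{r}\cos(\theta)\, u_x - \frac{1}{r}\sin(\theta)\, u_y .
\]

We’re not quite there yet, but all we are lacking is \(\frac{1}{r}u_r\). Adding it we obtain the Laplacian in polar coordinates:

\[
\frac{1}{r^2}u_{\theta\theta} + \frac{1}{r}u_r + u_{rr} = u_{xx} + u_{yy} = \Delta u .
\]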

Notice that the Laplacian in polar coordinates no longer has constant coefficients.
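The formula can be sanity-checked numerically. The following is a minimal sketch, not part of the derivation: it picks an arbitrary test function (the polynomial `u_cart` is an assumption chosen only because its Cartesian Laplacian, 6xy+2x, is easy to write down), evaluates the polar expression with central finite differences, and compares the two values at one point.

```python
import math

def u_cart(x, y):
    # Hypothetical test function; u_xx + u_yy = 6xy + 2x.
    return x**3 * y + x * y**2

def laplacian_cart_exact(x, y):
    # Exact Cartesian Laplacian of u_cart.
    return 6 * x * y + 2 * x

def u_polar(r, theta):
    # The same function expressed in polar coordinates.
    return u_cart(r * math.cos(theta), r * math.sin(theta))

def laplacian_polar(r, theta, h=1e-4):
    # (1/r^2) u_theta_theta + (1/r) u_r + u_rr via central differences.
    u0 = u_polar(r, theta)
    u_rr = (u_polar(r + h, theta) - 2 * u0 + u_polar(r - h, theta)) / h**2
    u_r = (u_polar(r + h, theta) - u_polar(r - h, theta)) / (2 * h)
    u_tt = (u_polar(r, theta + h) - 2 * u0 + u_polar(r, theta - h)) / h**2
    return u_tt / r**2 + u_r / r + u_rr

r, theta = 0.7, 1.1
x, y = r * math.cos(theta), r * math.sin(theta)
# The two printed numbers should agree up to finite-difference error.
print(laplacian_polar(r, theta), laplacian_cart_exact(x, y))
```

The step size `h` is a judgment call: much smaller values amplify rounding error in the second differences, and the check breaks down at r=0, where the polar formula divides by r.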

Series Solution

Let us separate variables as usual. That is let us try u(r,θ)=R(r)Θ(θ). Then

\[
0 = \Delta u = \frac{1}{r^2} R\,\Theta'' + \frac{1}{r} R'\,\Theta + R''\,\Theta .
\]

Let us put R on one side and Θ on the other and conclude that both sides must be constant.

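\[
\begin{aligned}
\frac{1}{r^2} R\,\Theta'' &= -\left(\frac{1}{r} R' + R''\right)\Theta, \\
\frac{\Theta''}{\Theta} &= -\frac{r R' + r^2 R''}{R} = -\lambda .
\end{aligned}
\]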

We get two equations:

\[
\Theta'' + \lambda\,\Theta = 0, \qquad r^2 R'' + r R' - \lambda R = 0 .
\]

Let us first focus on Θ. We know that u(r,θ) ought to be 2π-periodic in θ, that is, u(r,θ)=u(r,θ+2π). Therefore, the solution to \(\Theta''+\lambda\Theta=0\) must be 2π-periodic. We have seen such a problem in Example 4.1.5. We conclude that \(\lambda=n^2\) for a nonnegative integer n=0,1,2,3,.... The equation becomes \(\Theta''+n^2\Theta=0\). When n=0 the equation is just \(\Theta''=0\), so we have the general solution Aθ+B. As Θ is periodic, A=0. For convenience let us write this solution as

\[
\Theta_0 = \frac{a_0}{2}
\]

for some constant \(a_0\). For positive n, the solution to \(\Theta''+n^2\Theta=0\) is

\[
\Theta_n = a_n\cos(n\theta) + b_n\sin(n\theta),
\]

for some constants \(a_n\) and \(b_n\).

Next, we consider the equation for R,

\[
r^2 R'' + r R' - n^2 R = 0 .
\]

This equation has appeared in exercises before; we solved it in Exercise 2.E.2.1.6 and Exercise 2.E.1.7. The idea is to try a solution \(r^s\) and, if that does not work out, try a solution of the form \(r^s\ln r\). When n=0 we obtain

\[
R_0 = A r^0 + B r^0 \ln r = A + B\ln r,
\]

and if n>0, we get

\[
R_n = A r^n + B r^{-n} .
\]
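For reference, here is a short sketch of the substitution behind these two formulas. Plugging \(R=r^s\) into the equation gives

\[
r^2\, s(s-1)\, r^{s-2} + r\, s\, r^{s-1} - n^2 r^s = (s^2 - n^2)\, r^s = 0
\quad\Longrightarrow\quad s = \pm n .
\]

For n>0 the two roots are distinct and give the independent solutions \(r^n\) and \(r^{-n}\). For n=0 the root s=0 is repeated, so we try \(r^0\ln r=\ln r\), which indeed works:

\[
r^2\left(-\frac{1}{r^2}\right) + r\left(\frac{1}{r}\right) = 0 .
\]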

The function u(r,θ) must be finite at the origin, that is, when r=0. Therefore, B=0 in both cases. Let us also set A=1 in both cases; the constants in \(\Theta_n\) will pick up the slack, so we do not lose anything. Therefore let

\[
R_0 = 1, \qquad\text{and}\qquad R_n = r^n .
\]

Hence our building block solutions are

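\[
u_0(r,\theta) = \frac{a_0}{2}, \qquad u_n(r,\theta) = a_n r^n\cos(n\theta) + b_n r^n\sin(n\theta) .
\]

Putting everything together our solution is:

\[
u(r,\theta) = \frac{a_0}{2} + \sum_{n=1}^\infty a_n r^n\cos(n\theta) + b_n r^n\sin(n\theta) .
\]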

We look at the boundary condition in (4.10.2),

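\[
g(\theta) = u(1,\theta) = \frac{a_0}{2} + \sum_{n=1}^\infty a_n\cos(n\theta) + b_n\sin(n\theta) .
\]

Therefore, the solution to (4.10.2) is to expand g(θ), which is a 2π-periodic function, as a Fourier series, and then multiply the nth term by \(r^n\). In other words, to compute \(a_n\) and \(b_n\) we can, as usual, compute

\[
a_n = \frac{1}{\pi}\int_{-\pi}^{\pi} g(\theta)\cos(n\theta)\,d\theta,
\qquad\text{and}\qquad
b_n = \frac{1}{\pi}\int_{-\pi}^{\pi} g(\theta)\sin(n\theta)\,d\theta .
\]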
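The recipe above translates directly into a few lines of code. The sketch below is illustrative only: the function names `fourier_coeffs` and `series_solution`, the midpoint-rule quadrature with `m` sample points, the truncation at `n_max`, and the sample boundary data g(θ)=1+2sin(3θ) are all assumptions made for the example. For that data the exact solution is 1+2r³sin(3θ), which gives something to compare against.

```python
import math

def fourier_coeffs(g, n_max, m=2000):
    # Approximate a_n and b_n for n = 0..n_max with the midpoint rule
    # on (-pi, pi], using m equally spaced sample points.
    d = 2 * math.pi / m
    pts = [-math.pi + (k + 0.5) * d for k in range(m)]
    a = [sum(g(t) * math.cos(n * t) for t in pts) * d / math.pi
         for n in range(n_max + 1)]
    b = [sum(g(t) * math.sin(n * t) for t in pts) * d / math.pi
         for n in range(n_max + 1)]
    return a, b

def series_solution(a, b, r, theta):
    # Truncated series: a_0/2 + sum of r^n (a_n cos(n theta) + b_n sin(n theta)).
    total = a[0] / 2
    for n in range(1, len(a)):
        total += r**n * (a[n] * math.cos(n * theta) + b[n] * math.sin(n * theta))
    return total

def g(theta):
    # Sample boundary data (an assumption for this illustration).
    return 1 + 2 * math.sin(3 * theta)

a, b = fourier_coeffs(g, n_max=8)
r, theta = 0.5, 0.3
# Compare the truncated series with the exact solution 1 + 2 r^3 sin(3 theta).
print(series_solution(a, b, r, theta), 1 + 2 * r**3 * math.sin(3 * theta))
```

For boundary data that is not a trigonometric polynomial, both the quadrature and the truncation introduce error, so `m` and `n_max` would need to be chosen with the smoothness of g in mind.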

Suppose we wish to solve

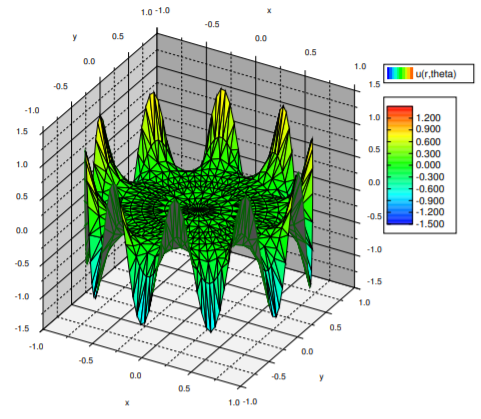

\[
\begin{aligned}
\Delta u &= 0, \qquad 0 \leq r < 1, \quad -\pi < \theta \leq \pi, \\
u(1,\theta) &= \cos(10\,\theta), \qquad -\pi < \theta \leq \pi .
\end{aligned}
\]

The solution is

\[
u(r,\theta) = r^{10}\cos(10\,\theta) .
\]

See the plot in Figure 4.10.3. The thing to notice in this example is that the effect of a high frequency is mostly felt at the boundary. In the middle of the disc, the solution is very close to zero. That is because \(r^{10}\) is rather small when r is close to 0.
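To put rough numbers on this (simple arithmetic added for illustration):

\[
r = 0.5:\quad r^{10} = \frac{1}{1024} \approx 0.00098,
\qquad\qquad
r = 0.9:\quad r^{10} \approx 0.349 .
\]

So halfway to the boundary the oscillation has less than one thousandth of its boundary amplitude, while nine tenths of the way out it still has about a third.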

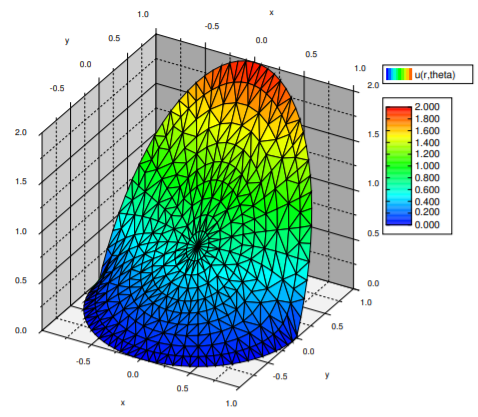

Let us solve a more difficult problem. Suppose we have a long rod with circular cross section of radius 1 and we wish to solve the steady state heat problem. If the rod is long enough we simply need to solve the Laplace equation in two dimensions. Let us put the center of the rod at the origin and we have exactly the region we are currently studying—a circle of radius 1. For the boundary conditions, suppose in Cartesian coordinates x and y, the temperature is fixed at 0 when y<0 and at 2y when y>0.

We set the problem up. As y=rsin(θ), then on the circle of radius 1 we have 2y=2sin(θ). So

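\[
\begin{aligned}
\Delta u &= 0, \qquad 0 \leq r < 1, \quad -\pi < \theta \leq \pi, \\
u(1,\theta) &=
\begin{cases}
2\sin(\theta) & \text{if } 0 \leq \theta \leq \pi, \\
0 & \text{if } -\pi < \theta < 0 .
\end{cases}
\end{aligned}
\]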

We must now compute the Fourier series for the boundary condition. By now the reader has plentiful experience in computing Fourier series and so we simply state that

\[
u(1,\theta) = \frac{2}{\pi} + \sin(\theta) + \sum_{n=1}^\infty \frac{-4}{\pi(4n^2-1)}\cos(2n\theta) .
\]

Compute the series for u(1,θ) and verify that it really is what we have just claimed. Hint: Be careful, make sure not to divide by zero.

We now simply write the solution (see Figure 4.10.4) by multiplying by \(r^n\) in the right places.

\[
u(r,\theta) = \frac{2}{\pi} + r\sin(\theta) + \sum_{n=1}^\infty \frac{-4\,r^{2n}}{\pi(4n^2-1)}\cos(2n\theta) .
\]
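As a quick plausibility check of this series, one can sum finitely many terms and look at a few points. This is a minimal sketch; the name `rod_solution` and the cutoff `terms=200` are choices made for the illustration. On the boundary the partial sums should approach the prescribed data, 2sin(θ) on the upper half and 0 on the lower half.

```python
import math

def rod_solution(r, theta, terms=200):
    # Partial sum of 2/pi + r sin(theta) - sum 4 r^(2n) cos(2n theta) / (pi (4n^2 - 1)).
    total = 2 / math.pi + r * math.sin(theta)
    for n in range(1, terms + 1):
        total -= 4 * r**(2 * n) / (math.pi * (4 * n**2 - 1)) * math.cos(2 * n * theta)
    return total

# Center of the rod: only the constant term survives.
print(rod_solution(0.0, 0.0))
# Top of the circle: the boundary data there is 2 sin(pi/2) = 2.
print(rod_solution(1.0, math.pi / 2))
# Bottom of the circle: the boundary data there is 0.
print(rod_solution(1.0, -math.pi / 2))
```

The coefficients decay like 1/n², so on the boundary the partial sums converge fairly slowly; inside the disc the factor \(r^{2n}\) makes far fewer terms sufficient.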

Poisson Kernel

There is another way to solve the Dirichlet problem with the help of an integral kernel. That is, we will find a function P(r,θ,α) called the Poisson kernel[1] such that

\[
u(r,\theta) = \frac{1}{2\pi}\int_{-\pi}^{\pi} P(r,\theta,\alpha)\, g(\alpha)\, d\alpha .
\]

While the integral will generally not be solvable analytically, it can be evaluated numerically. In fact, unless the boundary data is given as a Fourier series already, it will be much easier to numerically evaluate this formula as there is only one integral to evaluate.

The formula also has theoretical applications. For instance, as P(r,θ,α) will have infinitely many derivatives, then via differentiating under the integral we find that the solution u(r,θ) has infinitely many derivatives, at least when inside the circle, r<1. By infinitely many derivatives what you should think of is that u(r,θ) has “no corners” and all of its partial derivatives exist too and also have “no corners”.

We will compute the formula for P(r,θ,α) from the series solution, and this idea can be applied anytime you have a convenient series solution where the coefficients are obtained via integration. Hence you can apply this reasoning to obtain such integral kernels for other equations, such as the heat equation. The computation is long and tedious, but not overly difficult. Since the ideas are often applied in similar contexts, it is good to understand how this computation works.

What we do is start with the series solution and replace the coefficients with the integrals that compute them. Then we try to write everything as a single integral. We must use a different dummy variable for the integration and hence we use α instead of θ.

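\[
\begin{aligned}
u(r,\theta) &= \frac{a_0}{2} + \sum_{n=1}^\infty a_n r^n\cos(n\theta) + b_n r^n\sin(n\theta) \\
&= \underbrace{\left(\frac{1}{2\pi}\int_{-\pi}^{\pi} g(\alpha)\,d\alpha\right)}_{\frac{a_0}{2}}
 + \sum_{n=1}^\infty \underbrace{\left(\frac{1}{\pi}\int_{-\pi}^{\pi} g(\alpha)\cos(n\alpha)\,d\alpha\right)}_{a_n} r^n\cos(n\theta)
 + \underbrace{\left(\frac{1}{\pi}\int_{-\pi}^{\pi} g(\alpha)\sin(n\alpha)\,d\alpha\right)}_{b_n} r^n\sin(n\theta) \\
&= \frac{1}{2\pi}\int_{-\pi}^{\pi}\left( g(\alpha) + 2\sum_{n=1}^\infty g(\alpha)\cos(n\alpha)\, r^n\cos(n\theta) + g(\alpha)\sin(n\alpha)\, r^n\sin(n\theta) \right) d\alpha \\
&= \frac{1}{2\pi}\int_{-\pi}^{\pi} \underbrace{\left( 1 + 2\sum_{n=1}^\infty r^n\bigl(\cos(n\alpha)\cos(n\theta) + \sin(n\alpha)\sin(n\theta)\bigr) \right)}_{P(r,\theta,\alpha)}\, g(\alpha)\, d\alpha .
\end{aligned}
\]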

OK, so we have what we wanted, the expression in the parentheses is the Poisson kernel, P(r,θ,α). However, we can do a lot better. It is still given as a series, and we would really like to have a nice simple expression for it. We must work a little harder. The trick is to rewrite everything in terms of complex exponentials. Let us work just on the kernel.

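\[
\begin{aligned}
P(r,\theta,\alpha) &= 1 + 2\sum_{n=1}^\infty r^n\bigl(\cos(n\alpha)\cos(n\theta) + \sin(n\alpha)\sin(n\theta)\bigr) \\
&= 1 + 2\sum_{n=1}^\infty r^n\cos\bigl(n(\theta-\alpha)\bigr) \\
&= 1 + 2\sum_{n=1}^\infty r^n\,\frac{e^{in(\theta-\alpha)} + e^{-in(\theta-\alpha)}}{2} \\
&= 1 + \sum_{n=1}^\infty \bigl(r e^{i(\theta-\alpha)}\bigr)^n + \sum_{n=1}^\infty \bigl(r e^{-i(\theta-\alpha)}\bigr)^n .
\end{aligned}
\]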

In the above expression we recognize the geometric series. That is, recall from calculus that as long as |z|<1, then

\[
\sum_{n=1}^\infty z^n = \frac{z}{1-z} .
\]

Note that n starts at 1 and that is why we have the z in the numerator. It is the standard geometric series multiplied by z. Let us continue with the computation.

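\[
\begin{aligned}
P(r,\theta,\alpha) &= 1 + \sum_{n=1}^\infty \bigl(r e^{i(\theta-\alpha)}\bigr)^n + \sum_{n=1}^\infty \bigl(r e^{-i(\theta-\alpha)}\bigr)^n \\
&= 1 + \frac{r e^{i(\theta-\alpha)}}{1 - r e^{i(\theta-\alpha)}} + \frac{r e^{-i(\theta-\alpha)}}{1 - r e^{-i(\theta-\alpha)}} \\
&= \frac{\bigl(1 - r e^{i(\theta-\alpha)}\bigr)\bigl(1 - r e^{-i(\theta-\alpha)}\bigr) + \bigl(1 - r e^{-i(\theta-\alpha)}\bigr) r e^{i(\theta-\alpha)} + \bigl(1 - r e^{i(\theta-\alpha)}\bigr) r e^{-i(\theta-\alpha)}}{\bigl(1 - r e^{i(\theta-\alpha)}\bigr)\bigl(1 - r e^{-i(\theta-\alpha)}\bigr)} \\
&= \frac{1 - r^2}{1 - r e^{i(\theta-\alpha)} - r e^{-i(\theta-\alpha)} + r^2} \\
&= \frac{1 - r^2}{1 - 2r\cos(\theta-\alpha) + r^2} .
\end{aligned}
\]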
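A computation this long is worth double-checking. The sketch below compares a partial sum of the cosine series for the kernel with the closed form at a sample point; the function names and the cutoff `terms=200` are assumptions made for the illustration, and `phi` stands for the difference θ−α.

```python
import math

def kernel_series(r, phi, terms=200):
    # Partial sum of 1 + 2 * sum_{n>=1} r^n cos(n phi), with phi = theta - alpha.
    return 1 + 2 * sum(r**n * math.cos(n * phi) for n in range(1, terms + 1))

def kernel_closed(r, phi):
    # Closed form (1 - r^2) / (1 - 2 r cos(phi) + r^2).
    return (1 - r**2) / (1 - 2 * r * math.cos(phi) + r**2)

r, phi = 0.5, 0.7
# The two values should agree closely, since the tail of the series is of size r^terms.
print(kernel_series(r, phi), kernel_closed(r, phi))
```

The agreement degrades as r approaches 1, because the geometric tail \(r^n\) then decays slowly and many more terms are needed.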

Now that’s a formula we can live with. The solution to the Dirichlet problem using the Poisson kernel is

\[
u(r,\theta) = \frac{1}{2\pi}\int_{-\pi}^{\pi} \frac{1 - r^2}{1 - 2r\cos(\theta-\alpha) + r^2}\, g(\alpha)\, d\alpha .
\]

Sometimes the formula for the Poisson kernel is given together with the constant \(\frac{1}{2\pi}\), in which case we should of course not leave it in front of the integral. Also, often the limits of the integral are given as 0 to 2π; everything inside is 2π-periodic in α, so this does not change the integral.
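Evaluating the formula numerically takes only a short loop. The following is a minimal sketch under stated assumptions: the name `poisson_solve`, the midpoint rule, and the number of sample points `m` are illustrative choices, not a prescribed method. It is tried on the earlier example with boundary data cos(10θ), whose solution \(r^{10}\cos(10\theta)\) is known.

```python
import math

def poisson_solve(g, r, theta, m=2000):
    # Approximate (1/(2 pi)) * integral over (-pi, pi] of P(r, theta, alpha) g(alpha)
    # with the midpoint rule on m equally spaced points. Requires 0 <= r < 1.
    d = 2 * math.pi / m
    total = 0.0
    for k in range(m):
        alpha = -math.pi + (k + 0.5) * d
        kernel = (1 - r**2) / (1 - 2 * r * math.cos(theta - alpha) + r**2)
        total += kernel * g(alpha)
    return total * d / (2 * math.pi)

def g(alpha):
    # Boundary data from the earlier example.
    return math.cos(10 * alpha)

r, theta = 0.5, 0.3
# Compare with the known solution r^10 cos(10 theta).
print(poisson_solve(g, r, theta), r**10 * math.cos(10 * theta))
```

Close to the boundary the kernel becomes sharply peaked near α=θ, so a fixed `m` that works well at r=0.5 may be too coarse at, say, r=0.999.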

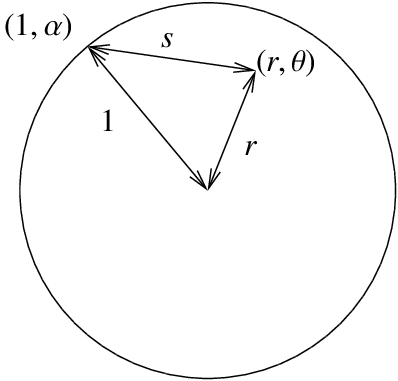

Let us not leave the Poisson kernel without explaining its geometric meaning. Let s be the distance from (r,θ) to (1,α). You may recall from calculus that this distance s in polar coordinates is given precisely by the square root of \(1-2r\cos(\theta-\alpha)+r^2\). That is, the Poisson kernel is really the formula

\[
\frac{1-r^2}{s^2} .
\]
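For completeness, the distance formula follows in one line from the Cartesian coordinates of the two points, \((r\cos\theta, r\sin\theta)\) and \((\cos\alpha, \sin\alpha)\):

\[
\begin{aligned}
s^2 &= (r\cos\theta - \cos\alpha)^2 + (r\sin\theta - \sin\alpha)^2 \\
&= r^2 - 2r(\cos\theta\cos\alpha + \sin\theta\sin\alpha) + 1 \\
&= 1 - 2r\cos(\theta-\alpha) + r^2 .
\end{aligned}
\]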

One final note about the formula is that it is really a weighted average of the boundary values. First let us look at what happens at the origin, that is when r=0.

\[
u(0,0) = \frac{1}{2\pi}\int_{-\pi}^{\pi} \frac{1-0^2}{1 - 2(0)\cos(\theta-\alpha) + 0^2}\, g(\alpha)\, d\alpha = \frac{1}{2\pi}\int_{-\pi}^{\pi} g(\alpha)\, d\alpha .
\]

So u(0,0) is precisely the average value of g(θ) and therefore the average value of u on the boundary. This is a general feature of harmonic functions: the value at some point p is equal to the average of the values on a circle centered at p.

What the formula says is that the value of the solution at any point in the circle is a weighted average of the boundary data g(θ). The kernel is bigger when (r,θ) is closer to (1,α). Therefore when computing u(r,θ) we give more weight to the values g(α) when (1,α) is closer to (r,θ) and less weight to the values g(α) when (1,α) is far from (r,θ).
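Two short computations make the phrase "weighted average" concrete; they are added here as a sketch. First, the constant function u=1 solves the problem with g=1 (in the series solution \(a_0=2\) and all other coefficients vanish), so the weights average to one:

\[
\frac{1}{2\pi}\int_{-\pi}^{\pi} P(r,\theta,\alpha)\, d\alpha = 1 .
\]

The weights are also positive for r<1, since both \(1-r^2\) and \(s^2\) are positive. Second, the largest and smallest weights occur at the nearest and farthest boundary points:

\[
P = \frac{1-r^2}{(1-r)^2} = \frac{1+r}{1-r} \quad\text{when } \alpha = \theta,
\qquad\qquad
P = \frac{1-r^2}{(1+r)^2} = \frac{1-r}{1+r} \quad\text{when } \alpha = \theta + \pi .
\]

For instance, at r=0.9 the nearest boundary point gets weight 19 while the farthest gets weight 1/19, roughly 0.053.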

Footnotes

[1] Named for the French mathematician Siméon Denis Poisson (1781 – 1840).