8.1: The Determinant Formula

The determinant extracts a single number from a matrix that determines whether the matrix is invertible. Let's see how this works for small matrices first.

Simple Examples

For small cases, we already know when a matrix is invertible. If \(M=(m)\) is a \(1\times 1\) matrix, then \(M^{-1}=(1/m)\) provided \(m\neq 0\), while the matrix \((0)\) has no inverse. Thus \(M\) is invertible if and only if \(m\neq 0\).

For \(M\) a \(2\times 2\) matrix, chapter 7 section 7.5 shows that if \(M=\begin{pmatrix}

m^{1}_{1} & m^{1}_{2} \\

m^{2}_{1} & m^{2}_{2} \\

\end{pmatrix}\, ,\) then \(M^{-1}=\frac{1}{m^{1}_{1}m^{2}_{2}-m^{1}_{2}m^{2}_{1}}\begin{pmatrix}

m^{2}_{2} & -m^{1}_{2} \\

-m^{2}_{1} & m^{1}_{1} \\

\end{pmatrix}\, .\) Thus \(M\) is invertible if and only if

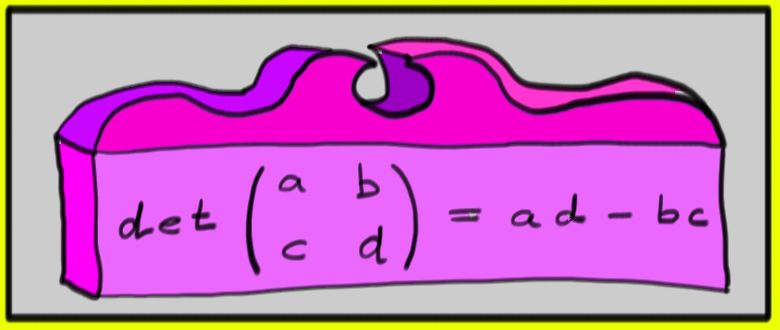

\[m^{1}_{1}m^{2}_{2}-m^{1}_{2}m^{2}_{1}\neq 0\, .\] For \(2\times 2\) matrices, this quantity is called the \(\textit{determinant of \(M\)}\).

\[
\det M = \det \begin{pmatrix}
m^{1}_{1} & m^{1}_{2} \\
m^{2}_{1} & m^{2}_{2}
\end{pmatrix} = m^{1}_{1}m^{2}_{2}-m^{1}_{2}m^{2}_{1}\, .
\]
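The \(2\times 2\) formula is easy to check numerically. The following is a minimal sketch, assuming the entry \(m^{i}_{j}\) is stored as `m[i-1][j-1]` in a nested Python list; the names `det2`, `inverse2` and `matmul2` are hypothetical helpers introduced only for illustration. `fractions.Fraction` keeps the inverse exact.

```python
from fractions import Fraction

def det2(m):
    """det M = m^1_1 m^2_2 - m^1_2 m^2_1 for m = [[m11, m12], [m21, m22]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def inverse2(m):
    """Inverse from the 2x2 formula, or None when det M = 0 (M is singular)."""
    (a, b), (c, d) = m
    det = det2(m)
    if det == 0:
        return None  # the formula would divide by zero
    k = Fraction(1) / det
    return [[k * d, -k * b], [-k * c, k * a]]

def matmul2(x, y):
    """Product of two 2x2 matrices stored as nested lists."""
    return [[sum(x[r][t] * y[t][c] for t in range(2)) for c in range(2)]
            for r in range(2)]

M = [[2, 1], [7, 4]]
print(det2(M))                                       # 1
print(matmul2(M, inverse2(M)) == [[1, 0], [0, 1]])   # True
print(inverse2([[1, 2], [2, 4]]))                    # None, since 1*4 - 2*2 = 0
```

Returning `None` for a singular matrix mirrors the statement above: the inverse exists exactly when the determinant is nonzero.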

Example \(\PageIndex{1}\):

For a \(3\times 3\) matrix
\[M=\begin{pmatrix}
m^{1}_{1} & m^{1}_{2} & m^{1}_{3}\\
m^{2}_{1} & m^{2}_{2} & m^{2}_{3}\\
m^{3}_{1} & m^{3}_{2} & m^{3}_{3}
\end{pmatrix}\, ,\]
\(M\) is non-singular (see review question 1) if and only if

\[\det M = m^{1}_{1}m^{2}_{2}m^{3}_{3} - m^{1}_{1}m^{2}_{3}m^{3}_{2} + m^{1}_{2}m^{2}_{3}m^{3}_{1} - m^{1}_{2}m^{2}_{1}m^{3}_{3} + m^{1}_{3}m^{2}_{1}m^{3}_{2} - m^{1}_{3}m^{2}_{2}m^{3}_{1} \neq 0.\]

Notice that in the subscripts, each ordering of the numbers \(1\), \(2\), and \(3\) occurs exactly once. Each of these is a \(\textit{permutation}\) of the set \(\{1,2,3\}\).
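The observation about the subscripts can be checked mechanically. The sketch below (with a hypothetical helper `det3`, assuming \(m^{i}_{j}\) is stored as `m[i-1][j-1]`) types in the six-term formula exactly as written above, then confirms that the column subscripts of its six terms are precisely the six orderings produced by `itertools.permutations`.

```python
from itertools import permutations

def det3(m):
    """Six-term formula for det M of a 3x3 matrix; m[i-1][j-1] holds m^i_j."""
    return (m[0][0] * m[1][1] * m[2][2] - m[0][0] * m[1][2] * m[2][1]
            + m[0][1] * m[1][2] * m[2][0] - m[0][1] * m[1][0] * m[2][2]
            + m[0][2] * m[1][0] * m[2][1] - m[0][2] * m[1][1] * m[2][0])

# Column subscripts of the six terms, read in order from the formula above.
subscripts = [(1, 2, 3), (1, 3, 2), (2, 3, 1), (2, 1, 3), (3, 1, 2), (3, 2, 1)]

# Each ordering of 1, 2, 3 appears exactly once:
print(sorted(subscripts) == sorted(permutations((1, 2, 3))))  # True

print(det3([[1, 2, 3], [4, 5, 6], [7, 8, 10]]))  # -3, so this matrix is non-singular
print(det3([[1, 2, 3], [4, 5, 6], [7, 8, 9]]))   # 0, so this one is singular
```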

Permutations

Consider \(n\) objects labeled \(1\) through \(n\) and shuffle them. Each possible shuffle is called a \(\textit{permutation}\).

Here is an example of a permutation of the numbers \(1\) through \(5\):

\[
\sigma = \begin{bmatrix}
1 & 2 & 3 & 4 & 5 \\
4 & 2 & 5 & 1 & 3
\end{bmatrix}
\]
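In code, a two-row array like this can be stored by keeping only its bottom row, since the entry in position \(k\) is the number that \(k\) is sent to. A minimal sketch, assuming labels \(1,\dots,n\) stored in a 0-indexed Python list; `image_of`, `is_permutation` and `inverse` are hypothetical helper names.

```python
# Bottom row of sigma: position k (1-based) holds the image of k.
sigma = [4, 2, 5, 1, 3]

def image_of(perm, k):
    """Return the number that k is sent to, for k in {1, ..., n}."""
    return perm[k - 1]

def is_permutation(perm):
    """True if perm lists each of 1..n exactly once (a bijection of {1..n})."""
    return sorted(perm) == list(range(1, len(perm) + 1))

def inverse(perm):
    """Inverse permutation: if perm sends k to v, the inverse sends v back to k."""
    inv = [0] * len(perm)
    for k, v in enumerate(perm, start=1):
        inv[v - 1] = k
    return inv

print(image_of(sigma, 3))     # 5
print(is_permutation(sigma))  # True
print(inverse(sigma))         # [4, 2, 5, 1, 3]  (this sigma is its own inverse)
```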

We can consider a permutation \(\sigma\) as an invertible function from the set of numbers \([n] := \{1, 2, \dotsc, n\}\) to \([n]\), so we can write \(\sigma(3) = 5\) in the above example. In general we can write

\[\left[\!
\begin{array}{ccccc}
1 & 2 & 3 & 4 & 5 \\
\sigma(1) & \sigma(2) & \sigma(3) & \sigma(4) & \sigma(5)
\end{array}\!\right]\, ,
\]

but since the top line of any permutation is always the same, we can omit it and just write:

\[
\sigma = \begin{bmatrix}
\sigma(1) & \sigma(2) & \sigma(3) & \sigma(4) & \sigma(5)
\end{bmatrix}
\]

and so our example becomes simply \(\sigma = [4\, 2\, 5\, 1\, 3]\). The mathematics of permutations is extensive; there are a few key properties of permutations that we'll need:

- There are \(n!\) permutations of \(n\) distinct objects, since there are \(n\) choices for the first object, \(n-1\) choices for the second once the first has been chosen, and so on.

- Every permutation can be built up by successively swapping pairs of objects. For example, to build up the permutation \(\begin{bmatrix} 3 & 1 & 2 \end{bmatrix}\) from the trivial permutation \(\begin{bmatrix} 1 & 2 & 3 \end{bmatrix}\), you can first swap \(2\) and \(3\), and then swap \(1\) and \(3\).

- For any given permutation \(\sigma\), there is some number of swaps it takes to build up the permutation. (It's simplest to use the minimum number of swaps, but you don't have to: it turns out that \(\textit{any}\) way of building up the permutation from swaps uses a number of swaps with the same parity, either always even or always odd.) If this number is even, then \(\sigma\) is called an \(\textit{even permutation}\); if this number is odd, then \(\sigma\) is an \(\textit{odd permutation}\). For \(n\geq 2\), \(n!\) is even, and exactly half of the permutations are even and the other half are odd. It's worth noting that the trivial permutation (which sends \(i\rightarrow i\) for every \(i\)) is an even permutation, since it uses zero swaps.
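The swap-building procedure and the parity claims above can be tried out directly. This is a minimal sketch, assuming permutations are stored in one-line notation with values \(1,\dots,n\); the helper names `swaps_to_build` and `is_even` are hypothetical. The greedy strategy used here (fix positions left to right) is just one way to choose swaps, so for \([3\ 1\ 2]\) it finds a different pair of swaps than the text, but still two of them.

```python
from itertools import permutations
from math import factorial

def swaps_to_build(perm):
    """Return a list of value swaps turning the trivial permutation into perm.

    Works left to right: if position k does not yet hold perm[k], swap the
    value currently there with perm[k]. At most n - 1 swaps are used.
    """
    current = list(range(1, len(perm) + 1))
    swaps = []
    for k, target in enumerate(perm):
        if current[k] != target:
            j = current.index(target)
            swaps.append((current[k], target))  # the two values exchanged
            current[k], current[j] = current[j], current[k]
    assert current == list(perm)
    return swaps

def is_even(perm):
    """Even permutation: built from an even number of swaps."""
    return len(swaps_to_build(perm)) % 2 == 0

print(swaps_to_build([3, 1, 2]))  # [(1, 3), (2, 1)]: two swaps, so even

# n! permutations, half of them even and half odd (for n >= 2).
for n in range(2, 6):
    perms = list(permutations(range(1, n + 1)))
    evens = sum(is_even(p) for p in perms)
    print(n, len(perms) == factorial(n), evens, len(perms) - evens)
```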

Definition

The \(\textit{sign function}\) is a function \(\text{sgn}\) that sends permutations to the set \(\{-1,1\}\), defined by:

\[ \text{sgn}(\sigma) =
\left\{ \begin{array}{rl}
1 & \text{if } \sigma \text{ is even};\\
-1 & \text{if } \sigma \text{ is odd}.\end{array} \right.
\]
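Counting swaps is not the only way to compute \(\text{sgn}\). The sketch below uses a different standard technique, the cycle decomposition: a cycle of length \(L\) can be built from \(L-1\) swaps, so \(\text{sgn}(\sigma) = (-1)^{\,n - c}\), where \(c\) is the number of cycles (fixed points count as cycles of length one). It assumes one-line notation with values \(1,\dots,n\); the name `sgn` simply mirrors the text's notation.

```python
def sgn(perm):
    """Sign of a permutation given in one-line notation (values 1..n)."""
    n = len(perm)
    seen = [False] * n
    cycles = 0
    for start in range(n):
        if not seen[start]:
            cycles += 1          # found a new cycle; walk around it
            k = start
            while not seen[k]:
                seen[k] = True
                k = perm[k] - 1  # 0-based index of the image of k + 1
    return (-1) ** (n - cycles)

print(sgn([1, 2, 3]))        # 1  (trivial permutation, zero swaps)
print(sgn([2, 1, 3]))        # -1 (a single swap)
print(sgn([3, 1, 2]))        # 1  (one 3-cycle = two swaps)
print(sgn([4, 2, 5, 1, 3]))  # 1  (cycles (1 4)(2)(3 5))
```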

We can use permutations to give a definition of the determinant.

Definition

For an \(n \times n\) matrix \(M\), the \(\textit{determinant}\) of \(M\) (sometimes written \(|M|\)) is given by:

\[\det M = \sum\limits_{\sigma}\text{sgn}(\sigma)\,m^{1}_{\sigma(1)}m^{2}_{\sigma(2)}\cdots m^{n}_{\sigma(n)}\]

The sum is over all \(n!\) permutations \(\sigma\) of \([n]\). Each summand is a product of a single entry from each row, but with the column numbers shuffled by the permutation \(\sigma\). This description of the summands yields a nice property of the determinant:
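The permutation formula translates almost word for word into code. This is a minimal sketch, not an efficient algorithm: it loops over all \(n!\) permutations, so it is only practical for small \(n\). It assumes \(m^{i}_{j}\) is stored as `m[i-1][j-1]` and uses 0-based permutations from `itertools.permutations`. For the sign it counts inversions (pairs that appear out of order), another standard way to get the same parity as counting swaps.

```python
from itertools import permutations
from math import prod

def sgn(perm):
    """+1 or -1 according to the parity of the number of inversions."""
    n = len(perm)
    inversions = sum(1 for a in range(n) for b in range(a + 1, n)
                     if perm[a] > perm[b])
    return -1 if inversions % 2 else 1

def det(m):
    """Sum over sigma of sgn(sigma) * m^1_{sigma(1)} ... m^n_{sigma(n)}.

    p[i] is the (0-based) column picked from row i.
    """
    n = len(m)
    return sum(sgn(p) * prod(m[i][p[i]] for i in range(n))
               for p in permutations(range(n)))

print(det([[5]]))                               # 5
print(det([[2, 1], [7, 4]]))                    # 1, matching the 2x2 formula
print(det([[1, 2, 3], [4, 5, 6], [7, 8, 10]]))  # -3, matching the 3x3 formula
```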

Theorem

If \(M=(m^{i}_{j})\) has a row consisting entirely of zeros, say row \(i\), then \(m^{i}_{\sigma(i)}=0\) for every permutation \(\sigma\), so every summand in the determinant vanishes. Hence \(\det M=0\).
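The argument can be seen concretely by listing the unsigned summands for a matrix with a zero row: every product picks exactly one entry from that row, so each one is zero. A small sketch, assuming \(m^{i}_{j}\) is stored as `m[i-1][j-1]`; `summands` is a hypothetical helper name.

```python
from itertools import permutations

def summands(m):
    """Yield each product m^1_{sigma(1)} ... m^n_{sigma(n)} (sign omitted)."""
    n = len(m)
    for p in permutations(range(n)):
        term = 1
        for i in range(n):
            term *= m[i][p[i]]  # one entry from row i, column p[i]
        yield term

M = [[1, 2, 3], [0, 0, 0], [7, 8, 9]]  # row 2 is all zeros
print(list(summands(M)))  # [0, 0, 0, 0, 0, 0]
```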

Because there are \(n!\) permutations of \([n]\), writing the determinant this way for a general matrix gives a very long sum. For \(n=4\), there are \(24=4!\) permutations, and for \(n=5\), there are already \(120=5!\) permutations.

For a \(4\times 4\) matrix
\[M=\begin{pmatrix}
m^{1}_{1} & m^{1}_{2} & m^{1}_{3} & m^{1}_{4}\\
m^{2}_{1} & m^{2}_{2} & m^{2}_{3} & m^{2}_{4}\\
m^{3}_{1} & m^{3}_{2} & m^{3}_{3} & m^{3}_{4}\\
m^{4}_{1} & m^{4}_{2} & m^{4}_{3} & m^{4}_{4}
\end{pmatrix}\, ,\]
the determinant \(\det M\) is:

\begin{eqnarray*}
\det M &=&
m^{1}_{1}m^{2}_{2}m^{3}_{3}m^{4}_{4}
-m^{1}_{1}m^{2}_{3}m^{3}_{2}m^{4}_{4}
-m^{1}_{1}m^{2}_{2}m^{3}_{4}m^{4}_{3} \\
& -&m^{1}_{2}m^{2}_{1}m^{3}_{3}m^{4}_{4}
+m^{1}_{1}m^{2}_{3}m^{3}_{4}m^{4}_{2}
+m^{1}_{1}m^{2}_{4}m^{3}_{2}m^{4}_{3} \\
&+ & m^{1}_{2}m^{2}_{3}m^{3}_{1}m^{4}_{4}
+m^{1}_{2}m^{2}_{1}m^{3}_{4}m^{4}_{3}
\pm \text{16 more terms}.
\end{eqnarray*}

This is very cumbersome.
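Writing out all \(24\) terms by hand is tedious, but generating them is easy. The sketch below prints each signed summand as a string such as `m23` for \(m^{2}_{3}\) (a naming shortcut assumed here that is only unambiguous for \(n\le 9\)); `leibniz_terms` is a hypothetical helper, and the sign again comes from counting inversions.

```python
from itertools import permutations

def sgn(perm):
    """+1 or -1 according to the parity of the number of inversions."""
    n = len(perm)
    inv = sum(1 for a in range(n) for b in range(a + 1, n) if perm[a] > perm[b])
    return -1 if inv % 2 else 1

def leibniz_terms(n):
    """All n! signed summands of det M as strings like '+ m11 m22 m33 m44'."""
    terms = []
    for p in permutations(range(1, n + 1)):
        sign = "+" if sgn(p) == 1 else "-"
        factors = " ".join(f"m{i}{p[i - 1]}" for i in range(1, n + 1))
        terms.append(f"{sign} {factors}")
    return terms

terms = leibniz_terms(4)
print(len(terms))  # 24
for t in terms[:6]:
    print(t)
```

The eight terms displayed above all appear in this list with the same signs.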

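Luckily, it is very easy to compute the determinants of certain matrices. For example, if \(M\) is diagonal, then \(m^{i}_{j}=0\) whenever \(i\neq j\). Then every summand of the determinant that involves an off-diagonal entry vanishes. The only summand built from diagonal entries alone comes from the trivial permutation, whose sign is \(+1\), so: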

\[\det M = \sum_{\sigma} \text{sgn}(\sigma) m^{1}_{\sigma(1)}m^{2}_{\sigma(2)}\cdots m^{n}_{\sigma(n)}= m^{1}_{1}m^{2}_{2}\cdots m^{n}_{n}.\]

Thus: \(\textit{The determinant of a diagonal matrix is the product of its diagonal entries.}\)

Since the identity matrix is diagonal with all diagonal entries equal to one, we have:

\[\det I=1.\]
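For a diagonal matrix one can check which permutations give a nonzero product. In this sketch (storing \(m^{i}_{j}\) as `m[i-1][j-1]`; `nonzero_summands` and `identity` are hypothetical helper names), only the trivial permutation survives, so the determinant reduces to the product of the diagonal entries, and for the identity matrix that product is \(1\).

```python
from itertools import permutations
from math import prod

def nonzero_summands(m):
    """Return the (0-based) permutations whose product m^1_{s(1)}...m^n_{s(n)} is nonzero."""
    n = len(m)
    return [p for p in permutations(range(n))
            if prod(m[i][p[i]] for i in range(n)) != 0]

def identity(n):
    """The n x n identity matrix as nested lists."""
    return [[1 if r == c else 0 for c in range(n)] for r in range(n)]

D = [[2, 0, 0], [0, -3, 0], [0, 0, 5]]
print(nonzero_summands(D))              # [(0, 1, 2)]: only the trivial permutation
print(prod(D[i][i] for i in range(3)))  # -30 = det D

I4 = identity(4)
print(prod(I4[i][i] for i in range(4)))  # 1 = det I
```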

We would like to use the determinant to decide whether a matrix is invertible. Previously, we computed the inverse of a matrix by applying row operations. Therefore we ask what happens to the determinant when row operations are applied to a matrix.

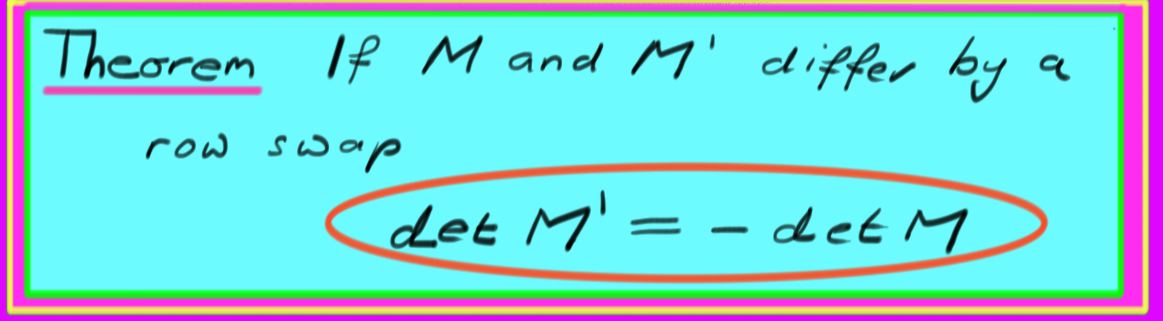

Remark: Swapping rows

Let's swap rows \(i\) and \(j\) of a matrix \(M\) and then compute its determinant. For a permutation \(\sigma\), let \(\hat{\sigma}\) be the permutation obtained by swapping positions \(i\) and \(j\), so that \(\hat{\sigma}(i)=\sigma(j)\) and \(\hat{\sigma}(j)=\sigma(i)\). Since \(\hat{\sigma}\) differs from \(\sigma\) by a single swap, \[\text{sgn}(\hat{\sigma})=-\text{sgn}(\sigma)\, .\] Let \(M'\) be the matrix \(M\) with rows \(i\) and \(j\) swapped. Then (assuming \(i<j\)):

\begin{eqnarray}
\det M' & = & \sum_{\sigma} \text{sgn}(\sigma)\, m^{1}_{\sigma(1)}\cdots m^{j}_{\sigma(i)}\cdots m^{i}_{\sigma(j)} \cdots m^{n}_{\sigma(n)} \nonumber \\
& = & \sum_{\sigma} \text{sgn}(\sigma) \,m^{1}_{\sigma(1)}\cdots m^{i}_{\sigma(j)}\cdots m^{j}_{\sigma(i)} \cdots m^{n}_{\sigma(n)} \nonumber \\
& = & \sum_{\sigma}(-\text{sgn}(\hat{\sigma})) \,m^{1}_{\hat{\sigma}(1)}\cdots m^{i}_{\hat{\sigma}(i)}\cdots m^{j}_{\hat{\sigma}(j)} \cdots m^{n}_{\hat{\sigma}(n)} \nonumber \\
& = & - \sum_{\hat{\sigma}} \text{sgn}(\hat{\sigma}) \,m^{1}_{\hat{\sigma}(1)}\cdots m^{i}_{\hat{\sigma}(i)}\cdots m^{j}_{\hat{\sigma}(j)} \cdots m^{n}_{\hat{\sigma}(n)} \nonumber \\
& = & -\det M.
\end{eqnarray}

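The step replacing \(\sum_{\sigma}\) by \(\sum_{\hat \sigma}\) often causes confusion; it holds because \(\sigma\mapsto\hat{\sigma}\) is a one-to-one correspondence on the set of all permutations, so as \(\sigma\) runs over every permutation, so does \(\hat{\sigma}\) (see review problem 3). Thus swapping rows changes the sign of the determinant, \(\textit{i.e.}\), \[\det M' = - \det M\, .\]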
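Both ingredients of this argument can be checked by brute force for small matrices: that \(\sigma\mapsto\hat{\sigma}\) hits every permutation exactly once and flips the sign, and that the determinant changes sign after a row swap. This is a sketch, assuming \(m^{i}_{j}\) is stored as `m[i-1][j-1]` with 0-based row indices in the code; `swap_rows` and `hat` are hypothetical helper names, and `det` is the brute-force permutation sum.

```python
from itertools import permutations
from math import prod

def sgn(perm):
    """+1 or -1 according to the parity of the number of inversions."""
    n = len(perm)
    inv = sum(1 for a in range(n) for b in range(a + 1, n) if perm[a] > perm[b])
    return -1 if inv % 2 else 1

def det(m):
    """Brute-force permutation formula for det M."""
    n = len(m)
    return sum(sgn(p) * prod(m[i][p[i]] for i in range(n))
               for p in permutations(range(n)))

def swap_rows(m, i, j):
    """Copy of m with rows i and j (0-based) exchanged."""
    m2 = [row[:] for row in m]
    m2[i], m2[j] = m2[j], m2[i]
    return m2

def hat(p, i, j):
    """sigma-hat: the permutation p with positions i and j swapped."""
    q = list(p)
    q[i], q[j] = q[j], q[i]
    return tuple(q)

M = [[1, 2, 3], [4, 5, 6], [7, 8, 10]]
M_prime = swap_rows(M, 0, 2)
print(det(M), det(M_prime))  # -3 3

perms = set(permutations(range(4)))
print({hat(p, 0, 2) for p in perms} == perms)             # True: a bijection
print(all(sgn(hat(p, 0, 2)) == -sgn(p) for p in perms))   # True: sign flips
```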

Applying this result to \(M=I\) (the identity matrix) yields

\[\det E^{i}_{j}=-1\, ,\]

where the matrix \(E^{i}_{j}\) is the identity matrix with rows \(i\) and \(j\) swapped; it is a row swap elementary matrix. This implies another nice property of the determinant. If two rows of a matrix \(M\) are identical, then swapping them changes the sign of the determinant but leaves the matrix unchanged. Hence \(\det M = -\det M\), which forces \(\det M = 0\). We have shown the following:

Theorem

If \(M\) has two identical rows, then \(\det M = 0\).
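A quick numerical check of \(\det E^{i}_{j}=-1\) and of this theorem, using the brute-force permutation sum. This is a sketch under the same storage assumption as before (\(m^{i}_{j}\) in `m[i-1][j-1]`); `identity` is a hypothetical helper name.

```python
from itertools import permutations
from math import prod

def sgn(perm):
    """+1 or -1 according to the parity of the number of inversions."""
    n = len(perm)
    inv = sum(1 for a in range(n) for b in range(a + 1, n) if perm[a] > perm[b])
    return -1 if inv % 2 else 1

def det(m):
    """Brute-force permutation formula for det M."""
    n = len(m)
    return sum(sgn(p) * prod(m[i][p[i]] for i in range(n))
               for p in permutations(range(n)))

def identity(n):
    """The n x n identity matrix as nested lists."""
    return [[1 if r == c else 0 for c in range(n)] for r in range(n)]

E = identity(4)
E[1], E[3] = E[3], E[1]  # E^2_4: identity with rows 2 and 4 swapped
print(det(E))            # -1

M = [[1, 2, 3], [4, 5, 6], [1, 2, 3]]  # rows 1 and 3 are identical
print(det(M))            # 0
```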

Contributor

David Cherney, Tom Denton, and Andrew Waldron (UC Davis)