5.7: The Kernel and Image of a Linear Map

- Last updated

- May 12, 2023


Outcomes

- Describe the kernel and image of a linear transformation, and find a basis for each.

In this section we will consider the case where the linear transformation is not necessarily an isomorphism. First consider the following important definition.

Definition 5.7.1: Kernel and Image

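Let \(V\) and \(W\) be subspaces of \(\mathbb{R}^n\) and let \(T: V \to W\) be a linear transformation. Then the image of \(T\), denoted \(\mathrm{im}(T)\), is defined to be the set \[\mathrm{im}(T) = \left\{ T(\vec{v}) : \vec{v} \in V \right\}\] In words, it consists of all vectors in \(W\) which equal \(T(\vec{v})\) for some \(\vec{v} \in V\).

The kernel of \(T\), written \(\mathrm{ker}(T)\), consists of all \(\vec{v} \in V\) such that \(T(\vec{v}) = \vec{0}\). That is, \[\mathrm{ker}(T) = \left\{ \vec{v} \in V : T(\vec{v}) = \vec{0} \right\}\]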
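The definition can be tried out numerically. The following is a minimal sketch, assuming the transformation is given by multiplication by a matrix; the matrix `A` and the helper names `apply` and `in_kernel` are hypothetical and chosen only for illustration.

```python
def apply(matrix, v):
    """Return the matrix-vector product, i.e. T(v) when T(x) = matrix * x."""
    return [sum(a * x for a, x in zip(row, v)) for row in matrix]

def in_kernel(matrix, v):
    """v lies in ker(T) exactly when T(v) is the zero vector."""
    return all(entry == 0 for entry in apply(matrix, v))

# Hypothetical map T: R^3 -> R^2, used only as an illustration.
A = [[1, 2, 0],
     [0, 0, 1]]

print(in_kernel(A, [2, -1, 0]))  # T(v) = (1*2 + 2*(-1), 0) = (0, 0)
print(in_kernel(A, [1, 0, 0]))   # T(v) = (1, 0), which is not zero
print(apply(A, [1, 1, 1]))       # some vector of im(T), since it equals T(v)
```

Every output of `apply` is in the image by definition, while membership in the kernel requires checking that the output is zero.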

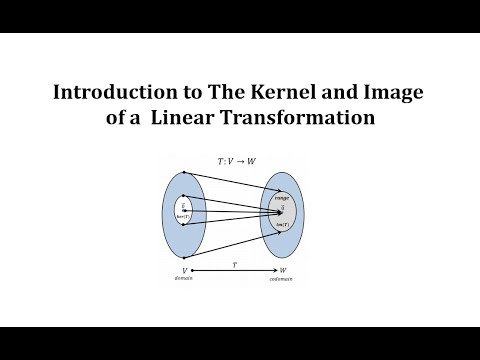


It follows that im(T) and ker(T) are subspaces of W and V respectively.

Proposition 5.7.1: Kernel and Image as Subspaces

Let V,W be subspaces of Rn and let T:V→W be a linear transformation. Then ker(T) is a subspace of V and im(T) is a subspace of W.

- Proof

-

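First consider \(\mathrm{ker}(T)\). It is necessary to show that if \(\vec{v}_1, \vec{v}_2\) are vectors in \(\mathrm{ker}(T)\) and if \(a, b\) are scalars, then \(a\vec{v}_1 + b\vec{v}_2\) is also in \(\mathrm{ker}(T)\). But \[T(a\vec{v}_1 + b\vec{v}_2) = aT(\vec{v}_1) + bT(\vec{v}_2) = a\vec{0} + b\vec{0} = \vec{0}\]

Thus \(\mathrm{ker}(T)\) is a subspace of \(V\).

Next suppose \(T(\vec{v}_1), T(\vec{v}_2)\) are two vectors in \(\mathrm{im}(T)\). Then if \(a, b\) are scalars, \[aT(\vec{v}_1) + bT(\vec{v}_2) = T(a\vec{v}_1 + b\vec{v}_2)\] and this last vector is in \(\mathrm{im}(T)\) by definition. Thus \(\mathrm{im}(T)\) is a subspace of \(W\).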

We will now examine how to find the kernel and image of a linear transformation and describe the basis of each.

Example 5.7.1: Kernel and Image of a Linear Transformation

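Let \(T: \mathbb{R}^4 \to \mathbb{R}^2\) be defined by

\[T \left[ \begin{array}{c} a \\ b \\ c \\ d \end{array} \right] = \left[ \begin{array}{c} a - b \\ c + d \end{array} \right]\]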

Then T is a linear transformation. Find a basis for ker(T) and im(T).

Solution

You can verify that T is a linear transformation.

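First we will find a basis for \(\mathrm{ker}(T)\). To do so, we want to find a way to describe all vectors \(\vec{x} \in \mathbb{R}^4\) such that \(T(\vec{x}) = \vec{0}\). Let \(\vec{x} = \left[ \begin{array}{c} a \\ b \\ c \\ d \end{array} \right]\) be such a vector. Then

\[T \left[ \begin{array}{c} a \\ b \\ c \\ d \end{array} \right] = \left[ \begin{array}{c} a - b \\ c + d \end{array} \right] = \left[ \begin{array}{c} 0 \\ 0 \end{array} \right]\]

The values of \(a, b, c, d\) that make this true are given by solutions to the system

\[\begin{array}{c} a - b = 0 \\ c + d = 0 \end{array}\]

The solution to this system is \(a = s, b = s, c = t, d = -t\) where \(s, t\) are scalars. We can describe \(\mathrm{ker}(T)\) as follows.

\[\mathrm{ker}(T) = \left\{ \left[ \begin{array}{r} s \\ s \\ t \\ -t \end{array} \right] \right\} = \mathrm{span} \left\{ \left[ \begin{array}{r} 1 \\ 1 \\ 0 \\ 0 \end{array} \right], \left[ \begin{array}{r} 0 \\ 0 \\ 1 \\ -1 \end{array} \right] \right\}\]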

Notice that this set is linearly independent and therefore forms a basis for ker(T).

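We move on to finding a basis for \(\mathrm{im}(T)\). We can write the image of \(T\) as \[\mathrm{im}(T) = \left\{ \left[ \begin{array}{c} a - b \\ c + d \end{array} \right] \right\}\]

Since \(\left[ \begin{array}{c} a - b \\ c + d \end{array} \right] = a \left[ \begin{array}{c} 1 \\ 0 \end{array} \right] + b \left[ \begin{array}{r} -1 \\ 0 \end{array} \right] + c \left[ \begin{array}{c} 0 \\ 1 \end{array} \right] + d \left[ \begin{array}{c} 0 \\ 1 \end{array} \right]\), we can write this in the form \[\mathrm{im}(T) = \mathrm{span} \left\{ \left[ \begin{array}{c} 1 \\ 0 \end{array} \right], \left[ \begin{array}{r} -1 \\ 0 \end{array} \right], \left[ \begin{array}{c} 0 \\ 1 \end{array} \right], \left[ \begin{array}{c} 0 \\ 1 \end{array} \right] \right\}\]

This set is clearly not linearly independent. By removing unnecessary vectors from the set we can create a linearly independent set with the same span. This gives a basis for \(\mathrm{im}(T)\) as \[\mathrm{im}(T) = \mathrm{span} \left\{ \left[ \begin{array}{c} 1 \\ 0 \end{array} \right], \left[ \begin{array}{c} 0 \\ 1 \end{array} \right] \right\}\]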
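The hand computation in Example 5.7.1 can be cross-checked by row reduction. The sketch below is one possible approach, not the method used in the text: it assumes \(T\) is represented by the matrix with rows \((1, -1, 0, 0)\) and \((0, 0, 1, 1)\), and the helper names `rref`, `kernel_basis`, and `image_basis` are hypothetical.

```python
from fractions import Fraction

def rref(matrix):
    """Return (reduced row echelon form, list of pivot column indices)."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    pivots, r = [], 0
    for c in range(len(rows[0])):
        # Find a row at or below r with a nonzero entry in column c.
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        lead = rows[r][c]
        rows[r] = [x / lead for x in rows[r]]
        # Clear column c in every other row.
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                factor = rows[i][c]
                rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
        if r == len(rows):
            break
    return rows, pivots

def kernel_basis(matrix):
    """One basis vector of ker(T) per free (non-pivot) column."""
    R, pivots = rref(matrix)
    n = len(matrix[0])
    basis = []
    for f in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[f] = Fraction(1)
        for row_index, p in enumerate(pivots):
            v[p] = -R[row_index][f]
        basis.append(v)
    return basis

def image_basis(matrix):
    """The pivot columns of the original matrix form a basis of im(T)."""
    _, pivots = rref(matrix)
    return [[row[p] for row in matrix] for p in pivots]

A = [[1, -1, 0, 0],
     [0, 0, 1, 1]]
ker = kernel_basis(A)
im = image_basis(A)
print(ker)
print(im)
```

With this choice of free variables the second kernel vector comes out as the negative of the one found by hand, which spans the same subspace.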

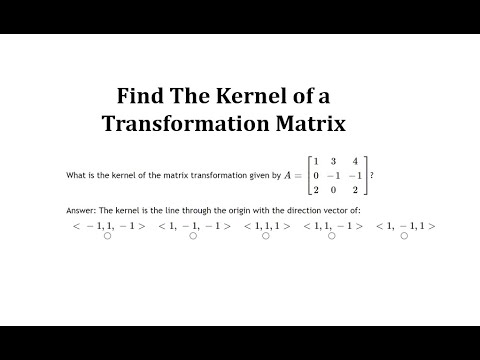


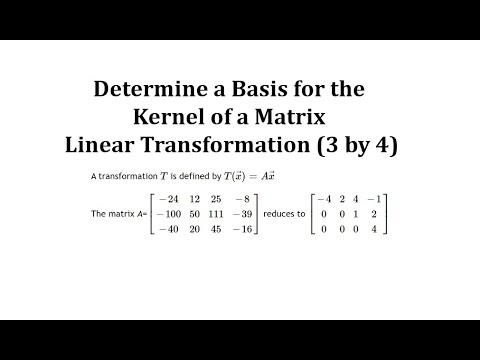


Recall that a linear transformation \(T\) is called one to one if and only if \(T(\vec{x}) = \vec{0}\) implies \(\vec{x} = \vec{0}\). Using the concept of kernel, we can state this theorem in another way.

Theorem 5.7.1: One to One and Kernel

Let T be a linear transformation where ker(T) is the kernel of T. Then T is one to one if and only if ker(T) consists of only the zero vector.

A major result is the relation between the dimension of the kernel and the dimension of the image of a linear transformation. In Example 5.7.1, \(\mathrm{ker}(T)\) had dimension 2, and \(\mathrm{im}(T)\) also had dimension 2. Is it a coincidence that the dimension of \(\mathbb{R}^4\) is \(4 = 2 + 2\)? Consider the following theorem.

Theorem 5.7.2: Dimension of Kernel and Image

Let T:V→W be a linear transformation where V,W are subspaces of Rn. Suppose the dimension of V is m. Then m=dim(ker(T))+dim(im(T))

- Proof

-

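From Proposition 5.7.1, \(\mathrm{im}(T)\) is a subspace of \(W\). We know that there exists a basis for \(\mathrm{im}(T)\), \(\left\{ T(\vec{v}_1), \cdots, T(\vec{v}_r) \right\}\). Similarly, there is a basis for \(\mathrm{ker}(T)\), \(\left\{ \vec{u}_1, \cdots, \vec{u}_s \right\}\). Then if \(\vec{v} \in V\), there exist scalars \(c_i\) such that \[T(\vec{v}) = \sum_{i=1}^{r} c_i T(\vec{v}_i)\] Hence \(T\left( \vec{v} - \sum_{i=1}^{r} c_i \vec{v}_i \right) = \vec{0}\). It follows that \(\vec{v} - \sum_{i=1}^{r} c_i \vec{v}_i\) is in \(\mathrm{ker}(T)\). Hence there are scalars \(a_j\) such that \[\vec{v} - \sum_{i=1}^{r} c_i \vec{v}_i = \sum_{j=1}^{s} a_j \vec{u}_j\] Hence \(\vec{v} = \sum_{i=1}^{r} c_i \vec{v}_i + \sum_{j=1}^{s} a_j \vec{u}_j\). Since \(\vec{v}\) is arbitrary, it follows that \[V = \mathrm{span} \left\{ \vec{u}_1, \cdots, \vec{u}_s, \vec{v}_1, \cdots, \vec{v}_r \right\}\]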

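If the vectors \(\left\{ \vec{u}_1, \cdots, \vec{u}_s, \vec{v}_1, \cdots, \vec{v}_r \right\}\) are linearly independent, then it will follow that this set is a basis. Suppose then that \[\sum_{i=1}^{r} c_i \vec{v}_i + \sum_{j=1}^{s} a_j \vec{u}_j = \vec{0}\] Apply \(T\) to both sides to obtain \[\sum_{i=1}^{r} c_i T(\vec{v}_i) + \sum_{j=1}^{s} a_j T(\vec{u}_j) = \sum_{i=1}^{r} c_i T(\vec{v}_i) = \vec{0}\] where the second sum vanishes because each \(\vec{u}_j\) is in \(\mathrm{ker}(T)\). Since \(\left\{ T(\vec{v}_1), \cdots, T(\vec{v}_r) \right\}\) is linearly independent, it follows that each \(c_i = 0\). Hence \(\sum_{j=1}^{s} a_j \vec{u}_j = \vec{0}\) and so, since the \(\left\{ \vec{u}_1, \cdots, \vec{u}_s \right\}\) are linearly independent, it follows that each \(a_j = 0\) also. Therefore \(\left\{ \vec{u}_1, \cdots, \vec{u}_s, \vec{v}_1, \cdots, \vec{v}_r \right\}\) is a basis for \(V\) and so \[m = s + r = \dim(\mathrm{ker}(T)) + \dim(\mathrm{im}(T))\]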

The above theorem leads to the next corollary.

Corollary 5.7.1

Let \(T: V \to W\) be a linear transformation where \(V, W\) are subspaces of \(\mathbb{R}^n\). Suppose the dimension of \(V\) is \(m\). Then \[\dim(\mathrm{ker}(T)) \leq m\] \[\dim(\mathrm{im}(T)) \leq m\]

This follows directly from the fact that \(m = \dim(\mathrm{ker}(T)) + \dim(\mathrm{im}(T))\) and that both dimensions are nonnegative.
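Theorem 5.7.2 also gives a practical shortcut: once the dimension of the image is known, the dimension of the kernel is determined, and Theorem 5.7.1 then decides whether the map is one to one. The sketch below assumes the transformation is multiplication by a matrix with domain all of \(\mathbb{R}^m\), so that \(m\) is the number of columns. The function names and the matrix `B` are hypothetical.

```python
from fractions import Fraction

def rank(matrix):
    """dim(im(T)) computed by Gaussian elimination with exact arithmetic."""
    rows = [[Fraction(x) for x in row] for row in matrix]
    r = 0
    for c in range(len(rows[0])):
        p = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if p is None:
            continue
        rows[r], rows[p] = rows[p], rows[r]
        # Eliminate the entries below the pivot.
        for i in range(r + 1, len(rows)):
            factor = rows[i][c] / rows[r][c]
            rows[i] = [x - factor * y for x, y in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r

def nullity(matrix):
    """dim(ker(T)) = m - dim(im(T)), where m is the number of columns."""
    return len(matrix[0]) - rank(matrix)

def is_one_to_one(matrix):
    """By Theorem 5.7.1, T is one to one exactly when ker(T) = {0}."""
    return nullity(matrix) == 0

A = [[1, -1, 0, 0],
     [0, 0, 1, 1]]          # matrix of T from Example 5.7.1
B = [[1, 2],
     [3, 4],
     [5, 6]]                # hypothetical map from R^2 to R^3

print(rank(A), nullity(A), is_one_to_one(A))
print(rank(B), nullity(B), is_one_to_one(B))
```

Here `nullity` applies the theorem and does not verify it; an independent check would compute a kernel basis directly and count its vectors.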

Consider the following example.

Example 5.7.2

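Let \(T: \mathbb{R}^2 \to \mathbb{R}^3\) be defined by \[T(\vec{x}) = \left[ \begin{array}{rr} 1 & 0 \\ 1 & 0 \\ 0 & 1 \end{array} \right] \vec{x}\] Then \(\mathrm{im}(T) = V\) is a subspace of \(\mathbb{R}^3\) and \(T\) is an isomorphism of \(\mathbb{R}^2\) and \(V\). Find a \(2 \times 3\) matrix \(A\) such that the restriction of multiplication by \(A\) to \(V = \mathrm{im}(T)\) equals \(T^{-1}\).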

Solution

Since the two columns of the above matrix are linearly independent, we conclude that dim(im(T))=2 and therefore dim(ker(T))=2−dim(im(T))=2−2=0 by Theorem 5.7.2. Then by Theorem 5.7.1 it follows that T is one to one.

Thus \(T\) is an isomorphism of \(\mathbb{R}^2\) and the two dimensional subspace of \(\mathbb{R}^3\) which is the span of the columns of the given matrix. Now in particular, \[T(\vec{e}_1) = \left[ \begin{array}{c} 1 \\ 1 \\ 0 \end{array} \right],\ T(\vec{e}_2) = \left[ \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \right]\]

Thus \[T^{-1}\left[ \begin{array}{c} 1 \\ 1 \\ 0 \end{array} \right] = \vec{e}_1,\ T^{-1}\left[ \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \right] = \vec{e}_2\]

Extend \(T^{-1}\) to all of \(\mathbb{R}^3\) by defining \[T^{-1}\left[ \begin{array}{c} 0 \\ 1 \\ 0 \end{array} \right] = \vec{e}_1\] Notice that the vectors \[\left\{ \left[ \begin{array}{c} 1 \\ 1 \\ 0 \end{array} \right], \left[ \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \right], \left[ \begin{array}{c} 0 \\ 1 \\ 0 \end{array} \right] \right\}\] are linearly independent, so \(T^{-1}\) can be extended linearly to yield a linear transformation defined on \(\mathbb{R}^3\). The matrix of \(T^{-1}\), denoted as \(A\), needs to satisfy \[A\left[ \begin{array}{rrr} 1 & 0 & 0 \\ 1 & 0 & 1 \\ 0 & 1 & 0 \end{array} \right] = \left[ \begin{array}{rrr} 1 & 0 & 1 \\ 0 & 1 & 0 \end{array} \right]\] and so \[A = \left[ \begin{array}{rrr} 1 & 0 & 1 \\ 0 & 1 & 0 \end{array} \right] \left[ \begin{array}{rrr} 1 & 0 & 0 \\ 1 & 0 & 1 \\ 0 & 1 & 0 \end{array} \right]^{-1} = \left[ \begin{array}{rrr} 0 & 1 & 0 \\ 0 & 0 & 1 \end{array} \right]\]

Note that \[\left[ \begin{array}{rrr} 0 & 1 & 0 \\ 0 & 0 & 1 \end{array} \right] \left[ \begin{array}{c} 1 \\ 1 \\ 0 \end{array} \right] = \left[ \begin{array}{c} 1 \\ 0 \end{array} \right]\] \[\left[ \begin{array}{rrr} 0 & 1 & 0 \\ 0 & 0 & 1 \end{array} \right] \left[ \begin{array}{c} 0 \\ 0 \\ 1 \end{array} \right] = \left[ \begin{array}{c} 0 \\ 1 \end{array} \right]\] so the restriction to \(V\) of matrix multiplication by this matrix yields \(T^{-1}\).
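The matrix found in Example 5.7.2 can be checked with a few lines of exact integer arithmetic. This is a sketch with hypothetical names: `T_matrix` holds the given \(3 \times 2\) matrix, `M` holds the three chosen vectors as columns, and `A_alt` is an alternative extension added here for illustration that does not appear in the text.

```python
def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))]
            for i in range(len(X))]

T_matrix = [[1, 0],
            [1, 0],
            [0, 1]]
M = [[1, 0, 0],
     [1, 0, 1],
     [0, 1, 0]]          # columns: T(e1), T(e2), and the extra vector (0, 1, 0)
A = [[0, 1, 0],
     [0, 0, 1]]          # the matrix found in the example

# A sends the three chosen vectors to e1, e2, e1 respectively.
print(matmul(A, M))

# A undoes T: A * T_matrix is the 2x2 identity, so A acts as the inverse on im(T).
print(matmul(A, T_matrix))

# Illustration only: sending (0, 1, 0) to the zero vector instead gives a
# different matrix that also restricts to the inverse on im(T).
A_alt = [[1, 0, 0],
         [0, 0, 1]]
print(matmul(A_alt, T_matrix))
```

The second product being the identity is exactly the statement that multiplication by \(A\), restricted to \(V\), equals \(T^{-1}\). The last check suggests that the answer depends on how \(T^{-1}\) is extended beyond \(V\).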
