4.1: Antiderivatives and Indefinite Integrals

\( \newcommand{\lt}{<} \) \( \newcommand{\gt}{>} \)


At this point, we have seen how to calculate derivatives of many functions and have been introduced to a variety of their applications. We now ask a question that turns this process around: Given a function \(f\), how do we find a function with the derivative \(f\), and why would we be interested in such a function?

We answer the first part of this question by defining antiderivatives. An antiderivative of a function \(f\) is a function whose derivative is \(f\). The need for antiderivatives arises in many situations, and we look at various examples throughout the remainder of the text. Here, we examine one specific example that involves rectilinear motion.

In our examination of derivatives, we showed that given a position function, \(s(t)\), of an object, its velocity function, \(v(t)\), is the derivative of \(s(t)\). That is, \(v(t)=s^{\prime}(t)\). Furthermore, the acceleration, \(a(t)\), is the derivative of the velocity \(v(t)\). That is, \(a(t)=v^{\prime}(t)=s^{\prime\prime}(t)\). Now, suppose we are given an acceleration function \(a\) but not the velocity function \(v\) or the position function \(s\). Since \(a(t)=v^{\prime}(t)\), determining the velocity function requires us to find an antiderivative of the acceleration function. Since \(v(t)=s^{\prime}(t)\), determining the position function requires us to find an antiderivative of the velocity function.

Rectilinear motion is just one case where the need for antiderivatives arises. We will see many more examples throughout the remainder of the text. Let’s look at the terminology and notation for antiderivatives and determine the antiderivatives for several functions. We examine techniques for finding antiderivatives of more complicated functions later in the text.

The Reverse of Differentiation

At this point, we know how to find derivatives of various functions. We now ask the opposite question. Given a function \(f\), how can we find a function with derivative \(f\)? If we can find a function \(F\) with derivative \(f\), we call \(F\) an antiderivative of \(f\).

A function \(F\) is an antiderivative of the function \(f\) if\[F^{\prime}(x)=f(x) \nonumber \]for all \(x\) in the domain of \(f\).

Consider the function \(f(x)=2x\). Knowing the Power Rule of differentiation, we conclude that \(F(x)=x^2\) is an antiderivative of \(f\) since \(F^{\prime}(x)=2x\).

Are there any other antiderivatives of \(f\)?

Yes; since the derivative of any constant \(C\) is zero, \(x^2+C\) is also an antiderivative of \(2x\). Therefore, \(x^2+5\) and \(x^2−\sqrt{2}\) are also antiderivatives of \( f(x) = 2x \).

Are there any others that are not of the form \(x^2+C\) for some constant \(C\)?

The answer is no. From the corollary following the Mean Value Theorem, we know that if \(F\) and \(G\) are differentiable functions such that \(F^{\prime}(x)=G^{\prime}(x)\) for all \(x\) in an interval \(I\), then \(F(x)−G(x)=C\) on \(I\) for some constant \(C\). This fact leads to the following important theorem.

Let \(F\) be an antiderivative of \(f\) over an interval \(I\). Then,

- for each constant \(C\), the function \(F(x)+C\) is also an antiderivative of \(f\) over \(I\);

- if \(G\) is an antiderivative of \(f\) over \(I\), there is a constant \(C\) for which \(G(x)=F(x)+C\) over \(I\).

In other words, the most general form of the antiderivative of \(f\) over \(I\) is \(F(x)+C\).

We use this fact and our knowledge of derivatives to find all the antiderivatives for several functions.

For each of the following functions, find all antiderivatives.

- \(f(x)=3x^2\)

- \(f(x)=\frac{1}{x}\)

- \(f(x)=\cos x\)

- \(f(x)=e^x\)

Solutions

- Because\[\dfrac{d}{dx}\left(x^3\right)=3x^2, \nonumber \]\(F(x)=x^3\) is an antiderivative of \(3x^2\). Therefore, every antiderivative of \(3x^2\) is of the form \(x^3+C\) for some constant \(C\), and every function of the form \(x^3+C\) is an antiderivative of \(3x^2\).

- Let \(F(x)=\ln |x|\). For \(x>0,\; F(x)=\ln |x|=\ln (x)\) and\[\dfrac{d}{dx} \left( \ln x \right) =\dfrac{1}{x}. \nonumber \]For \(x<0,\; F(x)=\ln |x|=\ln (−x)\) and\[\dfrac{d}{dx} \left( \ln (−x) \right) =−\dfrac{1}{−x}=\dfrac{1}{x}. \nonumber \]Therefore,\[\dfrac{d}{dx} \left( \ln |x| \right) =\dfrac{1}{x}. \nonumber \]Thus, \(F(x)=\ln |x|\) is an antiderivative of \(\frac{1}{x}\). Therefore, every antiderivative of \(\frac{1}{x}\) is of the form \(\ln |x|+C\) for some constant \(C\) and every function of the form \(\ln |x|+C\) is an antiderivative of \(\frac{1}{x}\).

- We have\[\dfrac{d}{dx} \left( \sin x \right) =\cos x, \nonumber \]so \(F(x)=\sin x\) is an antiderivative of \(\cos x\). Therefore, every antiderivative of \(\cos x\) is of the form \(\sin x+C\) for some constant \(C\) and every function of the form \(\sin x+C\) is an antiderivative of \(\cos x\).

- Since\[\dfrac{d}{dx}\left(e^x\right)=e^x, \nonumber \]\(F(x)=e^x\) is an antiderivative of \(e^x\). Therefore, every antiderivative of \(e^x\) is of the form \(e^x+C\) for some constant \(C\) and every function of the form \(e^x+C\) is an antiderivative of \(e^x\).

Consider the function \( f(x) = \frac{1}{x} \). In Example \( \PageIndex{ 1b } \), we claimed that the most general antiderivative of \( f(x) \) was \( F(x) = \ln(|x|) + C \). In truth, however, because the domain of \( F(x) \) has a break at \( x = 0 \), this antiderivative could technically be written as\[ F(x) = \left\{ \begin{array}{ll}
\ln(x) + C_1, & \text{ if } x \gt 0 \\[6pt]
\ln(-x) + C_2, & \text{ if } x \lt 0 \\[6pt]
\end{array} \right. \nonumber \]Likewise, if we consider the antiderivative of \( g(x) = -\csc^2(x) \), rather than writing \( G(x) = \cot(x) + C \) (I leave it to the reader to verify that \( G(x) \) is an antiderivative of \( g(x) \)), we could write \( G \) (with some slightly abusive index notation) as\[ G(x) = \left\{ \begin{array}{ll}
\cot(x) + C_1, & \text{ if } 0 \lt x \lt \pi \\[6pt]
\cot(x) + C_{-1}, & \text{ if } -\pi \lt x \lt 0 \\[6pt]
\cot(x) + C_2, & \text{ if } \pi \lt x \lt 2\pi \\[6pt]
\cot(x) + C_{-2}, & \text{ if } -2\pi \lt x \lt -\pi \\[6pt]
\vdots & \vdots \\[6pt]
\cot(x) + C_n, & \text{ if } (n - 1)\pi \lt x \lt n\pi \\[6pt]
\cot(x) + C_{-n}, & \text{ if } -n\pi \lt x \lt -(n - 1)\pi \\[6pt]
\vdots & \vdots \\[6pt]
\end{array} \right. \nonumber \]While both of these piecewise versions of the antiderivatives of \( f(x) \) and \( g(x) \) are correct, I hope the reader would agree that they are incredibly bulky. The convention adopted in this text (and all others I have encountered on the subject) is to collapse these down to \( F(x) = \ln(|x|) + C \) and \( G(x) = \cot(x) + C \), respectively, with the understanding that the constant \( C \) may take a different value on each of the disjoint intervals that make up the domain.
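As a rough numerical illustration of the piecewise form of \( \ln(|x|) + C \), the following Python sketch builds a function that uses one constant for \( x \gt 0 \) and a different one for \( x \lt 0 \), then estimates its slope with a central difference. The function names and the constants \(3\) and \(-7\) are arbitrary choices made for this sketch, not part of the text, and a numerical check of this kind is only evidence, not a proof.

```python
import math

def F(x, c_pos=3.0, c_neg=-7.0):
    """ln|x| plus a constant that differs on x > 0 and x < 0 (constants are arbitrary)."""
    if x == 0:
        raise ValueError("ln|x| and 1/x are undefined at x = 0")
    return math.log(abs(x)) + (c_pos if x > 0 else c_neg)

def central_difference(func, x, h=1e-6):
    """Approximate func'(x) with a symmetric difference quotient."""
    return (func(x + h) - func(x - h)) / (2 * h)

# On both sides of 0 the estimated slope should be close to 1/x,
# even though the two branches use different constants.
for x in (-4.0, -0.5, 0.5, 4.0):
    slope = central_difference(F, x)
    print(f"x = {x:5.1f}   F'(x) ~ {slope: .6f}   1/x = {1 / x: .6f}")
```

Changing `c_pos` or `c_neg` shifts each branch of the graph vertically but should leave the printed slopes essentially unchanged.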

Find all antiderivatives of \(f(x)=\sin x\).

Answer

\(F(x) = −\cos x+C\)

Indefinite Integrals

We now look at the formal notation used to represent antiderivatives and examine some of their properties. These properties allow us to find antiderivatives of more complicated functions. Given a function \(f\), we use the notation \(f^{\prime}(x)\) or \(\frac{df}{dx}\) to denote the derivative of \(f\). Here, we introduce notation for antiderivatives. If \(F\) is an antiderivative of \(f\), we say that \(F(x)+C\) is the most general antiderivative of \(f\) and write\[\int f(x)\,dx=F(x)+C.\nonumber \]The symbol \(\displaystyle \int \) is called an integral sign, and \(\displaystyle \int f(x)\,dx\) is called the indefinite integral of \(f\).

Given a function \(f\), the indefinite integral of \(f\), denoted\[\int f(x)\,dx, \nonumber \]is the most general antiderivative of \(f\). If \(F\) is an antiderivative of \(f\), then\[\int f(x)\,dx=F(x)+C. \nonumber \]The expression \(f(x)\) is called the integrand and the variable \(x\) is the variable of integration.

Given the terminology introduced in this definition, the act of finding the antiderivatives of a function \(f\) is usually referred to as integrating \(f\).

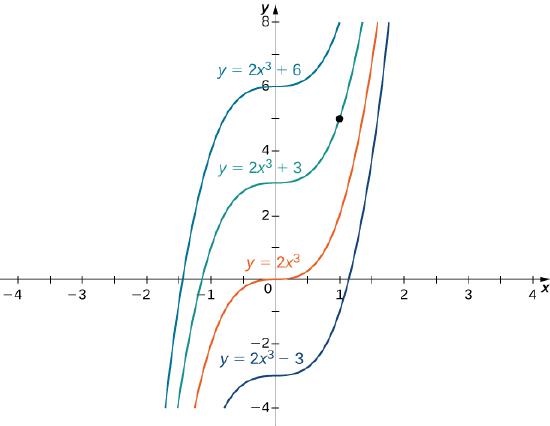

For a function \(f\) and an antiderivative \(F\), the set of functions \(F(x)+C\), where \(C\) is any real number, is often referred to as the family of antiderivatives of \(f\). For example, since \(x^2\) is an antiderivative of \(2x\) and any antiderivative of \(2x\) is of the form \(x^2+C\), we write\[\int 2x\,dx=x^2+C.\nonumber \]The collection of all functions of the form \(x^2+C\), where \(C\) is any real number, is known as the family of antiderivatives of \(2x\). Figure \(\PageIndex{1}\) shows a graph of this family of antiderivatives.

Figure \(\PageIndex{1}\): The family of antiderivatives of \(2x\) consists of all functions of the form \(x^2+C\), where \(C\) is any real number.

For some functions, evaluating indefinite integrals follows directly from properties of derivatives. For example, for \(n \neq −1\),\[\int x^n\,dx=\dfrac{x^{n+1}}{n+1}+C,\nonumber\]which comes directly from\[\dfrac{d}{dx}\left(\dfrac{x^{n+1}}{n+1}\right)=(n+1)\dfrac{x^n}{n+1}=x^n.\nonumber\]This fact is known as the Power Rule for integrals.

For \(n \neq −1\),\[\int x^n\,dx=\dfrac{x^{n+1}}{n+1}+C. \nonumber \]
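The Power Rule for integrals, together with its one excluded case, can be sketched in a few lines of Python. The helper name `power_antiderivative` is hypothetical, and the constant of integration is taken to be \(0\) so that a single concrete antiderivative is returned; for \(n=-1\) the sketch falls back on \(\ln |x|\), as found in Example \(\PageIndex{1b}\).

```python
import math

def power_antiderivative(n):
    """Return a function F with F'(x) = x**n, taking the constant of integration as 0."""
    if n == -1:
        # The Power Rule would divide by n + 1 = 0, so use ln|x| instead.
        return lambda x: math.log(abs(x))
    return lambda x: x ** (n + 1) / (n + 1)

F = power_antiderivative(3)       # F(x) = x^4 / 4
print(F(2.0))                     # prints 4.0

G = power_antiderivative(-1)      # G(x) = ln|x|
print(G(-10.0) == math.log(10.0)) # prints True
```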

Evaluating indefinite integrals for some other functions is also a straightforward calculation. The following table lists the indefinite integrals for several common functions.

| Differentiation Formula | Indefinite Integral |
|---|---|
| \(\dfrac{d}{dx} \left( k \right) =0\) | \(\displaystyle \int k\,dx=\int kx^0\,dx=kx+C\) |
| \(\dfrac{d}{dx} \left( x^n \right) =nx^{n−1}\) | \(\displaystyle \int x^n\,dx=\dfrac{x^{n+1}}{n+1}+C\) for \(n \neq −1\) |
| \(\dfrac{d}{dx} \left( \ln \lvert x \rvert \right) =\dfrac{1}{x}\) | \(\displaystyle \int \dfrac{1}{x}\,dx=\ln \lvert x \rvert+C\) |
| \(\dfrac{d}{dx} \left( e^x \right) =e^x\) | \(\displaystyle \int e^x\,dx=e^x+C\) |
| \( \dfrac{d}{dx} \left( b^x \right) = b^x \ln{(b)} \) | \( \displaystyle \int b^x \, dx = \dfrac{b^x}{\ln{(b)}} + C \) |
| \(\dfrac{d}{dx} \left( \sin x \right) =\cos x\) | \(\displaystyle \int \cos x\,dx=\sin x+C\) |
| \(\dfrac{d}{dx} \left( \cos x \right) =−\sin x\) | \(\displaystyle \int \sin x\,dx=−\cos x+C\) |
| \(\dfrac{d}{dx} \left( \tan x \right) =\sec^2 x\) | \(\displaystyle \int \sec^2 x\,dx=\tan x+C\) |
| \(\dfrac{d}{dx} \left( \csc x \right) =−\csc x\cot x\) | \(\displaystyle \int \csc x\cot x\,dx=−\csc x+C\) |
| \(\dfrac{d}{dx} \left( \sec x \right) =\sec x\tan x\) | \(\displaystyle \int \sec x\tan x\,dx=\sec x+C\) |
| \(\dfrac{d}{dx} \left( \cot x \right) =−\csc^2 x\) | \(\displaystyle \int \csc^2x\,dx=−\cot x+C\) |
| \(\dfrac{d}{dx} \left( \sin^{−1}x \right) =\dfrac{1}{\sqrt{1−x^2}}\) | \(\displaystyle \int \dfrac{1}{\sqrt{1−x^2}} \, dx =\sin^{−1}x+C\) |
| \(\dfrac{d}{dx} \left( \tan^{−1}x \right) =\dfrac{1}{1+x^2}\) | \(\displaystyle \int \dfrac{1}{1+x^2}\,dx=\tan^{−1}x+C\) |
| \( \dfrac{d}{dx} \left( \sec^{-1}x \right) = \dfrac{1}{x \sqrt{x^2 - 1}} \) | \( \displaystyle \int \dfrac{1}{x\sqrt{x^2 - 1}} \, dx = \sec^{-1}x + C \) |
| \(\dfrac{d}{dx} \left( \sinh{(x)} \right) = \cosh{(x)}\) | \( \displaystyle \int \cosh{(x)} \, dx = \sinh{(x)} + C \) |
| \( \dfrac{d}{dx} \left( \cosh{(x)} \right) = \sinh{(x)} \) | \( \displaystyle \int \sinh{(x)} \, dx = \cosh{(x)} + C \) |

Notice that not all derivatives we have learned are represented in this table. For example, we learned \( \frac{d}{dx} \left( \log_b{(x)} \right) = \frac{1}{x \ln{(b)}} \); however, the related indefinite integral is not listed. Moreover, most of the inverse trigonometric and hyperbolic functions are missing. This is because indefinite integrals involving these forms are rare. In the case of the inverse hyperbolic functions, once you find the antiderivative, you must convert it to a logarithm. This is not a pleasant process, but you will find a more efficient way to integrate these types of functions in Calculus II. Rather than making an already long table even longer, we leave it to the reader to reference the relevant sections in Chapter 3 to determine the antiderivatives of those functions, if needed.

From the definition of indefinite integral of \(f\), we know\[\int f(x)\,dx=F(x)+C\nonumber \]if and only if \(F\) is an antiderivative of \(f\).

Therefore, when claiming that\[\int f(x)\,dx=F(x)+C,\nonumber \]it is essential to check whether this statement is correct by verifying that \(F^{\prime}(x)=f(x)\).

Each of the following statements is of the form \(\displaystyle \int f(x)\,dx=F(x)+C\). Verify that each statement is correct by showing that \(F^{\prime}(x)=f(x)\).

- \(\displaystyle\int \left( x+e^x \right) \,dx=\frac{x^2}{2}+e^x+C\)

- \(\displaystyle\int xe^x\,dx=xe^x−e^x+C\)

Solution

- Since\[\dfrac{d}{dx}\left(\dfrac{x^2}{2}+e^x+C\right)=x+e^x,\nonumber\]the statement\[\int \left( x+e^x \right) \,dx=\dfrac{x^2}{2}+e^x+C \nonumber \]is correct.

Note that we are verifying an indefinite integral for a sum. Furthermore, \(\frac{x^2}{2}\) and \(e^x\) are antiderivatives of \(x\) and \(e^x\), respectively, and the sum of the antiderivatives is an antiderivative of the sum. We discuss this fact again later in this section.

- Using the Product Rule, we see that\[\dfrac{d}{dx}\left(xe^x−e^x+C\right)=e^x+xe^x−e^x=xe^x. \nonumber \]Therefore, the statement\[\int xe^x\,dx=xe^x−e^x+C \nonumber \]is correct.

Note that we are verifying an indefinite integral for a product. The antiderivative \(xe^x−e^x\) is not a product of the antiderivatives. Furthermore, the product of antiderivatives, \(x^2e^x/2\), is not an antiderivative of \(xe^x\) since\[\dfrac{d}{dx}\left(\dfrac{x^2e^x}{2}\right)=xe^x+\dfrac{x^2e^x}{2} \neq xe^x.\nonumber\]Generally, the product of antiderivatives is not an antiderivative of a product.
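The habit of checking a claimed antiderivative by differentiating it can also be imitated numerically. The sketch below is one possible approach, not a replacement for the symbolic verification: it compares a central-difference estimate of \(F^{\prime}(x)\) with \(f(x)\) at a few sample points for the integrand \(xe^x\) from Example \(\PageIndex{2b}\). The helper names, sample points, and tolerance are assumptions made for this illustration.

```python
import math

def central_difference(func, x, h=1e-6):
    """Approximate func'(x) with a symmetric difference quotient."""
    return (func(x + h) - func(x - h)) / (2 * h)

def looks_like_antiderivative(F, f, points, tol=1e-5):
    """Return True if the estimate of F'(x) is close to f(x) at every sample point."""
    return all(
        abs(central_difference(F, x) - f(x)) <= tol * max(1.0, abs(f(x)))
        for x in points
    )

f = lambda x: x * math.exp(x)                    # integrand x e^x
claimed = lambda x: x * math.exp(x) - math.exp(x)  # x e^x - e^x
product_guess = lambda x: x**2 * math.exp(x) / 2   # product of antiderivatives

samples = [-2.0, -0.5, 0.0, 1.0, 2.5]
print(looks_like_antiderivative(claimed, f, samples))        # prints True
print(looks_like_antiderivative(product_guess, f, samples))  # prints False
```

A result of `True` only says that the two functions agree to within the tolerance at the sampled points; the symbolic derivative remains the actual verification.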

Verify that \(\displaystyle \int x\cos x\,dx=x\sin x+\cos x+C\).

Answer

\(\frac{d}{dx} \left( x\sin x+\cos x+C \right) =\sin x+x\cos x−\sin x=x \cos x\)

In Table \(\PageIndex{1}\), we listed the indefinite integrals for many elementary functions. Let’s now consider evaluating indefinite integrals for more complicated functions. For example, consider finding an antiderivative of a sum \(f+g\). In Example \(\PageIndex{2a}\), we showed that an antiderivative of the sum \(x+e^x\) is given by the sum \(\frac{x^2}{2}+e^x\); that is, an antiderivative of a sum is given by a sum of antiderivatives. This result was not specific to this example. In general, if \(F\) and \(G\) are antiderivatives of any functions \(f\) and \(g\), respectively, then\[\dfrac{d}{dx} \left( F(x)+G(x) \right) =F^{\prime}(x)+G^{\prime}(x)=f(x)+g(x).\nonumber\]Therefore, \(F(x)+G(x)\) is an antiderivative of \(f(x)+g(x)\) and we have\[ \int \left( f(x)+g(x) \right) \,dx=F(x)+G(x)+C.\nonumber \]Similarly,\[ \int \left( f(x)−g(x) \right) \,dx=F(x)−G(x)+C.\nonumber \]In addition, consider the task of finding an antiderivative of \(kf(x)\), where \(k\) is any real number. Since\[ \dfrac{d}{dx} \left( kF(x) \right) =k\dfrac{d}{dx} \left( F(x) \right) =kF^{\prime}(x)=kf(x)\nonumber \]for any real number \(k\), we conclude that\[ \int kf(x)\,dx=kF(x)+C.\nonumber \]These properties are summarized next.

Let \(F\) and \(G\) be antiderivatives of \(f\) and \(g\), respectively, and let \(k\) be any real number.

Sums and Differences

\[\int \left( f(x) \pm g(x) \right) \,dx=F(x) \pm G(x)+C \nonumber \]

Constant Multiples

\[ \int kf(x)\,dx=kF(x)+C \nonumber \]

From this theorem, we can evaluate any integral involving a sum, difference, or constant multiple of functions with known antiderivatives. Evaluating integrals involving products, quotients, or compositions is more complicated. (See Example \(\PageIndex{2b}\) for an example involving an antiderivative of a product.) We address integrals involving these more complicated functions in Integral Calculus (Calculus II). In the next example, we examine how to use this theorem to calculate the indefinite integrals of several functions.

Evaluate each of the following indefinite integrals:

- \(\displaystyle \int \left( 5x^3−7x^2+3x+4 \right) \,dx\)

- \(\displaystyle \int \frac{x^2+4\sqrt[3]{x}}{x}\,dx\)

- \(\displaystyle \int \frac{4}{1+x^2}\,dx\)

- \(\displaystyle \int \tan x\cos x\,dx\)

Solutions

- Using Properties of Indefinite Integrals, we can integrate the four terms in the integrand separately. We obtain\[\int \left( 5x^3−7x^2+3x+4 \right) \,dx=\int 5x^3\,dx−\int 7x^2\,dx+\int 3x\,dx+\int 4\,dx.\nonumber\]From the second part of Properties of Indefinite Integrals, each coefficient can be written in front of the integral sign, which gives\[\int 5x^3\,dx−\int 7x^2\,dx+\int 3x\,dx+\int 4\,dx=5\int x^3\,dx−7\int x^2\,dx+3\int x\,dx+4\int 1\,dx.\nonumber\]Using the Power Rule for integrals, we conclude that\[\int \left( 5x^3−7x^2+3x+4 \right) \,dx=\dfrac{5}{4}x^4−\dfrac{7}{3}x^3+\dfrac{3}{2}x^2+4x+C.\nonumber\]

- Rewrite the integrand as\[\dfrac{x^2+4\sqrt[3]{x}}{x}=\dfrac{x^2}{x}+\dfrac{4\sqrt[3]{x}}{x}=x+\dfrac{4}{x^{2/3}}.\nonumber\]Then, to evaluate the integral, integrate each of these terms separately. Using the Power Rule, we have\[\begin{array}{rcl}
\int \left(x+\dfrac{4}{x^{2/3}}\right)\,dx & = & \int x\,dx+4\int x^{−2/3}\,dx\\[6pt]
& = & \dfrac{1}{2}x^2+4\dfrac{1}{\left(\tfrac{−2}{3}\right)+1}x^{(−2/3)+1}+C\\[6pt]
& = & \dfrac{1}{2}x^2+12x^{1/3}+C. \\[6pt]
\end{array}\nonumber\]

- Using Properties of Indefinite Integrals, write the integral as\[4 \int \dfrac{1}{1+x^2}\,dx.\nonumber\]Then, use the fact that \(\tan^{−1}(x)\) is an antiderivative of \(\frac{1}{1+x^2}\) to conclude that\[\displaystyle \int \dfrac{4}{1+x^2}\,dx=4\tan^{−1}(x)+C.\nonumber\]

- Rewrite the integrand as\[\tan x\cos x=\dfrac{\sin x}{\cos x}\cdot\cos x=\sin x.\nonumber\]Therefore,\[\displaystyle \int \tan x\cos x\,dx=\int \sin x\,dx=−\cos x+C.\nonumber\]
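The term-by-term work in the first two parts of this example combines the sum and constant-multiple properties with the Power Rule, and that procedure can be sketched in Python with exact fractions. The representation chosen here, a list of `(coefficient, exponent)` pairs, and the name `integrate_terms` are assumptions made for this illustration; the constant of integration \(C\) is left implicit.

```python
from fractions import Fraction

def integrate_terms(terms):
    """Integrate a sum of c * x**n terms given as (c, n) pairs with n != -1.

    Returns the antiderivative as a list of (coefficient, exponent) pairs.
    """
    result = []
    for c, n in terms:
        c, n = Fraction(c), Fraction(n)
        if n == -1:
            raise ValueError("x**(-1) integrates to ln|x|, not to a power of x")
        # Constant multiple property plus the Power Rule for this one term.
        result.append((c / (n + 1), n + 1))
    return result

# Part (a): 5x^3 - 7x^2 + 3x + 4
part_a = integrate_terms([(5, 3), (-7, 2), (3, 1), (4, 0)])
# Part (b): x + 4x^(-2/3)
part_b = integrate_terms([(1, 1), (4, Fraction(-2, 3))])

for coeff, exponent in part_a + part_b:
    print(f"({coeff}) x^({exponent})")
```

The pairs produced for part (a) correspond to \(\frac{5}{4}x^4−\frac{7}{3}x^3+\frac{3}{2}x^2+4x\), and those for part (b) to \(\frac{1}{2}x^2+12x^{1/3}\), matching the results obtained by hand.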

Evaluate \(\displaystyle \int \left( 4x^3−5x^2+x−7 \right) \,dx\).

Answer

\(\displaystyle \int \left( 4x^3−5x^2+x−7 \right) \,dx = x^4−\frac{5}{3}x^3+\frac{1}{2}x^2−7x+C\)

Initial-Value Problems

We look at techniques for integrating functions involving products, quotients, and compositions later in the text. Here, we turn to one common use of antiderivatives that arises in many applications: solving differential equations.

A differential equation is an equation that relates an unknown function and one or more of its derivatives. The equation\[\dfrac{dy}{dx}=f(x)\label{diffeq1} \]is a simple example of a differential equation. Solving this equation means finding a function \(y\) whose derivative is \(f\). Therefore, the solutions of Equation \ref{diffeq1} are the antiderivatives of \(f\). If \(F\) is one antiderivative of \( f\), every function of the form \( y=F(x)+C\) is a solution of that differential equation. For example, the solutions of\[\dfrac{dy}{dx}=6x^2\nonumber \]are given by\[y=\int 6x^2\,dx=2x^3+C.\nonumber \]Sometimes we are interested in determining whether a particular solution curve passes through a certain point \( (x_0,y_0)\), that is, whether \( y(x_0)=y_0\). The problem of finding a function \(y\) that satisfies a differential equation\[\dfrac{dy}{dx}=f(x)\nonumber\]with the additional condition \(y(x_0)=y_0\) is an example of an initial-value problem. The condition \( y(x_0)=y_0\) is known as an initial condition. For example, looking for a function \( y\) that satisfies the differential equation\[\dfrac{dy}{dx}=6x^2\nonumber\]and the initial condition \(y(1)=5\) is an example of an initial-value problem. Since the solutions of the differential equation are \( y=2x^3+C\), to find a function \(y\) that also satisfies the initial condition, we need to find \(C\) such that \(y(1)=2(1)^3+C=5\). This equation shows that \( C=3\), and we conclude that \( y=2x^3+3\) solves this initial-value problem, as shown in the following graph.

Figure \(\PageIndex{2}\): Some of the solution curves of the differential equation \(\frac{dy}{dx}=6x^2\) are displayed. The function \(y=2x^3+3\) satisfies the differential equation and the initial condition \(y(1)=5\).

Solve the initial-value problem\[\dfrac{dy}{dx}=\sin x,\quad y(0)=5.\nonumber \]

Solution

First, we need to solve the differential equation. If \(\frac{dy}{dx}=\sin x\), then\[y=\displaystyle \int \sin(x)\,dx=−\cos x+C.\nonumber \]Next, we need to look for a solution \(y\) that satisfies the initial condition. The initial condition \(y(0)=5\) means we need a constant \(C\) such that \(−\cos(0)+C=5\). Therefore,\[C=5+\cos(0)=6.\nonumber \]The solution of the initial-value problem is \(y=−\cos x+6\).
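Once one antiderivative \(F\) of \(f\) is known, the remaining step in an initial-value problem of the form \(\frac{dy}{dx}=f(x)\), \(y(x_0)=y_0\) is always the same: choose \(C=y_0−F(x_0)\). The following Python sketch carries out that step for the two problems worked in this section; the helper name `solve_initial_value` is hypothetical, and the antiderivative must still be supplied by hand.

```python
import math

def solve_initial_value(F, x0, y0):
    """Given one antiderivative F of f, return (C, y) with y(x) = F(x) + C and y(x0) = y0."""
    C = y0 - F(x0)
    return C, (lambda x: F(x) + C)

# dy/dx = 6x^2 with y(1) = 5, using F(x) = 2x^3
C1, y1 = solve_initial_value(lambda x: 2 * x**3, 1, 5)
print(C1, y1(1))   # prints 3 5

# dy/dx = sin x with y(0) = 5, using F(x) = -cos x
C2, y2 = solve_initial_value(lambda x: -math.cos(x), 0, 5)
print(C2, y2(0))   # prints 6.0 5.0
```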

Solve the initial value problem \(\frac{dy}{dx}=3x^{−2},\quad y(1)=2\).

Answer

\(y=−\frac{3}{x}+5\)

Initial-value problems arise in many applications. Next, we consider a situation where a driver applies brakes to a car. We are interested in how long it takes for the vehicle to stop. Recall that the velocity function \(v(t)\) is the derivative of a position function \(s(t)\), and the acceleration \(a(t)\) is the derivative of the velocity function. In earlier examples in the text, we could calculate the velocity from the position and then compute the acceleration from the velocity. In the following example, we work the other way around. Given an acceleration function, we calculate the velocity function. We then use the velocity function to determine the position function.

A car is traveling at the rate of \(88\) ft/sec (\(60\) mph) when the brakes are applied. The car begins decelerating at a constant rate of \(15 \, \text{ft/sec}^2\).

- How many seconds elapse before the car stops?

- How far does the car travel during that time?

Solutions

- First, we introduce variables for this problem. Let \(t\) be the time (in seconds) after the brakes are applied. Let \(a(t)\) be the acceleration of the car (in feet per second squared) at time \(t\). Let \(v(t)\) be the velocity of the car (in feet per second) at time \(t\). Let \(s(t)\) be the car’s position (in feet) beyond the point where the brakes are applied at time \(t\).

The car travels at a rate of \(88\) ft/sec. Therefore, the initial velocity is \(v(0)=88\) ft/sec. Since the car is decelerating, the acceleration is\[a(t)=−15\,\text{ft/sec}^2.\nonumber\]The acceleration is the derivative of the velocity,\[v^{\prime}(t)=-15.\nonumber\]Therefore, we have an initial-value problem to solve:\[v^{\prime}(t)=−15,\quad v(0)=88.\nonumber\]Integrating, we find that\[v(t)=−15t+C.\nonumber\]Since \(v(0)=88\), we have \(C=88\). Thus, the velocity function is\[v(t)=−15t+88.\nonumber\]To find how long it takes for the car to stop, we need to find the time \(t\) such that the velocity is zero. Solving \(−15t+88=0\), we obtain \(t=\frac{88}{15}\) sec.

- To find how far the car travels during this time, we need to find the position of the car after \(\frac{88}{15}\) sec. We know the velocity \(v(t)\) is the derivative of the position \(s(t)\). Consider the initial position as \(s(0)=0\). Therefore, we need to solve the initial-value problem\[s^{\prime}(t)=−15t+88,\quad s(0)=0.\nonumber\]Integrating, we have\[s(t)=−\dfrac{15}{2}t^2+88t+C.\nonumber\]Since \(s(0)=0\), the constant is \(C=0\). Therefore, the position function is\[s(t)=−\dfrac{15}{2}t^2+88t.\nonumber\]After \(t=\frac{88}{15}\) sec, the position is \(s\left(\frac{88}{15}\right) \approx 258.133\) ft.
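The two integrations in this example depend only on the initial speed and the constant deceleration, so the same steps can be packaged as a small Python function. This is a sketch under the assumptions of the example (constant deceleration, \(s(0)=0\)); the function name and the use of exact fractions are choices made for this illustration.

```python
from fractions import Fraction

def stopping_time_and_distance(v0, deceleration):
    """For constant deceleration from speed v0, return (time to stop, distance traveled).

    Uses v(t) = -deceleration * t + v0 and s(t) = -(deceleration / 2) * t**2 + v0 * t.
    """
    v0, k = Fraction(v0), Fraction(deceleration)
    if k <= 0:
        raise ValueError("deceleration must be positive for the car to stop")
    t_stop = v0 / k                              # solves v(t) = 0
    distance = -k / 2 * t_stop**2 + v0 * t_stop  # s(t_stop), with s(0) = 0
    return t_stop, distance

t, d = stopping_time_and_distance(88, 15)
print(t, d)                   # exact values: 88/15 and 3872/15
print(float(t), float(d))     # decimal approximations in seconds and feet
```

The exact distance \(\frac{3872}{15}\) ft agrees with the value \(\approx 258.133\) ft found above, and calling the function with a different initial speed repeats the same computation.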

Suppose the car is traveling at the rate of \(44\) ft/sec. How long does it take for the car to stop? How far will the car travel?

Answer

\(2.93\) sec, \(64.5\) ft