2.4.1: Invertibility of Linear Transformations

As with matrix multiplication, it is helpful to understand matrix inversion as an operation on linear transformations. Recall that the identity transformation on \(\mathbb{R}^n \) is denoted \(\mathcal{I }\).

A transformation \(T\colon\mathbb{R}^n \to\mathbb{R}^n \) is invertible if there exists a transformation \(U\colon\mathbb{R}^n \to\mathbb{R}^n \) such that \(T\circ U = \mathcal{I }\) and \(U\circ T = \mathcal{I }\). In this case, the transformation \(U\) is called the inverse of \(T\text{,}\) and we write \(U = T^{-1}\).

The inverse \(U\) of \(T\) “undoes” whatever \(T\) did. We have

\[ T\circ U(\vec{x}) = \vec{x} \quad\text{and}\quad U\circ T(\vec{x}) = \vec{x} \nonumber \]

for all vectors \(\vec{x}\). This means that if you apply \(T\) to \(\vec{x}\) and then apply \(U\text{,}\) you get the vector \(\vec{x}\) back, and likewise in the other order.

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = 2x\). This is an invertible transformation, with inverse \(g(x) = \dfrac{x}{2}\). Indeed,

\[ f\circ g(x) = f(g(x)) = f\biggl(\frac x2\biggr) = 2\biggl(\frac x2\biggr) = x \nonumber \]

and

\[ g\circ f(x) = g(f(x)) = g(2x) = \frac{2x}2 = x \nonumber \]

In other words, dividing by \(2\) undoes the transformation that multiplies by \(2\).

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = x^3\). This is an invertible transformation, with inverse \(g(x) = \sqrt[3]x\). Indeed,

\[ f\circ g(x) = f(g(x)) = f(\sqrt[3]x) = \bigl(\sqrt[3]x\bigr)^3 = x \nonumber \]

and

\[ g\circ f(x) = g(f(x)) = g(x^3) = \sqrt[3]{x^3} = x \nonumber \]

In other words, taking the cube root undoes the transformation that takes a number to its cube.
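The two invertible examples above can be spot-checked numerically by composing each function with its proposed inverse in both orders. This is a minimal sketch; the helper names `double`, `halve`, `cube`, `real_cbrt` and `undoes` are hypothetical, and `real_cbrt` is defined by hand because `x ** (1/3)` in Python returns a complex number when `x` is negative.

```python
import math

# f(x) = 2x and its inverse g(x) = x / 2
def double(x):
    return 2 * x

def halve(x):
    return x / 2

# f(x) = x^3 and its inverse, the real cube root (hand-rolled so negative inputs work)
def cube(x):
    return x ** 3

def real_cbrt(x):
    return math.copysign(abs(x) ** (1 / 3), x)

def undoes(f, g, samples):
    """Return True if f(g(x)) and g(f(x)) both equal x (up to rounding) for every sample."""
    return all(
        math.isclose(f(g(x)), x, rel_tol=1e-9, abs_tol=1e-12)
        and math.isclose(g(f(x)), x, rel_tol=1e-9, abs_tol=1e-12)
        for x in samples
    )

samples = [-8.0, -2.5, 0.0, 1.0, 3.0, 27.0]
print(undoes(double, halve, samples))    # True
print(undoes(cube, real_cbrt, samples))  # True
```

A finite sample can only illustrate the identities \(f\circ g(x) = x\) and \(g\circ f(x) = x\); the algebra above is what proves them for all \(x\).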

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = x^2\). This is not an invertible function. Indeed, we have \(f(2) = 4 = f(-2)\text{,}\) so there is no way to undo \(f\text{:}\) the inverse transformation would not know whether it should send \(4\) to \(2\) or to \(-2\). More formally, if \(g\colon \mathbb{R} \to\mathbb{R} \) satisfies \(g(f(x)) = x\) for all \(x\text{,}\) then

\[ 2 = g(f(2)) = g(4) \quad\text{and}\quad -2 = g(f(-2)) = g(4) \nonumber \]

which is impossible: \(g(4)\) is a number, so it cannot be equal to \(2\) and \(-2\) at the same time.
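The obstruction for \(f(x) = x^2\) is that two different inputs share an output. A small brute-force search over a few integers makes the collisions visible; this is an illustrative sketch only, and `square` and `collisions` are hypothetical helper names.

```python
def square(x):
    return x * x

def collisions(f, inputs):
    """Return (earlier_input, later_input, shared_output) for every repeated output of f."""
    seen = {}
    pairs = []
    for x in inputs:
        y = f(x)
        if y in seen:
            pairs.append((seen[y], x, y))  # two inputs map to the same y
        else:
            seen[y] = x
    return pairs

print(square(2), square(-2))             # 4 4
print(collisions(square, range(-3, 4)))  # [(-1, 1, 1), (-2, 2, 4), (-3, 3, 9)]
```

Each collision \((x_1, x_2, y)\) would force any candidate inverse to satisfy \(g(y) = x_1\) and \(g(y) = x_2\) at once.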

Define \(f\colon \mathbb{R} \to\mathbb{R} \) by \(f(x) = e^x\). This is not an invertible function. Indeed, if there were a function \(g\colon \mathbb{R} \to\mathbb{R} \) such that \(f(g(x)) = x\) for all \(x\text{,}\) then we would have

\[ -1 = f\circ g(-1) = f(g(-1)) = e^{g(-1)} \nonumber \]

But \(e^x\) is a positive number for every \(x\text{,}\) so this is impossible.

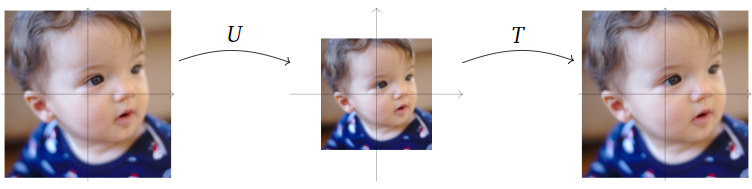

Let \(T\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be dilation by a factor of \(\dfrac{3}{2}\text{:}\) that is, \(T(\vec{x}) = \dfrac{3}{2}\vec{x}\).

We will show that \(T\) is an invertible transformation.

Let \(U\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be dilation by a factor of \(\dfrac{2}{3}\text{:}\) that is, \(U(\vec{x}) = \dfrac{2}{3}\vec{x}\). Then

\[ T\circ U(\vec{x}) = T\biggl(\dfrac{2}{3}\vec{x}\biggr) = \dfrac{3}{2}\cdot\dfrac{2}{3}\vec{x} = \vec{x} \nonumber \]

and

\[ U\circ T(\vec{x}) = U\biggl(\dfrac{3}{2}\vec{x}\biggr) = \dfrac{2}{3}\cdot\dfrac{3}{2}\vec{x} = \vec{x} \nonumber \]

Hence \(T\circ U = \mathcal{I}\) and \(U\circ T = \mathcal{I}\text{,}\) so \(T\) is invertible, with inverse \(U\). In other words, shrinking by a factor of \(\dfrac{2}{3}\) undoes stretching by a factor of \(\dfrac{3}{2}\).

Figure \(\PageIndex{1}\)

We can see that the matrix representation of \(T\) is \(A=\left[ \begin{array}{cc} \frac{3}{2} & 0 \\ 0 & \frac{3}{2} \end{array} \right]\)

Similarly the matrix representation of \(U\) is \(B=\left[ \begin{array}{cc} \frac{2}{3} & 0 \\ 0 & \frac{2}{3} \end{array} \right]\)

It is easy to see that \(AB=I\) and \(BA=I\), so \(B\) is the inverse of \(A\). This suggests that the matrix representation of \(T^{-1}\) may be the inverse of the matrix representation of \(T\).
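The products \(AB\) and \(BA\) for the two dilation matrices can be computed exactly with rational arithmetic. This is a minimal sketch, storing matrices as lists of rows; `matmul` and `identity` are hypothetical helpers, not library functions.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product XY of matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def identity(n):
    """The n x n identity matrix with Fraction entries."""
    return [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]

A = [[Fraction(3, 2), Fraction(0)], [Fraction(0), Fraction(3, 2)]]  # dilation by 3/2
B = [[Fraction(2, 3), Fraction(0)], [Fraction(0), Fraction(2, 3)]]  # dilation by 2/3

print(matmul(A, B) == identity(2), matmul(B, A) == identity(2))  # True True
```

Using `Fraction` instead of floats avoids rounding, so the comparison with the identity matrix is exact.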

Let \(T\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be counterclockwise rotation by \(45^\circ\).

We will show that \(T\) is an invertible transformation.

Let \(U\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be clockwise rotation by \(45^\circ\). Then \(T\circ U\) first rotates clockwise by \(45^\circ\text{,}\) then counterclockwise by \(45^\circ\text{,}\) so the composition rotates by zero degrees: it is the identity transformation. Likewise, \(U\circ T\) first rotates counterclockwise, then clockwise by the same amount, so it is the identity transformation. In other words, clockwise rotation by \(45^\circ\) undoes counterclockwise rotation by \(45^\circ\).

Figure \(\PageIndex{2}\)

This time the matrix representation of \(T\) is \(A=\left[ \begin{array}{cc} \frac{1}{\sqrt{2}} & -\frac{1}{\sqrt{2}} \\ \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \right]\)

And the matrix representation of \(U\) is \(B=\left[ \begin{array}{cc} \frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \\ -\frac{1}{\sqrt{2}} & \frac{1}{\sqrt{2}} \end{array} \right]\)

Once again we see that \(AB=I\) and \(BA=I\), so \(B\) is the inverse of \(A\) as expected.
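The rotation matrices involve \(\frac{1}{\sqrt2}\), so a floating-point check needs a tolerance. The sketch below builds the standard rotation matrix from cosine and sine and confirms that rotating by \(45^\circ\) and by \(-45^\circ\) compose to the identity; the helper names `rotation`, `matmul` and `approx_identity` are hypothetical.

```python
import math

def matmul(X, Y):
    """Product XY of matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def rotation(theta):
    """Standard matrix of counterclockwise rotation by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s], [s, c]]

def approx_identity(M, tol=1e-12):
    """True if M equals the identity matrix up to an absolute tolerance."""
    n = len(M)
    return all(math.isclose(M[i][j], 1.0 if i == j else 0.0, abs_tol=tol)
               for i in range(n) for j in range(n))

A = rotation(math.pi / 4)   # counterclockwise by 45 degrees
B = rotation(-math.pi / 4)  # clockwise by 45 degrees

print(approx_identity(matmul(A, B)), approx_identity(matmul(B, A)))  # True True
```

Since \(\cos(-\theta) = \cos\theta\) and \(\sin(-\theta) = -\sin\theta\), `rotation(-math.pi / 4)` produces the matrix \(B\) above.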

Let \(T\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be the reflection over the \(y\)-axis.

We will show that \(T\) is an invertible transformation.

The transformation \(T\) is invertible; in fact, it is equal to its own inverse. Reflecting a vector \(\vec{x}\) over the \(y\)-axis twice brings the vector back to where it started, so \(T\circ T = \mathcal{I}\).

Figure \(\PageIndex{3}\)

The matrix representation of \(T\) is \(A=\left[ \begin{array}{cc} -1 & 0\\ 0 & 1 \end{array} \right]\)

We can see here that \(A^2=AA=I\), so \(A\) is its own inverse, just like the transformation.
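A quick integer check of \(A^2 = I\), together with reflecting a sample vector twice, might look like this (a sketch; `matmul` and `apply` are hypothetical helpers).

```python
def matmul(X, Y):
    """Product XY of matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]

def apply(M, v):
    """Matrix-vector product Mv."""
    return [sum(M[i][k] * v[k] for k in range(len(v))) for i in range(len(M))]

A = [[-1, 0], [0, 1]]  # reflection over the y-axis

print(matmul(A, A))                    # [[1, 0], [0, 1]]
v = [3, 5]
print(apply(A, v), apply(A, apply(A, v)))  # [-3, 5] [3, 5]
```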

Let \(T\colon\mathbb{R}^3 \to\mathbb{R}^3 \) be the projection onto the \(xy\)-plane.

We will show that \(T\) is not an invertible transformation.

Every vector on the \(z\)-axis projects onto the zero vector, so there is no way to undo what \(T\) did: the inverse transformation would not know which vector on the \(z\)-axis it should send the zero vector to. More formally, suppose there were a transformation \(U\colon\mathbb{R}^3 \to\mathbb{R}^3 \) such that \(U\circ T = \mathcal{I }\). Then

\[ \vec{0} = U\circ T(\vec{0}) = U(T(\vec{0})) = U(\vec{0}) \nonumber \]

and

\[ \left[\begin{array}{c}0\\0\\1\end{array}\right] = U\circ T\left(\left[\begin{array}{c}0\\0\\1\end{array}\right]\right) = U\left(T\left(\left[\begin{array}{c}0\\0\\1\end{array}\right]\right)\right) = U(\vec{0}) \nonumber \]

But \(U(\vec{0})\) is a single vector in \(\mathbb{R}^3 \text{,}\) so it cannot be equal to \(\vec{0}\) and to \( \left[\begin{array}{c}0\\0\\1\end{array}\right]\) at the same time.
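The failure of injectivity can be seen directly by projecting several points of the \(z\)-axis. In this sketch the projection is written as "replace the \(z\)-coordinate by \(0\)"; `project_xy` is a hypothetical name.

```python
def project_xy(v):
    """Projection of R^3 onto the xy-plane: keep x and y, set z to 0."""
    x, y, z = v
    return (x, y, 0)

print(project_xy((0, 0, 0)), project_xy((0, 0, 1)))  # (0, 0, 0) (0, 0, 0)

# Every vector on the z-axis lands on the origin, so T(v) cannot determine z.
print(all(project_xy((0, 0, z)) == (0, 0, 0) for z in range(-5, 6)))  # True
```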

The matrix representation of \(T\) is \(A= \left[\begin{array}{ccc}1&0&0\\0&1&0\\0&0&0\end{array}\right]\)

We attempt to find \(A^{-1}\):

\[ \left[\begin{array}{ccc|ccc}1&0&0&1&0&0\\0&1&0&0&1&0\\0&0&0&0&0&1\end{array}\right] \nonumber\]

The left side is already in reduced row echelon form, and its third row is zero, so there is no pivot in the third column and row reduction cannot turn the left side into \(I\). This means \(A^{-1}\) does not exist, and the matrix \(A\) is not invertible (just like \(T\)).
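The row reduction of \(\left[A|I\right]\) can be automated. The following is a minimal Gauss–Jordan sketch with exact `Fraction` arithmetic; the function name `inverse` is hypothetical, and it returns `None` as soon as a column has no pivot, which is exactly what happens for the projection matrix.

```python
from fractions import Fraction

def inverse(A):
    """Row reduce [A | I]; return A^{-1} as a list of rows, or None if A is not invertible."""
    n = len(A)
    # Augmented matrix [A | I] with exact rational entries.
    M = [[Fraction(x) for x in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(A)]
    for col in range(n):
        # Look for a row at or below `col` with a nonzero entry in this column.
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            return None  # no pivot in this column: the left side cannot become I
        M[col], M[pivot] = M[pivot], M[col]
        p = M[col][col]
        M[col] = [x / p for x in M[col]]  # scale so the pivot equals 1
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [a - factor * b for a, b in zip(M[r], M[col])]  # clear the column
    return [row[n:] for row in M]  # the right half is now A^{-1}

P = [[1, 0, 0], [0, 1, 0], [0, 0, 0]]  # projection onto the xy-plane
print(inverse(P))  # None
```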

- A transformation \(T\colon\mathbb{R}^n \to\mathbb{R}^n \) is invertible if and only if it is both one-to-one and onto.

- If \(T\) is already known to be invertible, then \(U\colon\mathbb{R}^n \to\mathbb{R}^n \) is the inverse of \(T\) provided that either \(T\circ U = \mathcal{I }\) or \(U\circ T = \mathcal{I }\text{:}\) it is only necessary to verify one.

Proof

To say that \(T\) is one-to-one and onto means that \(T(\vec{x})=\vec{b}\) has exactly one solution for every \(\vec{b}\) in \(\mathbb{R}^n \).

Suppose that \(T\) is invertible. Then \(T(\vec{x})=\vec{b}\) always has the unique solution \(\vec{x} = T^{-1}(\vec{b})\text{:}\) indeed, applying \(T^{-1}\) to both sides of \(T(\vec{x})=\vec{b}\) gives

\[ \vec{x} = T^{-1}(T(\vec{x})) = T^{-1}(\vec{b}) \nonumber \]

and applying \(T\) to both sides of \(\vec{x} = T^{-1}(\vec{b})\) gives

\[ T(\vec{x}) = T(T^{-1}(\vec{b})) = \vec{b} \nonumber \]

Conversely, suppose that \(T\) is one-to-one and onto. Let \(\vec{b}\) be a vector in \(\mathbb{R}^n \text{,}\) and let \(\vec{x} = U(\vec{b})\) be the unique solution of \(T(\vec{x})=\vec{b}\). Then \(U\) defines a transformation from \(\mathbb{R}^n \) to \(\mathbb{R}^n \). For any \(\vec{x}\) in \(\mathbb{R}^n \text{,}\) we have \(U(T(\vec{x})) = \vec{x}\text{,}\) because \(\vec{x}\) is the unique solution of the equation \(T(\vec{x}) =\vec{b}\) for \(\vec{b} = T(\vec{x})\). For any \(\vec{b}\) in \(\mathbb{R}^n \text{,}\) we have \(T(U(\vec{b})) = \vec{b}\text{,}\) because \(\vec{x}= U(\vec{b})\) is the unique solution of \(T(\vec{x})=\vec{b}\). Therefore, \(U\) is the inverse of \(T\text{,}\) and \(T\) is invertible.

Suppose now that \(T\) is an invertible transformation, and that \(U\) is another transformation such that \(T\circ U = \mathcal{I}\). We must show that \(U = T^{-1}\text{,}\) i.e., that \(U\circ T = \mathcal{I }\). We compose both sides of the equality \(T\circ U = \mathcal{I}\) on the left by \(T^{-1}\) and on the right by \(T\) to obtain

\[ T^{-1}\circ T\circ U\circ T = T^{-1}\circ\mathcal{I }\circ T \nonumber \]

We have \(T^{-1}\circ T =\mathcal{I }\) and \(\mathcal{I }\circ U = U\text{,}\) so the left side of the above equation is \(U\circ T\). Likewise, \(\mathcal{I }\circ T = T\) and \(T^{-1}\circ T =\mathcal{I }\text{,}\) so our equality simplifies to \(U\circ T = \mathcal{I }\text{,}\) as desired.

If instead we had assumed only that \(U\circ T =\mathcal{I }\text{,}\) then the proof that \(T\circ U =\mathcal{I }\) proceeds similarly.

It makes sense to define the inverse of a transformation \(T\colon\mathbb{R}^n \to\mathbb{R}^m \text{,}\) for \(m\neq n\text{,}\) to be a transformation \(U\colon\mathbb{R}^m \to\mathbb{R}^n \) such that \(T\circ U\) is the identity transformation of \(\mathbb{R}^m \) and \(U\circ T\) is the identity transformation of \(\mathbb{R}^n \). In fact, there exist invertible transformations \(T\colon\mathbb{R}^n \to\mathbb{R}^m \) for any \(m\) and \(n\text{,}\) but they are not linear, or even continuous.

If \(T\) is a linear transformation, then it can only be invertible when \(m = n\text{,}\) i.e., when its domain is equal to its codomain. Indeed, if \(T\colon\mathbb{R}^n \to\mathbb{R}^m \) is one-to-one, then \(n\leq m\) must hold, and if \(T\) is onto, then \(m\leq n\) must also hold. Therefore, when discussing invertibility we restrict ourselves to the case \(m=n\).

As we have been seeing, the matrix for the inverse of a linear transformation is the inverse of the matrix for the transformation:

Let \(T\colon\mathbb{R}^n \to\mathbb{R}^n \) be a linear transformation with standard matrix \(A\). Then \(T\) is invertible if and only if \(A\) is invertible, in which case \(T^{-1}\) is linear with standard matrix \(A^{-1}\).

Proof

Suppose that \(T\) is invertible. Let \(U\colon\mathbb{R}^n \to\mathbb{R}^n \) be the inverse of \(T\). We claim that \(U\) is linear.

Let \(\vec{u},\vec{v}\) be vectors in \(\mathbb{R}^n \). Then

\[ \vec{u} + \vec{v} = T(U(\vec{u})) + T(U(\vec{v})) = T(U(\vec{u}) + U(\vec{v})) \nonumber \]

by linearity of \(T\). Applying \(U\) to both sides gives

\[ U(\vec{u} + \vec{v}) = U\bigl(T\left(U(\vec{u}) + U(\vec{v})\right)\bigr) = U(\vec{u}) + U(\vec{v}) \nonumber \]

Let \(c\) be a scalar. Then

\[ c\vec{u} = cT(U(\vec{u})) = T(cU(\vec{u})) \nonumber \]

by linearity of \(T\). Applying \(U\) to both sides gives

\[ U(c\vec{u}) = U\bigl(T(cU(\vec{u}))\bigr) = cU(\vec{u}) \nonumber \]

Since \(U\) preserves sums and scalar multiples, it satisfies the defining properties of a linear transformation.

Now that we know that \(U\) is linear, we know that it has a standard matrix \(B\). By the compatibility of matrix multiplication and composition, the matrix for \(T\circ U\) is \(AB\). But \(T\circ U\) is the identity transformation \(\mathcal{I},\) and the standard matrix for \(\mathcal{I }\) is \(I\text{,}\) so \(AB = I\). One shows similarly that \(BA = I\). Hence \(A\) is invertible and \(B = A^{-1}\).

Conversely, suppose that \(A\) is invertible. Let \(B = A^{-1}\text{,}\) and define \(U\colon\mathbb{R}^n \to\mathbb{R}^n \) by \(U(\vec{x}) = B\vec{x}\). By the compatibility of matrix multiplication and composition, the matrix for \(T\circ U\) is \(AB = I\text{,}\) and the matrix for \(U\circ T\) is \(BA = I\). Therefore,

\[ T\circ U(\vec{x}) = AB\vec{x} = I\vec{x} =\vec{x} \quad\text{and}\quad U\circ T(\vec{x}) = BA\vec{x} = I\vec{x} = \vec{x} \nonumber \]

which shows that \(T\) is invertible with inverse transformation \(U\) as desired.

Let \(T\colon\mathbb{R}^2 \to\mathbb{R}^2 \) be the linear transformation defined by \(T\left(\left[ \begin{array} {c} a \\ b \end{array} \right]\right) = \left[ \begin{array} {c} 3a-2b \\ 5b-7a \end{array} \right]\).

Is \(T\) invertible? If so, what is \(T^{-1}\text{?}\)

Solution

Since \(T\) is a linear transformation, we know it is a matrix transformation with matrix representation:

\[ A = \left[\begin{array}{cc}| &| \\ T(\vec{e}_1) & T(\vec{e}_2) \\ | & | \end{array}\right]= \left[\begin{array}{cc}3 & -2 \\ -7 &5 \end{array}\right] \nonumber \]
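The standard matrix is obtained by evaluating \(T\) on \(\vec{e}_1, \vec{e}_2\) and using the results as columns. A small sketch of that recipe (the helper `standard_matrix` is a hypothetical name):

```python
def T(v):
    """The transformation from this example: T(a, b) = (3a - 2b, 5b - 7a)."""
    a, b = v
    return (3 * a - 2 * b, 5 * b - 7 * a)

def standard_matrix(f, n):
    """Matrix (list of rows) whose j-th column is f(e_j)."""
    columns = [f(tuple(int(i == j) for i in range(n))) for j in range(n)]
    return [[columns[j][i] for j in range(n)] for i in range(len(columns[0]))]

print(standard_matrix(T, 2))  # [[3, -2], [-7, 5]]
```

The transpose step in `standard_matrix` is needed because the columns \(T(\vec{e}_j)\) are computed one at a time but the matrix is stored row by row.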

By the theorem above, \(T\) is invertible if and only if \(A\) is, so we row reduce \(\left[A|I\right]\):

\[ \left[A|I\right]=\left[\begin{array}{cc|cc}3 & -2 & 1 & 0 \\ -7 &5 & 0 & 1\end{array}\right]\xrightarrow{RREF} \left[\begin{array}{cc|cc}1 & 0 & 5 & 2 \\ 0 &1 & 7 & 3\end{array}\right]=\left[I|A^{-1}\right]\nonumber \]
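As an independent cross-check of this row reduction, one can use the familiar \(2\times 2\) formula \(\left[\begin{array}{cc}a&b\\c&d\end{array}\right]^{-1} = \frac{1}{ad-bc}\left[\begin{array}{cc}d&-b\\-c&a\end{array}\right]\). That formula is not used in this section; the sketch below (with the hypothetical helper `inverse_2x2`) only confirms that it agrees with the result above. Here \(ad - bc = 15 - 14 = 1\).

```python
from fractions import Fraction

def inverse_2x2(M):
    """Inverse of an integer 2x2 matrix via (1 / (ad - bc)) [[d, -b], [-c, a]]; None if singular."""
    (a, b), (c, d) = M
    det = a * d - b * c
    if det == 0:
        return None
    return [[Fraction(d, det), Fraction(-b, det)], [Fraction(-c, det), Fraction(a, det)]]

A = [[3, -2], [-7, 5]]
print(inverse_2x2(A) == [[5, 2], [7, 3]])  # True
```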

Since \(A^{-1}\) exists, we know \(T^{-1}\) must also exist and satisfy \(T^{-1}(\vec{x})=A^{-1}\vec{x}\):

\[\boxed{T^{-1}\left(\left[ \begin{array} {c} a \\ b \end{array} \right]\right) =\left[ \begin{array} {cc} 5& 2 \\ 7&3 \end{array} \right] \left[ \begin{array} {c} a \\ b \end{array} \right]= \left[ \begin{array} {c} 5a+2b \\ 7a+3b \end{array} \right]}\nonumber\]
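Finally, the boxed formula can be checked by composing \(T\) and \(T^{-1}\) in both orders on a few sample vectors. This is a spot check, not a proof; the function names `T` and `T_inv` are just illustrative.

```python
def T(v):
    """T(a, b) = (3a - 2b, 5b - 7a)."""
    a, b = v
    return (3 * a - 2 * b, 5 * b - 7 * a)

def T_inv(v):
    """Candidate inverse from the boxed formula: (5a + 2b, 7a + 3b)."""
    a, b = v
    return (5 * a + 2 * b, 7 * a + 3 * b)

samples = [(1, 0), (0, 1), (2, -3), (-4, 7), (10, 10)]
print(all(T_inv(T(v)) == v and T(T_inv(v)) == v for v in samples))  # True
```

Expanding symbolically gives the same result for every vector: for example, the first coordinate of \(T^{-1}(T(a,b))\) is \(5(3a-2b) + 2(5b-7a) = a\).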