2.2: Power Series as Infinite Polynomials

- Rules of differential and integral calculus applied to polynomials

Applied to polynomials, the rules of differential and integral calculus are straightforward. Indeed, differentiating and integrating polynomials represent some of the easiest tasks in a calculus course. For example, computing

\[\int (7 - x + x^2)dx\]

is relatively easy compared to computing

\[\int \sqrt[3]{1 + x^3}dx.\]

Unfortunately, not all functions can be expressed as a polynomial. For example, \(f(x) = \sin x\) cannot be, since a nonzero polynomial has only finitely many roots and the sine function has infinitely many roots, namely \(\{n\pi \mid n \in \mathbb{Z}\}\). A standard technique in the \(18^{th}\) century was to write such functions as an “infinite polynomial,” what we typically refer to as a power series. Unfortunately, an “infinite polynomial” is a much more subtle object than a mere polynomial, which by definition is finite. For now we will not concern ourselves with these subtleties. We will follow the example of our forebears and manipulate all “polynomial-like” objects (finite or infinite) as if they were polynomials.

A power series centered at \(a\) is a series of the form

\[\sum_{n=0}^{\infty } a_n (x - a)^n = a_0 + a_1(x - a) + a_2(x - a)^2 + \cdots\]

Often we will focus on the behavior of power series \(\sum_{n=0}^{\infty } a_n x^n\), centered around \(0\), as the series centered around other values of \(a\) are obtained by shifting a series centered at \(0\).

Before we continue, we will make the following notational comment. The most advantageous way to represent a series is using summation notation since there can be no doubt about the pattern to the terms. After all, this notation contains a formula for the general term. This being said, there are instances where writing this formula is not practical. In these cases, it is acceptable to write the sum by supplying the first few terms and using ellipses (the three dots). If this is done, then enough terms must be included to make the pattern clear to the reader.

Returning to our definition of a power series, consider, for example, the geometric series

\[\sum_{n=0}^{\infty } x^n = 1 + x + x^2 + \cdots.\]

If we multiply this series by \((1 - x)\), we obtain

\[(1 - x)(1 + x + x^2 + \cdots ) = (1 + x + x^2 + \cdots ) - (x + x^2 + x^3 + \cdots ) = 1\]

This leads us to the power series representation

\[\frac{1}{(1 - x)} = 1 + x + x^2 + \cdots = \sum_{n=0}^{\infty } x^n\]

If we substitute \(x = \frac{1}{10} \) into the above, we obtain

\[1 + \frac{1}{10} + \left ( \frac{1}{10} \right )^2 + \left ( \frac{1}{10} \right )^3 + \cdots = \frac{1}{1 - \tfrac{1}{10}} = \frac{10}{9}\]

This agrees with the fact that \(0.333 \cdots = \frac{1}{3} \), and so \(0.111 \cdots = \frac{1}{9} \), and \(1.111 \cdots = \frac{10}{9} \).

There are limitations to these formal manipulations, however. Substituting \(x = 1\) or \(x = 2\) yields the questionable results

\[\frac{1}{0} = 1 + 1 + 1 + \cdots \quad\text{and}\quad \frac{1}{-1} = 1 + 2 + 2^2 + \cdots\]

We are missing something important here, though it may not be clear exactly what. A series representation of a function works sometimes, but there are some problems. For now, we will continue to follow the example of our \(18^{th}\) century predecessors and ignore them. That is, for the rest of this section we will focus on the formal manipulations to obtain and use power series representations of various functions. Keep in mind that this is all highly suspect until we can resolve problems like those just given.

Power series became an important tool in analysis in the 1700s. By representing various functions as power series, mathematicians could deal with them as if they were (infinite) polynomials. The following is an example.

Solve the following initial value problem:\(^1\) find \(y(x)\) given that \(\frac{dy}{dx} = y\), \(y(0) = 1\).

Solution

Assuming the solution can be expressed as a power series, we have

\[y = \sum_{n=0}^{\infty } a_n x^n = a_0 + a_1x + a_2x^2 + \cdots \nonumber\]

Differentiating gives us

\[\frac{dy}{dx} = a_1 + 2a_2x + 3a_3x^2 + 4a_4x^3 + \cdots \nonumber\]

Since \(\frac{dy}{dx} = y\) we see that

\[a_1 = a_0 , 2a_2 = a_1 , 3a_3 = a_2 , ..., na_n = a_{n - 1} ,.... \nonumber\]

This leads to the relationship

\[a_n = \frac{1}{n}a_{n - 1} = \frac{1}{n(n - 1)}a_{n - 2} = \cdots = \frac{1}{n!}a_0 \nonumber\]

Thus the series solution of the differential equation is

\[y = \sum_{n=0}^{\infty } \frac{a_0}{n!} x^n = a_0 \sum_{n=0}^{\infty } \frac{1}{n!} x^n \nonumber\]

Using the initial condition \(y(0) = 1\), we get \(1 = a_0\left ( 1 + 0 + \frac{1}{2!}0^2 + \cdots \right ) = a_0\). Thus the solution to the initial value problem is \(y = \sum_{n=0}^{\infty } \frac{1}{n!} x^n\). Let's call this function \(E(x)\). Then by definition

\[E(x) = \sum_{n=0}^{\infty } \frac{1}{n!} x^n = 1 + \frac{x^1}{1!} + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots \nonumber\]
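The recurrence \(na_n = a_{n-1}\) obtained above can be run mechanically. The Python sketch below is only an illustration; the names `exp_series_coeffs` and `E_partial` are our own, hypothetical choices. It generates the coefficients exactly with `fractions.Fraction`, starting from \(a_0 = 1\), and evaluates a truncated partial sum of \(E(x)\).

```python
from fractions import Fraction
import math

def exp_series_coeffs(n_terms, a0=Fraction(1)):
    """Return a_0, ..., a_{n_terms-1} generated by the recurrence n * a_n = a_{n-1}."""
    coeffs = [a0]
    for n in range(1, n_terms):
        coeffs.append(coeffs[-1] / n)  # a_n = a_{n-1} / n
    return coeffs

def E_partial(x, n_terms=20):
    """Partial sum of E(x) = sum a_n x^n, using a_0 = 1 from the initial condition."""
    return sum(float(a) * x**n for n, a in enumerate(exp_series_coeffs(n_terms)))

print([str(a) for a in exp_series_coeffs(5)])  # ['1', '1', '1/2', '1/6', '1/24']
print(E_partial(1.0), math.e)
```

The coefficients come out as \(\frac{1}{n!}\), exactly as the derivation predicts; truncating at 20 terms is an arbitrary choice for this sketch.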

Let’s examine some properties of this function. The first property is clear from the definition.

- Property 1 \[E(0) = 1\]

- Property 2 \[E(x + y) = E(x)E(y)\]

To see this, we multiply the two series together, so we have

\[\begin{align*} E(x)E(y) &= \left (\sum_{n=0}^{\infty } \frac{1}{n!} x^n \right )\left ( \sum_{n=0}^{\infty } \frac{1}{n!} y^n \right )\\ &= \left (\frac{x^0}{0!} + \frac{x^1}{1!} + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots \right )\left (\frac{y^0}{0!} + \frac{y^1}{1!} + \frac{y^2}{2!} + \frac{y^3}{3!} + \cdots \right )\\ &= \frac{x^0}{0!}\frac{y^0}{0!} + \frac{x^0}{0!}\frac{y^1}{1!} + \frac{x^1}{1!}\frac{y^0}{0!} + \frac{x^0}{0!}\frac{y^2}{2!} + \frac{x^1}{1!}\frac{y^1}{1!} + \frac{x^2}{2!}\frac{y^0}{0!} + \frac{x^0}{0!}\frac{y^3}{3!} + \frac{x^1}{1!}\frac{y^2}{2!} + \frac{x^2}{2!}\frac{y^1}{1!} + \frac{x^3}{3!}\frac{y^0}{0!} + \cdots \\ &= \frac{x^0}{0!}\frac{y^0}{0!} + \left ( \frac{x^0}{0!}\frac{y^1}{1!} + \frac{x^1}{1!}\frac{y^0}{0!} \right ) + \left ( \frac{x^0}{0!}\frac{y^2}{2!} + \frac{x^1}{1!}\frac{y^1}{1!} + \frac{x^2}{2!}\frac{y^0}{0!} \right ) + \left ( \frac{x^0}{0!}\frac{y^3}{3!} + \frac{x^1}{1!}\frac{y^2}{2!} + \frac{x^2}{2!}\frac{y^1}{1!} + \frac{x^3}{3!}\frac{y^0}{0!} \right ) + \cdots \\ &= \frac{1}{0!} + \frac{1}{1!}\left ( \frac{1!}{0!1!}x^0y^1 + \frac{1!}{1!0!}x^1y^0 \right ) + \frac{1}{2!}\left ( \frac{2!}{0!2!}x^0y^2 + \frac{2!}{1!1!}x^1y^1 + \frac{2!}{2!0!}x^2y^0 \right ) + \frac{1}{3!}\left ( \frac{3!}{0!3!}x^0y^3 + \frac{3!}{1!2!}x^1y^2 + \frac{3!}{2!1!}x^2y^1 + \frac{3!}{3!0!}x^3y^0 \right ) + \cdots \end{align*}\]

\[\begin{align*} E(x)E(y) &= \frac{1}{0!} + \frac{1}{1!}\left ( \binom{1}{0}x^0y^1 + \binom{1}{1}x^1y^0 \right ) + \frac{1}{2!}\left ( \binom{2}{0}x^0y^2 + \binom{2}{1}x^1y^1 + \binom{2}{2}x^2y^0 \right ) + \frac{1}{3!}\left ( \binom{3}{0}x^0y^3 + \binom{3}{1}x^1y^2 + \binom{3}{2}x^2y^1 + \binom{3}{3}x^3y^0\right ) + \cdots \\ &= \frac{1}{0!} + \frac{1}{1!}(x+y)^1 + \frac{1}{2!}(x+y)^2 + \frac{1}{3!}(x+y)^3 + \cdots \\ &= E(x + y) \end{align*}\]
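The regrouping above collects the terms of total degree \(n\) into one block, which the binomial theorem turns into \(\frac{1}{n!}(x+y)^n\). A quick numerical sanity check (not a proof) in Python follows; the helper names `E_trunc` and `degree_n_block` and the sample values \(x = 0.7\), \(y = -1.3\) are our own, hypothetical choices.

```python
import math

def E_trunc(x, n_terms=30):
    """Truncated series sum_{n < n_terms} x^n / n!."""
    return sum(x**n / math.factorial(n) for n in range(n_terms))

def degree_n_block(x, y, n):
    """One grouped block above: sum over k of x^k/k! * y^(n-k)/(n-k)!."""
    return sum(x**k / math.factorial(k) * y**(n - k) / math.factorial(n - k)
               for k in range(n + 1))

x, y = 0.7, -1.3
# Each block should equal (x + y)^n / n!, by the binomial theorem.
for n in range(6):
    assert math.isclose(degree_n_block(x, y, n), (x + y)**n / math.factorial(n), abs_tol=1e-15)
print(E_trunc(x) * E_trunc(y), E_trunc(x + y))
```

Both printed values agree to floating-point precision, as Property 2 predicts.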

- Property 3 If \(m\) is a positive integer then \[E(mx) = (E(x))^m\] In particular, \(E(m) = (E(1))^m\).

Prove Property 3.

- Property 4 \[E(-x) = \frac{1}{E(x)} = (E(x))^{-1}\]

Prove Property 4.

- Property 5 If \(n\) is an integer with \(n\neq 0\), then \[E(\tfrac{1}{n}) = \sqrt[n]{E(1)} = (E(1))^{1/n}\]

Prove Property 5.

- Property 6 If \(m\) and \(n\) are integers with \(n\neq 0\), then \[E(\tfrac{m}{n}) = (E(1))^{m/n}\]

Prove Property 6.

Let \(E(1)\) be denoted by the number \(e\). Using the series

\[e = E(1) = \sum_{n = 0}^{\infty } \frac{1}{n!}\]

we can approximate \(e\) to any degree of accuracy. In particular, \(e \approx 2.71828\).
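To make “any degree of accuracy” concrete, here is a minimal Python sketch. It assumes the standard tail bound \(\sum_{n \ge N} \frac{1}{n!} \le \frac{1}{N!}\cdot\frac{N+1}{N}\) for \(N \ge 1\) (a geometric-series estimate not derived in this section) and keeps adding terms until that bound drops below a tolerance. The function name `approx_e` is hypothetical.

```python
import math

def approx_e(tol=1e-10):
    """Sum 1/n! until the tail bound drops below tol; returns (estimate, terms used).

    Stopping rule (an assumption of this sketch):
    sum_{n >= N} 1/n! <= (1/N!) * (N + 1)/N for N >= 1.
    """
    total, term, n = 0.0, 1.0, 0   # term holds 1/n!
    while True:
        total += term
        n += 1
        term /= n                  # now term = 1/n!
        if term * (n + 1) / n < tol:
            return total, n

est, used = approx_e(1e-10)
print(est, used, abs(est - math.e))
```

With a tolerance of \(10^{-10}\), fourteen terms (\(n = 0, \dots, 13\)) already suffice, which shows how quickly the factorials in the denominators take over.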

In light of Property 6, we see that for any rational number \(r\), \(E(r) = e^r\). Not only does this give us the series representation \(e^r = \sum_{n = 0}^{\infty } \frac{1}{n!}r^n\) for any rational number \(r\), but it gives us a way to define \(e^x\) for irrational values of \(x\) as well. That is, we can define

\[e^x = E(x) = \sum_{n = 0}^{\infty } \frac{1}{n!}x^n\]

for any real number \(x\).

As an illustration, we now have \(e^{\sqrt{2}} = \sum_{n = 0}^{\infty } \frac{1}{n!}(\sqrt{2})^n\). The expression \(e^{\sqrt{2}}\) is meaningless if we try to interpret it as one irrational number raised to another. What does it mean to raise anything to the \(\sqrt{2}\) power? However, the series \(\sum_{n = 0}^{\infty } \frac{1}{n!}(\sqrt{2})^n\) does seem to have meaning, and it can be used to extend the exponential function to irrational exponents. In fact, defining the exponential function via this series answers the question we raised earlier: What does \(4^{\sqrt{2}}\) mean?

It means

\[4^{\sqrt{2}} = e^{\sqrt{2}\log 4} = \sum_{n = 0}^{\infty } \frac{(\sqrt{2}\log 4)^n}{n!}\]
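Here \(\log\) denotes the natural logarithm. The Python sketch below (the name `exp_series` is hypothetical) evaluates the series directly and compares it with Python's built-in power operator. It also checks the rational case \(4^{3/2} = 8\) mentioned in the next paragraph, to confirm that the series definition agrees with root-taking where both make sense.

```python
import math

def exp_series(t, n_terms=60):
    """Partial sum of sum_n t^n / n!, accumulated term by term."""
    total, term = 0.0, 1.0
    for n in range(n_terms):
        total += term
        term *= t / (n + 1)   # turns t^n/n! into t^(n+1)/(n+1)!
    return total

value = exp_series(math.sqrt(2) * math.log(4))   # math.log is the natural logarithm
print(value, 4 ** math.sqrt(2))
print(exp_series(1.5 * math.log(4)))             # should be (very nearly) 8
```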

This may seem to be the long way around just to define something as simple as exponentiation. But this is a fundamentally misguided attitude. Exponentiation only seems simple because we’ve always thought of it as repeated multiplication (in \(\mathbb{Z}\)) or root-taking (in \(\mathbb{Q}\)). When we expand the operation to the real numbers this simply can’t be the way we interpret something like \(4^{\sqrt{2}}\). How do you take the product of \(\sqrt{2}\) copies of \(4\)? The concept is meaningless. What we need is an interpretation of \(4^{\sqrt{2}}\) which is consistent with, say, \(4^{3/2} = (\sqrt{4})^3 = 8\). This is exactly what the series representation of \(e^x\) provides.

We also have a means of computing integrals as series. For example, the famous “bell shaped” curve given by the function \(f(x) = \frac{1}{\sqrt{2\pi }}e^{-\frac{x^2}{2}}\) is of vital importance in statistics and must be integrated to calculate probabilities. The power series we developed gives us a method of integrating this function. For example, we have

\[\begin{align*} \int_{x=0}^{b} \frac{1}{\sqrt{2\pi }}e^{-\frac{x^2}{2}}dx &= \frac{1}{\sqrt{2\pi }}\int_{x=0}^{b} \left ( \sum_{n = 0}^{\infty }\frac{1}{n!}\left ( \frac{-x^2}{2} \right )^n \right )dx\\ &= \frac{1}{\sqrt{2\pi }}\sum_{n = 0}^{\infty }\left ( \frac{(-1)^n}{n!2^n} \int_{x=0}^{b} x^{2n} dx\right )\\ &= \frac{1}{\sqrt{2\pi }}\sum_{n = 0}^{\infty }\left ( \frac{(-1)^n b^{2n+1}}{n!2^n(2n+1)} \right ) \end{align*}\]

This series can be used to approximate the integral to any degree of accuracy. The ability to provide such calculations made power series of paramount importance in the 1700s.
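As a rough check of the series just derived, the Python sketch below sums its first 60 terms (an arbitrary cutoff) and compares the result with `math.erf`. It relies on the standard identity \(\int_0^b \frac{1}{\sqrt{2\pi}}e^{-x^2/2}\,dx = \tfrac{1}{2}\operatorname{erf}(b/\sqrt{2})\), which is not part of this section and serves only as an independent reference value. The name `normal_area` is hypothetical.

```python
import math

def normal_area(b, n_terms=60):
    """(1/sqrt(2*pi)) * sum_n (-1)^n b^(2n+1) / (n! 2^n (2n+1)), truncated."""
    s = sum((-1)**n * b**(2*n + 1) / (math.factorial(n) * 2**n * (2*n + 1))
            for n in range(n_terms))
    return s / math.sqrt(2 * math.pi)

# Cross-check against the error function: the same area equals erf(b/sqrt(2))/2.
for b in (0.5, 1.0, 2.0):
    print(b, normal_area(b), 0.5 * math.erf(b / math.sqrt(2)))
```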

- Show that if \(y = \sum_{n = 0}^{\infty } a_n x^n\) satisfies the differential equation \(\frac{\mathrm{d} ^2y}{\mathrm{d} x^2} = -y\) then \[a_{n+2} = \frac{-1}{(n+2)(n+1)}a_n\] and conclude that \[y = a_0 + a_1x - \frac{1}{2!}a_0x^2 - \frac{1}{3!}a_1x^3 + \frac{1}{4!}a_0x^4 + \frac{1}{5!}a_1x^5 - \frac{1}{6!}a_0x^6 - \frac{1}{7!}a_1x^7 + \cdots \nonumber\]

- Since \(y = \sin x\) satisfies \(\frac{\mathrm{d} ^2y}{\mathrm{d} x^2} = -y\) we see that \[\sin x = a_0 + a_1x - \frac{1}{2!}a_0x^2 - \frac{1}{3!}a_1x^3 + \frac{1}{4!}a_0x^4 + \frac{1}{5!}a_1x^5 - \frac{1}{6!}a_0x^6 - \frac{1}{7!}a_1x^7 + \cdots\] for some constants \(a_0\) and \(a_1\). Show that in this case \(a_0 = 0\) and \(a_1 = 1\) and obtain \[\sin x = x - \frac{1}{3!}x^3 + \frac{1}{5!}x^5 - \frac{1}{7!}x^7 + \cdots = \sum_{n = 0}^{\infty }\frac{(-1)^n}{(2n+1)!}x^{2n+1} \nonumber\]

- Use the series \[\sin x = x - \frac{1}{3!}x^3 + \frac{1}{5!}x^5 - \frac{1}{7!}x^7 + \cdots = \sum_{n = 0}^{\infty }\frac{(-1)^n}{(2n+1)!}x^{2n+1}\] to obtain the series \[\cos x = 1 - \frac{1}{2!}x^2 + \frac{1}{4!}x^4 - \frac{1}{6!}x^6 + \cdots = \sum_{n = 0}^{\infty }\frac{(-1)^n}{(2n)!}x^{2n} \nonumber\]

- Let \(s(x,N) = \sum_{n = 0}^{N}\frac{(-1)^n}{(2n+1)!}x^{2n+1}\) and \(c(x,N) = \sum_{n = 0}^{N}\frac{(-1)^n}{(2n)!}x^{2n}\) and use a computer algebra system to plot these for \(-4π ≤ x ≤ 4π\), \(N = 1, 2, 5, 10, 15\). Describe what is happening to the series as \(N\) becomes larger.
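The last exercise asks for plots. As a plotting-free stand-in (our substitution, with Python in place of a computer algebra system), the sketch below tabulates the largest error of \(s(x,N)\) and \(c(x,N)\) against `math.sin` and `math.cos` on an evenly spaced grid of 401 points over \([-4\pi, 4\pi]\); the grid size is an arbitrary choice.

```python
import math

def s(x, N):
    """Partial sum of the sine series through the term n = N."""
    return sum((-1)**n * x**(2*n + 1) / math.factorial(2*n + 1) for n in range(N + 1))

def c(x, N):
    """Partial sum of the cosine series through the term n = N."""
    return sum((-1)**n * x**(2*n) / math.factorial(2*n) for n in range(N + 1))

grid = [-4 * math.pi + k * (8 * math.pi / 400) for k in range(401)]
for N in (1, 2, 5, 10, 15):
    err_s = max(abs(s(x, N) - math.sin(x)) for x in grid)
    err_c = max(abs(c(x, N) - math.cos(x)) for x in grid)
    print(f"N={N:2d}  max|s-sin|={err_s:.3e}  max|c-cos|={err_c:.3e}")
```

The maximum error on the whole interval shrinks as \(N\) grows, and for each fixed \(N\) the agreement is best near \(x = 0\) and worst near the ends of the interval.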

Use the geometric series, \(\frac{1}{1-x} = 1 + x + x^2 + x^3 + \cdots = \sum_{n = 0}^{\infty }x^n\), to obtain a series for \(\frac{1}{1+x^2}\) and use this to obtain the series \[\arctan x = x - \frac{1}{3}x^3 + \frac{1}{5}x^5 - \cdots = \sum_{n = 0}^{\infty }(-1)^n\frac{1}{2n+1}x^{2n+1}\] Use the series above to obtain the series \[\frac{\pi }{4} = \sum_{n = 0}^{\infty }(-1)^n\frac{1}{2n+1} \nonumber\]

The series for arctangent was known by James Gregory (1638–1675), and it is sometimes referred to as “Gregory’s series.” Leibniz independently discovered \(\frac{\pi }{4} = 1 - \frac{1}{3} + \frac{1}{5} - \frac{1}{7} + \cdots\) by examining the area of a circle. Though it gives us a means for approximating \(π\) to any desired accuracy, the series converges too slowly to be of any practical use. For example, if we sum the terms from \(n = 0\) through \(n = 1000\), we get

\[4\sum_{n = 0}^{1000}(-1)^n\frac{1}{2n+1} \approx 3.142591654\]

which only approximates \(π\) to two decimal places.
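The figure above is easy to reproduce. A minimal Python sketch (the name `leibniz_pi` is hypothetical):

```python
import math

def leibniz_pi(N):
    """4 * sum_{n=0}^{N} (-1)^n / (2n+1)."""
    return 4 * sum((-1)**n / (2*n + 1) for n in range(N + 1))

approx = leibniz_pi(1000)
print(approx, approx - math.pi)   # the error is about 1e-3
```

The error is roughly \(\frac{1}{1001}\), so a thousand terms buy only about three correct digits, which is what makes this series impractical.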

Newton knew of these results and the general scheme of using series to compute areas under curves. These results motivated Newton to provide a series approximation for \(π\) as well, which, hopefully, would converge faster. We will use modern terminology to streamline Newton’s ideas. First notice that \(\frac{\pi }{4} = \int_{x=0}^{1}\sqrt{1 - x^2} dx\) as this integral gives the area of one quarter of the unit circle. The trick now is to find a series that represents \(\sqrt{1 - x^2}\).

To this end we start with the binomial theorem

\[(a+b)^N = \sum_{n = 0}^{N} \binom{N}{n} a^{N-n}b^n\]

where

\[\begin{align*} \binom{N}{n} &= \frac{N!}{n!(N-n)!}\\ &= \frac{N(N-1)(N-2)\cdots (N-n+1)}{n!}\\ &= \frac{\prod_{j=0}^{n-1}(N-j)}{n!} \end{align*}\]

Unfortunately, we now have a small problem with our notation which will be a source of confusion later if we don’t fix it. So we will pause to address this matter. We will come back to the binomial expansion afterward.

This last expression is becoming awkward in much the same way that an expression like

\[1 + \frac{1}{2} + \left ( \frac{1}{2} \right )^2 + \left ( \frac{1}{2} \right )^3 + \cdots + \left ( \frac{1}{2} \right )^k\]

is awkward. Just as this sum is less cumbersome when written as \(\sum_{n=0}^{k}\left ( \frac{1}{2} \right )^n\), the product

\[N(N-1)(N-2)\cdots (N-n+1)\]

is less awkward when we write it as \(\prod_{j=0}^{n-1}(N-j)\).

A capital pi (\(Π\)) is used to denote a product in the same way that a capital sigma (\(Σ\)) is used to denote a sum. The most familiar example would be writing

\[n! = \prod_{j=1}^{n}j\]

Just as it is convenient to define \(0! = 1\), we will find it convenient to define \(\prod_{j=1}^{0} j = 1\). Similarly, the fact that \(\binom{N}{0} = 1\) leads to \(\prod_{j=0}^{-1}(N-j) = 1\). Strange as this may look, it is convenient and is consistent with the convention \(\sum_{j=0}^{-1}s_j = 0\).

Returning to the binomial expansion and recalling our convention

\[\prod_{j=0}^{-1}(N-j) = 1\]

we can write\(^2\)

\[(1+x)^N = 1 + \sum_{n=1}^{N}\left ( \frac{\prod_{j=0}^{n-1}(N-j) }{n!} \right )x^n = \sum_{n=0}^{N}\left ( \frac{\prod_{j=0}^{n-1}(N-j) }{n!} \right )x^n\]

There is an advantage to using this convention (especially when programming a product into a computer), but this is not a deep mathematical insight. It is just a notational convenience and we don’t want you to fret over it, so we will use both formulations (at least initially).
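Python's `math.prod` happens to follow exactly this convention: the product of an empty sequence is the `start` value, which defaults to 1. The sketch below (the name `gen_binom` is hypothetical) computes \(\frac{\prod_{j=0}^{n-1}(N-j)}{n!}\) exactly, with no special case for \(n = 0\). It also works when \(n > N\) or when \(N\) is not an integer, two cases that come up shortly.

```python
import math
from fractions import Fraction

def gen_binom(N, n):
    """prod_{j=0}^{n-1} (N - j) / n!, computed exactly.

    math.prod over an empty range returns start, here 1, which matches the
    convention prod_{j=0}^{-1} (N - j) = 1, so n = 0 needs no special handling.
    """
    N = Fraction(N)
    return math.prod((N - j for j in range(n)), start=Fraction(1)) / math.factorial(n)

print(gen_binom(5, 0), gen_binom(5, 2), gen_binom(5, 7), gen_binom(Fraction(1, 2), 2))
# 1 10 0 -1/8
```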

Notice that we can extend the above definition of \(\binom{N}{n}\) to values \(n > N\). In this case, \(\prod_{j=0}^{n-1}(N-j)\) will equal \(0\), as one of the factors in the product will be \(0\) (the one where \(j = N\)). This gives us that \(\binom{N}{n} = 0\) when \(n > N\), and so

\[(1+x)^N = 1 + \sum_{n=1}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(N-j) }{n!} \right )x^n = \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(N-j) }{n!} \right )x^n\]

holds true for any nonnegative integer \(N\). Essentially, Newton asked whether the above equation could hold for values of \(N\) which are not nonnegative integers. For example, if the equation held true for \(N = \frac{1}{2}\), we would obtain

\[(1+x)^{\frac{1}{2}} = 1 + \sum_{n=1}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n = \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n\]

or

\[(1+x)^{\frac{1}{2}} = 1 + \frac{1}{2}x + \frac{\frac{1}{2}(\frac{1}{2}-1)}{2!}x^2 + \frac{\frac{1}{2}(\frac{1}{2}-1)(\frac{1}{2}-2)}{3!}x^3 + \cdots\]

Notice that since \(1/2\) is not an integer, the series no longer terminates. Although Newton did not prove that this series was correct (nor did we), he tested it by multiplying the series by itself. When he saw that by squaring the series he started to obtain \(1 + x + 0x^2 + 0x^3 + \cdots\), he was convinced that the series was exactly equal to \(\sqrt{1+x}\).
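Newton's test can be repeated with exact rational arithmetic. The Python sketch below is an illustration under our own naming (`sqrt_series_coeffs` and `square_truncated` are hypothetical). It builds the coefficients \(c_n = \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j)}{n!}\) through the recurrence \(c_n = c_{n-1}\cdot\frac{\frac{1}{2}-(n-1)}{n}\) and multiplies the truncated series by itself, keeping only the degrees that the truncation determines exactly.

```python
from fractions import Fraction

def sqrt_series_coeffs(M):
    """First M coefficients of (1+x)^(1/2): c_0 = 1, c_n = c_{n-1} * (1/2 - (n-1)) / n."""
    half = Fraction(1, 2)
    coeffs = [Fraction(1)]
    for n in range(1, M):
        coeffs.append(coeffs[-1] * (half - (n - 1)) / n)
    return coeffs

def square_truncated(coeffs):
    """Coefficients of the series times itself, up to the same degree (Cauchy product)."""
    M = len(coeffs)
    return [sum(coeffs[k] * coeffs[n - k] for k in range(n + 1)) for n in range(M)]

c = sqrt_series_coeffs(8)
print([str(a) for a in c[:5]])               # ['1', '1/2', '-1/8', '1/16', '-5/128']
print([str(a) for a in square_truncated(c)]) # ['1', '1', '0', '0', '0', '0', '0', '0']
```

Every coefficient beyond \(x^1\) cancels exactly, which is the pattern Newton observed.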

Consider the series representation

\[\begin{align*} (1+x)^{\frac{1}{2}} &= 1 + \sum_{n=1}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n\\ &= \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n \end{align*}\]

Multiply this series by itself and compute the coefficients for \(x^0, x^1, x^2, x^3, x^4\) in the resulting series.

Let \[S(x,M) = \sum_{n=0}^{M}\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n\] Use a computer algebra system to plot \(S(x,M)\) for \(M = 5, 10, 15, 95, 100\) and compare these to the graph for \(\sqrt{1+x}\). What seems to be happening? For what values of \(x\) does the series appear to converge to \(\sqrt{1+x}\)?
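If a plotting tool is not at hand, a table of values gives a first impression. The Python sketch below (our own stand-in for a computer algebra system; the sample points \(x = -0.9, 0.5, 0.99, 1.5\) are arbitrary choices) evaluates \(S(x,M)\) for the requested values of \(M\) and prints \(\sqrt{1+x}\) alongside.

```python
import math

def S(x, M):
    """Partial sum sum_{n=0}^{M} c_n x^n of the binomial series for (1+x)^(1/2)."""
    total, c = 0.0, 1.0            # c holds c_n, starting at c_0 = 1
    for n in range(M + 1):
        total += c * x**n
        c *= (0.5 - n) / (n + 1)   # c_{n+1} = c_n * (1/2 - n) / (n + 1)
    return total

for x in (-0.9, 0.5, 0.99, 1.5):
    row = [S(x, M) for M in (5, 10, 15, 95, 100)]
    print(x, math.sqrt(1 + x), [f"{v:.6g}" for v in row])
```

At the sample points inside \((-1, 1)\), the rows settle toward \(\sqrt{1+x}\), slowly near the endpoints. At \(x = 1.5\), the partial sums grow wildly and oscillate in sign.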

Convinced that he had the correct series, Newton used it to find a series representation of \(\int_{x=0}^{1}\sqrt{1-x^2}dx\).

Use the series \((1+x)^{\frac{1}{2}} = \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )x^n\) to obtain the series

\[\begin{align*} \frac{\pi }{4} &= \int_{x=0}^{1}\sqrt{1-x^2}dx\\ &= \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j) }{n!} \right )\left ( \frac{(-1)^n}{2n+1} \right )\\ &= 1 - \frac{1}{6} - \frac{1}{40} - \frac{1}{112} - \frac{5}{1152} - \cdots \end{align*}\]

Use a computer algebra system to sum the first \(100\) terms of this series and compare the answer to \(\frac{π}{4}\).
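One way to carry out this computation, sketched in Python (the name `newton_quarter_pi` is hypothetical), reuses the recurrence \(c_{n+1} = c_n\cdot\frac{\frac{1}{2}-n}{n+1}\) for the coefficients of \((1+x)^{1/2}\):

```python
import math

def newton_quarter_pi(n_terms):
    """Sum of c_n * (-1)^n / (2n+1) for n = 0..n_terms-1, with c_n from (1+x)^(1/2)."""
    total, c = 0.0, 1.0
    for n in range(n_terms):
        total += c * (-1)**n / (2*n + 1)
        c *= (0.5 - n) / (n + 1)
    return total

approx = newton_quarter_pi(100)
print(approx, math.pi / 4, approx - math.pi / 4)
```

Every term after the first is negative, so the partial sums decrease toward \(\frac{\pi}{4}\) from above. After 100 terms the gap is on the order of \(10^{-4}\).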

Again, Newton had a series which could be verified (somewhat) computationally. This convinced him even further that he had the correct series.

- Show that \[\int_{x=0}^{1/2}\sqrt{x-x^2}dx = \sum_{n=0}^{\infty } \frac{(-1)^n\prod_{j=0}^{n-1}(\frac{1}{2}-j)}{\sqrt{2}n!(2n+3)2^{n}} \nonumber\] and use this to show that \[\pi = 16\left (\sum_{n=0}^{\infty } \frac{(-1)^n\prod_{j=0}^{n-1}(\frac{1}{2}-j)}{\sqrt{2}n!(2n+3)2^{n}} \right )\]

- We now have two series for calculating \(π\): the one from part (a) and the one derived earlier, namely \[\pi = 4\left (\sum_{n=0}^{\infty } \frac{(-1)^n}{2n+1} \right ) \nonumber\] We will explore which one converges to \(π\) faster. With this in mind, define \(S1(N) = 16\left (\sum_{n=0}^{N} \frac{(-1)^n\prod_{j=0}^{n-1}(\frac{1}{2}-j)}{\sqrt{2}n!(2n+3)2^{n}} \right )\) and \(S2(N) = 4\left (\sum_{n=0}^{N} \frac{(-1)^n}{2n+1} \right )\). Use a computer algebra system to compute \(S1(N)\) and \(S2(N)\) for \(N = 5,10,15,20\). Which one appears to converge to \(π\) faster?
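A Python sketch of this comparison follows. It writes the product term through \(c_n = \frac{\prod_{j=0}^{n-1}(\frac{1}{2}-j)}{n!}\), so each term of \(S1\) is \(\frac{(-1)^n c_n}{\sqrt{2}\,(2n+3)\,2^n}\). The function names mirror the exercise; the implementation is our own.

```python
import math

def S1(N):
    """16 * sum_{n=0}^{N} (-1)^n c_n / (sqrt(2) (2n+3) 2^n), c_n = prod_{j<n}(1/2 - j) / n!."""
    total, c = 0.0, 1.0
    for n in range(N + 1):
        total += (-1)**n * c / (math.sqrt(2) * (2*n + 3) * 2**n)
        c *= (0.5 - n) / (n + 1)
    return 16 * total

def S2(N):
    """4 * sum_{n=0}^{N} (-1)^n / (2n+1)."""
    return 4 * sum((-1)**n / (2*n + 1) for n in range(N + 1))

for N in (5, 10, 15, 20):
    print(N, S1(N) - math.pi, S2(N) - math.pi)
```

The extra factor \(2^{-n}\), which comes from integrating only up to \(x = \frac{1}{2}\), is what makes the first series pull ahead so quickly.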

In general the series representation

\[\begin{align*} (1+x)^{\alpha } &= \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(\alpha - j) }{n!} \right )x^n \\ &= 1 + \alpha x + \frac{\alpha (\alpha - 1)}{2!}x^2 + \frac{\alpha (\alpha - 1)(\alpha - 2)}{3!}x^3 + \cdots \end{align*}\]

is called the binomial series (or Newton’s binomial series). This series is correct when \(α\) is a non-negative integer (after all, that is how we got the series). We can also see that it is correct when \(α = -1\) as we obtain

\[\begin{align*} (1+x)^{-1} &= \sum_{n=0}^{\infty }\left ( \frac{\prod_{j=0}^{n-1}(-1 - j) }{n!} \right )x^n \\ &= 1 + (-1)x + \frac{-1(-1 - 1)}{2!}x^2 + \frac{-1(-1 - 1)(-1 - 2)}{3!}x^3 + \cdots \\ &= 1 - x + x^2 - x^3 + \cdots \end{align*}\]

which can also be obtained from the geometric series \(\frac{1}{1-x} = 1 + x + x^2 + \cdots\) by replacing \(x\) with \(-x\).

In fact, the binomial series is the correct series representation for all values of the exponent \(α\) (though we haven’t proved this yet).
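The two cases checked above can also be confirmed mechanically. In the Python sketch below (the name `binomial_series_coeffs` is hypothetical), the coefficients \(\frac{\prod_{j=0}^{n-1}(\alpha-j)}{n!}\) are generated exactly. For \(\alpha = 3\) the series terminates, and for \(\alpha = -1\) it reproduces \(1 - x + x^2 - x^3 + \cdots\).

```python
from fractions import Fraction

def binomial_series_coeffs(alpha, n_terms):
    """Coefficients prod_{j=0}^{n-1}(alpha - j) / n! of (1+x)^alpha, for n < n_terms."""
    alpha = Fraction(alpha)
    coeffs, c = [], Fraction(1)
    for n in range(n_terms):
        coeffs.append(c)
        c = c * (alpha - n) / (n + 1)   # step from the n-th to the (n+1)-th coefficient
    return coeffs

print([str(a) for a in binomial_series_coeffs(3, 6)])    # ['1', '3', '3', '1', '0', '0']
print([str(a) for a in binomial_series_coeffs(-1, 6)])   # ['1', '-1', '1', '-1', '1', '-1']
```

Once a factor \(\alpha - n\) vanishes (which happens only for a nonnegative integer \(\alpha\)), every later coefficient is zero. That is why the series terminates in exactly that case.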

Let \(k\) be a positive integer. Find the power series, centered at zero, for \(f(x) = (1-x)^{-k}\) by

- Differentiating the geometric series \(k - 1\) times.

- Applying the binomial series.

- Compare these two results.
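Once both computations are done by hand, they can be spot-checked. The Python sketch below encodes one reading of each route; the function names are hypothetical. The first uses \(\frac{d^{k-1}}{dx^{k-1}}\frac{1}{1-x} = (k-1)!\,(1-x)^{-k}\) and the fact that differentiating \(x^{m+k-1}\) exactly \(k-1\) times gives \((m+k-1)(m+k-2)\cdots(m+1)\,x^m\). The second takes the binomial series with \(\alpha = -k\) and replaces \(x\) with \(-x\). This compares finitely many coefficients numerically; it is not a proof.

```python
from fractions import Fraction
import math

def coeff_by_differentiation(k, m):
    """Coefficient of x^m in (1-x)^(-k) via (k-1) derivatives of sum x^n."""
    # (m+k-1)(m+k-2)...(m+1), divided by (k-1)!
    return Fraction(math.prod(range(m + 1, m + k)), math.factorial(k - 1))

def coeff_by_binomial(k, m):
    """Coefficient of x^m in (1-x)^(-k): binomial series with alpha = -k, x replaced by -x."""
    prod = math.prod(-k - j for j in range(m))   # empty product is 1 when m = 0
    return Fraction(prod * (-1)**m, math.factorial(m))

for k in (1, 2, 3, 4):
    assert all(coeff_by_differentiation(k, m) == coeff_by_binomial(k, m) for m in range(10))
print("both routes agree for k = 1..4, m = 0..9")
```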

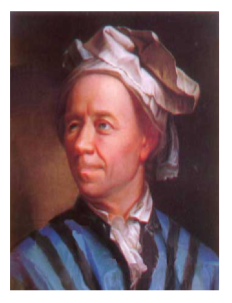

Leonhard Euler was a master at exploiting power series.

Figure 2.2.1: Leonhard Euler

In 1735, the \(28\)-year-old Euler won acclaim for what is now called the Basel problem: to find a closed form for \(\sum_{n=1}^{\infty }\frac{1}{n^2}\). Other mathematicians knew that the series converged, but Euler was the first to find its exact value. The following problem essentially provides Euler’s solution.

- Show that the power series for \(\frac{\sin x}{x}\) is given by \(1 - \frac{1}{3!}x^2 + \frac{1}{5!}x^4 - \cdots\).

- Use (a) to infer that the roots of \(1 - \frac{1}{3!}x^2 + \frac{1}{5!}x^4 - \cdots\) are given by \(x = \pm \pi ,\pm 2\pi ,\pm 3\pi ,\cdots\)

- Suppose \(p(x) = a_0 + a_1x + \cdots + a_nx^n\) is a polynomial with roots \(r_1, r_2, \ldots, r_n\). Show that if \(a_0\neq 0\), then all the roots are non-zero and \[p(x) = a_0 \left ( 1 - \frac{x}{r_1} \right )\left ( 1 - \frac{x}{r_2} \right )\cdots \left ( 1 - \frac{x}{r_n} \right )\]

- Assuming that the result in (c) holds for an infinite polynomial (power series), deduce that \[1 - \frac{1}{3!}x^2 + \frac{1}{5!}x^4 - \cdots = \left ( 1 - \left ( \frac{x}{\pi } \right )^2 \right )\left ( 1 - \left ( \frac{x}{2\pi } \right )^2 \right )\left ( 1 - \left ( \frac{x}{3\pi } \right )^2 \right )\cdots\]

- Expand this product to deduce \[\sum_{n=1}^{\infty }\frac{1}{n^2} = \frac{\pi ^2}{6}\]
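Euler's conclusion can at least be checked numerically, though such a check proves nothing. The Python sketch below (the name `basel_partial` is hypothetical) compares partial sums of \(\sum \frac{1}{n^2}\) with \(\frac{\pi^2}{6}\).

```python
import math

def basel_partial(N):
    """sum_{n=1}^{N} 1/n^2."""
    return sum(1.0 / n**2 for n in range(1, N + 1))

target = math.pi**2 / 6
for N in (10, 100, 1000, 10000):
    total = basel_partial(N)
    print(N, total, target - total, 1 / N)   # the gap is close to 1/N
```

The gap shrinks only like \(\frac{1}{N}\), which helps explain why the exact value was hard to guess from computation alone.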

References

1 A few seconds of thought should convince you that the solution of this problem is \(y(x) = e^x\). We will ignore this for now in favor of emphasizing the technique.

2 These two representations probably look the same at first. Take a moment and be sure you see where they differ. Hint: The “1” is missing in the last expression.