12.4: The Cross Product

Another useful operation: given two vectors, find a third vector perpendicular to the first two. There are, of course, infinitely many such vectors, of different lengths. Nevertheless, let us find one. Suppose \( {\bf A}=\langle a_1,a_2,a_3\rangle\) and \( {\bf B}=\langle b_1,b_2,b_3\rangle\). We want to find a vector \( {\bf v} = \langle v_1,v_2,v_3\rangle\) with \({\bf v}\cdot{\bf A}={\bf v}\cdot{\bf B}=0\), or

\[\eqalign{ a_1v_1+a_2v_2+a_3v_3&=0,\cr b_1v_1+b_2v_2+b_3v_3&=0.\cr }\]

Multiply the first equation by \( b_3\) and the second by \( a_3\) and subtract to get

\[\eqalign{ b_3a_1v_1+b_3a_2v_2+b_3a_3v_3&=0\cr a_3b_1v_1+a_3b_2v_2+a_3b_3v_3&=0\cr (a_1b_3-b_1a_3)v_1 + (a_2b_3-b_2a_3)v_2&=0\cr }\]

Of course, this equation in two variables has many solutions; a particularly easy one to see is \( v_1=a_2b_3-b_2a_3\), \( v_2=b_1a_3-a_1b_3\). Substituting back into either of the original equations and solving for \( v_3\) gives \( v_3=a_1b_2-b_1a_2\).

This particular answer to the problem turns out to have some nice properties, and it is dignified with a name, the cross product:

\[ {\bf A}\times{\bf B} = \langle a_2b_3-b_2a_3,b_1a_3-a_1b_3,a_1b_2-b_1a_2\rangle. \]
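The component formula translates directly into code. Here is a minimal Python sketch, assuming vectors are stored as 3-tuples of numbers; the function names `dot` and `cross` are our own illustrative choices, not a library API. The final check confirms the property the derivation was built to produce: the result is perpendicular to both inputs.

```python
def dot(a, b):
    """Dot product of two 3-vectors given as tuples."""
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    """Cross product A x B from the component formula
    <a2 b3 - b2 a3, b1 a3 - a1 b3, a1 b2 - b1 a2>."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3,
            b1 * a3 - a1 * b3,
            a1 * b2 - b1 * a2)


A = (1, 2, 3)
B = (4, 5, 6)
v = cross(A, B)
print(v)                     # (-3, 6, -3)
print(dot(v, A), dot(v, B))  # 0 0
```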

While there is a nice pattern to this vector, it can be a bit difficult to memorize; here is a convenient mnemonic. The determinant of a two by two matrix is \[\left|\matrix{a&b\cr c&d\cr}\right|=ad-cb.\] This is extended to the determinant of a three by three matrix:

\[\eqalign{ \left|\matrix{x&y&z\cr a_1&a_2&a_3\cr b_1&b_2&b_3\cr}\right|&=x\left|\matrix{a_2&a_3\cr b_2&b_3\cr}\right|-y\left|\matrix{a_1&a_3\cr b_1&b_3\cr}\right|+z\left|\matrix{a_1&a_2\cr b_1&b_2\cr}\right|\cr &=x(a_2b_3-b_2a_3)-y(a_1b_3-b_1a_3)+z(a_1b_2-b_1a_2)\cr &=x(a_2b_3-b_2a_3)+y(b_1a_3-a_1b_3)+z(a_1b_2-b_1a_2).\cr} \]

Each of the two by two matrices is formed by deleting the top row and one column of the three by three matrix; the subtraction of the middle term must also be memorized. This is not the place to extol the uses of the determinant; suffice it to say that determinants are extraordinarily useful and important. Here we want to use it merely as a mnemonic device. You will have noticed that the three expressions in parentheses on the last line are precisely the three coordinates of the cross product; replacing \(x\), \(y\), \(z\) by \(\bf i\), \(\bf j\), \(\bf k\) gives us

\[\eqalign{ \left|\matrix{{\bf i}&{\bf j}&{\bf k}\cr a_1&a_2&a_3\cr b_1&b_2&b_3\cr}\right| &=(a_2b_3-b_2a_3){\bf i}-(a_1b_3-b_1a_3){\bf j}+(a_1b_2-b_1a_2){\bf k}\cr &=(a_2b_3-b_2a_3){\bf i}+(b_1a_3-a_1b_3){\bf j}+(a_1b_2-b_1a_2){\bf k}\cr &=\langle a_2b_3-b_2a_3,b_1a_3-a_1b_3,a_1b_2-b_1a_2\rangle\cr &={\bf A}\times{\bf B}.\cr} \]
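The mnemonic can be mirrored in code: each component is a signed two by two minor, obtained by deleting the top row and one column, with the middle term subtracted. This is a small Python sketch under that reading; `det2` and `cross_by_cofactors` are hypothetical helper names chosen for illustration.

```python
def det2(a, b, c, d):
    """Determinant of the two by two matrix [[a, b], [c, d]], i.e. ad - cb."""
    return a * d - c * b


def cross_by_cofactors(a, b):
    """Expand |i j k; a1 a2 a3; b1 b2 b3| along its top row.

    Each coefficient is the minor left after deleting the top row and the
    column of that entry; the middle (j) term carries a minus sign.
    """
    a1, a2, a3 = a
    b1, b2, b3 = b
    i_coeff = det2(a2, a3, b2, b3)    # delete column 1
    j_coeff = -det2(a1, a3, b1, b3)   # delete column 2, then subtract
    k_coeff = det2(a1, a2, b1, b2)    # delete column 3
    return (i_coeff, j_coeff, k_coeff)


print(cross_by_cofactors((1, 2, 3), (4, 5, 6)))  # (-3, 6, -3)
```

The result agrees with the component formula for \({\bf A}\times{\bf B}\), which is the whole point of the mnemonic.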

Given \(\bf A\) and \(\bf B\), there are typically two possible directions and an infinite number of magnitudes that will give a vector perpendicular to both \(\bf A\) and \(\bf B\). As we have picked a particular one, we should investigate the magnitude and direction.

We know how to compute the magnitude of \({\bf A}\times{\bf B}\); it's a bit messy but not difficult. It is somewhat easier to work initially with the square of the magnitude, so as to avoid the square root:

\[\eqalign{ |{\bf A}\times{\bf B}|^2&= (a_2b_3-b_2a_3)^2+(b_1a_3-a_1b_3)^2+(a_1b_2-b_1a_2)^2\cr &=a_2^2b_3^2-2a_2b_3b_2a_3+b_2^2a_3^2+b_1^2a_3^2-2b_1a_3a_1b_3+a_1^2b_3^2+a_1^2b_2^2-2a_1b_2b_1a_2+b_1^2a_2^2\cr }\]

While it is far from obvious, this nasty looking expression can be simplified:

\[\eqalign{ |{\bf A}\times{\bf B}|^2&= (a_1^2+a_2^2+a_3^2)(b_1^2+b_2^2+b_3^2)-(a_1b_1+a_2b_2+a_3b_3)^2\cr &=|{\bf A}|^2|{\bf B}|^2-({\bf A}\cdot{\bf B})^2\cr &=|{\bf A}|^2|{\bf B}|^2-|{\bf A}|^2|{\bf B}|^2\cos^2\theta\cr &=|{\bf A}|^2|{\bf B}|^2(1-\cos^2\theta)\cr &=|{\bf A}|^2|{\bf B}|^2\sin^2\theta\cr |{\bf A}\times{\bf B}|&=|{\bf A}||{\bf B}|\sin\theta\cr }\]
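The step from the expanded sum of squares to \(|{\bf A}|^2|{\bf B}|^2-({\bf A}\cdot{\bf B})^2\) is the one that is "far from obvious." A numerical spot check (not a proof) can make it more believable. The sketch below assumes integer coordinates so the squared identity can be compared exactly, then compares \(|{\bf A}\times{\bf B}|\) with \(|{\bf A}||{\bf B}|\sin\theta\) in floating point for one pair; the helper names are illustrative.

```python
import math
import random


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def lagrange_identity_holds(a, b):
    """Exact check of |A x B|^2 == |A|^2 |B|^2 - (A . B)^2 for integer vectors."""
    c = cross(a, b)
    return dot(c, c) == dot(a, a) * dot(b, b) - dot(a, b) ** 2


# Spot-check the squared identity on random integer vectors (exact arithmetic).
random.seed(0)
for _ in range(1000):
    P = tuple(random.randint(-9, 9) for _ in range(3))
    Q = tuple(random.randint(-9, 9) for _ in range(3))
    assert lagrange_identity_holds(P, Q)

# Compare |A x B| with |A||B| sin(theta) for one pair (floating point).
A, B = (1, 2, 3), (4, 5, 6)
norm_A, norm_B = math.sqrt(dot(A, A)), math.sqrt(dot(B, B))
theta = math.acos(dot(A, B) / (norm_A * norm_B))
C = cross(A, B)
magnitude = math.sqrt(dot(C, C))
via_sine = norm_A * norm_B * math.sin(theta)
print(magnitude, via_sine, math.isclose(magnitude, via_sine))
```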

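Taking the nonnegative square root in the last step is legitimate because \(0\le\theta\le\pi\), so \(\sin\theta\ge0\). This formula for the magnitude of \({\bf A}\times{\bf B}\) closely resembles the formula \({\bf A}\cdot{\bf B}=|{\bf A}||{\bf B}|\cos\theta\) for the dot product, with \(\sin\theta\) in place of \(\cos\theta\). In particular, notice that if \(\bf A\) is parallel to \(\bf B\), the angle between them is zero, so \(\sin\theta=0\) and \(|{\bf A}\times{\bf B}|=0\); likewise, if they are anti-parallel, the angle is \(\pi\), so again \(\sin\theta=0\) and \(|{\bf A}\times{\bf B}|=0\). Conversely, if \(|{\bf A}\times{\bf B}|=0\) and \(|{\bf A}|\) and \(|{\bf B}|\) are not zero, it must be that \(\sin\theta=0\), so \(\bf A\) is parallel or anti-parallel to \(\bf B\).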
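This gives a practical test: two nonzero vectors are parallel or anti-parallel exactly when their cross product is the zero vector. The sketch below assumes exact (integer) coordinates; with floating-point input one would compare the magnitude against a tolerance rather than test for exact zero. The function name is illustrative.

```python
def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def parallel_or_antiparallel(a, b):
    """True when nonzero vectors a and b satisfy |A x B| = 0."""
    if all(x == 0 for x in a) or all(x == 0 for x in b):
        raise ValueError("the test assumes both vectors are nonzero")
    return cross(a, b) == (0, 0, 0)


print(parallel_or_antiparallel((1, 2, 3), (2, 4, 6)))     # True  (parallel)
print(parallel_or_antiparallel((1, 2, 3), (-3, -6, -9)))  # True  (anti-parallel)
print(parallel_or_antiparallel((1, 2, 3), (4, 5, 6)))     # False
```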

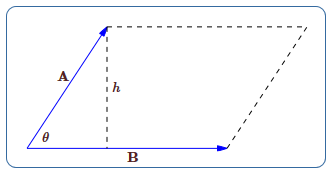

Here is a curious fact about this quantity that turns out to be quite useful later on: given two vectors, we can put them tail to tail and form a parallelogram, as in Figure 12.4.1. The height of the parallelogram, \(h\), is \(|{\bf A}|\sin\theta\), and the base is \(|{\bf B}|\), so the area of the parallelogram is \(|{\bf A}||{\bf B}|\sin\theta\), which is exactly \(|{\bf A}\times{\bf B}|\).
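As a concrete check, take two sides lying in the \(x\)-\(y\) plane, where base and height are easy to read off, and compare with \(|{\bf A}\times{\bf B}|\). A minimal Python sketch; `parallelogram_area` is an illustrative name.

```python
import math


def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def parallelogram_area(a, b):
    """Area of the parallelogram with sides a and b placed tail to tail: |a x b|."""
    c = cross(a, b)
    return math.sqrt(c[0] ** 2 + c[1] ** 2 + c[2] ** 2)


# Base along the x-axis has length 3; the height is 2, the y-coordinate of
# the second side, so base times height is 6.
print(parallelogram_area((3, 0, 0), (1, 2, 0)))  # 6.0
```

The same function works unchanged for sides that do not lie in a coordinate plane, where base and height are harder to compute directly.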

What about the direction of the cross product? Remarkably, there is a simple rule that describes the direction. Let's look at a simple example: let \({\bf A}=\langle a,0,0\rangle\), \({\bf B}=\langle b,c,0\rangle\). If the vectors are placed with tails at the origin, \(\bf A\) lies along the \(x\)-axis and \(\bf B\) lies in the \(x\)-\(y\) plane, so we know the cross product will point either up or down. The cross product is

\[\eqalign{ {\bf A}\times {\bf B}=\left|\matrix{{\bf i}&{\bf j}&{\bf k}\cr a&0&0\cr b&c&0\cr}\right| &=\langle 0,0,ac\rangle.\cr} \]

As predicted, this is a vector pointing up or down, depending on the sign of \(ac\). Suppose that \(a>0\), so the sign depends only on \(c\): if \(c>0\), \(ac>0\) and the vector points up; if \(c < 0\), the vector points down. On the other hand, if \(a < 0\) and \(c>0\), the vector points down, while if \(a < 0\) and \(c < 0\), the vector points up. Here is how to interpret these facts with a single rule: imagine rotating vector \(\bf A\) until it points in the same direction as \(\bf B\). There are two ways to do this; use the rotation that goes through the smaller angle. If \(a>0\) and \(c>0\), or \(a < 0\) and \(c < 0\), the rotation will be counter-clockwise when viewed from above; in the other two cases, \(\bf A\) must be rotated clockwise to reach \(\bf B\). The rule is: counter-clockwise means up, clockwise means down. If \(\bf A\) and \(\bf B\) are any vectors in the \(x\)-\(y\) plane, the same rule applies; \(\bf A\) need not be parallel to the \(x\)-axis.
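The four sign cases can be tabulated in code, computing the rotation sense independently of the cross product so the rule "counter-clockwise means up" can be compared case by case. This is a sketch under stated assumptions: the vectors are neither parallel nor anti-parallel (so \(ac\ne0\)), and `vertical_direction` and `rotation_sense` are hypothetical helper names.

```python
import math


def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def vertical_direction(a, b, c):
    """'up' or 'down' for <a,0,0> x <b,c,0> = <0,0,ac> (assumes ac != 0)."""
    z = cross((a, 0, 0), (b, c, 0))[2]
    return "up" if z > 0 else "down"


def rotation_sense(ax, ay, bx, by):
    """Sense of the smaller rotation taking (ax, ay) to (bx, by), viewed from
    above. Assumes the vectors are neither parallel nor anti-parallel."""
    delta = math.atan2(by, bx) - math.atan2(ay, ax)
    delta = (delta + math.pi) % (2 * math.pi) - math.pi  # wrap into [-pi, pi)
    return "counter-clockwise" if delta > 0 else "clockwise"


b = 1  # the x-coordinate of B does not affect the sign of ac
for a in (2, -2):
    for c in (3, -3):
        print(f"a={a:+d}, c={c:+d}: {rotation_sense(a, 0, b, c)}, "
              f"{vertical_direction(a, b, c)}")
```

Each printed line pairs "counter-clockwise" with "up" and "clockwise" with "down", matching the four cases described above.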

Although it is harder to check computationally for two arbitrary vectors, the rule is essentially the same. Place \(\bf A\) and \(\bf B\) tail to tail. The plane in which \(\bf A\) and \(\bf B\) lie may be viewed from two sides; view it from the side for which \(\bf A\) must rotate counter-clockwise to reach \(\bf B\). Then the vector \({\bf A}\times{\bf B}\) points toward you.

This rule is usually called the right hand rule. Imagine placing the heel of your right hand at the point where the tails are joined, so that your slightly curled fingers indicate the direction of rotation from \(\bf A\) to \(\bf B\). Then your thumb points in the direction of the cross product \({\bf A}\times{\bf B}\).

One immediate consequence of these facts is that, in general, \({\bf A}\times{\bf B}\not={\bf B}\times{\bf A}\), because the two cross products point in opposite directions (they can be equal only when both are \(\bf 0\)). On the other hand, since

\[ |{\bf A}\times{\bf B}|=|{\bf A}||{\bf B}|\sin\theta =|{\bf B}||{\bf A}|\sin\theta=|{\bf B}\times{\bf A}|,\]

the lengths of the two cross products are equal, so we know that \({\bf A}\times{\bf B}=-({\bf B}\times{\bf A})\).
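A quick numerical illustration of \({\bf A}\times{\bf B}=-({\bf B}\times{\bf A})\), reusing the component formula; `negate` is an illustrative helper, and one example is of course not a proof.

```python
def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def negate(v):
    """Componentwise negation, -v."""
    return tuple(-x for x in v)


A, B = (1, 2, 3), (4, 5, 6)
print(cross(A, B))                         # (-3, 6, -3)
print(cross(B, A))                         # (3, -6, 3)
print(cross(A, B) == negate(cross(B, A)))  # True
```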

The cross product has some familiar-looking properties that will be useful later, so we list them here. As with the dot product, these can be proved by performing the appropriate calculations on coordinates, after which we may sometimes avoid such calculations by using the properties.

Theorem 12.4.1

If \({\bf u}\), \({\bf v}\), and \({\bf w}\) are vectors and \(a\) is a real number, then

- \({\bf u}\times({\bf v}+{\bf w}) = {\bf u}\times{\bf v}+{\bf u}\times{\bf w}\)

- \(({\bf v}+{\bf w})\times{\bf u} = {\bf v}\times{\bf u}+{\bf w}\times{\bf u}\)

- \((a{\bf u})\times{\bf v}=a({\bf u}\times{\bf v}) ={\bf u}\times(a{\bf v})\)

- \({\bf u}\cdot({\bf v}\times{\bf w}) = ({\bf u}\times{\bf v})\cdot{\bf w}\)

- \({\bf u}\times({\bf v}\times{\bf w}) = ({\bf u}\cdot{\bf w}){\bf v}-({\bf u}\cdot{\bf v}){\bf w}\)
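Before working through the coordinate proofs, it can be reassuring to spot-check the five properties above numerically. The sketch below is a sanity check, not a proof: it assumes random integer vectors so every comparison is exact, and all helper names (`add`, `sub`, `scale`, `check_properties`) are hypothetical.

```python
import random


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    """Cross product via the component formula."""
    a1, a2, a3 = a
    b1, b2, b3 = b
    return (a2 * b3 - b2 * a3, b1 * a3 - a1 * b3, a1 * b2 - b1 * a2)


def add(a, b):
    """Vector sum a + b."""
    return tuple(x + y for x, y in zip(a, b))


def sub(a, b):
    """Vector difference a - b."""
    return tuple(x - y for x, y in zip(a, b))


def scale(s, a):
    """Scalar multiple s a."""
    return tuple(s * x for x in a)


def check_properties(trials=500, seed=1):
    """Spot-check the five identities of Theorem 12.4.1 on random integer
    vectors; integer arithmetic keeps every comparison exact."""
    rng = random.Random(seed)

    def rand_vec():
        return tuple(rng.randint(-9, 9) for _ in range(3))

    for _ in range(trials):
        u, v, w = rand_vec(), rand_vec(), rand_vec()
        a = rng.randint(-9, 9)
        assert cross(u, add(v, w)) == add(cross(u, v), cross(u, w))
        assert cross(add(v, w), u) == add(cross(v, u), cross(w, u))
        assert cross(scale(a, u), v) == scale(a, cross(u, v)) == cross(u, scale(a, v))
        assert dot(u, cross(v, w)) == dot(cross(u, v), w)
        assert cross(u, cross(v, w)) == sub(scale(dot(u, w), v), scale(dot(u, v), w))
    return True


print(check_properties())  # True
```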

Contributors

Integrated by Justin Marshall.