8.2: Uniform Convergence- Integrals and Derivatives

- Explain the convergence of integrals and derivatives

- Cauchy sequences

We saw in the previous section that if (\(f_n\)) is a sequence of continuous functions which converges uniformly to \(f\) on an interval, then \(f\) must be continuous on the interval as well. This was not necessarily true if the convergence was only pointwise, as we saw a sequence of continuous functions defined on \((-∞,∞)\) converging pointwise to a Fourier series that was not continuous on the real line. Uniform convergence guarantees some other nice properties as well.

Suppose \(f_n\) and \(f\) are integrable and \(f_n \xrightarrow[]{unif}f\) on \([a,b]\). Then

\[\lim_{n \to \infty }\int_{x=a}^{b}f_n(x)dx = \int_{x=a}^{b}f(x)dx\]

Prove Theorem \(\PageIndex{1}\).

- Hint

-

For \(ε > 0\), we need to make \(|f_n(x) - f(x)| < \frac{ε}{b-a}\), for all \(x ∈ [a,b]\).
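As a numerical sanity check of Theorem \(\PageIndex{1}\) (an illustration, not a proof), consider \(f_n(x) = x + \frac{\sin(nx)}{n}\) on \([0,\pi]\). This sequence is an example chosen here, not one taken from the text. Since \(|f_n(x) - x| ≤ \frac{1}{n}\) for every \(x\), the convergence to \(f(x) = x\) is uniform, and both integrals have closed forms. The sketch below compares the gap between the integrals with the bound \((b-a)\cdot \frac{1}{n}\) suggested by the hint; the function names are hypothetical.

```python
import math

A, B = 0.0, math.pi  # the interval [a, b] (an illustrative choice)

def integral_f_n(n):
    """Exact integral of f_n(x) = x + sin(n x)/n over [0, pi]."""
    return math.pi ** 2 / 2 + (1 - math.cos(n * math.pi)) / n ** 2

def integral_f():
    """Exact integral of the limit function f(x) = x over [0, pi]."""
    return math.pi ** 2 / 2

def sup_bound(n):
    """Upper bound for sup |f_n(x) - f(x)| on [0, pi], since |sin(n x)| <= 1."""
    return 1.0 / n

for n in (1, 10, 100, 1000):
    gap = abs(integral_f_n(n) - integral_f())
    # the gap between the integrals never exceeds (b - a) * sup |f_n - f|
    print(n, gap, (B - A) * sup_bound(n))
```

The second printed column should stay below the third, and both shrink as \(n\) grows.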

Notice that this theorem is not true if the convergence is only pointwise, as illustrated by the following.

Consider the sequence of functions (\(f_n\)) given by

\[f_n(x) = \begin{cases} n & \text{ if } x \in \left ( 0, \frac{1}{n} \right ) \\ 0 & \text{otherwise} \end{cases}\]

- Show that \(f_n \xrightarrow[]{ptwise}0\) on \([0,1]\), but \(\lim_{n \to \infty }\int_{x=0}^{1}f_n(x)dx \neq \int_{x=0}^{1}0 dx\).

- Can the convergence be uniform? Explain.
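The behavior of this sequence can be explored numerically before writing a proof. The following sketch uses exact rational arithmetic to evaluate \(f_n\) at a fixed point and to compute the area under \(f_n\), which is a rectangle of height \(n\) and width \(\frac{1}{n}\). The helper names `f` and `integral` are hypothetical, and the output is evidence only, not an argument.

```python
from fractions import Fraction

def f(n, x):
    """f_n(x) = n on the open interval (0, 1/n), and 0 elsewhere."""
    return n if 0 < x < Fraction(1, n) else 0

def integral(n):
    """Integral of f_n over [0, 1]: a rectangle of height n and width 1/n."""
    return n * (Fraction(1, n) - 0)

x = Fraction(1, 10)  # any fixed point of [0, 1] could be used here
# values of f_n at the fixed point x for increasing n
print([f(n, x) for n in (1, 5, 9, 10, 11, 100)])
# integrals of f_n over [0, 1] for increasing n
print([integral(n) for n in (1, 10, 100)])
```

Trying other fixed points \(x\) shows how large \(n\) must be before \(f_n(x)\) settles, which is worth keeping in mind for the second part of the problem.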

Applying this result to power series we have the following.

If \(\sum_{n=0}^{\infty }a_n x^n\) converges uniformly to \(f\) on an interval containing \(0\) and \(x\) then \(\int_{t=0}^{x}f(t)dt = \sum_{n=0}^{\infty }\left ( \frac{a_n}{n+1}x^{n+1} \right )\).

Prove Corollary \(\PageIndex{1}\).

- Hint

-

Remember that

\[\sum_{n=0}^{\infty }f_n(x) = \lim_{N \to \infty }\sum_{n=0}^{N}f_n(x)\]
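To see what Corollary \(\PageIndex{1}\) predicts in a concrete case, take the geometric series \(\sum_{n=0}^{\infty }x^n = \frac{1}{1-x}\), so \(a_n = 1\). Assuming the convergence is uniform on \([0,\frac{1}{2}]\) (a fact this section has not yet established), the corollary gives \(\int_{t=0}^{x}\frac{dt}{1-t} = \sum_{n=0}^{\infty }\frac{x^{n+1}}{n+1}\). The sketch below compares partial sums of the right side with \(-\ln(1-x)\) at \(x = \frac{1}{2}\); the function name is hypothetical.

```python
import math

def integrated_partial_sum(x, N):
    """Sum of x**(n+1)/(n+1) for n = 0..N: the geometric series integrated term by term."""
    return sum(x ** (n + 1) / (n + 1) for n in range(N + 1))

x = 0.5
exact = -math.log(1 - x)  # integral of 1/(1 - t) from 0 to x
for N in (5, 10, 20, 40):
    # partial sums of the integrated series next to the exact integral
    print(N, integrated_partial_sum(x, N), exact)
```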

Surprisingly, the issue of term-by-term differentiation depends not on the uniform convergence of (\(f_n\)), but on the uniform convergence of (\(f'_n\)). More precisely, we have the following result.

Suppose for every \(n ∈ \mathbb{N}\), \(f_n\) is differentiable, \(f'_n\) is continuous, \(f_n \xrightarrow[]{ptwise}f\), and \(f'_n \xrightarrow[]{unif}g\) on an interval, \(I\). Then \(f\) is differentiable and \(f' = g\) on \(I\).

Prove Theorem \(\PageIndex{2}\).

- Hint

-

Let \(a\) be an arbitrary fixed point in \(I\) and let \(x ∈ I\). By the Fundamental Theorem of Calculus, we have

\[\int_{t=a}^{x}f'_n(t)dt = f_n(x) - f_n(a) \nonumber\]

Take the limit of both sides and differentiate with respect to \(x\).
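A small experiment shows why the hypothesis in Theorem \(\PageIndex{2}\) is placed on (\(f'_n\)) rather than on (\(f_n\)). The sequence \(f_n(x) = \frac{\sin(nx)}{n}\), an example chosen here and not taken from the text, converges uniformly to \(f = 0\) because \(|f_n(x)| ≤ \frac{1}{n}\). Its derivatives \(f'_n(x) = \cos(nx)\) satisfy \(f'_n(0) = 1\) for every \(n\), while \(f'(0) = 0\), and at \(x = \pi\) they alternate between \(-1\) and \(1\). The function names below are hypothetical.

```python
import math

def f_n(n, x):
    """f_n(x) = sin(n x)/n, which converges uniformly to 0 since |f_n(x)| <= 1/n."""
    return math.sin(n * x) / n

def df_n(n, x):
    """Derivative f_n'(x) = cos(n x)."""
    return math.cos(n * x)

for n in (1, 2, 3, 10, 100):
    # f_n(1) shrinks with n, f_n'(0) stays at 1, and f_n'(pi) follows the parity of n
    print(n, f_n(n, 1.0), df_n(n, 0.0), df_n(n, math.pi))
```

Here uniform convergence of (\(f_n\)) alone does not make the limit of the derivatives equal the derivative of the limit, which is consistent with the theorem since (\(f'_n\)) fails to converge at \(x = \pi\).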

As before, applying this to power series gives the following result.

If \(\sum_{n=0}^{\infty }a_n x^n\) converges pointwise to \(f\) on an interval containing \(0\) and \(x\) and \(\sum_{n=1}^{\infty }a_n nx^{n-1}\) converges uniformly on an interval containing \(0\) and \(x\), then \(f'(x) = \sum_{n=1}^{\infty }a_n nx^{n-1}\).

Prove Corollary \(\PageIndex{2}\).
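As a concrete instance of Corollary \(\PageIndex{2}\), take \(a_n = 1\), so that \(f(x) = \frac{1}{1-x}\) for \(|x| < 1\). Assuming the differentiated series converges uniformly on \([0,\frac{1}{2}]\) (not yet established at this point in the text), the corollary predicts \(\sum_{n=1}^{\infty }nx^{n-1} = \frac{1}{(1-x)^2}\). The sketch below checks this numerically at \(x = \frac{1}{2}\); the function name is hypothetical.

```python
def differentiated_partial_sum(x, N):
    """Sum of n * x**(n-1) for n = 1..N: the geometric series differentiated term by term."""
    return sum(n * x ** (n - 1) for n in range(1, N + 1))

x = 0.5
exact = 1 / (1 - x) ** 2  # derivative of 1/(1 - x) evaluated at x
for N in (5, 10, 20, 40, 80):
    # partial sums of the differentiated series next to the exact derivative
    print(N, differentiated_partial_sum(x, N), exact)
```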

The above results say that a power series can be differentiated and integrated term-by-term as long as the convergence is uniform. Fortunately, it turns out that when a power series converges, the convergence of the series and of its integrated and differentiated series is also uniform (almost).

However we do not yet have all of the tools necessary to see this. To build these tools requires that we return briefly to our study, begun in Chapter 4, of the convergence of sequences.

Cauchy Sequences

Knowing that a sequence or a series converges and knowing what it converges to are typically two different matters. For example, we know that \(\sum_{n=0}^{\infty }\frac{1}{n!}\) and \(\sum_{n=0}^{\infty }\frac{1}{n!n!}\) both converge. The first converges to \(e\), which has meaning in other contexts. We don’t know what the second one converges to, other than to say it converges to \(\sum_{n=0}^{\infty }\frac{1}{n!n!}\). In fact, that question might not have much meaning without some other context in which \(\sum_{n=0}^{\infty }\frac{1}{n!n!}\) arises naturally. Be that as it may, we need to look at the convergence of a series (or a sequence for that matter) without necessarily knowing what it might converge to. We make the following definition.

Let (\(s_n\)) be a sequence of real numbers. We say that (\(s_n\)) is a Cauchy sequence if for any \(ε > 0\), there exists a real number \(N\) such that if \(m\), \(n > N\), then \(|s_m - s_n| < ε\).
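The definition can be tested on the partial sums of \(\sum_{n=0}^{\infty }\frac{1}{n!n!}\) without knowing their limit. The sketch below computes the partial sums exactly as rational numbers and reports the largest gap \(|s_m - s_n|\) among indices in a finite window beyond \(N\). A finite window can only suggest the Cauchy property, not establish it, and the names `partial_sum` and `spread` are hypothetical.

```python
from fractions import Fraction
from math import factorial

def partial_sum(n):
    """s_n = sum of 1/(k! k!) for k = 0..n, computed exactly as a rational number."""
    return sum(Fraction(1, factorial(k) ** 2) for k in range(n + 1))

def spread(N, M):
    """Largest |s_m - s_n| over all indices m, n with N < n, m <= M."""
    sums = [partial_sum(n) for n in range(N + 1, M + 1)]
    return max(sums) - min(sums)

for N in (1, 2, 4, 8):
    # the gap between terms beyond N shrinks rapidly as N grows
    print(N, float(spread(N, N + 20)))
```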

Notice that this definition says that the terms in a Cauchy sequence get arbitrarily close to each other and that there is no reference to getting close to any particular fixed real number. Furthermore, you have already seen lots of examples of Cauchy sequences as illustrated by the following result.

Suppose (\(s_n\)) is a sequence of real numbers which converges to \(s\). Then (\(s_n\)) is a Cauchy sequence.

Intuitively, this result makes sense. If the terms in a sequence are getting arbitrarily close to \(s\), then they should be getting arbitrarily close to each other. This is the basis of the proof.

Prove Theorem \(\PageIndex{3}\).

- Hint

-

\(|s_m - s_n| = |s_m - s + s - s_n| ≤ |s_m - s|+|s - s_n|\)

So any convergent sequence is automatically Cauchy. For the real number system, the converse is also true and, in fact, is equivalent to any of our completeness axioms: the NIP, the Bolzano-Weierstrass Theorem, or the LUB Property. Thus, this could have been taken as our completeness axiom and we could have used it to prove the others. One of the most convenient ways to prove this converse is to use the Bolzano-Weierstrass Theorem. To do that, we must first show that a Cauchy sequence must be bounded. This result is reminiscent of the fact that a convergent sequence is bounded (Lemma 4.2.2 of Chapter 4) and the proof is very similar.

Suppose (\(s_n\)) is a Cauchy sequence. Then there exists \(B > 0\) such that \(|s_n|≤ B\) for all \(n\).

Prove Lemma \(\PageIndex{1}\).

- Hint

-

This is similar to Exercise 4.2.4 of Chapter 4. There exists \(N\) such that if \(m\), \(n > N\) then \(|s_n - s_m| < 1\). Choose a fixed \(m > N\) and let \(B = \max \left (|s_1|, |s_2|,..., |s_{\left \lceil N \right \rceil}|,|s_m|+ 1 \right )\).

Suppose (\(s_n\)) is a Cauchy sequence of real numbers. There exists a real number \(s\) such that \(\lim_{n \to \infty }s_n = s\).

- Sketch of Proof

-

We know that (\(s_n\)) is bounded, so by the Bolzano-Weierstrass Theorem, it has a convergent subsequence (\(s_{n_k}\)) converging to some real number \(s\). We have \(|s_n - s| = |s_n - s_{n_k} + s_{n_k} - s| ≤ |s_n - s_{n_k}|+|s_{n_k} - s|\). If we choose \(n\) and \(n_k\) large enough, we should be able to make each term arbitrarily small.

Provide a formal proof of Theorem \(\PageIndex{4}\).

From Theorem \(\PageIndex{4}\) we see that every Cauchy sequence converges in \(\mathbb{R}\). Moreover, the proof of this fact depends on the Bolzano-Weierstrass Theorem which, as we have seen, is equivalent to our completeness axiom, the Nested Interval Property. What this means is that if there is a Cauchy sequence which does not converge, then the NIP is not true. A natural question to ask is: if every Cauchy sequence converges, does the NIP follow? That is, is the convergence of Cauchy sequences also equivalent to our completeness axiom? The following theorem shows that the answer is yes.

Suppose every Cauchy sequence converges. Then the Nested Interval Property is true.

Prove Theorem \(\PageIndex{5}\).

- Hint

-

If we start with two sequences (\(x_n\)) and (\(y_n\)), satisfying all of the conditions of the NIP, you should be able to show that these are both Cauchy sequences.

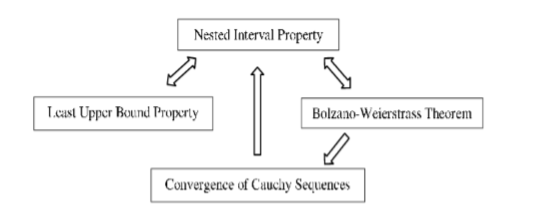

Exercises \(\PageIndex{8}\) and \(\PageIndex{9}\) tell us that the following are equivalent: the Nested Interval Property, the Bolzano-Weierstrass Theorem, the Least Upper Bound Property, and the convergence of Cauchy sequences. Thus any one of these could have been taken as the completeness axiom of the real number system and then used to prove the each of the others as a theorem according to the following dependency graph:

Figure \(\PageIndex{1}\): Dependency graph.

Since we can get from any node on the graph to any other, simply by following the implications (indicated with arrows), any one of these statements is logically equivalent to each of the others.

Since the convergence of Cauchy sequences can be taken as the completeness axiom for the real number system, it does not hold for the rational number system. Give an example of a Cauchy sequence of rational numbers which does not converge to a rational number.

If we apply the above ideas to series we obtain the following important result, which will provide the basis for our investigation of power series.

The series \(\sum_{k=0}^{\infty }a_k\) converges if and only if \(∀ ε > 0, ∃N\) such that if \(m > n > N\) then \(\left |\sum_{k=n+1}^{m}a_k \right | < \varepsilon\).

Prove the Cauchy criterion.
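The criterion can be probed numerically by looking at the blocks \(\left |\sum_{k=n+1}^{m}a_k \right |\) with \(m = 2n\). In the sketch below (with hypothetical function names, and series indexed from \(k = 1\)), the blocks of the harmonic series never drop below \(\frac{1}{2}\), since each of the \(n\) terms is at least \(\frac{1}{2n}\), while the blocks of \(\sum \frac{1}{k^2}\) are at most \(\frac{1}{n}\). This is a finite experiment and does not replace the proof.

```python
def block_sum(term, n, m):
    """Return |a_{n+1} + ... + a_m| for the series whose k-th term is term(k)."""
    return abs(sum(term(k) for k in range(n + 1, m + 1)))

def harmonic(k):
    """Terms 1/k of the harmonic series (k >= 1)."""
    return 1.0 / k

def inverse_squares(k):
    """Terms 1/k**2 of a convergent series (k >= 1)."""
    return 1.0 / k ** 2

for n in (10, 100, 1000, 10000):
    # compare the blocks from n+1 to 2n for both series
    print(n, block_sum(harmonic, n, 2 * n), block_sum(inverse_squares, n, 2 * n))
```

The first column of block sums stays bounded away from \(0\), so no \(N\) can work for \(ε = \frac{1}{2}\); the second column shrinks as \(n\) grows.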

At this point several of the tests for convergence that you probably learned in calculus are easily proved. For example:

Show that if \(\sum_{n=1}^{\infty }a_n\) converges then \(\lim_{n \to \infty }a_n = 0\).

Show that \(\sum_{k=1}^{\infty }a_k\) converges if and only if \(\lim_{n \to \infty }\sum_{k=n+1}^{\infty }a_k = 0\).

- Hint

-

The hardest part of this problem is recognizing that it is really about the limit of a sequence as in Chapter 4.

You may also recall the Comparison Test from studying series in calculus: suppose \(0 ≤ a_n ≤ b_n\); if \(\sum b_n\) converges, then \(\sum a_n\) converges. This result follows from the fact that the partial sums of \(\sum a_n\) form an increasing sequence which is bounded above by \(\sum b_n\). (See Corollary 7.4.1 of Chapter 7.) The Cauchy Criterion allows us to extend this to the case where the terms \(a_n\) could be negative as well. This can be seen in the following theorem.

Suppose \(|a_n| ≤ b_n\) for all \(n\). If \(\sum b_n\) converges then \(\sum a_n\) also converges.

Prove Theorem \(\PageIndex{7}\).

- Hint

-

Use the Cauchy criterion with the fact that \(\left |\sum_{k=n+1}^{m}a_k \right | \leq \sum_{k=n+1}^{m}\left |a_k \right |\).
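The chain of inequalities behind this hint, \(\left |\sum_{k=n+1}^{m}a_k \right | ≤ \sum_{k=n+1}^{m}|a_k| ≤ \sum_{k=n+1}^{m}b_k\), can be observed numerically. The sketch below uses \(a_k = \frac{\sin k}{k^2}\) and \(b_k = \frac{1}{k^2}\), which are illustrative choices made here and not examples from the text; the function names are hypothetical.

```python
import math

def a(k):
    """A term that changes sign: a_k = sin(k)/k**2 (an illustrative choice)."""
    return math.sin(k) / k ** 2

def b(k):
    """Dominating term b_k = 1/k**2, so that |a_k| <= b_k."""
    return 1.0 / k ** 2

def block(term, n, m):
    """Sum of term(k) for k = n+1..m."""
    return sum(term(k) for k in range(n + 1, m + 1))

for n, m in ((10, 20), (100, 500), (1000, 5000)):
    # chain from the hint: |sum a_k| <= sum |a_k| <= sum b_k
    print(abs(block(a, n, m)), block(lambda k: abs(a(k)), n, m), block(b, n, m))
```

Each printed row should be nondecreasing from left to right, so any \(N\) that makes the blocks of \(\sum b_n\) small also makes the blocks of \(\sum a_n\) small.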

The following definition is of marked importance in the study of series.

Given a series \(\sum a_n\), the series \(\sum \left |a_n \right |\) is called the absolute series of \(\sum a_n\) and if \(\sum \left |a_n \right |\) converges then we say that \(\sum a_n\) converges absolutely.

The significance of this definition comes from the following result.

If \(\sum a_n\) converges absolutely, then \(\sum a_n\) converges.

Show that Corollary \(\PageIndex{3}\) is a direct consequence of Theorem \(\PageIndex{7}\).

If \(\sum_{n=0}^{\infty } \left | a_n \right | = s\), then does it follow that \(s = \left | \sum_{n=0}^{\infty } a_n \right |\)? Justify your answer. What can be said?

The converse of Corollary \(\PageIndex{3}\) is not true as evidenced by the series \(\sum_{n=0}^{\infty } \frac{(-1)^n}{n+1}\). As we noted in Chapter 3, this series converges to \(\ln 2\). However, its absolute series is the Harmonic Series which diverges. Any such series which converges, but not absolutely, is said to converge conditionally. Recall also that in Chapter 3, we showed that we could rearrange the terms of the series \(\sum_{n=0}^{\infty } \frac{(-1)^n}{n+1}\) to make it converge to any number we wished. We noted further that all rearrangements of the series \(\sum_{n=0}^{\infty } \frac{(-1)^n}{(n+1)^2}\) converged to the same value. The difference between the two series is that the latter converges absolutely whereas the former does not. Specifically, we have the following result.

Suppose \(\sum a_n\) converges absolutely and let \(s = \sum_{n=0}^{\infty } a_n\). Then any rearrangement of \(\sum a_n\) must converge to \(s\).

- Sketch of Proof

-

We will first show that this result is true in the case where \(a_n ≥ 0\). If \(\sum b_n\) represents a rearrangement of \(\sum a_n\), then notice that the sequence of partial sums \(\left ( \sum_{k=0}^{n}b_k \right )_{n=0}^{\infty }\) is an increasing sequence which is bounded by \(s\). By Corollary 7.4.1 of Chapter 7, this sequence must converge to some number \(t\) and \(t ≤ s\). Furthermore \(\sum a_n\) is also a rearrangement of \(\sum b_n\). Thus the result holds for this special case. (Why?) For the general case, notice that \(a_n = \frac{|a_n|+a_n}{2} - \frac{|a_n| - a_n}{2}\) and that \(\sum \frac{\left | a_n \right | + a_n}{2}\) and \(\sum \frac{\left | a_n \right | - a_n}{2}\) are both convergent series with nonnegative terms. By the special case \(\sum \frac{\left | b_n \right | + b_n}{2} = \sum \frac{\left | a_n \right | + a_n}{2}\) and \(\sum \frac{\left | b_n \right | - b_n}{2} = \sum \frac{\left | a_n \right | - a_n}{2}\).

Fill in the details and provide a formal proof of Theorem \(\PageIndex{8}\).
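The contrast between conditional and absolute convergence described before Theorem \(\PageIndex{8}\) can be seen numerically. The sketch below applies one particular rearrangement (two positive terms followed by one negative term, a choice made here for illustration) to both \(\sum_{n=0}^{\infty } \frac{(-1)^n}{n+1}\) and \(\sum_{n=0}^{\infty } \frac{(-1)^n}{(n+1)^2}\). For the first series this rearrangement is known to converge to \(\frac{3}{2}\ln 2\) rather than \(\ln 2\); for the second, the rearranged and original sums should agree, as the theorem asserts. The function names are hypothetical.

```python
import math

def rearranged_sum(term, blocks):
    """Sum a rearrangement taking two positive terms, then one negative term, per block.

    term(n) must be positive for even n and negative for odd n.
    """
    total = 0.0
    for j in range(blocks):
        total += term(4 * j) + term(4 * j + 2)  # next two even-index (positive) terms
        total += term(2 * j + 1)                # next odd-index (negative) term
    return total

def conditional(n):
    """Terms (-1)**n / (n + 1) of the series converging to ln 2."""
    return (-1) ** n / (n + 1)

def absolute(n):
    """Terms (-1)**n / (n + 1)**2 of an absolutely convergent series."""
    return (-1) ** n / (n + 1) ** 2

blocks = 20000
# partial sums in the original order, using the same number of terms (3 per block)
in_order_cond = sum(conditional(n) for n in range(3 * blocks))
in_order_abs = sum(absolute(n) for n in range(3 * blocks))
print(in_order_cond, rearranged_sum(conditional, blocks))
print(in_order_abs, rearranged_sum(absolute, blocks))
```

The two numbers on the first printed line differ noticeably, while the two on the second line agree to several decimal places.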