12.3: Forced Systems

Many problems can be modeled by nonhomogeneous second order equations. Thus, we want to find solutions of equations of the form \[L y(x)=a(x) y^{\prime \prime}(x)+b(x) y^{\prime}(x)+c(x) y(x)=f(x) .\label{eq:1}\] As noted in Section 12.2, one solves this equation by finding the general solution of the homogeneous problem, \[L y_{h}=0\nonumber \] and a particular solution of the nonhomogeneous problem, \[L y_{p}=f .\nonumber \] Then, the general solution of \(\eqref{eq:1}\) is simply given as \(y=y_{h}+y_{p}\).

So far, we only know how to solve constant coefficient, homogeneous equations. So, when a nonhomogeneous term is added to such an equation, we need to find a particular solution of the nonhomogeneous equation.

We could guess a solution, but that is not usually possible without a little bit of experience. So, we need some other methods. There are two main methods. In the first, the Method of Undetermined Coefficients, one makes an intelligent guess based on the form of \(f(x)\). In the second, the Method of Variation of Parameters, one systematically develops the particular solution. We will come back to the Method of Variation of Parameters and we will also introduce the powerful machinery of Green’s functions later in this section.

Method of Undetermined Coefficients

Let's solve a simple differential equation highlighting how we can handle nonhomogeneous equations.

Consider the equation \[y^{\prime \prime}+2 y^{\prime}-3 y=4 \text {. }\label{eq:2}\]

Solution

The first step is to determine the solution of the homogeneous equation. Thus, we solve \[y_{h}^{\prime \prime}+2 y_{h}^{\prime}-3 y_{h}=0 .\label{eq:3}\] The characteristic equation is \(r^{2}+2 r-3=0\). The roots are \(r=1,-3\). So, we can immediately write the solution \[y_{h}(x)=c_{1} e^{x}+c_{2} e^{-3 x} .\nonumber \]

The second step is to find a particular solution of \(\eqref{eq:2}\). What possible function can we insert into this equation such that only a 4 remains? If we try something proportional to \(x\), then we are left with a linear function after inserting \(x\) and its derivatives. Perhaps a constant function you might think. \(y=4\) does not work. But, we could try an arbitrary constant, \(y=A\).

Let’s see. Inserting \(y=A\) into \(\eqref{eq:2}\), we obtain \[-3 A=4 \text {. }\nonumber \] Ah ha! We see that we can choose \(A=-\frac{4}{3}\) and this works. So, we have a particular solution, \(y_{p}(x)=-\frac{4}{3}\). This step is done.

Combining the two solutions, we have the general solution to the original nonhomogeneous equation \(\eqref{eq:2}\). Namely, \[y(x)=y_{h}(x)+y_{p}(x)=c_{1} e^{x}+c_{2} e^{-3 x}-\frac{4}{3} .\nonumber \] Insert this solution into the equation and verify that it is indeed a solution. If we had been given initial conditions, we could now use them to determine the arbitrary constants.
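The verification suggested above can also be sketched numerically. The following is a minimal check, not a proof: it estimates the derivatives by central differences and evaluates \(y^{\prime \prime}+2 y^{\prime}-3 y-4\) at a few sample points. The function names, the step size `h`, and the values of \(c_1\) and \(c_2\) are illustrative assumptions.

```python
import math

def residual(y, x, h=1e-4):
    """Central-difference estimate of y'' + 2y' - 3y - 4 at the point x."""
    d1 = (y(x + h) - y(x - h)) / (2 * h)
    d2 = (y(x + h) - 2 * y(x) + y(x - h)) / h**2
    return d2 + 2 * d1 - 3 * y(x) - 4

def general_solution(c1, c2):
    """y(x) = c1 e^x + c2 e^(-3x) - 4/3 for given constants."""
    return lambda x: c1 * math.exp(x) + c2 * math.exp(-3 * x) - 4 / 3

# Arbitrary constants chosen only for illustration.
y = general_solution(c1=0.7, c2=-1.2)
max_residual = max(abs(residual(y, x)) for x in (-1.0, 0.0, 0.5, 1.0))
print(max_residual)  # small, limited by finite-difference and rounding error
```

The residual stays near zero for any choice of the constants, which is what one expects of a general solution.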

What if we had a different source term? Consider the equation \[y^{\prime \prime}+2 y^{\prime}-3 y=4 x .\label{eq:4}\] The only thing that would change is the particular solution. So, we need a guess.

Solution

We know a constant function does not work by the last example. So, let’s try \(y_{p}=A x\). Inserting this function into Equation \(\eqref{eq:4}\), we obtain \[2 A-3 A x=4 x \text {. }\nonumber \] Picking \(A=-4 / 3\) would get rid of the \(x\) terms, but will not cancel everything. We still have a constant left. So, we need something more general.

Let’s try a linear function, \(y_{p}(x)=A x+B\). Then we get after substitution into \(\eqref{eq:4}\) \[2 A-3(A x+B)=4 x \text {. }\nonumber \] Equating the coefficients of the different powers of \(x\) on both sides, we find a system of equations for the undetermined coefficients: \[\begin{align} 2 A-3 B &=0\nonumber \\ -3 A &=4 .\label{eq:5} \end{align}\] These are easily solved to obtain \[\begin{align} A &=-\frac{4}{3}\nonumber \\ B &=\frac{2}{3} A=-\frac{8}{9} .\label{eq:6} \end{align}\] So, the particular solution is \[y_{p}(x)=-\frac{4}{3} x-\frac{8}{9}\nonumber \] This gives the general solution to the nonhomogeneous problem as \[y(x)=y_{h}(x)+y_{p}(x)=c_{1} e^{x}+c_{2} e^{-3 x}-\frac{4}{3} x-\frac{8}{9} .\nonumber \]

There are general forms that you can guess based upon the form of the driving term, \(f(x)\). Some examples are given in Table \(\PageIndex{1}\). More general applications are covered in a standard text on differential equations. However, the procedure is simple. Given \(f(x)\) in a particular form, you make an appropriate guess up to some unknown parameters, or coefficients. Inserting the guess leads to a system of equations for the unknown coefficients. Solve the system and you have the solution. This solution is then added to the general solution of the homogeneous differential equation.

| \(f(x)\) | Guess |
|---|---|
| \(a_{n} x^{n}+a_{n-1} x^{n-1}+\cdots+a_{1} x+a_{0}\) | \(A_{n} x^{n}+A_{n-1} x^{n-1}+\cdots+A_{1} x+A_{0}\) |
| \(a e^{b x}\) | \(A e^{b x}\) |
| \(a \cos \omega x+b \sin \omega x\) | \(A \cos \omega x+B \sin \omega x\) |
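For a linear driving term, the coefficient matching can be sketched in a few lines. This is a minimal illustration for the equation \(y^{\prime \prime}+p y^{\prime}+q y=a_{1} x+a_{0}\) with the guess \(y_{p}=A x+B\); the function name `linear_guess` and its parameter names are assumptions made for this sketch, and exact fractions are used so that the results can be compared directly with the worked examples.

```python
from fractions import Fraction

def linear_guess(p, q, a1, a0):
    """Coefficients (A, B) of y_p = A x + B for y'' + p y' + q y = a1 x + a0.

    Substituting the guess gives p*A + q*(A x + B) = a1 x + a0, so
        x^1 terms:  q*A       = a1
        x^0 terms:  p*A + q*B = a0
    """
    p, q, a1, a0 = map(Fraction, (p, q, a1, a0))
    if q == 0:
        # Constants then solve the homogeneous equation, so this guess fails.
        raise ValueError("guess must be modified when q = 0")
    A = a1 / q
    B = (a0 - p * A) / q
    return A, B

# y'' + 2y' - 3y = 4x, the second example above
A, B = linear_guess(p=2, q=-3, a1=4, a0=0)
print(A, B)  # -4/3 -8/9
```

Calling `linear_guess(2, -3, 0, 4)` gives \(A=0\) and \(B=-\frac{4}{3}\), the constant particular solution of the first example.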

Solve \[y^{\prime \prime}+2 y^{\prime}-3 y=2 e^{-3 x} \text {. }\label{eq:7}\]

Solution

According to the above, we would guess a solution of the form \(y_{p}=A e^{-3 x}\). Inserting our guess, we find \[0=2 e^{-3 x} \text {. }\nonumber \] Oops! The coefficient, \(A\), disappeared! We cannot solve for it. What went wrong?

The answer lies in the general solution of the homogeneous problem. Note that \(e^{x}\) and \(e^{-3 x}\) are solutions to the homogeneous problem. So, a multiple of \(e^{-3 x}\) will not get us anywhere. It turns out that there is one further modification of the method. If the driving term contains terms that are solutions of the homogeneous problem, then we need to make a guess consisting of the smallest possible power of \(x\) times the function which is no longer a solution of the homogeneous problem. Namely, we guess \(y_{p}(x)=A x e^{-3 x}\) and differentiate this guess to obtain the derivatives \(y_{p}^{\prime}=A(1-3 x) e^{-3 x}\) and \(y_{p}^{\prime \prime}=A(9 x-6) e^{-3 x}\).

Inserting these derivatives into the differential equation, we obtain \[[(9 x-6)+2(1-3 x)-3 x] A e^{-3 x}=2 e^{-3 x} .\nonumber \] Comparing coefficients, we have \[-4 A=2 \text {. }\nonumber \] So, \(A=-1 / 2\) and \(y_{p}(x)=-\frac{1}{2} x e^{-3 x}\). Thus, the general solution to the problem is \[y(x)=c_{1} e^{x}+\left(c_{2}-\frac{1}{2} x\right) e^{-3 x} .\nonumber \]

In general, if any term in the guess \(y_{p}(x)\) is a solution of the homogeneous equation, then multiply the guess by \(x^{k}\), where \(k\) is the smallest positive integer such that no term in \(x^{k} y_{p}(x)\) is a solution of the homogeneous problem.
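For an exponential driving term \(a e^{b x}\) this rule can be made concrete. Writing \(P(r)=r^{2}+p r+q\) for the characteristic polynomial of \(y^{\prime \prime}+p y^{\prime}+q y\), one can check by direct differentiation that \(L\left[e^{b x}\right]=P(b) e^{b x}\) and \(L\left[x e^{b x}\right]=\left(P(b) x+P^{\prime}(b)\right) e^{b x}\). The sketch below uses these identities; the function name `exponential_guess` and the default values \(p=2\), \(q=-3\) are assumptions taken from the running example.

```python
from fractions import Fraction

def exponential_guess(a, b, p=2, q=-3):
    """Find (A, k) so that y_p = A * x**k * exp(b*x) solves
    y'' + p*y' + q*y = a*exp(b*x), where k is the multiplicity of b
    as a root of the characteristic polynomial P(r) = r**2 + p*r + q."""
    a, b, p, q = map(Fraction, (a, b, p, q))
    P = b * b + p * b + q    # P(b)
    dP = 2 * b + p           # P'(b)
    if P != 0:
        return a / P, 0      # plain guess A e^{bx} works
    if dP != 0:
        return a / dP, 1     # simple root: guess A x e^{bx}
    return a / 2, 2          # double root: L[x^2 e^{bx}] = 2 e^{bx}

# y'' + 2y' - 3y = 2 e^{-3x}: b = -3 is a simple root, so k = 1
A, k = exponential_guess(a=2, b=-3)
print(A, k)  # -1/2 1
```

The case \(P(b)=0\) is exactly the situation in which the coefficient \(A\) disappeared in the example above.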

Periodically Forced Oscillations

A special type of forcing is periodic forcing. Realistic oscillations will dampen and eventually stop if left unattended. For example, mechanical clocks are driven by compound or torsional pendula and electric oscillators are often designed with the need to continue for long periods of time. However, they are not perpetual motion machines and will need a periodic injection of energy. This can be done systematically by adding periodic forcing. Another simple example is the motion of a child on a swing in the park. This simple damped pendulum system will naturally slow down to equilibrium (stopped) if left alone. However, if the child pumps energy into the swing at the right time, or if an adult pushes the child at the right time, then the amplitude of the swing can be increased.

There are other systems, such as airplane wings and long bridge spans, in which external driving forces might cause damage to the system. A well known example is the wind induced collapse of the Tacoma Narrows Bridge. Of course, if one is not careful, the child in the last example might get too much energy pumped into the system causing a similar failure of the desired motion.

The Tacoma Narrows Bridge opened in Washington State (U.S.) in mid 1940. However, in November of the same year the winds excited a transverse mode of vibration, which eventually (in a few hours) led to large amplitude oscillations and then collapse.

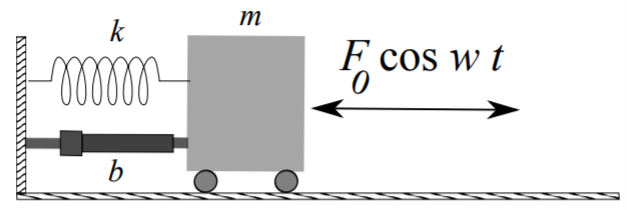

While there are many types of forced systems, and some fairly complicated, we can easily get to the basic characteristics of forced oscillations by modifying the mass-spring system by adding an external, time-dependent, driving force. Such a system satisfies the equation \[m \ddot{x}+b \dot{x}+k x=F(t),\label{eq:8}\] where \(m\) is the mass, \(b\) is the damping constant, \(k\) is the spring constant, and \(F(t)\) is the driving force. If \(F(t)\) is of simple form, then we can employ the Method of Undetermined Coefficients. Since the systems we have considered so far are similar, one could easily apply the following to pendula or circuits.

As the damping term only complicates the solution, we will consider the simpler case of undamped motion and assume that \(b=0\). Furthermore, we will introduce a sinusoidal driving force, \(F(t)=F_{0} \cos \omega t\) in order to study periodic forcing. This leads to the simple periodically driven mass on a spring system \[m \ddot{x}+k x=F_{0} \cos \omega t .\label{eq:9}\]

In order to find the general solution, we first obtain the solution to the homogeneous problem, \[x_{h}=c_{1} \cos \omega_{0} t+c_{2} \sin \omega_{0} t\nonumber \] where \(\omega_{0}=\sqrt{\frac{k}{m}}\). Next, we seek a particular solution to the nonhomogeneous problem. We will apply the Method of Undetermined Coefficients.

A natural guess for the particular solution would be to use \(x_{p}=A \cos \omega t+B \sin \omega t\). However, recall that the guess should not be a solution of the homogeneous problem. Comparing \(x_{p}\) with \(x_{h}\), this would hold if \(\omega \neq \omega_{0}\). Otherwise, one would need to use the Modified Method of Undetermined Coefficients as described in the last section. So, we have two cases to consider.

Dividing through by the mass, we solve the simple driven system, \[\ddot{x}+\omega_{0}^{2} x=\frac{F_{0}}{m} \cos \omega t .\nonumber \]

Solve \(\ddot{x}+\omega_{0}^{2} x=\frac{F_{0}}{m} \cos \omega t\), for \(\omega \neq \omega_{0}\).

Solution

In this case we continue with the guess \(x_{p}=A \cos \omega t+B \sin \omega t\). Since there is no damping term, one quickly finds that \(B=0\). Inserting \(x_{p}=A \cos \omega t\) into the differential equation, we find that \[\left(-\omega^{2}+\omega_{0}^{2}\right) A \cos \omega t=\frac{F_{0}}{m} \cos \omega t .\nonumber \] Solving for \(A\), we obtain \[A=\frac{F_{0}}{m\left(\omega_{0}^{2}-\omega^{2}\right)} \text {. }\nonumber \]

The general solution for this case is thus, \[x(t)=c_{1} \cos \omega_{0} t+c_{2} \sin \omega_{0} t+\frac{F_{0}}{m\left(\omega_{0}^{2}-\omega^{2}\right)} \cos \omega t .\label{eq:10}\]

Solve \(\ddot{x}+\omega_{0}^{2} x=\frac{F_{0}}{m} \cos \omega_{0} t\).

Solution

In this case, we need to employ the Modified Method of Undetermined Coefficients. So, we make the guess \(x_p = t(A \cos\omega_0 t + B \sin\omega_0 t)\). Since there is no damping term, one finds that \(A = 0\). Inserting the guess into the differential equation, we find that \[B=\frac{F_0}{2m\omega_0},\nonumber\] so the general solution is \[x(t)=c_1\cos\omega_0t+c_2\sin\omega_0t+\frac{F_0}{2m\omega_0}t\sin\omega_0 t.\label{eq:11}\]

The general solution to the problem is thus \[ x(t)=c_{1} \cos \omega_{0} t+c_{2} \sin \omega_{0} t+\left\{\begin{array}{ll} \frac{F_{0}}{m\left(\omega_{0}^{2}-\omega^{2}\right)} \cos \omega t, & \omega \neq \omega_{0}, \\ \frac{F_{0}}{2 m \omega_{0}} t \sin \omega_{0} t, & \omega=\omega_{0} . \end{array}\right.\label{eq:12} \]

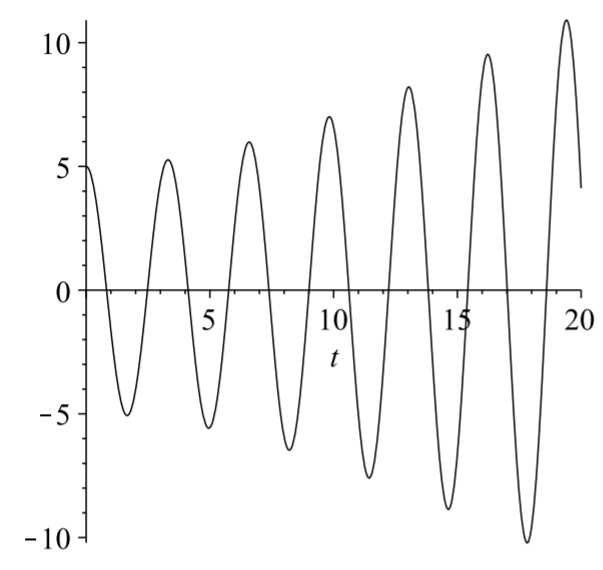

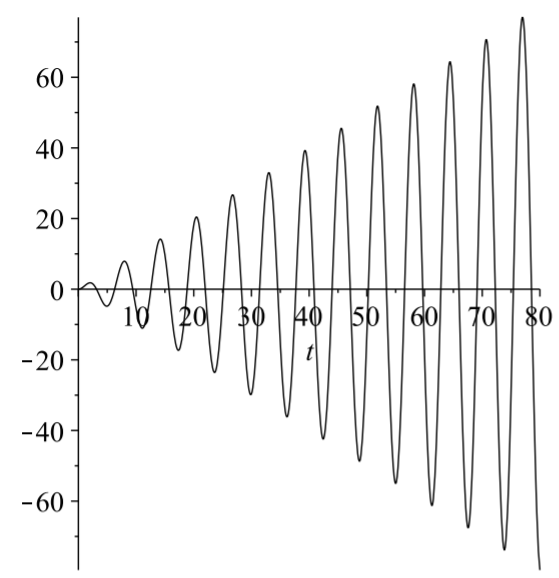

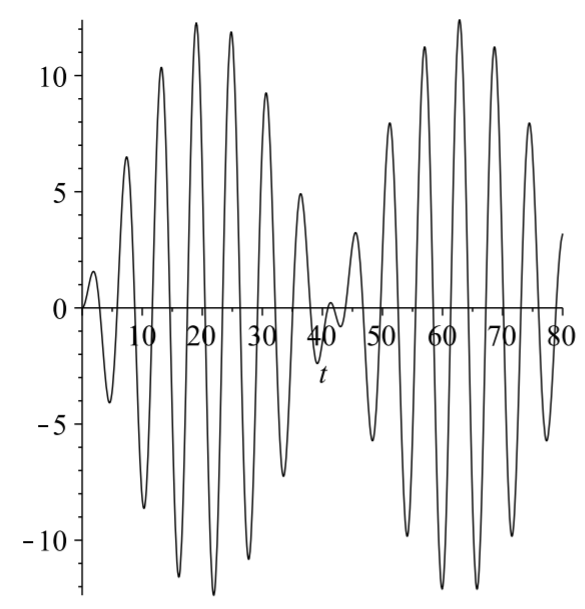

Special cases of these solutions provide interesting physics, which can be explored by the reader in the homework. In the case that \(\omega=\omega_{0}\), we see that the solution tends to grow as \(t\) gets large. This is what is called a resonance. Essentially, one is driving the system at its natural frequency. As the system is moving to the left, one pushes it to the left. If it is moving to the right, one is adding energy in that direction. This forces the amplitude of oscillation to continue to grow until the system breaks. An example of such an oscillation is shown in Figure \(\PageIndex{2}\).

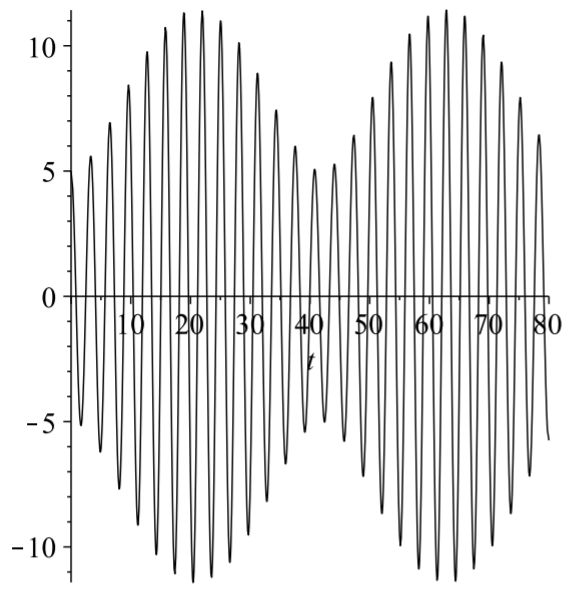

In the case that \(\omega \neq \omega_{0}\), one can rewrite the solution in a simple form. Let's choose the constants \( c_{1}=-F_{0} /\left(m\left(\omega_{0}^{2}-\omega^{2}\right)\right), c_{2}=0\), which correspond to the initial conditions \(x(0)=0\), \(\dot{x}(0)=0\). Then one has (see Problem ??) \[x(t)=\frac{2 F_{0}}{m\left(\omega_{0}^{2}-\omega^{2}\right)} \sin \frac{\left(\omega_{0}-\omega\right) t}{2} \sin \frac{\left(\omega_{0}+\omega\right) t}{2} .\label{eq:13}\] For values of \(\omega\) near \(\omega_{0}\), one finds the solution consists of a rapid oscillation, due to the \(\sin \frac{\left(\omega_{0}+\omega\right) t}{2}\) factor, with a slowly varying amplitude, \(\frac{2 F_{0}}{m\left(\omega_{0}^{2}-\omega^{2}\right)} \sin \frac{\left(\omega_{0}-\omega\right) t}{2}\). The reader can investigate this solution.

This slow variation is called a beat and the beat frequency is given by \(f=\frac{\left|\omega_{0}-\omega\right|}{4 \pi}\). In Figure \(\PageIndex{3}\) we see the high frequency oscillations are contained by the lower beat frequency, \(f=\frac{0.15}{4 \pi} \mathrm{~Hz}\). This corresponds to a period of \(T=1 / f \approx 83.8 \mathrm{~s}\), which looks about right from the figure.
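The arithmetic behind this period can be sketched as follows, using the convention stated above that the beat frequency is that of the envelope factor \(\sin \frac{\left(\omega_{0}-\omega\right) t}{2}\). The function name `beat_frequency` is an assumption for this sketch, and the values \(\omega_{0}=1\), \(\omega=1.15\) are those of the example that follows.

```python
import math

def beat_frequency(omega0, omega):
    """Frequency of the envelope sin((omega0 - omega) t / 2), in cycles per unit time."""
    return abs(omega0 - omega) / (4 * math.pi)

f = beat_frequency(1.0, 1.15)
T = 1 / f            # period of the envelope, 4*pi/0.15
print(round(T, 1))   # 83.8
```

Since the magnitude of the envelope repeats every half period, the swelling and fading seen in a plot recurs about every \(T / 2\).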

Solve \(\ddot{x}+x=2 \cos \omega t, x(0)=0, \dot{x}(0)=0\), for \(\omega=1,1.15\).

Solution

For each case, we need the solution of the homogeneous problem, \[x_{h}(t)=c_{1} \cos t+c_{2} \sin t .\nonumber \] The particular solution depends on the value of \(\omega\).

For \(\omega=1\), the driving term, \(2 \cos \omega t\), is a solution of the homogeneous problem. Thus, we assume \[x_{p}(t)=A t \cos t+B t \sin t .\nonumber \] Inserting this into the differential equation, we find \(A=0\) and \(B=1\). So, the general solution is \[x(t)=c_{1} \cos t+c_{2} \sin t+t \sin t .\nonumber \] Imposing the initial conditions, we find \[x(t)=t \sin t .\nonumber \] This solution is shown in Figure \(\PageIndex{4}\).

For \(\omega=1.15\), the driving term, \(2 \cos 1.15 t\), is not a solution of the homogeneous problem. Thus, we assume \[x_{p}(t)=A \cos 1.15 t+B \sin 1.15 t .\nonumber \] Inserting this into the differential equation, we find \(A=-\frac{800}{129}\) and \(B=0\). So, the general solution is \[x(t)=c_{1} \cos t+c_{2} \sin t-\frac{800}{129} \cos 1.15 t .\nonumber \] Imposing the initial conditions, we find \[x(t)=\frac{800}{129}(\cos t-\cos 1.15 t) .\nonumber \]

This solution is shown in Figure \(\PageIndex{5}\). The beat frequency in this case is the same as with Figure \(\PageIndex{3}\).
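Both closed-form answers can be cross-checked by integrating the initial value problem numerically. The sketch below uses the classical fourth order Runge-Kutta scheme, a method chosen here for illustration and not one used in the text; the function name `rk4_forced`, the end time, and the step count are likewise assumptions.

```python
import math

def rk4_forced(omega, t_end, n_steps, omega0=1.0, amp=2.0):
    """Integrate x'' + omega0^2 x = amp*cos(omega t) with x(0) = x'(0) = 0
    using classical RK4 and return x(t_end)."""
    def rhs(t, x, v):
        # First order system: x' = v, v' = amp*cos(omega t) - omega0^2 x
        return v, amp * math.cos(omega * t) - omega0**2 * x

    h = t_end / n_steps
    t, x, v = 0.0, 0.0, 0.0
    for _ in range(n_steps):
        k1x, k1v = rhs(t, x, v)
        k2x, k2v = rhs(t + h / 2, x + h / 2 * k1x, v + h / 2 * k1v)
        k3x, k3v = rhs(t + h / 2, x + h / 2 * k2x, v + h / 2 * k2v)
        k4x, k4v = rhs(t + h, x + h * k3x, v + h * k3v)
        x += h / 6 * (k1x + 2 * k2x + 2 * k3x + k4x)
        v += h / 6 * (k1v + 2 * k2v + 2 * k3v + k4v)
        t += h
    return x

t_end = 20.0
resonant = rk4_forced(1.0, t_end, 20000)
exact_resonant = t_end * math.sin(t_end)
beating = rk4_forced(1.15, t_end, 20000)
exact_beating = 800 / 129 * (math.cos(t_end) - math.cos(1.15 * t_end))
print(abs(resonant - exact_resonant), abs(beating - exact_beating))
```

Both differences should be very small, which supports the values of \(A\) and \(B\) found in each case.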

Method of Variation of Parameters

A more systematic way to find particular solutions is through the use of the Method of Variation of Parameters. The derivation is a little detailed and the solution is sometimes messy, but the application of the method is straightforward if you can do the required integrals. We will first derive the needed equations and then do some examples.

We begin with the nonhomogeneous equation. Let’s assume it is of the standard form \[a(x) y^{\prime \prime}(x)+b(x) y^{\prime}(x)+c(x) y(x)=f(x) .\label{eq:14}\] We know that the solution of the homogeneous equation can be written in terms of two linearly independent solutions, which we will call \(y_{1}(x)\) and \(y_{2}(x)\) : \[y_{h}(x)=c_{1} y_{1}(x)+c_{2} y_{2}(x) .\nonumber \]

If we replace the constants with functions, then we no longer have a solution to the homogeneous equation. Is it possible that we could stumble across the right functions with which to replace the constants and somehow end up with \(f(x)\) when inserted into the left side of the differential equation? It turns out that we can.

So, let’s assume that the constants are replaced with two unknown functions, which we will call \(c_1(x)\) and \(c_2(x)\). This change of the parameters is where the name of the method derives. Thus, we are assuming that a particular solution takes the form \[y_{p}(x)=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x) .\label{eq:15}\] If this is to be a solution, then insertion into the differential equation should make the equation hold. To do this we will first need to compute some derivatives.

The first derivative is given by \[y_{p}^{\prime}(x)=c_{1}(x) y_{1}^{\prime}(x)+c_{2}(x) y_{2}^{\prime}(x)+c_{1}^{\prime}(x) y_{1}(x)+c_{2}^{\prime}(x) y_{2}(x) .\label{eq:16}\] Next we will need the second derivative. But, this will yield eight terms. So, we will first make a simplifying assumption. Let’s assume that the last two terms add to zero: \[c_{1}^{\prime}(x) y_{1}(x)+c_{2}^{\prime}(x) y_{2}(x)=0 .\label{eq:17}\] It turns out that we will get the same results in the end if we did not assume this. The important thing is that it works!

Under this assumption, the first derivative simplifies to \[y_{p}^{\prime}(x)=c_{1}(x) y_{1}^{\prime}(x)+c_{2}(x) y_{2}^{\prime}(x) .\label{eq:18}\] The second derivative now only has four terms: \[y_{p}^{\prime \prime}(x)=c_{1}(x) y_{1}^{\prime \prime}(x)+c_{2}(x) y_{2}^{\prime \prime}(x)+c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x) .\label{eq:19}\]

Now that we have the derivatives, we can insert the guess into the differential equation. Thus, we have \[\begin{align} f(x)=& a(x)\left[c_{1}(x) y_{1}^{\prime \prime}(x)+c_{2}(x) y_{2}^{\prime \prime}(x)+c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x)\right]\nonumber \\ &+b(x)\left[c_{1}(x) y_{1}^{\prime}(x)+c_{2}(x) y_{2}^{\prime}(x)\right]\nonumber \\ &+c(x)\left[c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x)\right] .\label{eq:20} \end{align}\]

Regrouping the terms, we obtain \[\begin{align} f(x)=& c_{1}(x)\left[a(x) y_{1}^{\prime \prime}(x)+b(x) y_{1}^{\prime}(x)+c(x) y_{1}(x)\right]\nonumber \\ &+c_{2}(x)\left[a(x) y_{2}^{\prime \prime}(x)+b(x) y_{2}^{\prime}(x)+c(x) y_{2}(x)\right]\nonumber \\ &+a(x)\left[c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x)\right] .\label{eq:21} \end{align}\] Note that the first two rows vanish since \(y_{1}\) and \(y_{2}\) are solutions of the homogeneous problem. This leaves the equation \[f(x)=a(x)\left[c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x)\right],\nonumber \] which can be rearranged as \[c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x)=\frac{f(x)}{a(x)} .\label{eq:22}\]

In order to solve the differential equation \(L y=f\), we assume \[y_{p}(x)=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x),\nonumber \] for \(L y_{1,2}=0 .\) Then, one need only solve a simple system of equations \(\eqref{eq:23}\).

In summary, we have assumed a particular solution of the form \[y_{p}(x)=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x) .\nonumber \] This is only possible if the unknown functions \(c_{1}(x)\) and \(c_{2}(x)\) satisfy the system of equations \[\begin{align} &c_{1}^{\prime}(x) y_{1}(x)+c_{2}^{\prime}(x) y_{2}(x)=0\nonumber \\ &c_{1}^{\prime}(x) y_{1}^{\prime}(x)+c_{2}^{\prime}(x) y_{2}^{\prime}(x)=\frac{f(x)}{a(x)}\label{eq:23} \end{align}\]

It is standard to solve this system for the derivatives of the unknown functions and then present the integrated forms. However, one could just as easily start from this system and solve the system for each problem encountered.
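As a sketch of that standard step, one can solve system \(\eqref{eq:23}\) by Cramer's rule. Here \(W(x)=y_{1}(x) y_{2}^{\prime}(x)-y_{1}^{\prime}(x) y_{2}(x)\) denotes the Wronskian, a symbol introduced only for this sketch; it is nonzero because \(y_{1}\) and \(y_{2}\) are linearly independent solutions. The system gives \[c_{1}^{\prime}(x)=-\frac{f(x) y_{2}(x)}{a(x) W(x)}, \quad c_{2}^{\prime}(x)=\frac{f(x) y_{1}(x)}{a(x) W(x)} .\nonumber \] Integrating these and inserting the results into \(y_{p}(x)=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x)\), we obtain \[y_{p}(x)=y_{2}(x) \int^{x} \frac{f(\xi) y_{1}(\xi)}{a(\xi) W(\xi)} d \xi-y_{1}(x) \int^{x} \frac{f(\xi) y_{2}(\xi)}{a(\xi) W(\xi)} d \xi .\nonumber \] Different choices of the integration constants change \(y_{p}\) only by a solution of the homogeneous problem.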

Find the general solution of the nonhomogeneous problem: \(y^{\prime \prime}-y=e^{2 x}\).

Solution

The general solution to the homogeneous problem \(y_{h}^{\prime \prime}-y_{h}=0\) is \[y_{h}(x)=c_{1} e^{x}+c_{2} e^{-x} .\nonumber \]

In order to use the Method of Variation of Parameters, we seek a solution of the form \[y_{p}(x)=c_{1}(x) e^{x}+c_{2}(x) e^{-x} .\nonumber \] We find the unknown functions by solving the system in \(\eqref{eq:23}\), which in this case becomes \[\begin{align} &c_{1}^{\prime}(x) e^{x}+c_{2}^{\prime}(x) e^{-x}=0\nonumber \\ &c_{1}^{\prime}(x) e^{x}-c_{2}^{\prime}(x) e^{-x}=e^{2 x} .\label{eq:24} \end{align}\]

Adding these equations we find that \[2 c_{1}^{\prime} e^{x}=e^{2 x} \rightarrow c_{1}^{\prime}=\frac{1}{2} e^{x}\nonumber \] Solving for \(c_{1}(x)\) we find \[c_{1}(x)=\frac{1}{2} \int e^{x} d x=\frac{1}{2} e^{x} .\nonumber \]

Subtracting the equations in the system yields \[2 c_{2}^{\prime} e^{-x}=-e^{2 x} \rightarrow c_{2}^{\prime}=-\frac{1}{2} e^{3 x}\nonumber \] Thus, \[c_{2}(x)=-\frac{1}{2} \int e^{3 x} d x=-\frac{1}{6} e^{3 x} .\nonumber \]

The particular solution is found by inserting these results into \(y_{p}\) : \[\begin{align} y_{p}(x) &=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x)\nonumber \\ &=\left(\frac{1}{2} e^{x}\right) e^{x}+\left(-\frac{1}{6} e^{3 x}\right) e^{-x}\nonumber \\ &=\frac{1}{3} e^{2 x}\label{eq:25} \end{align}\] Thus, we have the general solution of the nonhomogeneous problem as \[y(x)=c_{1} e^{x}+c_{2} e^{-x}+\frac{1}{3} e^{2 x} .\nonumber \]
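The same steps can be carried out numerically when the integrals are not easy to do by hand. The sketch below solves the \(2 \times 2\) system for \(c_{1}^{\prime}\) and \(c_{2}^{\prime}\) pointwise by Cramer's rule and integrates from \(0\) to \(x\) with the composite Simpson rule. The function name `variation_of_parameters`, the lower limit \(0\), and the number of subintervals are assumptions of this sketch. Because the integration starts at \(0\), the result differs from \(\frac{1}{3} e^{2 x}\) by a solution of the homogeneous problem, namely \(-\frac{1}{2} e^{x}+\frac{1}{6} e^{-x}\).

```python
import math

def variation_of_parameters(y1, dy1, y2, dy2, f, a, x, n=2000):
    """Approximate y_p(x) = c1(x) y1(x) + c2(x) y2(x) with c1(0) = c2(0) = 0.

    c1' and c2' come from Cramer's rule applied to
        c1' y1  + c2' y2  = 0
        c1' y1' + c2' y2' = f / a,
    and are integrated from 0 to x by the composite Simpson rule (n even).
    """
    def c_primes(s):
        w = y1(s) * dy2(s) - y2(s) * dy1(s)   # Wronskian, assumed nonzero
        g = f(s) / a(s)
        return -y2(s) * g / w, y1(s) * g / w

    h = x / n
    c1 = c2 = 0.0
    for i in range(n + 1):
        weight = 1 if i in (0, n) else (4 if i % 2 else 2)
        d1, d2 = c_primes(i * h)
        c1 += weight * d1
        c2 += weight * d2
    c1 *= h / 3
    c2 *= h / 3
    return c1 * y1(x) + c2 * y2(x)

# y'' - y = e^{2x} with y1 = e^x, y2 = e^{-x}, a = 1
yp = variation_of_parameters(
    math.exp, math.exp,
    lambda s: math.exp(-s), lambda s: -math.exp(-s),
    lambda s: math.exp(2 * s), lambda s: 1.0,
    x=1.0,
)
expected = math.exp(2) / 3 - math.exp(1) / 2 + math.exp(-1) / 6
print(abs(yp - expected))
```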

Now consider the problem: \(y^{\prime \prime}+4 y=\sin x\).

Solution

The solution to the homogeneous problem is \[y_{h}(x)=c_{1} \cos 2 x+c_{2} \sin 2 x \text {. }\label{eq:26}\]

We now seek a particular solution of the form \[y_{p}(x)=c_{1}(x) \cos 2 x+c_{2}(x) \sin 2 x .\nonumber \] We let \(y_{1}(x)=\cos 2 x\) and \(y_{2}(x)=\sin 2 x, a(x)=1, f(x)=\sin x\) in system \(\eqref{eq:23}\): \[\begin{align} c_{1}^{\prime}(x) \cos 2 x+c_{2}^{\prime}(x) \sin 2 x &=0\nonumber \\ -2 c_{1}^{\prime}(x) \sin 2 x+2 c_{2}^{\prime}(x) \cos 2 x &=\sin x .\label{eq:27} \end{align}\]

Now, use your favorite method for solving a system of two equations and two unknowns. In this case, we can multiply the first equation by \(2 \sin 2 x\) and the second equation by \(\cos 2 x\). Adding the resulting equations will eliminate the \(c_{1}^{\prime}\) terms. Thus, we have \[c_{2}^{\prime}(x)=\frac{1}{2} \sin x \cos 2 x=\frac{1}{2}\left(2 \cos ^{2} x-1\right) \sin x .\nonumber \] Inserting this into the first equation of the system, we have \[c_{1}^{\prime}(x)=-c_{2}^{\prime}(x) \frac{\sin 2 x}{\cos 2 x}=-\frac{1}{2} \sin x \sin 2 x=-\sin ^{2} x \cos x \text {. }\nonumber \]

These can easily be solved: \[\begin{gathered} c_{2}(x)=\frac{1}{2} \int\left(2 \cos ^{2} x-1\right) \sin x d x=\frac{1}{2}\left(\cos x-\frac{2}{3} \cos ^{3} x\right) . \\ c_{1}(x)=-\int \sin ^{2} x \cos x d x=-\frac{1}{3} \sin ^{3} x . \end{gathered}\]

The final step in getting the particular solution is to insert these functions into \(y_{p}(x)\). This gives \[\begin{align} y_{p}(x) &=c_{1}(x) y_{1}(x)+c_{2}(x) y_{2}(x)\nonumber \\ &=\left(-\frac{1}{3} \sin ^{3} x\right) \cos 2 x+\left(\frac{1}{2} \cos x-\frac{1}{3} \cos ^{3} x\right) \sin 2 x\nonumber \\ &=\frac{1}{3} \sin x .\label{eq:28} \end{align}\]

So, the general solution is \[y(x)=c_{1} \cos 2 x+c_{2} \sin 2 x+\frac{1}{3} \sin x .\label{eq:29}\]
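The trigonometric simplification in the last step is easy to get wrong by hand, so a quick numerical spot check can be reassuring. The sketch below evaluates \(c_{1}(x) \cos 2 x+c_{2}(x) \sin 2 x\), with \(c_{1}\) and \(c_{2}\) as found above, and compares it with \(\frac{1}{3} \sin x\) on a grid of sample points. The function name and the grid are illustrative assumptions.

```python
import math

def yp_unsimplified(x):
    """c1(x) cos 2x + c2(x) sin 2x with the integrated c1 and c2."""
    c1 = -math.sin(x) ** 3 / 3
    c2 = 0.5 * (math.cos(x) - 2 / 3 * math.cos(x) ** 3)
    return c1 * math.cos(2 * x) + c2 * math.sin(2 * x)

samples = [0.1 * k for k in range(-30, 31)]
max_gap = max(abs(yp_unsimplified(x) - math.sin(x) / 3) for x in samples)
print(max_gap)  # at the level of floating point rounding
```

One can also confirm the result directly: for \(y_{p}=\frac{1}{3} \sin x\), we have \(y_{p}^{\prime \prime}+4 y_{p}=-\frac{1}{3} \sin x+\frac{4}{3} \sin x=\sin x\).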