2.3: Logical Equivalences


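Some logical statements are “the same.” For example, in the last section, we discussed the fact that a conditional and its contrapositive have the same logical content. Wouldn’t we be justified in writing something like the following?

\(A \implies B = \neg B \implies \neg A\)

Well, one pretty serious objection to doing that is that the equals sign (=) already has a job: it indicates that two numerical quantities are the same. What we want to say here is that two logical sentences have the same truth value in every possible case. We can express that either with the biconditional, ⟺, which here is true in every row of the truth table, or with a separate symbol reserved for logical equivalence, ≅.

Thus we can either write

\((A \implies B) \iff (\neg B \implies \neg A)\)

or

\(A \implies B \cong \neg B \implies \neg A.\)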

I like the latter, but use whichever form you like – no one will have any problem understanding either.

The formal definition of logical equivalence, which is what we’ve been describing, is this: two compound sentences are logically equivalent if in a truth table (that contains all possible combinations of the truth values of the predicate variables in its rows) the truth values of the two sentences are equal in every row.
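The definition translates directly into a brute-force check: list every row of the truth table and compare the two sentences row by row. Below is a minimal Python sketch, assuming each compound sentence is encoded as a Python function of its predicate variables; the helper names (`implies`, `equivalent`, `conditional`, and so on) are our own illustrative choices, not part of any library. It confirms the contrapositive equivalence from the previous section and shows that a conditional is not equivalent to its converse.

```python
from itertools import product
from typing import Callable

def implies(p: bool, q: bool) -> bool:
    """Material conditional: p => q is false only when p is true and q is false."""
    return (not p) or q

def equivalent(f: Callable[..., bool], g: Callable[..., bool], n: int) -> bool:
    """True if f and g have equal truth values in every row of the
    2**n-row truth table over n predicate variables."""
    return all(f(*row) == g(*row) for row in product([True, False], repeat=n))

def conditional(a: bool, b: bool) -> bool:
    return implies(a, b)            # A => B

def contrapositive(a: bool, b: bool) -> bool:
    return implies(not b, not a)    # not B => not A

def converse(a: bool, b: bool) -> bool:
    return implies(b, a)            # B => A

print(equivalent(conditional, contrapositive, 2))  # True
print(equivalent(conditional, converse, 2))        # False
```

Note that the table doubles in size with every additional predicate variable, so this check quickly becomes the kind of tedious work described next.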

Consider the two compound sentences

One could, in principle, verify all logical equivalences by filling out truth tables. Indeed, in the exercises for this section, we will ask you to develop a certain facility at this task. While this activity can be somewhat fun, and many of my students want the filling-out of truth tables to be a significant portion of their midterm exam, you will probably eventually come to find it somewhat tedious. A slightly more mature approach to logical equivalences is this: use a set of basic equivalences – which themselves may be verified via truth tables – as the basic rules or laws of logical equivalence, and develop a strategy for converting one sentence into another using these rules. This process will feel very familiar; it is like “doing” algebra, but the rules one is allowed to use are subtly different.
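As a small illustration of this algebraic style, here is one way to derive the contrapositive equivalence without a truth table. It assumes the equivalence between a conditional and a disjunction, \(A \implies B \cong \neg A \lor B\), together with the commutative and double-negation laws that appear later in this section; each step is justified by a single law.

\[
\begin{aligned}
A \implies B &\cong \neg A \lor B && \text{(conditional as a disjunction)}\\
&\cong B \lor \neg A && \text{(commutative law of disjunction)}\\
&\cong \neg(\neg B) \lor \neg A && \text{(double negation)}\\
&\cong \neg B \implies \neg A && \text{(conditional as a disjunction)}
\end{aligned}
\]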

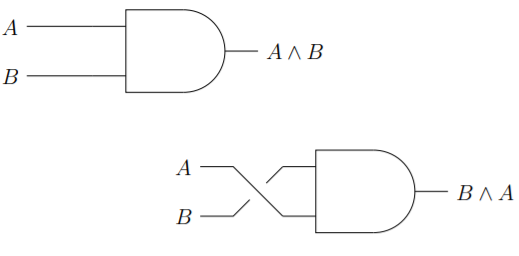

First, we have the commutative laws, one each for conjunction and disjunction. It’s worth noting that there isn’t a commutative law for implication.
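The claim about implication is easy to confirm by checking all four rows. A minimal Python sketch, assuming sentences are modeled as Python booleans; the helper names `implies` and `commutes` are hypothetical, chosen for this illustration.

```python
from itertools import product

ROWS = list(product([True, False], repeat=2))

def implies(p: bool, q: bool) -> bool:
    # Material conditional: p => q is false only when p is true and q is false.
    return (not p) or q

def commutes(op) -> bool:
    """True if op(a, b) and op(b, a) agree on all four rows of the truth table."""
    return all(op(a, b) == op(b, a) for a, b in ROWS)

print(commutes(lambda a, b: a and b))  # True:  conjunction commutes
print(commutes(lambda a, b: a or b))   # True:  disjunction commutes
print(commutes(implies))               # False: implication does not

# The rows where A => B and B => A disagree.
print([(a, b) for a, b in ROWS if implies(a, b) != implies(b, a)])
# [(True, False), (False, True)]
```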

The commutative property of conjunction says that \(A \land B \cong B \land A\).

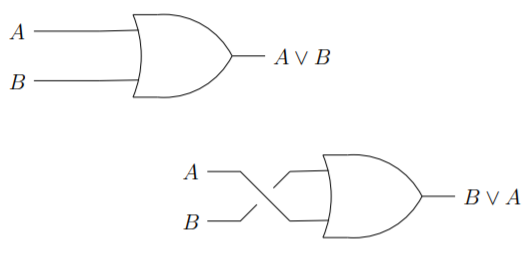

The commutative property of disjunction, \(A \lor B \cong B \lor A\), is equally transparent from the perspective of a circuit diagram.

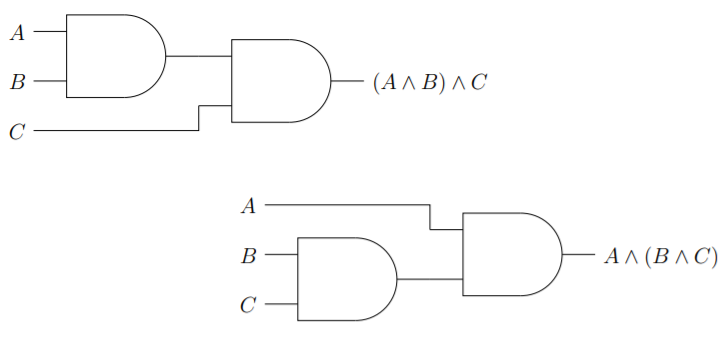

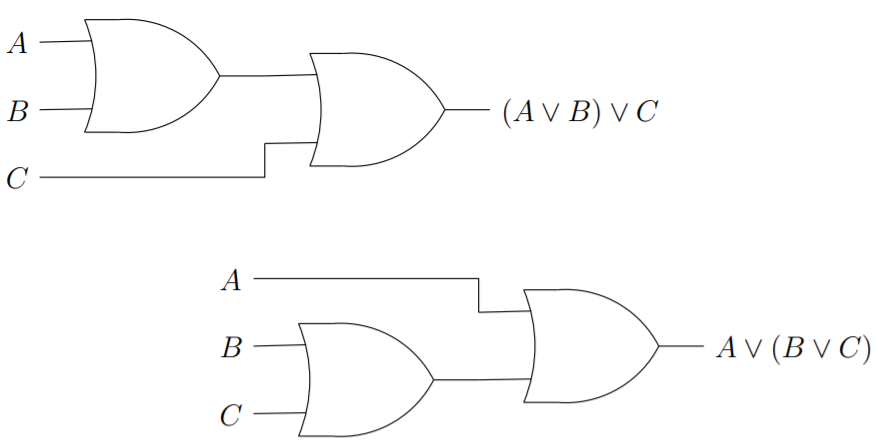

The associative laws also have to do with the order in which operations are done. One could think of the difference in the following terms: commutative properties involve spatial or physical order, and associative properties involve temporal order. The associative law of addition could be used to say we’ll get the same result if we add a and b first and then add c, or if we add a to the sum of b and c; that is, \((a + b) + c = a + (b + c)\).

The associative law of conjunction states that

\((A \land B) \land C \cong A \land (B \land C).\)

The associative law of disjunction states that

\((A \lor B) \lor C \cong A \lor (B \lor C).\)
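Both associative laws can be checked over the eight rows of a three-variable truth table. A minimal Python sketch, assuming sentences are modeled as Python booleans; the names `associative`, `AND`, `OR`, and `IMPLIES` are hypothetical. As an extra contrast (not a claim made in the text above), it also shows that implication fails to be associative.

```python
from itertools import product

def associative(op) -> bool:
    """True if (a op b) op c agrees with a op (b op c) on all eight rows."""
    return all(op(op(a, b), c) == op(a, op(b, c))
               for a, b, c in product([True, False], repeat=3))

AND = lambda p, q: p and q
OR = lambda p, q: p or q
IMPLIES = lambda p, q: (not p) or q   # material conditional

print(associative(AND))      # True
print(associative(OR))       # True
print(associative(IMPLIES))  # False: e.g. A false, C false
```

For implication, the row with A and C both false already breaks the law: \((A \implies B) \implies C\) is false there, while \(A \implies (B \implies C)\) is true.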

In a situation where both associativity and commutativity pertain, the symbols involved can appear in any order and with any reasonable parenthesization. In how many different ways can the sum

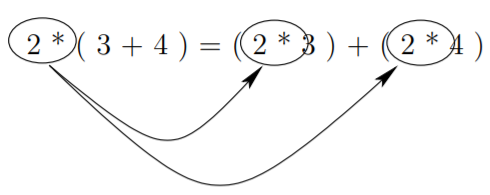

The next type of basic logical equivalences we’ll consider are the so-called distributive laws. Distributive laws involve the interaction of two operations. When we distribute multiplication over a sum, we effectively replace one instance of an operand and its associated operator with two instances, as illustrated below.

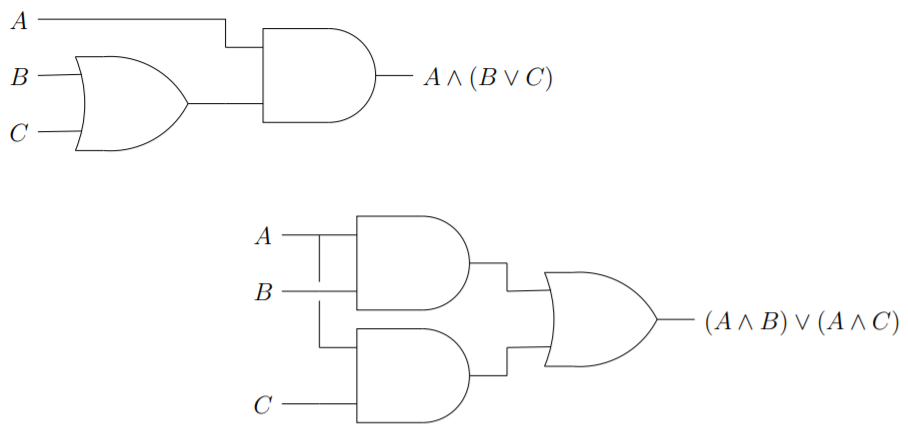

The logical operators ∧ and ∨ each distribute over the other. Thus we have the distributive law of conjunction over disjunction, which is expressed in the equivalence

\(A \land (B \lor C) \cong (A \land B) \lor (A \land C).\)

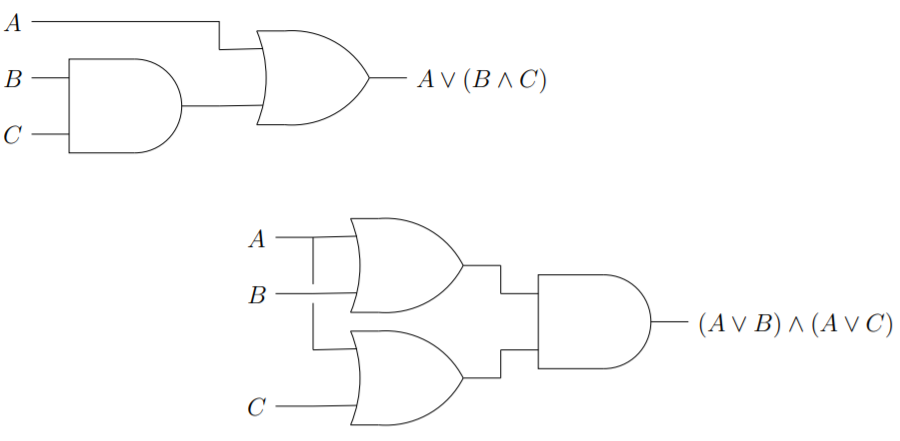

We also have the distributive law of disjunction over conjunction, which is given by the equivalence

\(A \lor (B \land C) \cong (A \lor B) \land (A \lor C).\)
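Here is where the rules become "subtly different" from algebra: the second distributive law has no counterpart in ordinary arithmetic. A minimal Python sketch, assuming sentences are modeled as Python booleans (the variable names are our own), checks both logical laws over all eight rows and shows the arithmetic analogue of the second one failing for one choice of numbers.

```python
from itertools import product

ROWS = list(product([True, False], repeat=3))

# Conjunction over disjunction: A ∧ (B ∨ C) ≅ (A ∧ B) ∨ (A ∧ C)
and_over_or = all((a and (b or c)) == ((a and b) or (a and c)) for a, b, c in ROWS)

# Disjunction over conjunction: A ∨ (B ∧ C) ≅ (A ∨ B) ∧ (A ∨ C)
or_over_and = all((a or (b and c)) == ((a or b) and (a or c)) for a, b, c in ROWS)

print(and_over_or, or_over_and)      # True True

# Arithmetic analogue of the second law: a + b*c versus (a + b)*(a + c).
print(1 + 2 * 3, (1 + 2) * (1 + 3))  # 7 12
```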

Traditionally, the laws we’ve just stated would be called left-distributive laws and we would also need to state that there are right-distributive laws that apply. Since, in the current setting, we have already said that the commutative law is valid, this isn’t really necessary.

State the right-hand versions of the distributive laws.

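The next set of laws we’ll consider comes from trying to figure out what the logical analogue of distributing a minus sign over a sum, \(-(x + y) = -x + (-y)\), should be. Simply negating each operand while keeping the operator, as in \(\neg(A \land B) \cong \neg A \land \neg B\), does not work.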

What actually works is a set of rules known as DeMorgan’s laws, which basically say that you distribute the negative sign but you must also change the operator. As logical equivalences, DeMorgan’s laws are

\(\neg(A \land B) \cong \neg A \lor \neg B\)

and

\(\neg(A \lor B) \cong \neg A \land \neg B.\)
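A minimal Python sketch, assuming sentences are modeled as Python booleans (the helper `agree` is a hypothetical name), verifies both of DeMorgan's laws and confirms that distributing the negation without changing the operator fails.

```python
from itertools import product

ROWS = list(product([True, False], repeat=2))

def agree(f, g) -> bool:
    """True if f and g have the same truth value on every row."""
    return all(f(a, b) == g(a, b) for a, b in ROWS)

demorgan_and = agree(lambda a, b: not (a and b), lambda a, b: (not a) or (not b))
demorgan_or = agree(lambda a, b: not (a or b), lambda a, b: (not a) and (not b))

# Distributing the negation but keeping the operator: not a valid law.
naive = agree(lambda a, b: not (a and b), lambda a, b: (not a) and (not b))

print(demorgan_and, demorgan_or, naive)  # True True False
```

The naive version already fails on the row where A is true and B is false.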

In ordinary arithmetic, there are two notions of “inverse.” The negative of a number is known as its additive inverse and the reciprocal of a number is its multiplicative inverse. These notions lead to a couple of equations,

\(x + (-x) = 0\)

and

\(x \cdot \frac{1}{x} = 1 \quad (x \neq 0).\)

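Boolean algebra only has one “inverse” concept, the denial of a predicate (i.e. logical negation), but the equations above have analogues, as do the symbols 0 and 1 that appear in them.

Writing c for a contradiction (a sentence that is false in every row of its truth table) and t for a tautology (a sentence that is true in every row), we give Boolean algebra something like a zero (c) and something like a one (t). The analogues of the inverse equations are the complementarity laws, \(A \land \neg A \cong c\) and \(A \lor \neg A \cong t\).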

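Now that we have the special logical sentences represented by t (a tautology) and c (a contradiction), we can state the identity laws, \(A \land t \cong A\) and \(A \lor c \cong A\), which are analogous to \(x \cdot 1 = x\) and \(x + 0 = x\).

The number 0 also satisfies \(x \cdot 0 = 0\) for every x. The corresponding logical facts are the domination laws, \(A \land c \cong c\) and \(A \lor t \cong t\).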

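In mathematics, the word idempotent is used to describe situations where the powers of a thing are equal to that thing. For example, because every power of 1 is 1 (\(1^n = 1\) for every positive integer n), the number 1 is idempotent. Conjunction and disjunction are idempotent in the analogous sense: \(A \land A \cong A\) and \(A \lor A \cong A\).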

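There are a couple of properties of the logical negation operator that should be stated, though they probably seem self-evident. If you form the denial of a denial, you come back to the original sentence, \(\neg\neg A \cong A\); also, the tautology and contradiction symbols, t and c, are denials of one another: \(\neg t \cong c\) and \(\neg c \cong t\).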
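The one-variable laws (complementarity, identity, domination, idempotence, and the negation facts) each need only a two-row truth table. A minimal Python sketch, assuming a tautology t is modeled as the constant `True` and a contradiction c as the constant `False`; the dictionary `laws` is a hypothetical name for this illustration.

```python
# Model a tautology t as the constant True and a contradiction c as False.
t, c = True, False

laws = {
    "complementarity": lambda a: (a and not a) == c and (a or not a) == t,
    "identity":        lambda a: (a and t) == a and (a or c) == a,
    "domination":      lambda a: (a and c) == c and (a or t) == t,
    "idempotence":     lambda a: (a and a) == a and (a or a) == a,
    "double negation": lambda a: (not (not a)) == a,
    "t and c denials": lambda a: (not t) == c and (not c) == t,  # ignores a
}

for name, law in laws.items():
    # One variable means a two-row truth table: A true, A false.
    print(name, all(law(a) for a in (True, False)))
```

Every line of output should report `True`.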

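Finally, we should mention a really strange property, called absorption, which states that the expressions \(A \land (A \lor B)\) and \(A \lor (A \land B)\) are both logically equivalent to A: the variable B is absorbed.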
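A minimal Python sketch, assuming sentences are modeled as Python booleans (variable names are our own), confirms both absorption laws and prints the rows to make visible that the value of \(A \land (A \lor B)\) simply tracks A.

```python
from itertools import product

ROWS = list(product([True, False], repeat=2))

# Absorption: A ∧ (A ∨ B) ≅ A  and  A ∨ (A ∧ B) ≅ A.
absorb_and = all((a and (a or b)) == a for a, b in ROWS)
absorb_or = all((a or (a and b)) == a for a, b in ROWS)

# B never changes the result: compare the A column with the last column.
for a, b in ROWS:
    print(f"A={a!s:5} B={b!s:5}  A∧(A∨B)={a and (a or b)}")

print(absorb_and, absorb_or)  # True True
```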

In Table 2.3.1, we have collected all of these basic logical equivalences in one place.

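Table 2.3.1: Basic Logical Equivalences.

| | Conjunctive Version | Disjunctive Version | Algebraic Analog |
|---|---|---|---|
| Commutative Laws | \(A \land B \cong B \land A\) | \(A \lor B \cong B \lor A\) | |
| Associative Laws | \((A \land B) \land C \cong A \land (B \land C)\) | \((A \lor B) \lor C \cong A \lor (B \lor C)\) | |
| Distributive Laws | \(A \land (B \lor C) \cong (A \land B) \lor (A \land C)\) | \(A \lor (B \land C) \cong (A \lor B) \land (A \lor C)\) | |
| DeMorgan's Laws | \(\neg(A \land B) \cong \neg A \lor \neg B\) | \(\neg(A \lor B) \cong \neg A \land \neg B\) | |
| Double Negation | \(\neg\neg A \cong A\) | | |
| Complementarity | \(A \land \neg A \cong c\) | \(A \lor \neg A \cong t\) | |
| Identity Laws | \(A \land t \cong A\) | \(A \lor c \cong A\) | |
| Domination | \(A \land c \cong c\) | \(A \lor t \cong t\) | |
| Idempotence | \(A \land A \cong A\) | \(A \lor A \cong A\) | |
| Absorption | \(A \land (A \lor B) \cong A\) | \(A \lor (A \land B) \cong A\) | |

Here t denotes a tautology and c a contradiction.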

Exercises:

There are

Use truth tables to verify or disprove the following logical equivalences.

- The absorption laws.

Draw pairs of related digital logic circuits that illustrate DeMorgan’s laws.

Find the negation of each of the following and simplify as much as possible.

Because a conditional sentence is equivalent to a certain disjunction, and because DeMorgan’s law tells us that the negation of a disjunction is a conjunction, it follows that the negation of a conditional is a conjunction. Find denials (the negation of a sentence is often called its “denial”) for each of the following conditionals.

- “If you smoke, you’ll get lung cancer.”

- “If a substance glitters, it is not necessarily gold.”

- “If there is smoke, there must also be fire.”

- “If a number is squared, the result is positive.”

- “If a matrix is square, it is invertible.”
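The reasoning in the paragraph above amounts to the chain \(\neg(A \implies B) \cong \neg(\neg A \lor B) \cong \neg\neg A \land \neg B \cong A \land \neg B\). A minimal Python sketch, assuming sentences are modeled as Python booleans (the names `implies`, `denial_ok`, and `tempting_mistake` are hypothetical), confirms the end result and also rules out a tempting wrong answer, the conditional \(A \implies \neg B\).

```python
from itertools import product

ROWS = list(product([True, False], repeat=2))

def implies(p: bool, q: bool) -> bool:
    # A => B is equivalent to the disjunction (not A) or B.
    return (not p) or q

# The denial of A => B is the conjunction A ∧ ¬B.
denial_ok = all((not implies(a, b)) == (a and not b) for a, b in ROWS)

# A common mistake: the denial is NOT the conditional A => ¬B.
tempting_mistake = all((not implies(a, b)) == implies(a, not b) for a, b in ROWS)

print(denial_ok, tempting_mistake)  # True False
```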

The so-called “ethic of reciprocity” is an idea that has come up in many of the world’s religions and philosophies. Below are statements of the ethic from several sources. Discuss their logical meanings and determine which (if any) are logically equivalent.

- “One should not behave towards others in a way which is disagreeable to oneself.” Mencius Vii.A.4 (Hinduism)

- “None of you [truly] believes until he wishes for his brother what he wishes for himself.” Number 13 of Imam “Al-Nawawi’s Forty Hadiths.” (Islam)

- “And as ye would that men should do to you, do ye also to them likewise.” Luke 6:31, King James Version. (Christianity)

- “What is hateful to you, do not to your fellow man. This is the law: all the rest is commentary.” Talmud, Shabbat 31a. (Judaism)

- “An it harm no one, do what thou wilt” (Wicca)

- “What you would avoid suffering yourself, seek not to impose on others.” (the Greek philosopher Epictetus – first century A.D.)

- “Do not do unto others as you expect they should do unto you. Their tastes may not be the same.” (the Irish playwright George Bernard Shaw – 1856–1950)

You encounter two natives of the land of knights and knaves. Fill in an explanation for each line of the proofs of their identities.

- Natasha says, “Boris is a knave.” Boris says, “Natasha and I are knights.”

Claim: Natasha is a knight, and Boris is a knave.

Proof: If Natasha is a knave, then Boris is a knight.

If Boris is a knight, then Natasha is a knight.

Therefore, if Natasha is a knave, then Natasha is a knight.

Hence Natasha is a knight.

Therefore, Boris is a knave.

Q.E.D.

- Bonaparte says, “I am a knight and Wellington is a knave.” Wellington says, “I would tell you that Bonaparte is a knight.”

Claim: Bonaparte is a knight and Wellington is a knave.

Proof: Either Wellington is a knave or Wellington is a knight.

If Wellington is a knight it follows that Bonaparte is a knight.

If Bonaparte is a knight then Wellington is a knave.

So, if Wellington is a knight then Wellington is a knave (which is impossible!)

Thus, Wellington is a knave.

Since Wellington is a knave, his statement “I would tell you that Bonaparte is a knight” is false.

So Wellington would in fact tell us that Bonaparte is a knave.

Since Wellington is a knave we conclude that Bonaparte is a knight.

Thus Bonaparte is a knight and Wellington is a knave (as claimed).

Q.E.D.
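As a sanity check on the two claims (not a substitute for the line-by-line explanations the exercise asks for), both puzzles can be brute-forced over the four possible assignments. A minimal Python sketch, assuming `True` encodes "knight" and `False` "knave", so a native's statement is consistent with their type exactly when its truth value equals their knighthood. It further assumes our own reading of "I would tell you that X": since a knight asserts only truths and a knave only falsehoods, Wellington would assert X exactly when X's truth value matches his type. The function names are hypothetical.

```python
from itertools import product

def natasha_boris():
    """Consistent (natasha, boris) assignments; True means knight."""
    return [(n, b) for n, b in product([True, False], repeat=2)
            if n == (not b)        # Natasha: "Boris is a knave."
            and b == (n and b)]    # Boris: "Natasha and I are knights."

def bonaparte_wellington():
    """Consistent (bonaparte, wellington) assignments; True means knight."""
    solutions = []
    for bon, wel in product([True, False], repeat=2):
        # Assumed reading: Wellington would say "Bonaparte is a knight"
        # exactly when that claim's truth value (bon) matches his type (wel).
        would_say_bon_knight = (wel == bon)
        if bon == (bon and not wel) and wel == would_say_bon_knight:
            solutions.append((bon, wel))
    return solutions

print(natasha_boris())         # [(True, False)]
print(bonaparte_wellington())  # [(True, False)]
```

In each case exactly one assignment survives, matching the claims: Natasha is a knight and Boris a knave; Bonaparte is a knight and Wellington a knave.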