5.1: The Principle of Mathematical Induction

- Page ID

- 19388

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\( \newcommand{\id}{\mathrm{id}}\) \( \newcommand{\Span}{\mathrm{span}}\)

( \newcommand{\kernel}{\mathrm{null}\,}\) \( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\) \( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\) \( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\id}{\mathrm{id}}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\kernel}{\mathrm{null}\,}\)

\( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\)

\( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\)

\( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\) \( \newcommand{\AA}{\unicode[.8,0]{x212B}}\)

\( \newcommand{\vectorA}[1]{\vec{#1}} % arrow\)

\( \newcommand{\vectorAt}[1]{\vec{\text{#1}}} % arrow\)

\( \newcommand{\vectorB}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vectorC}[1]{\textbf{#1}} \)

\( \newcommand{\vectorD}[1]{\overrightarrow{#1}} \)

\( \newcommand{\vectorDt}[1]{\overrightarrow{\text{#1}}} \)

\( \newcommand{\vectE}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash{\mathbf {#1}}}} \)

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\(\newcommand{\avec}{\mathbf a}\) \(\newcommand{\bvec}{\mathbf b}\) \(\newcommand{\cvec}{\mathbf c}\) \(\newcommand{\dvec}{\mathbf d}\) \(\newcommand{\dtil}{\widetilde{\mathbf d}}\) \(\newcommand{\evec}{\mathbf e}\) \(\newcommand{\fvec}{\mathbf f}\) \(\newcommand{\nvec}{\mathbf n}\) \(\newcommand{\pvec}{\mathbf p}\) \(\newcommand{\qvec}{\mathbf q}\) \(\newcommand{\svec}{\mathbf s}\) \(\newcommand{\tvec}{\mathbf t}\) \(\newcommand{\uvec}{\mathbf u}\) \(\newcommand{\vvec}{\mathbf v}\) \(\newcommand{\wvec}{\mathbf w}\) \(\newcommand{\xvec}{\mathbf x}\) \(\newcommand{\yvec}{\mathbf y}\) \(\newcommand{\zvec}{\mathbf z}\) \(\newcommand{\rvec}{\mathbf r}\) \(\newcommand{\mvec}{\mathbf m}\) \(\newcommand{\zerovec}{\mathbf 0}\) \(\newcommand{\onevec}{\mathbf 1}\) \(\newcommand{\real}{\mathbb R}\) \(\newcommand{\twovec}[2]{\left[\begin{array}{r}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\ctwovec}[2]{\left[\begin{array}{c}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\threevec}[3]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\cthreevec}[3]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\fourvec}[4]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\cfourvec}[4]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\fivevec}[5]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\cfivevec}[5]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\mattwo}[4]{\left[\begin{array}{rr}#1 \amp #2 \\ #3 \amp #4 \\ \end{array}\right]}\) \(\newcommand{\laspan}[1]{\text{Span}\{#1\}}\) \(\newcommand{\bcal}{\cal B}\) \(\newcommand{\ccal}{\cal C}\) \(\newcommand{\scal}{\cal S}\) \(\newcommand{\wcal}{\cal W}\) \(\newcommand{\ecal}{\cal E}\) \(\newcommand{\coords}[2]{\left\{#1\right\}_{#2}}\) \(\newcommand{\gray}[1]{\color{gray}{#1}}\) \(\newcommand{\lgray}[1]{\color{lightgray}{#1}}\) \(\newcommand{\rank}{\operatorname{rank}}\) \(\newcommand{\row}{\text{Row}}\) \(\newcommand{\col}{\text{Col}}\) \(\renewcommand{\row}{\text{Row}}\) \(\newcommand{\nul}{\text{Nul}}\) \(\newcommand{\var}{\text{Var}}\) \(\newcommand{\corr}{\text{corr}}\) \(\newcommand{\len}[1]{\left|#1\right|}\) \(\newcommand{\bbar}{\overline{\bvec}}\) \(\newcommand{\bhat}{\widehat{\bvec}}\) \(\newcommand{\bperp}{\bvec^\perp}\) \(\newcommand{\xhat}{\widehat{\xvec}}\) \(\newcommand{\vhat}{\widehat{\vvec}}\) \(\newcommand{\uhat}{\widehat{\uvec}}\) \(\newcommand{\what}{\widehat{\wvec}}\) \(\newcommand{\Sighat}{\widehat{\Sigma}}\) \(\newcommand{\lt}{<}\) \(\newcommand{\gt}{>}\) \(\newcommand{\amp}{&}\) \(\definecolor{fillinmathshade}{gray}{0.9}\)The Principle of Mathematical Induction (PMI) may be the least intuitive proof method available to us. Indeed, at first, PMI may feel somewhat like grabbing yourself by the seat of your pants and lifting yourself into the air. Despite the indisputable fact that proofs by PMI often feel like magic, we need to convince you of the validity of this proof technique. It is one of the most important tools in your mathematical kit!

The simplest argument in favor of the validity of PMI is simply that it is axiomatic. This may seem somewhat unsatisfying, but the axioms for the natural number system, known as the Peano axioms, include one that justifies PMI. The Peano axioms will not be treated thoroughly in this book, but here they are:

- There is a least element of \(\mathbb{N}\) that we denote by \(0\).

- Every natural number a has a successor denoted by \(s(a)\). (Intuitively, think of \(s(a) = a + 1\).)

- There is no natural number whose successor is \(0\). (In other words, \(-1\) isn’t in \(\mathbb{N}\).)

- Distinct natural numbers have distinct successors. (\(a \neq b \implies s(a) \neq s(b)\))

- If a subset of the natural numbers contains \(0\) and also has the property that whenever \(a ∈ S\) it follows that \(s(a) ∈ S\), then the subset \(S\) is actually equal to \(\mathbb{N}\).

The last axiom is the one that justifies PMI. Basically, if \(0\) is in a subset, and the subset has this property about successors1, then \(1\) must be in it. But if \(1\) is in it, then \(1\)’s successor (\(2\)) must be in it. And so on . . .

The subset ends up having every natural number in it.

Verify that the following symbolic formulation has the same content as the version of the \(5^{\text{th}}\) Peano axiom given above.

\(∀S ⊆ N (0 ∈ S) ∧ (∀a ∈ \mathbb{N}, a ∈ S \implies s(a) ∈ S) \implies S = \mathbb{N}\)

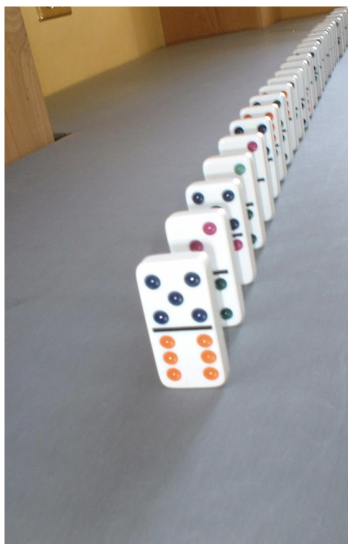

On August \(16^{\text{th}}\) \(2003\), Ma Lihua of Beijing, China earned her place in the record books by single-handedly setting up an arrangement of dominoes standing on end (actually, the setup took \(7\) weeks and was almost ruined by some cockroaches in the Singapore Expo Hall) and toppling them. After the first domino was tipped over it took about six minutes before \(303,621\) out of the \(303,628\) dominoes had fallen. (One has to wonder what kept those other \(7\) dominoes upright . . . )

This is the model one should keep in mind when thinking about PMI: domino toppling. In setting up a line of dominoes, what do we need to do in order to ensure that they will all fall when the toppling begins? Every domino must be placed so that it will hit and topple its successor. This is exactly analogous to \((a ∈ S \implies s(a) ∈ S)\). (Think of \(S\) having the membership criterion, \(x ∈ S =\) “\(x\) will have fallen when the toppling is over.”) The other thing that has to happen (barring the action of cockroaches) is for someone to knock over the first domino. This is analogous to \(0 ∈ S\).

Rather than continuing to talk about subsets of the naturals, it will be convenient to recast our discussion in terms of infinite families of logical statements. If we have a sequence of statements, (one for each natural number) \(P_0, P_1, P_2, P_3, . . .\) we can prove them all to be true using PMI. We have to do two things. First – and this is usually the easy part – we must show that P0 is true (i.e. the first domino will get knocked over). Second, we must show, for every possible value of \(k\), \(P_k \implies P_{k+1}\) (i.e. each domino will knock down its successor). These two parts of an inductive proof are known, respectively, as the basis and the inductive step.

An outline for a proof using PMI:

\[∀n ∈ N, P_n\]

- Proof

-

(By induction)

Basis: . . . (Here we must show that \(P_0\) is true.)

Inductive step: . . . (Here we must show that \(∀k, P_k \implies P_{k+1}\) is true.)

Q.E.D.

Soon we’ll do an actual example of an inductive proof, but first we have to say something REALLY IMPORTANT about such proofs. Pay attention! This is REALLY IMPORTANT! When doing the second part of an inductive proof (the inductive step), you are proving a UCS, and if you recall how that’s done, you start by assuming the antecedent is true. But the particular UCS we’ll be dealing with is \(∀k, P_k \implies P_{k+1}\). That means that in the course of proving \(∀n\), \(P_n\) we have to assume that \(P_k\) is true. Now this sounds very much like the error known as “circular reasoning,” especially as many authors don’t even use different letters (\(n\) versus \(k\) in our outline) to distinguish the two statements. (And, quite honestly, we only introduced the variable \(k\) to assuage a certain lingering guilt regarding circular reasoning.) The sentence \(∀n\), \(P_n\) is what we’re trying to prove, but the sentence we need to prove in order to do that is \(∀k, P_k \implies P_{k+1}\). This is subtly different – in proving that \(∀k\), \(P_k \implies P_{k+1}\) (which is a UCS!) we assume that \(P_k\) is true for some particular value of \(k\).

The sentence Pk is known as the inductive hypothesis. Think about it this way: If we were doing an entirely separate proof of \(∀n\), \(P_n \implies P_{n+1}\), it would certainly be fair to use the inductive hypothesis, and once that proof was done, it would be okay to quote that result in an inductive proof of \(∀n\), \(P_n\). Thus we can compartmentalize our way out of the difficulty!

Okay, so on to an example. In Section 4.1, we discovered a formula relating the sizes of a set \(A\) and its power set \(\mathcal{P}(A)\). If \(|A| = n\) then \(|\mathcal{P}(A)| = 2^n\). What we’ve got here is an infinite family of logical sentences, one for each value of \(n\) in the natural numbers,

\(|A| = 0 \implies |\mathcal{P}(A)| = 2^0, \\ |A| = 1 \implies |\mathcal{P}(A)| = 2^1, \\ |A| = 2 \implies |\mathcal{P}(A)| = 2^2, \\ |A| = 3 \implies |\mathcal{P}(A)| = 2^3,\)

et cetera.

This is exactly the sort of situation in which we use induction

For all finite sets \(A\),

\[|A| = n \implies |\mathcal{P}(A)| = 2^n.\]

- Proof

-

Let \(n = |A|\) and proceed by induction on \(n\).

Basis: Suppose \(A\) is a finite set and \(|A| = 0\), it follows that \(A = ∅\). The power set of \(∅\) is \(\{∅\}\) which is a set having \(1\) element. Note that \(2^0 = 1\).

Inductive step: Suppose that \(A\) is a finite set with \(|A| = k + 1\). Choose some particular element of \(A\), say \(a\), and note that we can divide the subsets of \(A\) (i.e. elements of \(\mathcal{P}(A)\)) into two categories, those that contain \(a\) and those that don’t.

Let \(S_1 = \{X ∈ \mathcal{P}(A) a ∈ X\}\) and let \(S_2 = \{X ∈ \mathcal{P}(A) a \notin X\}\). We have created two sets that contain all the elements of \(\mathcal{P}(A)\), and which are disjoint from one another. In symbolic form, \(S_1 ∪ S_2 = \mathcal{P}(A)\) and \(S_1 ∩ S_2 = ∅\). It follows that \(|\mathcal{P}(A)| = |S_1| + |S_2|\).

Notice that \(S_2\) is actually the power set of the \(k\)-element set \(A \setminus \{a\}\). By the inductive hypothesis, \(|S_2| = 2^k\). Also, notice that each set in \(S_1\) corresponds uniquely to a set in \(S_2\) if we just remove the element a from it. This shows that \(|S_1| = |S_2|\). Putting this all together we get that \(|P(A)| = 2^k + 2^k = 2(2^k) = 2^{k+1}\).

Q.E.D.

Here are a few pieces of advice about proofs by induction:

- Statements that can be proved inductively don’t always start out with \(P_0\). Sometimes \(P_1\) is the first statement in an infinite family. Sometimes it's \(P_5\). Don’t get hung up about something that could be handled by renumbering things.

- In your final write-up you only need to prove the initial case (whatever it may be) for the basis, but it is a good idea to try the first several cases while you are in the “draft” stage. This can provide insights into how to prove the inductive step, and it may also help you avoid a classic error in which the inductive approach fails essentially just because there is a gap between two of the earlier dominoes.2

- It is a good idea to write down somewhere just what it is that needs to be proved in the inductive step — just don’t make it look like you’re assuming what needs to be shown. For instance, in the proof above it might have been nice to start the inductive step with a comment along the following lines, “What we need to show is that under the assumption that any set of size \(k\) has a power set of size \(2^k\), it follows that a set of size \(k + 1\) will have a power set of size \(2^{k+1}\).”

We’ll close this section with a short discussion about nothing.

When we first introduced the natural numbers (\(\mathbb{N}\)) in Chapter 1 we decided to follow the convention that the smallest natural number is \(1\). You may have noticed that the Peano axioms mentioned in the beginning of this section treat \(0\) as the smallest natural number. So, from here on out we are going to switch things up and side with Dr. Peano. That is, from now on we will use the convention

\(\mathbb{N} = \{0, 1, 2, 3, . . .\}\)

Hmmm. . . Maybe that was a short discussion about something after all.

Exercises:

Consider the sequence of numbers that are \(1\) greater than a multiple of \(4\). (Such numbers are of the form \(4j + 1\).)

\(1, 5, 9, 13, 17, 21, 25, 29, . . .\)

The sum of the first several numbers in this sequence can be expressed as a polynomial.

\(\sum^{n}_{j=0} 4j + 1 = 2n^2 + 3n + 1\)

Complete the following table in order to provide evidence that the formula above is correct.

| \(n\) | \(\sum^{n}_{j=0} 4j + 1\) | \(2n^2 + 3n + 1\) |

|---|---|---|

| \(0\) | \(1\) | \(1\) |

| \(1\) | \(1 + 5\) | \(2 · 1^2 + 3 · 1 + 1 = 6\) |

| \(2\) | \(1 + 5 + 9 =\) | |

| \(3\) | ||

| \(4\) |

What is wrong with the following inductive proof of “all horses are the same color.”?

Let \(H\) be a set of \(n\) horses, all horses in \(H\) are the same color.

Proof: We proceed by induction on \(n\).

Basis: Suppose \(H\) is a set containing \(1\) horse. Clearly, this horse is the same color as itself.

Inductive step: Given a set of \(k + 1\) horses \(H\) we can construct two sets of \(k\) horses. Suppose \(H = \{h_1, h_2, h_3, . . . h_{k+1}\}\). Define \(H_a = \{h_1, h_2, h_3, . . . h_k\}\) (i.e. \(H_a\) contains just the first \(k\) horses) and \(H_b = \{h_2, h_3, h_4, . . . h_{k+1}\}\) (i.e. \(H_b\) contains the last \(k\) horses). By the inductive hypothesis both these sets contain horses that are “all the same color.” Also, all the horses from \(h_2\) to \(h_k\) are in both sets so both \(H_a\) and \(H_b\) contain only horses of this (same) color. Finally, we conclude that all the horses in \(H\) are the same color.

Q.E.D.

For each of the following theorems, write the statement that must be proved for the basis – then prove it, if you can!

The sum of the first \(n\) positive integers is \(\dfrac{(n^2 + n)}{2}\).

The sum of the first \(n\) (positive) odd numbers is \(n^2\).

If \(n\) coins are flipped, the probability that all of them are “heads” is \(\dfrac{1}{2}^n\).

Every \(2^n × 2^n\) chessboard – with one square removed – can be tiled perfectly3 by L-shaped trominoes. (A trominoe is like a domino but made up of \(3\) little squares. There are two kinds, straight  and L-shaped

and L-shaped  . This problem is only concerned with the L-shaped trominoes.)

. This problem is only concerned with the L-shaped trominoes.)

Suppose that the rules of the game for PMI were changed so that one did the following: ˆ

- Basis: Prove that \(P(0)\) is true.

- Inductive step: Prove that for all \(k\), \(P_k\) implies \(P_{k+2}\)

Explain why this would not constitute a valid proof that \(P_n\) holds for all natural numbers \(n\). How could we change the basis in this outline to obtain a valid proof?

If we wanted to prove statements that were indexed by the integers,

\(∀z ∈ \mathbb{Z}\), \(P_z\),

what changes should be made to PMI?