1.4: Rotation Matrices and Orthogonal Matrices


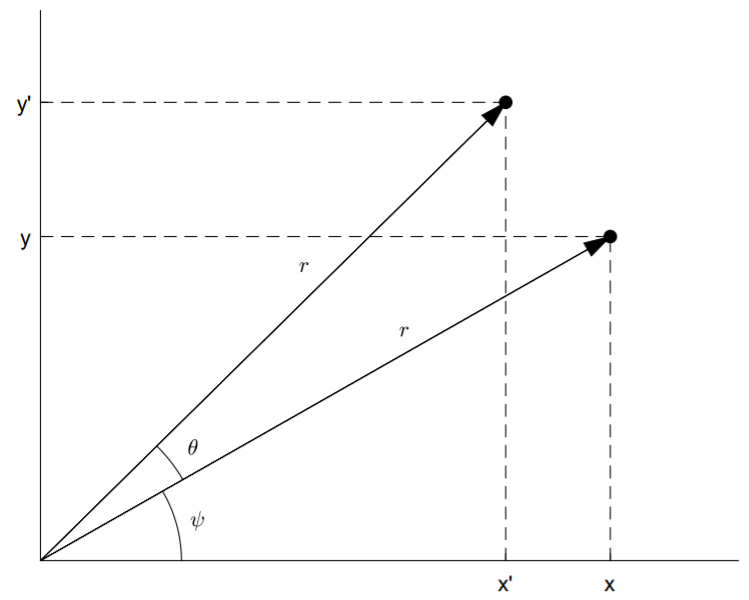

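Consider the two-by-two rotation matrix that rotates a vector counterclockwise through an angle \(θ\) in the \(x\)-\(y\) plane, shown above. Let the vector have length \(r\) and make an angle \(\psi\) with the \(x\)-axis, so that \(x=r\cos\psi\) and \(y=r\sin\psi\). Trigonometry and the addition formulas for cosine and sine result in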

\[\begin{aligned} x'&=r\cos(\theta+\psi) \\ &=r(\cos\theta\cos\psi -\sin\theta\sin\psi )\\&=x\cos\theta-y\sin\theta \\ y'&=r\sin(\theta+\psi)\\&=r(\sin\theta\cos\psi+\cos\theta\sin\psi) \\ &=x\sin\theta+y\cos\theta.\end{aligned} \nonumber \]

Writing the equations for \(x'\) and \(y'\) in matrix form, we have

\[\left(\begin{array}{c}x'\\y'\end{array}\right)=\left(\begin{array}{rr}\cos\theta&-\sin\theta \\ \sin\theta&\cos\theta\end{array}\right)\left(\begin{array}{c}x\\y\end{array}\right).\nonumber \]

The above two-by-two matrix is called a rotation matrix and is given by

\[\text{R}_\theta =\left(\begin{array}{rr}\cos\theta&-\sin\theta \\ \sin\theta&\cos\theta\end{array}\right).\nonumber \]
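As a minimal numerical sketch of this definition, the rotation matrix can be built and applied to a vector in plain Python. The function names `rotation_matrix` and `apply` are illustrative choices, not part of the text, and angles are assumed to be in radians.

```python
import math

def rotation_matrix(theta):
    """Return the 2-by-2 rotation matrix R_theta as a list of rows (theta in radians)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s],
            [s,  c]]

def apply(matrix, vector):
    """Multiply a 2-by-2 matrix by a column vector given as [x, y]."""
    x, y = vector
    return [matrix[0][0] * x + matrix[0][1] * y,
            matrix[1][0] * x + matrix[1][1] * y]

# Rotate the unit vector along the x-axis counterclockwise by 90 degrees.
x_new, y_new = apply(rotation_matrix(math.pi / 2), [1.0, 0.0])
print(x_new, y_new)
```

Up to floating-point rounding, the rotated vector should lie along the positive \(y\)-axis, as the matrix form of the equations for \(x'\) and \(y'\) predicts.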

Find the inverse of the rotation matrix \(\text{R}_\theta\).

Solution

The inverse of \(\text{R}_θ\) rotates a vector clockwise by \(θ\). To find \(\text{R}^{−1}_θ\), we need only change \(θ → −θ\):

\[\text{R}_\theta^{-1}=\text{R}_{-\theta}=\left(\begin{array}{rr}\cos\theta&\sin\theta \\ -\sin\theta&\cos\theta\end{array}\right).\nonumber \]

This result agrees with (1.4.4) since \(\det\text{ R}_\theta =1\).
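As a direct check, multiplying \(\text{R}_\theta\) by \(\text{R}_{-\theta}\) and using \(\cos^2\theta+\sin^2\theta=1\) gives the identity matrix:

\[\text{R}_\theta\text{R}_{-\theta}=\left(\begin{array}{rr}\cos\theta&-\sin\theta \\ \sin\theta&\cos\theta\end{array}\right)\left(\begin{array}{rr}\cos\theta&\sin\theta \\ -\sin\theta&\cos\theta\end{array}\right)=\left(\begin{array}{cc}\cos^2\theta+\sin^2\theta&\cos\theta\sin\theta-\sin\theta\cos\theta \\ \sin\theta\cos\theta-\cos\theta\sin\theta&\sin^2\theta+\cos^2\theta\end{array}\right)=\left(\begin{array}{cc}1&0\\0&1\end{array}\right)=\text{I}.\nonumber \]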

Notice that \(\text{R}^{−1}_θ = \text{R}^{\text{T}}_θ\). In general, a square \(n\)-by-\(n\) matrix \(\text{Q}\) with real entries that satisfies

\[\text{Q}^{-1}=\text{Q}^{\text{T}}\nonumber \]

is called an orthogonal matrix. Since \(\text{QQ}^{\text{T}} = \text{I}\) and \(\text{Q}^{\text{T}}\text{Q} = \text{I}\), and since \(\text{QQ}^{\text{T}}\) multiplies the rows of \(\text{Q}\) against themselves, and \(\text{Q}^{\text{T}}\text{Q}\) multiplies the columns of \(\text{Q}\) against themselves, both the rows of \(\text{Q}\) and the columns of \(\text{Q}\) must form an orthonormal set of vectors (normalized and mutually orthogonal). For example, the column vectors of \(\text{R}_\theta\), given by

\[\left(\begin{array}{c}\cos\theta \\ \sin\theta\end{array}\right),\quad\left(\begin{array}{r}-\sin\theta \\ \cos\theta\end{array}\right),\nonumber \]

are orthonormal.
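The defining condition \(\text{Q}^{\text{T}}\text{Q} = \text{I}\) can also be tested numerically. The sketch below is one possible check in plain Python; the helper names (`transpose`, `matmul`, `is_orthogonal`), the tolerance, and the shear matrix used as a counterexample are assumptions made for illustration.

```python
import math

def transpose(q):
    """Transpose of a square matrix stored as a list of rows."""
    n = len(q)
    return [[q[j][i] for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Product of two n-by-n matrices stored as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def is_orthogonal(q, tol=1e-12):
    """True if Q^T Q equals the identity to within tol (tol is an arbitrary choice)."""
    n = len(q)
    qtq = matmul(transpose(q), q)
    return all(abs(qtq[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))

theta = math.pi / 6
rotation = [[math.cos(theta), -math.sin(theta)],
            [math.sin(theta),  math.cos(theta)]]
shear = [[1.0, 1.0],
         [0.0, 1.0]]  # columns are neither orthogonal nor both normalized

print(is_orthogonal(rotation))  # True
print(is_orthogonal(shear))     # False
```

Because the entries of \(\text{Q}^{\text{T}}\text{Q}\) are the dot products of the columns of \(\text{Q}\) with one another, this single test checks normalization (diagonal entries) and mutual orthogonality (off-diagonal entries) at once.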

Rotating a vector about the origin does not change its length. More generally, orthogonal matrices preserve inner products, and therefore lengths. To prove this for the inner product of a vector with itself, that is, its squared length, let \(\text{Q}\) be an orthogonal matrix and \(\text{x}\) a column vector. Then

\[(\text{Qx})^{\text{T}}(\text{Qx})=\text{x}^{\text{T}}\text{Q}^{\text{T}}\text{Qx}=\text{x}^{\text{T}}\text{x}.\nonumber \]
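The same steps apply to the inner product of two different column vectors. Writing \(\text{y}\) for a second column vector (a symbol introduced here for illustration), one finds

\[(\text{Qx})^{\text{T}}(\text{Qy})=\text{x}^{\text{T}}\text{Q}^{\text{T}}\text{Qy}=\text{x}^{\text{T}}\text{y},\nonumber \]

so an orthogonal transformation preserves the angle between two vectors as well as their lengths.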

The complex matrix analogue of an orthogonal matrix is a unitary matrix \(\text{U}\). Here, the transpose is replaced by the conjugate transpose \(\text{U}^\dagger\), and the relationship is

\[\text{U}^{-1}=\text{U}^\dagger .\nonumber \]

Like Hermitian matrices, unitary matrices also play a fundamental role in quantum physics.
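The unitary condition \(\text{U}^{-1}=\text{U}^\dagger\) is equivalent to \(\text{U}^\dagger\text{U}=\text{I}\), which can be checked numerically in the same spirit as for orthogonal matrices. The following is a minimal sketch using Python's built-in complex numbers; the helper names and the particular two-by-two matrix `u` are assumptions chosen for illustration.

```python
import math

def conjugate_transpose(u):
    """Conjugate transpose (dagger) of a square matrix stored as a list of rows."""
    n = len(u)
    return [[u[j][i].conjugate() for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Product of two n-by-n matrices stored as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def is_unitary(u, tol=1e-12):
    """True if U^dagger U equals the identity to within tol (tol is an arbitrary choice)."""
    n = len(u)
    product = matmul(conjugate_transpose(u), u)
    return all(abs(product[i][j] - (1 if i == j else 0)) <= tol
               for i in range(n) for j in range(n))

s = 1 / math.sqrt(2)
u = [[s + 0j, s * 1j],
     [s * 1j, s + 0j]]  # illustrative complex matrix with orthonormal columns

print(is_unitary(u))  # True
```

For a matrix with real entries the conjugate transpose reduces to the ordinary transpose, so a real orthogonal matrix passes this test as well.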