18.9: Movie Scripts 5-6

- Page ID

- 2195

G.5: Linear Transformations

Hint for Review Question 5

The first thing we see in the problem is a definition of this new space \(P_{n}\). Elements of \(P_{n}\) are polynomials that look like \[a_{0} + a_{1} t +a_{2} t^{2} + \ldots + a_{n} t^{n}\] where the \(a_{i}\)'s are constants. So this means if \(L\) is a linear transformation from \(P_{2} \to P_{3}\) that the inputs of \(L\) are degree two polynomials which look like \[a_{0} + a_{1} t +a_{2} t^{2}\] and the output will have degree three and look like \[b_{0} + b_{1} t +b_{2} t^{2} + b_{3} t^{3}\]

We also know that \(L\) is a linear transformation, so what does that mean in this case? Well, by linearity we know that we can separate out the sum, and pull out the constants so we get \[ L (a_{0} + a_{1} t +a_{2} t^{2}) = a_{0}L(1) + a_{1}L( t) +a_{2} L( t^{2})\] Just this should be really helpful for the first two parts of the problem. The third part of the problem is asking us to think about this as a linear algebra problem, so lets think about how we could write this in the vector notation we use in the class. We could write \[a_{0} + a_{1} t +a_{2} t^{2} \text{ as } \begin{pmatrix}a_{0} \\ a_{1} \\ a_{2}\end{pmatrix} \] And think for a second about how you add polynomials, you match up terms of the same degree and add the constants component-wise. So it makes some sense to think about polynomials this way, since vector addition is also component-wise.

We could also write the output \[ b_{0} + b_{1} t +b_{2} t^{2} + b_{3} t^{3} \text{ as } \begin{pmatrix}b_{0} \\ b_{1} \\ b_{2} \\ b_{3}\end{pmatrix}\] Then lets look at the information given in the problem and think about it in terms of column vectors

- \(L(1) = 4\) but we can think of the input \(1= 1+ 0t + 0t^{2}\) and the output \(4 = 4+ 0t + 0t^{2} 0t^{3}\) and write this as \(L\begin{pmatrix}1\\0\\0\end{pmatrix} = \begin{pmatrix}4\\0\\0\\0\end{pmatrix}\)

- \(L(t) = t^{3}\) This can be written as \(L \begin{pmatrix}0\\1\\0\end{pmatrix} = \begin{pmatrix}0\\0\\0\\1\end{pmatrix}\)

- \(L(t^{2}) = t-1\) It might be a little trickier to figure out how to write \(t-1\) but if we write the polynomial out with the terms in order and with zeroes next to the terms that do not appear, we can see that \[ t-1 = -1 + t +0t^{2} + 0t^{3} \text{ corresponds to } \begin{pmatrix}-1 \\ 1\\ 0 \\ 0\end{pmatrix}\] So this can be written as \(L \begin{pmatrix}0\\0\\1\end{pmatrix} = \begin{pmatrix}-1\\1\\0\\0\end{pmatrix}\)

Now to think about how you would write the linear transformation \(L\) as a matrix, first think about what the dimensions of the matrix would be. Then look at the first two parts of this problem to help you figure out what the entries should be.

G.6: Matrices

Adjacency Matrix Example

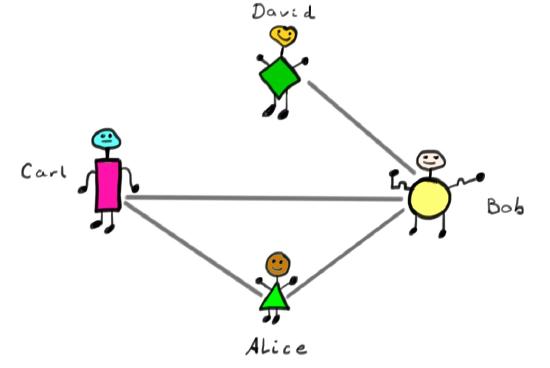

Lets think about a graph as a mini-facebook. In this tiny facebook there are only four people, Alice, Bob, Carl, and David.

Suppose we have the following relationships

- Alice and Bob are friends.

- Alice and Carl are friends.

- Carl and Bob are friends.

- David and Bob are friends.

Now draw a picture where each person is a dot, and then draw a line between the dots of people who are friends. This is an example of a graph if you think of the people as nodes, and the friendships as edges.

Now lets make a \(4 \times 4\) matrix, which is an adjacency matrix for the graph. Make a column and a row for each of the four people. It will look a lot like a table. When two people are friends put a 1 in the row of one and the column of the other. For example Alice and Carl are friends so we can label the table below.

\[

\begin{array}{c|cccc}

& A & B & C & D \\ \hline A & & & 1 & \\

B & & & & \\

C & 1 & & & \\

D & & & & \\

\end{array}

\]

We can continue to label the entries for each friendship. Here lets assume that people are friends with themselves, so the diagonal will be all ones.

\[

\begin{array}{c|cccc}

&A &B &C &D \\ \hline A &1 &1 &1 &0 \\

B &1 &1 &1 &1 \\

C &1 &1 &1 &0 \\

D &0 &1 &0 &1 \\

\end{array}

\]

Then take the entries of this table as a matrix

\[

\left(

\begin{array}{cccc}

1 &1 &1 &0 \\

1 &1 &1 &1 \\

1 &1 &1 &0 \\

0 &1 &0 &1 \\

\end{array} \right)

\]

Notice that this table is symmetric across the diagonal, the same way a multiplication table would be symmetric. This is because on facebook friendship is symmetric in the sense that you can't be friends with someone if they aren't friends with you too. This is an example of a symmetric matrix.

You could think about what you would have to do differently to draw a graph for something like twitter where you don't have to follow everyone who follows you. The adjacency matrix might not be symmetric then.

Do Matrices Commute?

This video shows you a funny property of matrices. Some matrix properties look just like those for numbers. For example numbers obey

$$

a(bc) = (ab)c\,

$$

and so do matrices:

$$

A(BC)=(AB)C.

$$

This says the order of bracketing does not matter and is called associativity. Now we ask ourselves whether the basic property of numbers

$$ab=ba\, ,$$

holds for matrices

$$AB\stackrel?=BA\, .$$

For this, firstly note that we need to work with square matrices even for both orderings to even make sense. Lets take a simple \(2\times 2\) example, let

$$

A=\begin{pmatrix}1&a\\0&1\end{pmatrix}\, ,\qquad

B=\begin{pmatrix}1&b\\0&1\end{pmatrix}\, ,\qquad

C=\begin{pmatrix}1&0\\a&1\end{pmatrix}\, .

$$

In fact, computing \(AB\) and \(BA\) we get the same result $$AB=BA=\begin{pmatrix}1&a+b\\0&1\end{pmatrix}\, ,$$

so this pair of matrices do commute. Lets try \(A\) and \(C\):

$$

AC=\begin{pmatrix}1+a^{2}&a\\a&1\end{pmatrix}\, ,\qquad\mbox{and}\qquad CA =\begin{pmatrix}1&a\\a&1+a^{2}\end{pmatrix}

$$

so $$AC\neq CA$$

and this pair of matrices does \(\textit{not}\) commute. Generally, matrices usually do not commute, and the problem of finding those that do is a very interesting one.

Matrix Exponential Example

This video shows you how to compute

\[\exp\begin{pmatrix}0&\theta\\-\theta & 0\end{pmatrix}\, .\]

For this we need to remember that the matrix exponential is defined by its power series

\[\exp M := I + M + \frac{1}{2!} M^{2} + \frac{1}{3!} M^{3}+\cdots\, .\]

Now lets call

\[\begin{pmatrix}0&\theta\\-\theta & 0\end{pmatrix}=i\theta\]

where the \(\textit{matrix}\)

\[i:=\begin{pmatrix}0&1\\-1 & 0\end{pmatrix}\]

and by matrix multiplication is seen to obey

\[i^{2} =-I\, ,\qquad i^{3}=-i\, , i^{4} = I\, .\]

Using these facts we compute by organizing terms according to whether they have an \(i\) or not:

\begin{eqnarray*}\exp i\theta &=& I + \frac{1}{2!}\, \theta^{2} (-I) + \frac{1}{4!} \,(+I) + \cdots \\&+&i \theta + \frac{1}{3!} \,\theta^{3} (-i) + \frac{1}{5!} \,i+\cdots\\&=& I ( 1-\frac{1}{2!}\, \theta^{2} + \frac{1}{4!}\,\theta^{4} + \cdots)\\&+&i( \theta- \frac{1}{3!}\, \theta^{3} + \frac{1}{5!}\,\theta^{5} +\cdots)\\&=&I\cos\theta + i \sin\theta \\&=&\begin{pmatrix}\cos\theta & \sin\theta \\ -\sin\theta & \cos\theta\end{pmatrix}\,.\end{eqnarray*}

Here we used the familiar Taylor series for the cosine and sine functions. A fun thing to think about is how the above matrix acts on vector in the plane.

Proof Explanation

In this video we will talk through the steps required to prove $$tr M N = tr N M\).$$ We want to show that for \(S=\{v_{1}, \ldots, v_{n} \}\) a basis for a vector space \(V\), then every vector \(w \in V\) can be written \(\textit{uniquely}\) as a linear combination of vectors in the basis \(S\):

\[w=c^{1}v_{1}+\cdots + c^{n}v_{n}.\]

We should remember that since \(S\) is a basis for \(V\), we know two things

- \(V = span S\)

- \(v_{1}, \ldots , v_{n}\) are linearly independent, which means that whenever we have \(a^{1}v_{1}+ \ldots + a^{n} v_{n} = 0\) this implies that \(a^{i} =0\) for all \(i=1, \ldots, n\).

This first fact makes it easy to say that there exist constants \(c^{i}\) such that \(w=c^{1}v_{1}+\cdots + c^{n}v_{n}\). What we don't yet know is that these \(c^{1}, \ldots c^{n}\) are unique.

In order to show that these are unique, we will suppose that they are not, and show that this causes a contradiction. So suppose there exists a second set of constants \(d^{i}\) such that

\[w=d^{1}v_{1}+\cdots + d^{n}v_{n}\, .\]

For this to be a contradiction we need to have \(c^{i} \neq d^{i}\) for some \(i\). Then look what happens when we take the difference of these two versions of \(w\):

\begin{eqnarray*}0_{V}&=&w-w\\&=&(c^{1}v_{1}+\cdots + c^{n}v_{n})-(d^{1}v_{1}+\cdots + d^{n}v_{n} )\\&=&(c^{1}-d^{1})v_{1}+\cdots + (c^{n}-d^{n})v_{n}. \\\end{eqnarray*}

Since the \(v_{i}\)'s are linearly independent this implies that \(c^{i} - d^{i} = 0\) for all \(i\), this means that we cannot have \(c^{i} \neq d^{i}\), which is a contradiction.

Hint for Review Question 4

This problem just amounts to remembering that the dot product of \(x=(x_{1},x_{2},\ldots,x_{n})\) and \(y=(y_{1},y_{2},\ldots,y_{n})\) is

$$

x_{1}y_{1}+ x_{2}y_{2}+\cdots + x_{n} y_{n}\, .

$$

Then try multiplying the above row vector times \(y^{T}\) and compare.

Hint for Review Problem 5

The majority of the problem comes down to showing that matrices are right distributive. Let \(M_{k}\) is all \(n \times k\) matrices for any \(n\), and define the map \(f_{R} \colon M_{k} \rightarrow M_{m}\) by \(f_{R}(M) = MR\) where \(R\) is some \(k \times m\) matrix. It should be clear that \(f_{R}(\alpha \cdot M) = (\alpha M)R = \alpha (MR) = \alpha f_{R}(M)\) for any scalar \(\alpha\). Now all that needs to be proved is that

\[

f_{R}(M + N) = (M + N)R = MR + NR = f_{R}(M) + f_{R}(N),

\]

and you can show this by looking at each entry.

We can actually generalize the concept of this problem. Let \(V\) be some vector space and \(\mathbb{M}\) be some collection of matrices, and we say that \(\mathbb{M}\) is a \(\textit{left-action}\) on \(V\) if

\[

(M \cdot N) \circ v = M \circ (N \circ v)

\]

for all \(M, N \in \mathbb{N}\) and \(v \in V\) where \(\cdot\) denoted multiplication in \(\mathbb{M}\) (i.e. standard matrix multiplication) and \(\circ\) denotes the matrix is a linear map on a vector (i.e. \(M(v)\)). There is a corresponding notion of a right action where

\[

v \circ (M \cdot N) = (v \circ M) \circ N

\]

where we treat \(v \circ M\) as \(M(v)\) as before, and note the order in which the matrices are applied. People will often omit the left or right because they are essentially the same, and just say that \(\mathbb{M}\) acts on \(V\).

Hint for Review Question 8

This is a hint for computing exponents of matrices. So what is \(e^{A}\) if \(A\) is a matrix? We remember that the Taylor series for

\[

e^{x} = \sum^{\infty}_{n=0} \frac{x^{n}}{n!}.

\]

So as matrices we can think about

\[

e^{A} = \sum^{\infty}_{n=0} \frac{A^{n}}{n!}.

\]

This means we are going to have an idea of what \(A^{n}\) looks like for any \(n\). Lets look at the example of one of the matrices in the problem. Let

\[

A =

\left(

\begin{array}{cc}

1 & \lambda\\

0 & 1\\

\end{array}

\right).

\]

Lets compute \(A^{n}\) for the first few \(n\).

\begin{align*}

A^{0} & =

\left(

\begin{array}{cc}

1 & 0\\

0 & 1\\

\end{array}

\right)

\\ A^{1} & =

\left(

\begin{array}{cc}

1 & \lambda \\

0 & 1\\

\end{array}

\right)

\\ A^{2} & = A\cdot A =

\left(

\begin{array}{cc}

1 & 2 \lambda \\

0 & 1\\

\end{array}

\right)

\\ A^{3} & = A^{2}\cdot A =

\left(

\begin{array}{cc}

1 & 3 \lambda \\

0 & 1\\

\end{array}

\right).

\end{align*}

There is a pattern here which is that

\[ A^{n} =

\left(

\begin{array}{cc}

1 & n \lambda \\

0 & 1\\

\end{array}

\right),

\]

then we can think about the first few terms of the sequence

\[

e^{A} = \sum^{\infty}_{n=0} \frac{A^{n}}{n!} = A^{0} + A + \frac{1}{2!ß} A^{2} + \frac{1}{3!} A^{3} + \ldots.

\]

Looking at the entries when we add this we get that the upper left-most entry looks like this:

\[

1 + 1 + \frac{1}{2} + \frac{1}{3!} + \ldots = \sum^{\infty}_{n=0} \frac{1}{n!} = e^{1}.

\]

Continue this process with each of the entries using what you know about Taylor series expansions to find the sum of each entry.

Hint for Review Problem 3

First note that (b) implies (a) is the easy direction: just think about what it means for \(M\) to be non-singular and for a linear function to be well-defined. Therefore we assume that \(M\) is singular which implies that there exists a non-zero vector \(X_{0}\) such that \(MX_{0} = 0\). Now assume there exists some vector \(X_{V}\) such that \(M X_{V} = V\), and look at what happens to \(X_{V} + c \cdot X_{0}\) for any \(c\) in your field. Lastly don't forget to address what happens if \(X_{V}\) does not exist.

Hint for Review Problem 4

In the text, only inverses for square matrices were discussed, but there is a notion of left and right inverses for matrices that are not square. It helps to look at an example with bits to see why. To start with we look at vector spaces

$$\mathbb{Z}_{2}^{3}=\{(x,y,z)|x,y,z=0,1\}\qquad \mbox{ and } \qquad \mathbb{Z}_{2}^{2}=\{(x,y)|x,y=0,1\}\, .$$

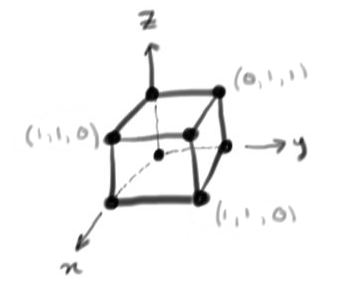

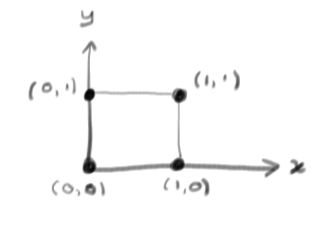

These have 8 and 4 vectors, respectively, that can be depicted as corners of a cube or square:

$$\mathbb{Z}_{2}^{3}$$

or $$\mathbb{Z}_{2}^{2}$$

Now lets consider a linear transformation

$$

L:\mathbb{Z}_{2}^{3}\longrightarrow\mathbb{Z}_{2}^{2}\, .

$$

This must be represented by a matrix, and lets take the example

$$

L\begin{pmatrix}x\\y\\z\end{pmatrix}=\begin{pmatrix}0 & 1 & 1\\1 & 1 &0\end{pmatrix}\begin{pmatrix}x\\y\\z\end{pmatrix}:=A X\, .

$$

Since we have bits, we can work out what $L$ does to every vector, this is listed below

$$

\begin{array}{ccc}

(0,0,0)&\stackrel{L}\mapsto&(0,0)\\

(0,0,1)&\stackrel{L}\mapsto&(1,0)\\

(1,1,0)&\stackrel{L}\mapsto&(1,0)\\

(1,0,0)&\stackrel{L}\mapsto&(0,1)\\

(0,1,1)&\stackrel{L}\mapsto&(0,1)\\

(0,1,0)&\stackrel{L}\mapsto&(1,1)\\

(1,0,1)&\stackrel{L}\mapsto&(1,1)\\

(1,1,1)&\stackrel{L}\mapsto&(1,1)

\end{array}

$$

Now lets think about left and right inverses. A left inverse \(B\) to the matrix \(A\)

would obey

$$

BA=I

$$

and since the identity matrix is square, \(B\) must be \(2\times3\). It would have to undo the action of \(A\) and return vectors in \(\mathbb{Z}_{2}^{3}\) to where they started from. But above, we see that different vectors in \(\mathbb{Z}_{2}^{3}\) are mapped to the same vector in \(\mathbb{Z}_{2}^{2}\) by the linear transformation \(L\) with matrix \(A\). So \(B\) cannot exist. However a right inverse \(C\) obeying

$$

AC=I

$$

can. It would be \(2\times2\). Its job is to take a vector in \(\mathbb{Z}_{2}^{2}\) back to one in \(\mathbb{Z}_{2}^{3}\) in a way that gets undone by the action of \(A\). This can be done, but not uniquely.

Using an \(LU\) Decomposition

Lets go through how to use a LU decomposition to speed up solving a system of equations. Suppose you want to solve for \(x\) in the equation \(Mx=b\)

\[

\left( \begin{array}{ccc}

1 & 0 & -5 \\

3 & -1 & -14 \\

1& 0& -3\\

\end{array} \right) x

= \begin{pmatrix}6 \\ 19\\ 4\end{pmatrix}

\]

where you are given the decomposition of \(M\) into the product of \(L\) and \(U\) which are lower and upper and lower triangular matrices respectively.

\[

M =

\left( \begin{array}{ccc}

1 & 0 & -5 \\

3 & -1 & -14 \\

1& 0& -3\\

\end{array} \right)

=

\left( \begin{array}{ccc}

1 & 0 & 0 \\

3 & 1 &0 \\

1& 0& 2\\

\end{array} \right)

\left( \begin{array}{ccc}

1 & 0 & -5 \\

0 & -1 &1 \\

0 & 0 & 1\\

\end{array} \right)

=LU

\]

First you should solve \(L(Ux) =b\) for \(Ux\). The augmented matrix you would use looks like this

\[

\left( \begin{array}{ccc|c}

1 & 0 & 0 & 6\\

3 & 1 & 0 &19\\

1 & 0 & 2 & 4\\

\end{array} \right)

\]

This is an easy augmented matrix to solve because it is upper triangular. If you were to write out the three equations using variables, you would find that the first equation has already been solved, and is ready to be plugged into the second equation. This backward substitution makes solving the system much faster. Try it and in a few steps you should be able to get

\[

\left( \begin{array}{ccc|c}

1& 0& 0 & 6 \\

0& 1& 0 & 1 \\

0& 0& 1 & -1\\

\end{array} \right)

\]

This tells us that \(Ux = \begin{pmatrix}6\\1\\-1\end{pmatrix}\). Now the second part of the problem is to solve for \(x\). The augmented matrix you get is

\[

\left( \begin{array}{ccc|c}

1 & 0 & -5 & 6 \\

0 & -1 &1 & 1 \\

0 & 0 & 1 & -1 \\

\end{array} \right)

\]

It should take only a few step to transform it into

\[

\left( \begin{array}{ccc|c}

1& 0& 0 & 1 \\

0& 1& 0 & -2 \\

0& 0& 1 & -1\\

\end{array} \right) \, ,

\]

which gives us the answer \(x= \begin{pmatrix}1\\ -2\\-1\end{pmatrix}\).

Another \(LU\) Decomposition Example

Here we will perform an \(LU\) decomposition on the matrix

\[

M = \begin{pmatrix}

1 & 7 & 2 \\

-3 & -21 & 4 \\

1 & 6 & 3

\end{pmatrix}

\]

following the procedure outlined in Section 7.7.2. So initially we have \(L_{1} = I_{3}\) and \(U_{1} = M\), and hence

\begin{align*}

L_{2} & = \begin{pmatrix}

1 & 0 & 0 \\

-3 & 1 & 0 \\

1 & 0 & 1

\end{pmatrix}

& U_{2} & = \begin{pmatrix}

1 & 7 & 2 \\

0 & 0 & 10 \\

0 & -1 & -1

\end{pmatrix}.

\end{align*}

However we now have a problem since \(0 \cdot c = 0\) for any value of \(c\) since we are working over a field, but we can quickly remedy this by swapping the second and third rows of \(U_{2}\) to get \(U_{2}^{\prime}\) and note that we just interchange the corresponding rows all columns left of and including the column we added values to in \(L_{2}\) to get \(L_{2}^{\prime}\). Yet this gives us a small problem as \(L_{2}^{\prime} U_{2}^{\prime} \neq M\); in fact it gives us the similar matrix \(M^{\prime}\) with the second and third rows swapped. In our original problem \(MX = V\), we also need to make the corresponding swap on our vector \(V\) to get a \(V^{\prime}\) since all of this amounts to changing the order of our two equations, and note that this clearly does not change the solution. Back to our example, we have

\begin{align*}

L_{2}^{\prime} & = \begin{pmatrix}

1 & 0 & 0 \\

1 & 1 & 0 \\

-3 & 0 & 1

\end{pmatrix}

& U_{2}^{\prime} & = \begin{pmatrix}

1 & 7 & 2 \\

0 & -1 & -1 \\

0 & 0 & 10

\end{pmatrix},

\end{align*}

and note that \(U_{2}^{\prime}\) is upper triangular. Finally you can easily see that

\[

L_{2}^{\prime} U_{2}^{\prime} = \begin{pmatrix}

1 & 7 & 2 \\

1 & 6 & 3 \\

-3 & -21 & 4

\end{pmatrix} = M^{\prime}

\]

which solves the problem of \(L_{2}^{\prime} U_{2}^{\prime} X = M^{\prime} X = V^{\prime}\). (We note that as augmented matrices \(( M^{\prime} | V^{\prime} ) \sim (M | V)\).)

Block \(LDU\) Explanation

This video explains how to do a block \(LDU\) decomposition. Firstly remember some key facts about block matrices: It is important that the blocks fit together properly. For example, if we have matrices

$$

\begin{array}{c|c} \mbox{matrix}&\mbox{shape}\\\hline

X&r\times r\\

Y&r\times t\\

Z&t\times r\\

W& t\times t

\end{array}

$$

we could fit these together as a \((r+t)\times(r+t)\) square block matrix

$$

M=\left(\begin{array}{c|c}X&Y\\\hline Z&W\end{array}\right)\, .

$$

Matrix multiplication works for blocks just as for matrix entries:

$$

M^{2}= \left(\begin{array}{c|c}X&Y\\\hline Z&W\end{array}\right)\left(\begin{array}{c|c}X&Y\\\hline Z&W\end{array}\right)

=\left(\begin{array}{c|c}X^{2} + YZ&XY+YW\\\hline ZX+WZ&ZY+W^{2}\end{array}\right)\, .

$$

Now lets specialize to the case where the square matrix \(X\) has an inverse. Then we can multiply out the following triple product of a lower triangular, a block diagonal and an upper triangular matrix:

$$

\left(\begin{array}{c|c}I&0\\\hline ZX^{-1}&I\end{array}\right)\left(\begin{array}{c|c}X&0\\\hline 0&W-ZX^{-1}Y\end{array}\right)

\left(\begin{array}{c|c}I&X^{-1}Y\\\hline 0&I\end{array}\right)$$ $$=

\left(\begin{array}{c|c}X&0\\\hline Z&W-ZX^{-1}Y\end{array}\right)

\left(\begin{array}{c|c}I&X^{-1}Y\\\hline 0&I\end{array}\right)

$$ $$=

\left(\begin{array}{c|c}X&Y\\\hline ZX^{-1}Y+Z&W-ZX^{-1}Y\end{array}\right)

$$

$$

=\left(\begin{array}{c|c}X&Y\\\hline Z&W\end{array}\right)=M\, .

$$

This shows that the \(LDU\) decomposition given in Section 7.7 is correct.

Contributor

David Cherney, Tom Denton, and Andrew Waldron (UC Davis)